FinOps for AI: From Cloud Chaos to Business Clarity

Cloud was complex. AI made it explosive. Enterprise AI spend grew 5.8× in two years. A $400M collective leak in unbudgeted agentic AI spend hit Fortune 500 companies in Q1 2026 alone. 73% of FinOps teams report AI costs exceeded original projections. This is the definitive guide to what FinOps for AI actually requires — why legacy cost tools cannot govern it, what business clarity looks like when it is working, and how DigiUsher's FinOps OS turns AI cost chaos into measurable cloud ROI.

Author: DigiUsher | Read time: 19 min

“An agent doesn’t know it’s bankrupting you; it just thinks it hasn’t found the answer yet. Without a governance intelligence layer to throttle these cycles, your ROI evaporates before the first dashboard even refreshes.” — Dr. Aris Thorne, Cloud Economist, Gartner Data & Analytics Summit, March 2026

Cloud was already complex. AI made it explosive.

The numbers from the first quarter of 2026 are not theoretical warnings. They are documented outcomes from enterprises that moved from AI experimentation into production agentic deployment without the governance infrastructure to match the speed:

- $400 million in collective unbudgeted cloud spend leaked from Fortune 500 companies through ungoverned agentic AI deployments in Q1 2026 alone

- 73% of FinOps teams report AI costs exceeded original budget projections — structural failure, not variance

- 98% of FinOps teams now manage AI spend, up from 63% in 2025 — the fastest capability expansion in the discipline’s history

- Only 14.2% of organisations are at “Run” FinOps maturity — while AI demands Run-level governance from day one of production deployment

Cloud chaos is not new. What is new is the speed at which AI creates it, the scale at which it compounds, and the inadequacy of the governance tools most enterprises are trying to apply to it.

This briefing explains why AI cost chaos is structurally different from cloud cost chaos, what business clarity looks like when FinOps for AI is working, and how DigiUsher’s FinOps Operating System turns AI cost chaos into measurable cloud ROI — for enterprise teams managing AI spend across AWS, Azure, GCP, Kubernetes, and Databricks.

The New Shape of Cloud Chaos: Why AI Changed Everything

Cloud cost management had a decade to mature. Cloud chaos in 2015 looked like untagged EC2 instances, forgotten snapshots, and dev environments running through the weekend. The problem was visible; the governance model was learnable; the billing was predictable enough for static budgets to function.

AI compressed that entire maturation arc into approximately two years — and the chaos it creates is categorically different from the cloud waste problem that FinOps originally solved.

In 2024, 31% of FinOps practitioners managed AI spend. In 2025, it was 63%. Now it is 98%. AI moved from emerging concern to daily scope faster than any category the FinOps Foundation has ever tracked.

The velocity is not the problem. The problem is what “manage” means in practice. Most teams can show that AI spend exists. Some can locate the account. A few can identify the services. None of that answers what the business will demand: attribution that ties AI spend to the specific features, customers, and workflows it served.

The governance gap between “AI spend exists” and “AI spend is attributed, governed, and connected to business value” is where enterprises are losing money — continuously, invisibly, at a rate that accelerates with every AI agent deployed and every agentic workflow scaled to production.

Why Legacy Cloud FinOps Tools Cannot Close This Gap

Traditional cloud cost management was engineered for a world where:

- Costs scaled linearly with provisioned resources

- Billing happened at the account or service level

- Forecasting worked from stable historical patterns

- Monthly review cycles were sufficient governance cadence

AI workloads break every assumption in this architecture:

Cloud Cost Management vs. AI Cost Management

| Dimension | Cloud (Legacy Model) | AI (Current Reality) |
|---|---|---|
| Cost scaling | Linear with compute | Non-linear with query complexity, model selection, chain depth |
| Billing unit | VM hours, GB stored | Tokens, requests, DBUs, GPU-hours |
| Attribution | Account → service → tag | Inference call → chain → feature → outcome |
| Forecast method | Historical + trend | Pattern + intent + agentic behaviour |
| Governance speed | Monthly billing review | Real-time enforcement |
| Explosion risk | Idle VMs | Recursive agent loops |
| Provider scope | 1–3 clouds | 5–10 AI providers |

IDC’s FutureScape 2026 calls this emerging reality the “AI infrastructure reckoning.” Organisations are realising that traditional cost management models are insufficient for a world where workloads self-scale and budgets can balloon overnight.

IDC warns that by 2027, G1000 organisations will face up to a 30% rise in underestimated AI infrastructure costs — not from reckless spending, but from under-forecasting and completely missing the expenses unique to AI-specific projects.

The governance infrastructure that solved cloud chaos in 2018 is not the infrastructure that governs AI chaos in 2026. The two problems share vocabulary but not structure.

Five Dimensions of AI Cloud Chaos

Understanding AI cloud chaos requires understanding where it originates. Five structural dimensions create the governance failures that legacy tools cannot address.

Dimension 1 — Billing Fragmentation Across the AI Stack

A single AI workflow may touch seven separately billed services in resolving one user query:

Single AI Workflow: The Full Billing Stack

| Service | Provider | Billing Unit |
|---|---|---|
| LLM inference | OpenAI / Anthropic | Tokens (input + output) |
| Embedding generation | Cohere / Vertex | Tokens |
| Vector database | Pinecone / Redis | Storage + query units |
| GPU cluster | AWS / Azure / GCP | GPU-hours |
| Output moderation | Azure AI Content Safety | API calls |
| Network egress | Cloud provider | GB transferred |
| Retry logic | Same providers | Duplicate billing |

Invoice visibility: each provider bills separately.

Attribution: zero without external normalisation.

Visibility into AI costs is hard because pricing differs across providers and services. One workflow might hit an inference API, a vector database, a tool API, and a GPU cluster for fine-tuning. Each one has its own pricing model, billing cycle, and unit of measure.

The practical consequence: finance teams reconciling AI spend across five to ten providers produce approximations from incompatible data formats, not attributions from a unified cost model. Engineering teams making AI architecture decisions do so without cost signal at the point of decision.
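A minimal sketch of the normalisation step, assuming each provider's usage export can be reduced to a quantity and a unit price: every line item becomes one provider-neutral record carrying a workflow ID, and cost is then summed per workflow across providers. The `CostRecord` shape, the raw field names, and the unit prices in the usage example are hypothetical, not any provider's actual export format.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CostRecord:
    """One billed line item in a provider-neutral shape (hypothetical schema)."""
    workflow_id: str
    provider: str
    service: str
    quantity: float
    unit: str
    cost_usd: float


def normalise(provider: str, service: str, raw: dict) -> CostRecord:
    """Translate a provider-specific usage row into a CostRecord.

    The raw field names are illustrative; real exports differ per provider.
    """
    if service == "llm_inference":
        quantity = raw["input_tokens"] + raw["output_tokens"]
        cost = (raw["input_tokens"] * raw["usd_per_m_input"]
                + raw["output_tokens"] * raw["usd_per_m_output"]) / 1_000_000
        unit = "tokens"
    elif service == "gpu_cluster":
        quantity = raw["gpu_hours"]
        cost = quantity * raw["usd_per_gpu_hour"]
        unit = "gpu_hours"
    elif service == "vector_db":
        quantity = raw["query_units"]
        cost = quantity * raw["usd_per_query_unit"]
        unit = "query_units"
    else:
        raise ValueError(f"no normalisation rule for {provider}/{service}")
    # Untagged usage is kept but made visible rather than silently dropped.
    workflow_id = raw.get("workflow_id", "unattributed")
    return CostRecord(workflow_id, provider, service, quantity, unit, cost)


def cost_per_workflow(records: list[CostRecord]) -> dict[str, float]:
    """Sum normalised cost across every provider a workflow touched."""
    totals: dict[str, float] = defaultdict(float)
    for record in records:
        totals[record.workflow_id] += record.cost_usd
    return dict(totals)
```

For one query that used 2,000 input and 500 output tokens (at an assumed $3/$15 per million), 0.01 GPU-hours (at an assumed $4 per GPU-hour), and 20 vector-database query units (at an assumed $0.0001 each), `cost_per_workflow` attributes about $0.0555 to that workflow. Line items without a workflow ID land in an `unattributed` bucket instead of disappearing.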

Dimension 2 — Non-Linear Cost Scaling That Defeats Standard Forecasting

Cloud infrastructure costs were predictable enough for annual IT budget cycles. AI costs are not.

A single prompt engineering decision, such as adding 500 tokens to the system prompt, adds 500 billed input tokens to every subsequent API call. At 10,000 daily calls, that is 5 million extra input tokens per day: a material monthly budget change generated by one engineering decision that no billing alert detected at the time it was made.
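To make the arithmetic concrete: 500 extra tokens × 10,000 calls × 30 days is 150 million additional input tokens per month. The sketch below converts that into dollars. The $2.50 per million input tokens in the example is an assumed illustrative price, not a quote from any provider, and the calculation ignores prompt-caching discounts that some providers offer.

```python
def monthly_prompt_growth_cost(extra_tokens_per_call: int,
                               calls_per_day: int,
                               usd_per_million_input_tokens: float,
                               days: int = 30) -> float:
    """Extra monthly spend caused by a longer prompt (input tokens only)."""
    extra_tokens = extra_tokens_per_call * calls_per_day * days
    return extra_tokens / 1_000_000 * usd_per_million_input_tokens


# 500 extra tokens on 10,000 calls a day at an assumed $2.50 per million input tokens.
print(monthly_prompt_growth_cost(500, 10_000, 2.50))
```

At an assumed $2.50 per million input tokens the change adds $375 a month; at an assumed $15 per million it adds $2,250. The per-token rate of the model behind the prompt matters as much as the token count.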

Model selection creates even larger step-changes. Routing a task to GPT-4o rather than a smaller, task-appropriate model can multiply per-call cost by 5–20×. At production scale, this single architectural default generates cost variance that static budgets cannot absorb.
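The size of the step-change depends on the price gap between tiers. This sketch compares the cost of one call on two hypothetical tiers; the per-million-token prices are illustrative assumptions chosen to resemble a frontier model and a small model, so check current provider price lists before reusing them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    """Per-million-token prices for one model tier (illustrative values only)."""
    name: str
    usd_per_m_input: float
    usd_per_m_output: float

    def call_cost(self, input_tokens: int, output_tokens: int) -> float:
        return (input_tokens * self.usd_per_m_input
                + output_tokens * self.usd_per_m_output) / 1_000_000


# Hypothetical tiers; substitute current prices from your providers.
FRONTIER = ModelPrice("frontier-tier", usd_per_m_input=2.50, usd_per_m_output=10.00)
SMALL = ModelPrice("small-tier", usd_per_m_input=0.15, usd_per_m_output=0.60)


def cost_multiplier(expensive: ModelPrice, cheap: ModelPrice,
                    input_tokens: int, output_tokens: int) -> float:
    """How many times more one call costs on `expensive` than on `cheap`."""
    return (expensive.call_cost(input_tokens, output_tokens)
            / cheap.call_cost(input_tokens, output_tokens))
```

With these assumed prices, a call with 1,500 input and 300 output tokens costs about 16.7× more on the frontier tier, inside the 5–20× range above. Because both tiers here happen to share the same input-to-output price ratio, the multiplier does not change with the token mix; with real price lists it usually does.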

A seemingly minor change in prompt structure or application usage can double inference costs overnight. This volatility makes traditional budgeting models — built around predictable compute and storage usage — largely ineffective.

Dimension 3 — Agentic Multiplication and the Recursive Loop Risk

Agentic AI has introduced a cost risk category with no equivalent in traditional cloud computing: the recursive reasoning loop.

Where a standard chatbot processes one query with one LLM call, an agentic workflow:

- Chains 10–20 LLM calls per task

- Retrieves context from vector databases (embedding generation + retrieval billed separately)

- Calls external tools and APIs (each call priced independently)

- Re-reasons and validates outputs (additional passes at full inference cost)
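The multiplication in the list above compounds because each step in a chain typically re-sends a growing context. The sketch below estimates the cost of an N-step chain under the simplifying assumption that every step re-sends the base context plus all earlier step outputs; the prices and token counts are illustrative, and real agents (with tool results, caching, or summarisation) will behave differently.

```python
def chain_cost(steps: int, base_input: int, output_per_step: int,
               usd_per_m_input: float, usd_per_m_output: float) -> float:
    """Estimated cost of an agent chain where step i re-sends the base context
    plus the outputs of all i earlier steps (a simplifying assumption)."""
    total = 0.0
    for i in range(steps):
        input_tokens = base_input + i * output_per_step
        total += (input_tokens * usd_per_m_input
                  + output_per_step * usd_per_m_output) / 1_000_000
    return total


single_call = chain_cost(1, 2_000, 300, 2.50, 10.00)
fifteen_steps = chain_cost(15, 2_000, 300, 2.50, 10.00)
```

With an assumed 2,000-token base context, 300 output tokens per step, and $2.50/$10.00 per million input/output tokens, one call costs $0.008 while a 15-step chain costs about $0.199, roughly 25× more. The multiplier exceeds the number of calls because the context grows at every step.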

A single agent in a recursive loop, searching for an answer it cannot definitively find and retrying indefinitely, can generate thousands of dollars per hour at hyperscaler inference rates. This “Predictability Gap” led to a $400 million collective “leak” in unbudgeted cloud spend across the Fortune 500 in Q1 2026, driven by autonomous AI agents managing everything from supply chain logistics to real-time customer sentiment analysis.

At the Gartner Data & Analytics Summit in March 2026, the atmosphere shifted from awe to anxiety. 2026 has become the year of the FinOps Reckoning — and US enterprises are finally fighting back against Agentic Resource Exhaustion.

Monthly billing reviews cannot govern this risk. The governance model that prevents recursive loop cost spirals operates at the minute-level, with automated kill-switch infrastructure — not the month-level, with retrospective invoice analysis.
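Minute-level governance can be sketched as a sliding-window spend-rate breaker: each billed call is recorded with a timestamp, and the run is flagged for termination once spend inside the window exceeds a limit. The class name, the 60-second window, and the $5 limit in the usage note are assumptions for illustration, and the mechanism that actually stops the agent is left to the caller.

```python
from collections import deque


class SpendRateBreaker:
    """Trips when spend inside a sliding time window exceeds a limit (hypothetical sketch)."""

    def __init__(self, window_seconds: float, max_usd_in_window: float):
        self.window_seconds = window_seconds
        self.max_usd_in_window = max_usd_in_window
        self._events: deque[tuple[float, float]] = deque()  # (timestamp, usd)
        self._window_total = 0.0
        self.tripped = False

    def record(self, timestamp: float, usd: float) -> bool:
        """Record one billed call; return True if the run may continue."""
        self._events.append((timestamp, usd))
        self._window_total += usd
        # Drop events that have aged out of the window.
        while self._events and self._events[0][0] <= timestamp - self.window_seconds:
            _, old_usd = self._events.popleft()
            self._window_total -= old_usd
        if self._window_total > self.max_usd_in_window:
            self.tripped = True  # latch: the caller should terminate or suspend the run
        return not self.tripped
```

With calls costing $0.25 every two seconds and a $5-per-minute limit, the breaker trips on the 21st call, the first point at which spend inside one minute exceeds $5. Once tripped it stays tripped, so a loop cannot resume just because older spend has aged out of the window.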

Dimension 4 — Multi-Provider Attribution Without a Common Schema

Many enterprises run AI workloads across several of Azure OpenAI, AWS Bedrock, Google Vertex AI, Hugging Face, the direct Anthropic API, Databricks, and Snowflake ML at the same time. Each uses its own billing schema and attribution mechanism:

| Provider | Billing Unit | Attribution Mechanism |
|---|---|---|
| Azure OpenAI | Tokens (input + output) | Azure resource groups |
| AWS Bedrock | Tokens / requests (model-dependent) | Application Inference Profiles |
| Google Vertex AI | Compute hours + tokens + data processing | Project labels |
| Databricks | DBUs (Databricks Units) | Cluster and job tags |
| Snowflake ML | Credits + compute time | Warehouse and query tags |
| Direct API (Anthropic etc.) | Tokens | API key attribution only |

Without normalisation, cross-provider AI cost comparison is structurally impossible. Finance teams working across these schemas produce estimates, not financial intelligence.
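Once costs share a unit, attribution still needs one ownership key. A minimal sketch, assuming the organisation maintains its own lookup from each provider's attribution value (resource group, inference profile, label, tag, or API key) to an owning team; every key and team name below is hypothetical. Anything that does not resolve is reported as `unallocated` so tagging gaps stay visible.

```python
# Hypothetical mapping an organisation would maintain; none of these names
# come from the providers themselves.
OWNER_BY_ATTRIBUTION: dict[tuple[str, str], str] = {
    ("azure_openai", "rg-support-bot"): "customer-support",
    ("aws_bedrock", "profile-search"): "search",
    ("vertex_ai", "team=search"): "search",
    ("databricks", "team:ml-platform"): "ml-platform",
    ("snowflake_ml", "query_tag=forecasting"): "finance-analytics",
    ("anthropic_api", "key-7f3a"): "customer-support",
}


def resolve_owner(provider: str, attribution_value: str) -> str:
    """Map a provider-specific attribution value to a team, or 'unallocated'."""
    return OWNER_BY_ATTRIBUTION.get((provider, attribution_value), "unallocated")


def cost_by_owner(line_items: list[tuple[str, str, float]]) -> dict[str, float]:
    """Sum (provider, attribution_value, usd) line items per owning team."""
    totals: dict[str, float] = {}
    for provider, attribution_value, usd in line_items:
        owner = resolve_owner(provider, attribution_value)
        totals[owner] = totals.get(owner, 0.0) + usd
    return totals
```

The same team can appear under different mechanisms on different providers (a resource group on Azure, an API key on a direct API); the lookup is what lets their spend be added together.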

Dimension 5 — The ROI Articulation Gap: Cost Without Outcome

The most consequential dimension of AI cloud chaos is not the cost itself — it is the inability to connect AI cost to the business value it generates.

AI infrastructure spend appears clearly on cloud invoices. The outcomes it funds (features shipped, customers retained, decisions automated, margins preserved) appear nowhere in the billing system. Allocating AI costs to business units is even harder than allocating traditional infrastructure costs. Cloud resources map to accounts, projects, and tags. AI usage is embedded inside product features, internal workflows, and agent chains, and it crosses teams and systems by design.

Without this connection, boards cannot answer the most important strategic technology question: is our AI investment generating return proportionate to its cost? FinOps leaders are participating in provider negotiations, commitment modelling, and M&A diligence discussions. They are answering ROI and investment realisation questions rather than merely reporting past spend. But only those with the attribution infrastructure to connect cost to outcome can answer these questions with data rather than estimates.

What Business Clarity Actually Looks Like

Business clarity from AI spend is not a better dashboard. It is a different kind of financial intelligence — one that connects infrastructure decisions to business outcomes in real time, enabling the governance actions that prevent cost chaos from recurring rather than explaining it after the fact.

Clarity looks like this:

An engineering team deploying a new agentic customer service workflow sees the projected cost per resolved interaction at design time — before deployment — enabling them to validate that the workflow economics are positive before committing to production infrastructure.
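A design-time projection of cost per resolved interaction can be as simple as the function below. Unresolved interactions still incur cost, so the sketch divides per-interaction cost by the resolution rate. Every input, including the value per resolution in the usage note, is an assumption a team would replace with its own estimates; this is an illustration of the calculation, not a DigiUsher feature.

```python
def cost_per_resolved_interaction(llm_calls: int, usd_per_call: float,
                                  retrieval_usd: float, tool_usd: float,
                                  resolution_rate: float) -> float:
    """Projected AI cost per successfully resolved interaction."""
    if not 0 < resolution_rate <= 1:
        raise ValueError("resolution_rate must be in (0, 1]")
    per_interaction = llm_calls * usd_per_call + retrieval_usd + tool_usd
    # Unresolved interactions still cost money, so spread the cost over resolved ones only.
    return per_interaction / resolution_rate


projected = cost_per_resolved_interaction(12, 0.01, 0.005, 0.015, 0.70)
```

With 12 LLM calls at an assumed $0.01 each, $0.005 of retrieval, $0.015 of tool calls, and a 70% resolution rate, the projection is $0.20 per resolved interaction. Against an assumed $1.50 of value per resolution, the workflow economics would be positive.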

A FinOps team running weekly AI cost reviews identifies a 40% increase in inference cost attributable to one product team’s model selection change — surfaces it to the team within the week, not the month — and routes the next deployment to a cost-appropriate model tier.
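The weekly review in this scenario amounts to a week-over-week comparison per team. A minimal sketch, assuming weekly AI spend has already been attributed to teams; the 25% threshold and team names are hypothetical. Teams with no prior-week spend are skipped here because a percentage change from zero is undefined; they belong in a separate new-workload review.

```python
def flag_cost_increases(last_week: dict[str, float], this_week: dict[str, float],
                        threshold: float = 0.25) -> dict[str, float]:
    """Return teams whose AI spend grew by at least `threshold` week over week,
    largest increase first."""
    flagged = {}
    for team, current in this_week.items():
        previous = last_week.get(team, 0.0)
        if previous <= 0:
            # No baseline: treat as a new workload for separate review, not a % spike.
            continue
        change = (current - previous) / previous
        if change >= threshold:
            flagged[team] = round(change, 4)
    return dict(sorted(flagged.items(), key=lambda item: item[1], reverse=True))
```

For example, a team whose attributed spend moves from $1,000 to $1,400 is flagged with a 0.4 (40%) increase, while a team moving from $2,000 to $2,100 (5%) is not.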

A CFO presenting quarterly AI investment performance to the board shows cost per AI product line, cost per customer interaction, and gross margin impact per AI feature — produced automatically from attribution data, not manually assembled from six separate provider invoices.

A platform operations team receives an automated alert at 2:17 AM: agentic workflow #473 has entered an apparent reasoning loop and consumed 340% of its configured per-run compute threshold. The kill-switch terminates the process automatically. Total cost: £47. Without the kill-switch: £2,300 by 6:00 AM.

This is the operational reality of FinOps for AI working correctly. The contrast with legacy governance is not incremental — it is structural.

The organisations successfully navigating this challenge share a common trait: they have reimagined FinOps as a strategic function, not an after-the-fact accounting exercise. They treat AI economics as a living ecosystem that is measurable, visible, and continuously optimised. FinOps for AI is not only about controlling costs; it is about translating complexity into clarity.

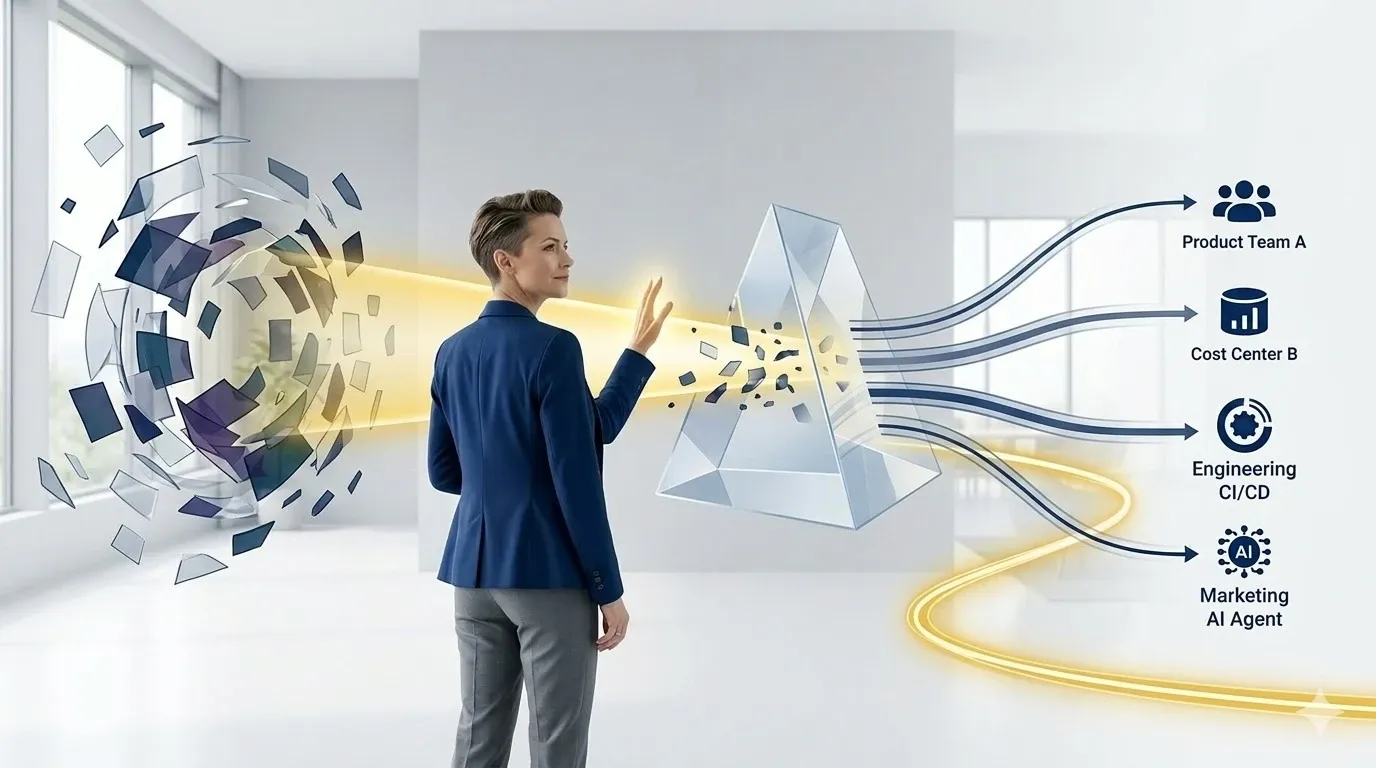

DigiUsher’s FinOps OS: Turning AI Cost Chaos Into Business Clarity

DigiUsher is purpose-built for the economic reality of the AI era — where AI workloads drive cloud spend in ways that legacy tools cannot govern, and where business clarity from AI investment requires a layer of financial intelligence that sits above individual cloud billing systems.

Our core motto has not changed since launch. It is more relevant now than it has ever been:

Improve ROI dramatically. Build efficient solutions through unit economics. Track every pound spent to accelerate delivery.

Seven Integrated Capabilities

1 — Unified AI Cost Observability: One View. Every Provider. Every Dollar Attributed.

DigiUsher normalises cost data from Azure OpenAI, AWS Bedrock, Google Vertex AI, Hugging Face, direct API providers, Kubernetes GPU clusters (EKS, AKS, GKE, OKE), Databricks, and Snowflake ML to FOCUS 1.x in a single attributed cost model.

The result: the cross-provider financial view that no native billing tool provides — enabling cost comparison across providers, unified team attribution, and AI unit economics across the entire AI estate from one source of truth.
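FOCUS gives billing data common column names; version 1.0 includes, among others, BilledCost, EffectiveCost, BillingCurrency, ProviderName, ServiceName, ServiceCategory, ConsumedQuantity, ConsumedUnit, ChargePeriodStart, ChargePeriodEnd, and Tags. The sketch below maps one AI line item onto that subset and then compares providers with a simple group-by. The mapping function, its inputs, and the sample values are hypothetical, later 1.x versions add or rename columns, and this is not a description of DigiUsher's internal pipeline.

```python
from collections import defaultdict


def to_focus_row(provider: str, service: str, billed_usd: float, effective_usd: float,
                 quantity: float, unit: str, period_start: str, period_end: str,
                 tags: dict[str, str]) -> dict:
    """Map one normalised AI line item onto a subset of FOCUS 1.0 column names."""
    return {
        "ProviderName": provider,
        "ServiceName": service,
        "ServiceCategory": "AI and Machine Learning",
        "BilledCost": billed_usd,
        "EffectiveCost": effective_usd,  # after discounts and amortised commitments
        "BillingCurrency": "USD",
        "ConsumedQuantity": quantity,
        "ConsumedUnit": unit,
        "ChargePeriodStart": period_start,
        "ChargePeriodEnd": period_end,
        "Tags": dict(tags),
    }


def effective_cost_by_provider(rows: list[dict]) -> dict[str, float]:
    """Once every row shares the same columns, cross-provider comparison is a group-by."""
    totals: dict[str, float] = defaultdict(float)
    for row in rows:
        totals[row["ProviderName"]] += row["EffectiveCost"]
    return dict(totals)
```

Grouping on `Tags` instead of `ProviderName` gives the team-level view from the same rows.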

2 — AI Unit Economics at Every Level of Granularity

DigiUsher produces AI cost attribution at the granularity that FinOps, engineering, and finance each require:

| Level | Metric | Who Uses It |
|---|---|---|
| Workload | Cost per AI workload | FinOps, Engineering |
| Training | Cost per model training run | ML teams, Finance |
| Version | Cost per model version | Product, ML teams |
| Feature | Cost per AI feature | Product, Finance |
| Product Line | Cost per product line | CFO, CIO, Board |
| Operational | Cost per user / per API call / per training cycle | Engineering, FinOps |

This precision enables smart design choices at the engineering layer, budget accountability at the product layer, and ROI demonstration at the executive layer — simultaneously.
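Once records carry attribution tags, the levels in the table are roll-ups of the same data. A minimal sketch, assuming each attributed record is a dict with hypothetical keys `feature`, `product_line`, `api_calls`, and `usd`; it produces cost per feature, cost per product line, and cost per API call from one pass.

```python
from collections import defaultdict


def unit_economics(records: list[dict]) -> dict[str, dict[str, float]]:
    """Roll attributed cost records up to feature and product-line level."""
    by_feature = defaultdict(lambda: {"usd": 0.0, "api_calls": 0})
    by_product_line: dict[str, float] = defaultdict(float)
    for r in records:
        feature = by_feature[r["feature"]]
        feature["usd"] += r["usd"]
        feature["api_calls"] += r["api_calls"]
        by_product_line[r["product_line"]] += r["usd"]
    cost_per_call = {name: v["usd"] / v["api_calls"]
                     for name, v in by_feature.items() if v["api_calls"]}
    return {
        "cost_per_feature": {name: v["usd"] for name, v in by_feature.items()},
        "cost_per_product_line": dict(by_product_line),
        "cost_per_api_call": cost_per_call,
    }
```

Because every level is derived from the same attributed records, the engineering view (cost per call) and the board view (cost per product line) always reconcile.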

3 — Agentic Workflow Governance: Automated Kill-Switches Before Cost Compounds

DigiUsher governs agentic AI with the real-time enforcement infrastructure that prevents the recursive loop cost pattern:

- Per-chain token attribution: every agentic workflow chain tracked from initiation to completion, with total cost attributed to the owning team and product

- Automated budget caps: configurable per-run and per-day thresholds with throttle and suspend actions that trigger before spend reaches invoice level

- Kill-switch infrastructure: automated process termination when consumption exceeds configured thresholds, the governance mechanism designed to stop the recursive-loop pattern behind the Fortune 500 $400M leak

- Human-in-the-loop triggers: configurable approval requirements that pause a process before its next execution cycle once its cost exceeds a defined threshold
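The escalation described in these bullets (throttle, then human approval, then suspension) can be sketched as a per-run policy check evaluated before each execution cycle. The class, the action names, and the default thresholds (80%, 100%, and 150% of the per-run budget) are hypothetical and do not describe DigiUsher's API.

```python
from enum import Enum


class Action(Enum):
    CONTINUE = "continue"
    THROTTLE = "throttle"
    REQUIRE_APPROVAL = "require_approval"
    SUSPEND = "suspend"


class RunBudgetGuard:
    """Per-run budget policy: throttle first, then ask a human, then suspend (hypothetical)."""

    def __init__(self, per_run_usd: float, throttle_at: float = 0.8,
                 approval_at: float = 1.0, suspend_at: float = 1.5):
        if per_run_usd <= 0:
            raise ValueError("per_run_usd must be positive")
        if not 0 < throttle_at <= approval_at <= suspend_at:
            raise ValueError("expected 0 < throttle_at <= approval_at <= suspend_at")
        self.per_run_usd = per_run_usd
        self.throttle_at = throttle_at
        self.approval_at = approval_at
        self.suspend_at = suspend_at

    def check(self, spent_usd: float, approved: bool = False) -> Action:
        """Return the action to take before the run's next execution cycle."""
        ratio = spent_usd / self.per_run_usd
        if ratio >= self.suspend_at:
            return Action.SUSPEND  # hard stop, even if a human approved earlier
        if ratio >= self.approval_at and not approved:
            return Action.REQUIRE_APPROVAL
        if ratio >= self.throttle_at:
            return Action.THROTTLE
        return Action.CONTINUE
```

With a $10 per-run budget, a run is throttled at $8, paused for approval at $10, allowed to continue (throttled) after approval, and suspended at $15 regardless of approval.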

4 — GPU and Inference Cost Governance

For GPU-intensive AI workloads running on Kubernetes clusters and hyperscaler GPU instances:

- Utilisation monitoring per cluster and per node pool, surfacing idle GPU capacity (average enterprise GPU utilisation is 20–35%; DigiUsher identifies the 65–80% of capacity that sits idle)

- Automated scale-down triggers when utilisation falls below configurable thresholds

- Model routing intelligence — identifying where frontier model calls can be replaced by appropriately tiered alternatives without quality loss

- Inference optimisation signals — semantic caching opportunities, request batching candidates, quantisation potential — translating governance into actionable cost reduction of 30–50% on governed workloads
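One way to act on the utilisation figures above is to convert average utilisation into idle GPU-hours and flag node pools that stay under a threshold. This is a hypothetical policy sketch: the 35% threshold, the 12-sample window, and the pool names are assumptions, and the samples would come from whatever GPU metrics source a cluster already uses.

```python
from statistics import mean


def idle_gpu_hours(gpu_count: int, hours: float, avg_utilisation: float) -> float:
    """GPU-hours paid for but not used over a period."""
    if not 0 <= avg_utilisation <= 1:
        raise ValueError("avg_utilisation must be between 0 and 1")
    return gpu_count * hours * (1 - avg_utilisation)


def scale_down_candidates(pools: dict[str, list[float]], threshold: float = 0.35,
                          min_samples: int = 12) -> list[str]:
    """Flag node pools whose most recent `min_samples` utilisation readings average
    below `threshold`. Pools with too little data are skipped rather than guessed at."""
    candidates = []
    for name, samples in pools.items():
        recent = samples[-min_samples:]
        if len(recent) == min_samples and mean(recent) < threshold:
            candidates.append(name)
    return sorted(candidates)
```

For example, eight GPUs averaging 25% utilisation over a day leave 144 idle GPU-hours. Requiring a full window of samples before flagging a pool avoids scaling down on a single quiet reading.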

5 — Multi-Cloud and Kubernetes Attribution

DigiUsher provides workload-level cost attribution across:

- AWS (EC2, EKS, Bedrock, SageMaker, Lambda)

- Azure (AKS, Azure OpenAI, Azure ML, Container Instances)

- GCP (GKE, Vertex AI, Cloud Run, BigQuery ML)

- Kubernetes (EKS, AKS, GKE, OKE — namespace, pod, and service level)

- Databricks (cluster, job, and workflow level)

- Snowflake ML (warehouse, query, and compute time)

Seamless integration into CI/CD, IaC, and tagging systems enables cost signals to reach engineering teams at the point of architectural decision — before commitments are made — through DigiUsher’s developer portal integrations.
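Shifting cost signals left often takes the form of a CI check that compares a change's estimated monthly cost against the current baseline. A minimal sketch, assuming the pipeline already produces both estimates from some cost-estimation step; the 10% limit and the messages are hypothetical, and changes with no baseline are routed to manual review rather than auto-approved.

```python
def cost_gate(baseline_monthly_usd: float, proposed_monthly_usd: float,
              max_increase_pct: float = 10.0) -> tuple[bool, str]:
    """Decide whether a change may merge, given estimated monthly cost before and after."""
    if baseline_monthly_usd <= 0:
        return False, "no baseline estimate; route to manual cost review"
    increase_pct = (proposed_monthly_usd - baseline_monthly_usd) / baseline_monthly_usd * 100
    if increase_pct > max_increase_pct:
        return False, (f"estimated monthly cost +{increase_pct:.1f}% "
                       f"exceeds {max_increase_pct:.1f}% limit")
    return True, f"estimated monthly cost change {increase_pct:+.1f}% within limit"
```

A CI job could print the message and exit non-zero when the first element is `False`, putting the cost signal in front of the engineer at review time.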

6 — Visual Dashboards for Every Stakeholder

DigiUsher’s dashboards are not one-size-fits-all reporting. They are purpose-built for the three audiences who need AI cost intelligence simultaneously but differently:

FinOps teams: real-time anomaly detection, commitment utilisation tracking, cross-provider normalisation, tagging coverage metrics, and continuous rightsizing signals.

Engineering teams: cost per deployment, namespace-level attribution, model cost comparison, inline cost estimates in provisioning workflows, and non-production scheduling alerts.

CxO and Board: cost per AI product, gross margin impact per AI feature, cloud ROI per AI initiative, commitment utilisation versus plan, and AI investment attribution in board-ready format.

7 — Delivery Acceleration Through Financial Clarity

DigiUsher traces cost across every engineering step from provisioning to deployment — identifying redundant infrastructure layers, duplicated AI service subscriptions, and idle environments that accumulate financial waste while slowing delivery.

Teams that eliminate governance-invisible cost waste accelerate delivery: less time investigating billing anomalies, fewer architecture debates without cost data, faster decisions enabled by unit economics at the point of design.

DigiUsher customers report up to 20% additional savings over traditional cloud cost tools, with the platform paying for itself in under 90 days.

Why DigiUsher Is Built for the AI Era — Not Retrofitted for It

The fundamental distinction between DigiUsher and legacy cloud cost management tools is architectural, not feature-level.

Legacy tools were built for predictable infrastructure billing and extended toward AI as the category grew. DigiUsher was built for the FinOps Operating System model — unified governance across cloud, AI, SaaS, Kubernetes, and marketplace in a single normalised platform — with AI economics as a first-class design concern from the ground up.

Legacy Cloud Cost Tool → AI Extension:

- Cloud cost visibility + AI add-on module

- Still billing-schema-limited

- Still monthly cadence governance

- Still lacks workload-level AI attribution

DigiUsher FinOps OS:

- Unified cost schema (FOCUS 1.x) from day one

- Real-time enforcement, not retrospective reporting

- Workload-level AI attribution across all providers

- Agentic governance with automated kill-switch

- AI unit economics as core product output

In 2026, the conversation is shifting from “How do we reduce cloud waste?” to “How do we maximise return per dollar invested in AI and cloud?” DigiUsher is built for the second question, the one that matters now.

The FinOps for AI Maturity Arc: Where Are You?

The FinOps Foundation’s 2026 State of FinOps data reveals a significant maturity gap between what enterprises need for AI governance and where most currently operate:

| Maturity Level | % of Organisations | AI Governance Capability |
|---|---|---|
| Crawl | 34.4% | Basic cloud cost visibility; no AI-specific attribution |
| Walk | 51.4% | Some AI cost tracking; no real-time enforcement or unit economics |
| Run | 14.2% | Full AI unit economics; automated enforcement; board-level ROI reporting |

AI cost management stands out as the single fastest-growing area of FinOps scope expansion — but governance maturity has not kept pace with adoption velocity.

The cost of remaining at Crawl or Walk maturity is not theoretical. The AI maturation cycle has compressed from a decade (cloud) to approximately two years. Teams at basic maturity stages are accumulating the governance debt that the AI FinOps Reckoning will eventually expose.

DigiUsher’s implementation framework is designed to accelerate this arc — moving enterprises from Walk to Run maturity in 60–90 days, with governance infrastructure that scales as AI adoption scales.

Built for Multi-Cloud, Engineered for the AI-First Enterprise

DigiUsher’s enterprise trust and certification architecture:

- AWS ISV Accelerate Partner — listed on AWS Marketplace

- Microsoft Azure ISV Co-Sell Ready — listed on Azure Marketplace

- Google Cloud Partner

- SOC 2® Type II certified — enterprise-grade security assurance

- GDPR compliant — for regulated industries and European deployments

- BYOC deployment — Bring Your Own Cloud (Secure Relay Proxy) for enterprises requiring data to remain within their own infrastructure perimeter

- Delivered globally through Infosys, Wipro, and Hexaware

Frequently Asked Questions

What is FinOps for AI and how is it different from standard cloud FinOps?

FinOps for AI governs the cost, attribution, optimisation, and ROI measurement of AI workloads — model training, inference pipelines, agentic workflow chains, RAG retrieval, GPU infrastructure, and multi-provider API consumption. It extends traditional cloud FinOps by addressing AI’s structural economic differences: non-linear cost scaling, usage-driven billing that resists standard forecasting, stacked vendor margins across four layers, and the agentic multiplication effect where autonomous workflows consume 15× more compute per task than standard chatbots. Standard cloud FinOps was built for predictable VM billing. AI costs do not scale predictably.

Why can’t legacy cloud cost management tools govern AI spend?

Legacy tools were built for linear infrastructure billing aggregated at the account or service level. AI workloads break this model: a single workflow touches multiple providers with incompatible billing schemas, and agentic loops can generate thousands of dollars per hour before monthly reviews reveal them. 73% of FinOps teams report AI costs exceeded projections, a pattern consistent with applying legacy forecasting to non-linear AI behaviour. Governance that acts on monthly invoices cannot prevent cost that compounds in hours.

What is the $400M agentic AI cloud spend leak?

At the Gartner Data & Analytics Summit in March 2026, research documented $400 million in collective unbudgeted Fortune 500 cloud spend from ungoverned agentic AI. The mechanism: autonomous agents in recursive reasoning loops generating thousands of dollars per hour with no human or automated governance to stop them. The quote that defined the event: “An agent doesn’t know it’s bankrupting you; it just thinks it hasn’t found the answer yet.”

What ROI can enterprises expect from DigiUsher’s FinOps OS for AI?

DigiUsher customers report up to 20% additional savings over traditional cloud cost tools, with the platform paying for itself in under 90 days. Value comes from GPU idle waste elimination, non-production environment scheduling, model routing optimisation (30–50% API cost reduction), prevention of agentic cost spirals, and AI unit economics reporting that builds the business case for next-wave AI investment.

What does DigiUsher govern across multi-cloud AI environments?

AWS (EC2, EKS, Bedrock, SageMaker), Azure (AKS, Azure OpenAI, Azure ML), GCP (GKE, Vertex AI, BigQuery ML), Kubernetes GPU clusters, Databricks, and Snowflake ML — all normalised to FOCUS 1.x in a single attributed cost view with workload-level attribution across every provider simultaneously.

What are DigiUsher’s enterprise certifications?

AWS ISV Accelerate Partner (listed on AWS Marketplace), Microsoft Azure ISV Co-Sell Ready (listed on Azure Marketplace), Google Cloud Partner, SOC 2® Type II certified, GDPR compliant, and BYOC deployment for regulated industries requiring data sovereignty.

How quickly can DigiUsher move enterprises from Walk to Run FinOps maturity for AI?

DigiUsher’s implementation framework moves enterprises from Walk to Run maturity in 60–90 days — with unified AI cost visibility in place within the first two weeks, automated enforcement and unit economics in the first month, and full board-ready AI ROI reporting by day 90.

References

- FinOps Foundation — State of FinOps 2026

- IDC — Balancing AI Innovation and Cost: The New FinOps Mandate (December 2025)

- AnalyticsWeek — The $400M Cloud Leak: Why 2026 Is the Year of AI FinOps (March 2026)

- theCUBE Research — FinOps 2026: Shift Left and Up as AI Drives Technology Value (February 2026)

- Revenium — The 2026 State of FinOps Report: AI ROI Is the Question No One Can Answer (March 2026)

- Intellectt — Cloud Cost Optimisation in 2026: How AI-Driven FinOps Is Transforming Enterprise IT Spending

- Oplexa — AI Inference Cost Crisis 2026

- AnalyticsWeek — Inference Economics: Solving the 2026 Enterprise AI Cost Crisis

- AnalyticsWeek — AI FinOps and Sovereign Infrastructure: AI Costs in 2026

- Flexera — Agentic FinOps for AI: Autonomous Optimisation for Data Cloud (February 2026)

- nOps — Essential FinOps Statistics for Effective Cloud Financial Management 2026

- FinOps Foundation — FOCUS Specification

- Forrester — Public Cloud Market Outlook 2026: $1.03 Trillion

From Cloud Chaos to Business Clarity — Starting Now

The $400M Fortune 500 AI spend leak did not happen because those organisations were careless. It happened because AI deployment velocity exceeded governance velocity — the same gap every enterprise faces when AI moves from experiment to production without the financial infrastructure to match its speed.

DigiUsher’s FinOps OS closes that gap — with unified AI cost observability, automated enforcement, AI unit economics at every level of granularity, and board-ready ROI reporting that connects every dollar of AI infrastructure investment to the business outcome it generates.

The enterprises that govern AI economics now — before scale makes the governance problem larger than the infrastructure designed to address it — are the ones that build durable AI ROI while competitors are still explaining invoice surprises.

