Reading the Skies: What Bessemer’s State of AI 2025 Means for Business Leaders, FinOps, and the Future of Cost Visibility

Bessemer's State of AI defined the era's growth archetypes: Q2T3, Supernovas, and Shooting Stars. Eight months on, 2026 has delivered a harder lesson: velocity produces waste as fast as it produces value. Average enterprise AI budgets grew from $1.2M to $7M in two years. 73% of FinOps teams report AI costs exceeded original projections. Inference now accounts for 85% of enterprise AI spend. This updated executive playbook translates the Bessemer framework into the financial discipline enterprises need now.

Author: DigiUsher

Read time: 18 min

“AI is the new cloud: fast, expensive, and full of opportunity. Business leaders who want to ride the wave without drowning in costs need a FinOps-first strategy.”

When DigiUsher first published this analysis in October 2025, Bessemer’s State of AI had just drawn the new growth map of the AI era — Supernovas, Shooting Stars, Q2T3. The central warning was velocity without visibility.

Eight months on, the data has answered.

Average enterprise AI budgets grew from $1.2 million in 2024 to $7 million in 2026 — a 5.8× increase in two years. 73% of FinOps teams report AI costs exceeded original budget projections. Inference now accounts for 85% of the enterprise AI budget. Agentic AI workloads consume 15× more tokens per task than the standard chatbots enterprises used to model costs against.

The Bessemer framework was correct, and the velocity it described has arrived. What follows is the executive playbook, updated with the 2026 inference economics data that confirms the warning, along with the governance framework for acting on it now.

Why 2026 Has Answered With Harder Questions

The AI universe Bessemer described in 2025 has continued to form. Foundation models from OpenAI, Anthropic, Google (Gemini), Meta (Llama), and xAI dominate the infrastructure layer, improving performance while exploring vertical integration. The Model Context Protocol has become the “USB-C of AI”: a standardised mechanism for AI agents to access external APIs, tools, and data persistently across interactions. Agentic browsers have emerged as a new interaction surface, and generative video is appearing on the forecast horizon.

Every prediction in Bessemer’s roadmap is materialising. So is the warning embedded within it.

The AI cost paradox of 2026:

- Per-token API cost reduction since 2022: 93% (prices falling)

- Enterprise generative AI spend, 2024: $11.5 billion

- Enterprise generative AI spend, 2025: $37 billion (3.2× in one year)

- Average enterprise AI budget, 2024: $1.2 million

- Average enterprise AI budget, 2026: $7 million (5.8× in two years)

- Enterprises spending $10M+ annually on AI: 40%

- FinOps teams reporting AI costs above original projections: 73%

Lower unit cost. Dramatically higher total spend.

This is not an anomaly. It is the Jevons Paradox for AI — the economic phenomenon where efficiency improvements in resource consumption drive higher total consumption because lower unit cost makes broader adoption viable. AI per-token costs falling 93% has not reduced AI budgets. It has enabled more use cases, more agentic workflows, more always-on monitoring — generating token volume growth that has consumed every per-unit efficiency gain and then some.
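The arithmetic behind the paradox fits in a few lines. The sketch below is a simplification that assumes total spend is just unit price multiplied by volume, with no fixed costs; the function names are illustrative, not taken from any FinOps tool. It shows how much volume growth a price cut can absorb before total spend rises.

```python
# Sketch: total spend = unit price x volume (assumes no fixed costs).

def breakeven_volume_multiplier(price_reduction: float) -> float:
    """Volume growth that keeps total spend flat after a unit-price cut.

    price_reduction is a fraction, e.g. 0.93 for a 93% cut.
    """
    if not 0 <= price_reduction < 1:
        raise ValueError("price_reduction must be in [0, 1)")
    return 1 / (1 - price_reduction)


def spend_multiplier(price_reduction: float, volume_multiplier: float) -> float:
    """Change in total spend after a unit-price cut and a volume change."""
    return (1 - price_reduction) * volume_multiplier


# A 93% price cut is fully offset once volume grows ~14.3x.
print(round(breakeven_volume_multiplier(0.93), 1))  # 14.3
# Hypothetical: if volume grows 50x, total spend still rises ~3.5x.
print(round(spend_multiplier(0.93, 50), 2))  # 3.5
```

Any volume growth beyond the break-even multiplier turns a price cut into a larger bill, which is the pattern the spend figures above describe.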

The question for 2026 is no longer whether to adopt AI. It is whether the financial governance infrastructure is as ambitious as the AI adoption agenda.

Bessemer’s Two Archetypes — and Their FinOps Implications

Bessemer studied 20 high-growth AI companies and identified two distinct patterns. Understanding both is essential — because they describe not just startup trajectories but enterprise AI programme patterns.

Supernovas — Explosive Growth, Compressed Margins

Supernovas sprint from seed to $100M ARR, often within 18 months. The headline numbers: ~$40M ARR in year one, ~$125M in year two, and $1.13M revenue per employee, an efficiency level with little precedent in software.

The financial reality behind the headline: gross margins around 25%, sometimes negative. Revenue can be fragile, because rapid adoption may reflect low switching costs or novelty rather than durable value. Products built as thin wrappers around foundation models face compression risk as models improve and competitors access the same capabilities.

The FinOps pattern that Supernovas embody: growing at speeds where governance cannot keep pace. Revenue accelerates; cost structures are not validated until scale makes the unit economics problem a structural P&L crisis.

Shooting Stars — Sustainable Velocity, 60% Margins

Shooting Stars follow the Q2T3 benchmark: quadruple, quadruple, triple, triple, triple. They start smaller (~$3M ARR year one), reach $100M ARR by year four, and maintain ~60% gross margins throughout.
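The Q2T3 multipliers compound quickly. A minimal sketch, assuming ARR is measured once per year and the multipliers apply exactly (real companies only approximate them), reproduces the Shooting Star path from the ~$3M year-one figure; `growth_path` and `first_year_reaching` are hypothetical helpers.

```python
from typing import Optional


def growth_path(start_arr: float, multipliers: list[float]) -> list[float]:
    """Year-by-year ARR: year 1 is start_arr, then each yearly multiplier is applied."""
    path = [start_arr]
    for m in multipliers:
        path.append(path[-1] * m)
    return path


def first_year_reaching(path: list[float], target: float) -> Optional[int]:
    """1-based year in which ARR first meets or exceeds target, else None."""
    for year, arr in enumerate(path, start=1):
        if arr >= target:
            return year
    return None


Q2T3 = [4, 4, 3, 3, 3]  # quadruple, quadruple, triple, triple, triple

path = growth_path(3, Q2T3)  # $M ARR, starting at ~$3M in year one
print(path)                            # [3, 12, 48, 144, 432, 1296]
print(first_year_reaching(path, 100))  # 4
```

Year three lands at $48M and year four at $144M, so the idealised path crosses $100M during year four, consistent with the benchmark.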

Bessemer’s thesis is explicit: “while we love Supernovas, we believe this era will be defined not by a few outliers — but by hundreds of Shooting Stars.” The Shooting Star margin is not accidental. It is the product of data and memory moats rather than model proximity, meaningful switching costs, reproducible evaluation frameworks, and — critically — tracking cost per inference from the first production workload.

The FinOps pattern Shooting Stars demonstrate: AI growth and margin discipline are not mutually exclusive. The 35-percentage-point gross margin advantage over Supernovas is the measurable financial value of governance discipline applied from inception rather than retrofitted at scale.

What This Means for Enterprises

Enterprises adopting AI at scale are not building startups — but they are making the same architectural and governance decisions that determine whether an AI programme behaves like a Supernova (explosive cost growth, compressed margins, structural fragility) or a Shooting Star (sustainable velocity, durable unit economics, governed cost per outcome).

The choice is made in the first 90 days of production deployment — before scale makes the governance problem larger than the governance infrastructure can address.

The Jevons Paradox for AI

The single most dangerous financial assumption in enterprise AI strategy is this: prices are falling, so AI costs will naturally come down.

Stanford HAI’s 2025 AI Index documented a more than 280-fold reduction in the inference cost of GPT-3.5-level performance between November 2022 and October 2024. Every major AI provider dropped prices 30–70% in early 2026 as NVIDIA flooded the market with additional GPU capacity.

Total enterprise AI spend grew from $11.5 billion in 2024 to $37 billion in 2025. The average enterprise AI budget grew 5.8× in two years.

This is the Jevons Paradox for AI: lower unit costs make AI deployment viable for more use cases, more teams, and more agentic workflows — generating consumption volume growth that systematically outpaces per-unit savings.

Agentic amplification makes the paradox structural. Compare how each workload pattern consumes inference:

- Standard chatbot: 1 LLM call per user interaction

- Agentic workflow: 10–20 LLM calls per task resolution

- RAG pipeline: adds context retrieval, embedding generation, and reranking

- Always-on monitoring: continuous inference, 24/7, with no natural ceiling

Net effect: agentic AI consumes 15× the token volume of standard chat per task, so net spend keeps rising even as per-token prices fall.

The consequence for enterprise financial planning: organisations that modelled AI economics assuming price declines would self-regulate spend are discovering that agentic deployment multiplies volume faster than prices fall. The answer to AI cost governance is not waiting for efficiency gains. It is governing consumption at the workload level, before volume growth consumes every efficiency improvement the market provides.

Five Economic Forces Every Business Leader Must Govern

1 — The Training→Inference Cost Inversion

In 2023, enterprise AI conversations centred on training cost — the one-time, large compute event of building or fine-tuning a model. Training is visible, bounded, project-scoped.

In 2026, 85% of the enterprise AI budget is inference — the ongoing, per-request cost of running models in production. Inference is invisible in standard cloud billing, unbounded unless explicitly governed, and scales with every customer interaction, agent action, and always-on monitoring process.

The financial consequence: a CFO who models AI costs around training budgets is missing the cost category that makes up 85% of AI spend.

2 — The Gross Margin Compression Spiral

AI-first companies operate at 50–65% gross margins versus the 80–90% traditional SaaS benchmark. The mechanism: inference spend appears directly in COGS because it scales with every customer interaction rather than approaching zero at scale.

At the most exposed end, a Supernova with 25% gross margins and $125M ARR carries COGS of $93.75M, most of it inference infrastructure. Three of every four revenue dollars are consumed by the cost of running the product.
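The COGS figure follows directly from the margin: COGS equals revenue multiplied by one minus the gross margin. A small sketch with an illustrative helper reproduces it and sets it beside a Shooting Star-like 60% margin on the same revenue (figures in $M).

```python
def gross_margin_breakdown(revenue: float, gross_margin: float) -> dict[str, float]:
    """Split revenue into COGS and gross profit for a given gross margin."""
    cogs = revenue * (1 - gross_margin)
    return {"cogs": cogs, "gross_profit": revenue - cogs}


# The Supernova case from the text: $125M ARR at a 25% gross margin.
print(gross_margin_breakdown(125.0, 0.25))
# {'cogs': 93.75, 'gross_profit': 31.25}

# For comparison, the same ARR at a Shooting Star-like 60% margin.
print({k: round(v, 2) for k, v in gross_margin_breakdown(125.0, 0.60).items()})
# {'cogs': 50.0, 'gross_profit': 75.0}
```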

This is not a startup phenomenon. Enterprises embedding AI capabilities into products and services are absorbing the same economics. Microsoft Cloud gross margin fell as AI usage grew, landing around 69% as the AI infrastructure ramp-up compressed the margin that compute services historically delivered.

The financial consequence: every AI architecture decision — model selection, context window size, caching strategy, agentic chain depth — is simultaneously a margin decision. Engineers making these choices without cost signal are making margin decisions without knowing it.

3 — The Big Model Fallacy

Not every AI task requires a frontier model with trillions of parameters. Yet without model routing governance, engineering teams default to the most capable model available — because it produces the best individual output and no financial signal is visible at decision time.

The aggregate economics are severe. Routing a document summarisation task to GPT-4o can cost 20× more than routing it to a smaller, fine-tuned model that achieves 95% of the output quality. At 10,000 daily summarisation requests, this single routing decision is a $70,000–$100,000 monthly cost difference.
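The text does not state a per-request price, so the $70,000–$100,000 range can only be checked by working backwards. The sketch below assumes a flat cost per request, a 30-day month, and the 20× price ratio; at 10,000 requests per day that range implies the larger model costs roughly $0.25–$0.35 per request, which is plausible for long documents. The helper names and the $0.25 forward example are hypothetical.

```python
DAYS_PER_MONTH = 30  # simplifying assumption


def monthly_routing_savings(daily_requests: int, large_cost_per_request: float,
                            cost_ratio: float = 20.0) -> float:
    """Monthly saving from moving requests to a model cost_ratio times cheaper."""
    monthly_requests = daily_requests * DAYS_PER_MONTH
    small_cost_per_request = large_cost_per_request / cost_ratio
    return monthly_requests * (large_cost_per_request - small_cost_per_request)


def implied_large_cost(monthly_savings: float, daily_requests: int,
                       cost_ratio: float = 20.0) -> float:
    """Per-request large-model cost needed to produce a given monthly saving."""
    monthly_requests = daily_requests * DAYS_PER_MONTH
    return monthly_savings / (monthly_requests * (1 - 1 / cost_ratio))


# Working backwards from the $70,000-$100,000 range at 10,000 requests/day:
print(round(implied_large_cost(70_000, 10_000), 3))   # 0.246
print(round(implied_large_cost(100_000, 10_000), 3))  # 0.351
# Forward check with a hypothetical $0.25 per large-model request:
print(round(monthly_routing_savings(10_000, 0.25), 2))  # 71250.0
```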

Model routing combined with semantic caching reduces API call volume by 30–50% for typical enterprise deployments — the highest-return short-term AI optimisation available without changing product architecture.

4 — The Agentic Cost Multiplication

Agentic AI is 2026’s defining governance challenge. A single agentic workflow:

- Chains 10–20 LLM calls to resolve one task

- Retrieves context from RAG pipelines (vector search, embedding lookups, memory retrieval, each billed separately)

- Calls external tools and APIs

- Generates structured output with validation passes

RAG bloat adds a “context tax”: enterprises sending massive context windows with every query pay token costs proportional to document size, not query complexity. Always-on monitoring agents run continuously with no natural billing ceiling.
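The context tax is easiest to see by pricing the same question two ways. This sketch assumes a simple per-token price with separate input and output rates; the $2.50 and $10.00 per million tokens, the token counts, and the `Pricing`/`query_cost` names are all hypothetical, chosen only to illustrate the proportionality.

```python
from dataclasses import dataclass


@dataclass
class Pricing:
    input_per_million: float   # USD per 1M input tokens (hypothetical rate)
    output_per_million: float  # USD per 1M output tokens (hypothetical rate)


def query_cost(p: Pricing, query_tokens: int, context_tokens: int, output_tokens: int) -> float:
    """Cost of one call: every context token is billed as input, whatever the question."""
    input_tokens = query_tokens + context_tokens
    return (input_tokens * p.input_per_million
            + output_tokens * p.output_per_million) / 1_000_000


pricing = Pricing(input_per_million=2.50, output_per_million=10.00)

# Same 200-token question and 500-token answer, two retrieval strategies.
full_document = query_cost(pricing, 200, 60_000, 500)  # whole document in context
top_k_chunks = query_cost(pricing, 200, 2_500, 500)    # e.g. 5 retrieved chunks of ~500 tokens

print(f"full document: ${full_document:.4f} per query")
print(f"top-k chunks:  ${top_k_chunks:.4f} per query")
print(f"context tax multiple: {full_document / top_k_chunks:.1f}x")
```

Under these assumptions, sending the whole 60,000-token document costs about 13× more per query than sending five retrieved chunks, even though the question and answer are identical.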

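The canonical failure mode: an AI agent that saves a customer service representative 15 minutes of work but costs $4.00 in inference tokens delivers negative ROI per interaction whenever those 15 minutes are worth less than $4.00, that is, at a loaded labour cost below $16 per hour. Where that holds, the loss accrues invisibly and continuously until the monthly invoice reveals it.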
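The verdict in that example depends on what the representative’s time is worth. A minimal sketch, assuming the only benefit is labour time saved and the only cost is inference (no review, licensing, or error-handling costs), computes the break-even loaded hourly rate; the hourly rates in the examples are hypothetical.

```python
def net_value_per_interaction(minutes_saved: float, loaded_hourly_rate: float,
                              inference_cost: float) -> float:
    """Labour value of time saved minus inference cost (ignores all other costs)."""
    return minutes_saved / 60 * loaded_hourly_rate - inference_cost


def breakeven_hourly_rate(minutes_saved: float, inference_cost: float) -> float:
    """Loaded hourly rate at which the time saved exactly pays for inference."""
    return inference_cost / (minutes_saved / 60)


print(breakeven_hourly_rate(15, 4.00))            # 16.0
print(net_value_per_interaction(15, 12.0, 4.00))  # -1.0 (hypothetical $12/h rate)
print(net_value_per_interaction(15, 40.0, 4.00))  # 6.0 (hypothetical $40/h rate)
```

At $4.00 per interaction and 15 minutes saved, the agent pays for itself only when the loaded labour rate exceeds $16 per hour, so the same agent can destroy value in one team and add it in another.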

The FinOps Foundation’s 2026 State of FinOps identifies agentic AI cost attribution as the top new practitioner challenge. 73% of FinOps teams report AI costs exceeded original projections. The primary driver: AI economics were modelled on chatbot-level token consumption per workflow, then agentic deployments consumed 15× more.

5 — The Pricing Normalisation Cliff

Current AI API pricing is subsidised. OpenAI generated approximately $3.7 billion in revenue in 2024 while losing an estimated $5 billion, roughly $1.35 lost for every dollar earned. This subsidisation is funded by venture capital and hyperscaler cross-subsidies designed to drive adoption velocity.

When pricing normalises toward economic cost, enterprises face 30–50% cost increases on existing workloads without any corresponding reduction in consumption. Organisations that signed annual AI contracts in 2025 are already paying 2–3× current market rates, and organisations modelling AI economics on current subsidised rates are creating financial exposure that will materialise when normalisation occurs.

The Shooting Star defence: inference efficiency discipline (model routing, semantic caching, right-sized infrastructure) reduces the exposure of AI unit economics to repricing. Enterprises building this discipline now are not just saving money today; they are protecting their AI programme economics against the commercial event that will separate sustainable AI investment from programmes that fail under financial pressure.

The FinOps-First AI Leadership Playbook

This is a practical 12-month governance framework for executives who need to govern AI costs, forecast AI spend intelligently, and connect infrastructure investment to business value. It is designed for organisations that have moved beyond AI experimentation into production deployment — where the governance gap between adoption velocity and financial visibility has real P&L consequences.

Phase 1 — Quick Wins: 0 to 90 Days

Step 1 — Instrument Everything From Day One

Tag AI workloads separately from cloud infrastructure: training, fine-tuning, inference, storage, RAG retrieval, and data egress as distinct cost categories. This single step — establishing AI cost attribution before spend accumulates — is the prerequisite for every governance action that follows.
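In practice, attribution means every billed line item carries a category tag, and anything without a valid one is surfaced rather than silently absorbed. The sketch below assumes billing data has already been exported as simple records; the `ai-cost-category` tag key, the service names, and the amounts are hypothetical, not a specific provider’s schema.

```python
from collections import defaultdict

# Cost categories from Step 1; the tag key below is a hypothetical convention.
AI_COST_CATEGORIES = {
    "training", "fine-tuning", "inference", "storage", "rag-retrieval", "data-egress",
}
TAG_KEY = "ai-cost-category"


def attribute_ai_costs(line_items: list[dict]) -> dict[str, float]:
    """Sum billed cost per AI cost category; untagged or mistagged cost is surfaced."""
    totals: dict[str, float] = defaultdict(float)
    for item in line_items:
        category = item.get("tags", {}).get(TAG_KEY)
        if category not in AI_COST_CATEGORIES:
            category = "unattributed"
        totals[category] += item["cost"]
    return dict(totals)


line_items = [
    {"service": "gpu-cluster", "cost": 12_000.0, "tags": {TAG_KEY: "fine-tuning"}},
    {"service": "llm-api", "cost": 30_500.0, "tags": {TAG_KEY: "inference"}},
    {"service": "vector-db", "cost": 4_200.0, "tags": {TAG_KEY: "rag-retrieval"}},
    {"service": "object-store", "cost": 1_300.0, "tags": {}},
]

print(attribute_ai_costs(line_items))
# {'fine-tuning': 12000.0, 'inference': 30500.0, 'rag-retrieval': 4200.0, 'unattributed': 1300.0}
```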

The FinOps Foundation’s 2026 data confirms: 73% of teams who report AI cost overruns did not have AI-specific cost attribution in place at deployment. The overspend is not the surprise — the surprise is discovering it weeks later from a cloud invoice.

Step 2 — Define AI Unit Economics Per Feature

For every AI feature or agent workflow: define expected business value (revenue uplift, cost avoidance), key cost drivers (GPU hours, API calls, token volume, retrieval costs), and an acceptable time-to-value threshold. Cost per inference and cost per resolved interaction are the two metrics that determine whether an AI capability is margin-accretive or margin-destructive at scale.
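Both metrics are simple ratios once costs are attributed per feature. A minimal sketch, assuming a month of attributed cost and usage counts per feature; the `FeatureEconomics` class, the value-per-resolution input, and all numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class FeatureEconomics:
    """One month of data for a single AI feature (all values hypothetical)."""
    name: str
    inference_calls: int
    resolved_interactions: int
    attributed_ai_cost: float    # USD: tokens, GPU hours, retrieval, etc.
    value_per_resolution: float  # USD: revenue uplift or cost avoided per resolution

    def cost_per_inference(self) -> float:
        return self.attributed_ai_cost / self.inference_calls

    def cost_per_resolution(self) -> float:
        return self.attributed_ai_cost / self.resolved_interactions

    def is_margin_accretive(self) -> bool:
        # Accretive when each resolved interaction is worth more than it costs to produce.
        return self.cost_per_resolution() < self.value_per_resolution


support_agent = FeatureEconomics("support-agent", 120_000, 8_000, 9_600.0, 2.00)
print(f"{support_agent.cost_per_inference():.3f}")   # 0.080
print(f"{support_agent.cost_per_resolution():.2f}")  # 1.20
print(support_agent.is_margin_accretive())           # True
```

Here each resolved interaction costs $1.20 against $2.00 of value, so the feature is margin-accretive; the same cost against $1.00 of value would not be.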

Step 3 — API vs. Self-Hosted Assessment With LCOAI Analysis

Before deploying any new AI workload at scale, evaluate total lifecycle cost — compute, storage, inference volume, retrieval, operational overhead — against managed API alternatives. LCOAI (Levelized Cost of AI) analysis surfaces the crossover point where self-hosted deployment becomes more cost-effective than API consumption. At GPT-4.1 API rates (~$15/1,000 interactions), the crossover for stable, high-volume workloads typically occurs at enterprise scale — making this a strategic architecture question, not a default deployment assumption.
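The crossover can be sketched in two parts. `levelized_cost` borrows the levelized-cost-of-energy convention (discounted lifetime cost divided by discounted lifetime output); treating LCOAI the same way is an assumption, not a published definition. `crossover_volume` finds the monthly volume above which a self-hosted deployment with a fixed monthly cost undercuts the API. The $30,000 fixed cost and $0.003 marginal cost are hypothetical.

```python
def levelized_cost(costs: list[float], outputs: list[float], discount_rate: float) -> float:
    """Discounted lifetime cost divided by discounted lifetime output (LCOE-style).

    costs[t] and outputs[t] are the cost and number of interactions in period t.
    """
    factors = [(1 + discount_rate) ** t for t in range(len(costs))]
    total_cost = sum(c / f for c, f in zip(costs, factors))
    total_output = sum(q / f for q, f in zip(outputs, factors))
    return total_cost / total_output


def crossover_volume(api_cost_per_interaction: float, fixed_monthly_cost: float,
                     self_hosted_marginal_cost: float) -> float:
    """Monthly interactions above which self-hosting is cheaper than the API."""
    saving_per_interaction = api_cost_per_interaction - self_hosted_marginal_cost
    if saving_per_interaction <= 0:
        return float("inf")  # self-hosting never wins on marginal cost
    return fixed_monthly_cost / saving_per_interaction


# API at ~$15 per 1,000 interactions (the rate cited in the text); self-hosted figures hypothetical.
volume = crossover_volume(api_cost_per_interaction=15 / 1_000,
                          fixed_monthly_cost=30_000.0,
                          self_hosted_marginal_cost=0.003)
print(f"{volume:,.0f} interactions per month")  # 2,500,000 interactions per month
```

Below about 2.5 million interactions a month in this example the API stays cheaper; above it, self-hosting wins on cost, provided the fixed figure genuinely includes operational overhead such as staffing and monitoring.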

Phase 2 — Tactical Moves: 3 to 6 Months

Step 4 — Deploy Model Routing and Semantic Caching

Implement a tiered compute strategy directed by task complexity. Document summarisation, classification, and data extraction route to smaller, faster, cheaper models. Multi-step reasoning, code generation, and complex analysis route to frontier models. Semantic caching serves equivalent cached responses for semantically similar queries at near-zero cost.

Combined, routing and caching reduce API call volume by 30–50% for typical enterprise deployments — the highest-return inference optimisation that requires no product architecture change.
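The routing-plus-caching pattern can be sketched in a few dozen lines. This is a toy under stated assumptions: the tier names are placeholders, `embed` is a bag-of-words stand-in for a real embedding model, the 0.9 similarity threshold is arbitrary, and the cache is a linear scan rather than a vector index. It shows the control flow: check the cache, otherwise route by task type, then store the response.

```python
import math
from collections import Counter
from typing import Callable, Optional

# Hypothetical tier names; map them to real models and prices in practice.
ROUTES = {
    "summarise": "small-model", "classify": "small-model", "extract": "small-model",
    "reason": "frontier-model", "code": "frontier-model", "analyse": "frontier-model",
}


def route(task_type: str) -> str:
    # Unknown task types fall back to the frontier tier, favouring quality over cost.
    return ROUTES.get(task_type, "frontier-model")


def embed(text: str) -> Counter:
    # Toy bag-of-words vector standing in for a real embedding model.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class SemanticCache:
    """Linear-scan cache: returns a stored response if a past query is similar enough."""

    def __init__(self, threshold: float = 0.9):
        self.threshold = threshold
        self.entries: list[tuple[Counter, str]] = []

    def get(self, query: str) -> Optional[str]:
        q = embed(query)
        scored = [(cosine(q, vec), resp) for vec, resp in self.entries]
        if not scored:
            return None
        score, response = max(scored, key=lambda s: s[0])
        return response if score >= self.threshold else None

    def put(self, query: str, response: str) -> None:
        self.entries.append((embed(query), response))


def answer(query: str, task_type: str, cache: SemanticCache,
           call_model: Callable[[str, str], str]) -> tuple[str, str]:
    """Serve from cache when possible; otherwise route to a tier and cache the result."""
    cached = cache.get(query)
    if cached is not None:
        return cached, "cache"
    model = route(task_type)
    response = call_model(model, query)
    cache.put(query, response)
    return response, model


def fake_llm(model: str, query: str) -> str:
    return f"[{model}] answer to: {query}"  # stand-in for a real API call


cache = SemanticCache(threshold=0.9)
print(answer("Summarise the Q3 board report", "summarise", cache, fake_llm)[1])  # small-model
print(answer("summarise the q3 board report", "summarise", cache, fake_llm)[1])  # cache
```

The threshold is the key design choice: set it too low and users receive answers to questions they did not ask; set it too high and the cache rarely hits.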

Step 5 — Build Private Evaluations and Data Lineage

Bessemer was explicit: enterprise procurement is increasingly gating AI deals on reproducible model behaviour and robust data lineage. Log inference behaviour, version datasets, track model drift, and build evaluation frameworks that run on private enterprise data. This is not governance overhead — it is the foundation of AI procurement credibility and the defence against governance blind spots that create compliance exposure.

Step 6 — Build the AI TCO Playbook With Pricing Normalisation Scenarios

Develop a comprehensive AI total cost of ownership model that includes current costs plus scenarios for pricing normalisation (+30–50%). Identify which workloads remain viable under normalised pricing and which require architectural changes. This stress test is the CFO’s most important AI governance document — not the current AI bill, but the forward-looking model of what AI economics look like when subsidies normalise.
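A normalisation stress test can start as a simple viability check per workload. The sketch below assumes each workload has an attributed monthly cost and an agreed monthly value, and that repricing scales cost linearly; the `Workload` fields, workload names, and figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    monthly_cost: float   # current AI cost at today's prices (USD)
    monthly_value: float  # business value attributed to the workload (USD)


def stress_test(workloads: list[Workload],
                uplifts: tuple[float, ...] = (0.30, 0.50)) -> dict[str, dict[float, bool]]:
    """For each workload and price uplift: is value still at least the repriced cost?"""
    return {
        w.name: {u: w.monthly_value >= w.monthly_cost * (1 + u) for u in uplifts}
        for w in workloads
    }


portfolio = [
    Workload("document-summary", monthly_cost=8_000, monthly_value=30_000),
    Workload("support-agent", monthly_cost=40_000, monthly_value=55_000),
    Workload("always-on-monitor", monthly_cost=25_000, monthly_value=27_000),
]

for name, verdicts in stress_test(portfolio).items():
    print(name, verdicts)
# document-summary {0.3: True, 0.5: True}
# support-agent {0.3: True, 0.5: False}
# always-on-monitor {0.3: False, 0.5: False}
```

In this example the always-on monitor is viable at today’s prices but fails both scenarios, which marks it for architectural change before any repricing.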

Phase 3 — Strategic Plays: 6 to 12 Months

Step 7 — Operationalise the AI FinOps Centre of Excellence

Establish or expand a FinOps CoE that includes cloud economists, product finance partners, and ML engineers. Embed cost-to-value reviews at every product launch and agentic workflow deployment gate. The FinOps Foundation’s 2026 framework explicitly documents that mature AI FinOps practices — unit economics tracking, AI value quantification, and influencing technology selection — define the leading edge of the discipline. Organisations that build these capabilities now are 12 months ahead of those that wait until AI spend forces the conversation.

Step 8 — Negotiate Vendor Economics With Data

The enterprises with AI cost attribution infrastructure have clear visibility into workload economics — and therefore the strongest negotiating position with AI vendors and cloud providers. Negotiate based on inference volume commitments, SLA requirements, data residency constraints, and BYOC deployment options. Volume discounts, committed use contracts, and reserved capacity are all available — but only to enterprises with the data to negotiate them credibly.

Step 9 — Govern AI Carbon, Compliance, and Ethical Cost at Board Level

AI carries costs that do not appear on cloud invoices: EU AI Act compliance overhead, carbon footprint from GPU energy consumption, and reputational costs from ungoverned model behaviour. Boards are beginning to ask these questions — and the enterprises that have built comprehensive AI governance reporting are answering from data rather than from approximations.

Questions Boards Should Be Asking in 2026

Seven questions that separate enterprises with genuine AI financial governance from those managing AI spend reactively:

- Do we track cost per useful inference — not total token spend — for every AI feature in production?

- Can we reproduce and audit model behaviour on private data as an enterprise procurement prerequisite?

- Are our tagging practices separating training, inference, storage, RAG retrieval, and data egress as distinct cost categories?

- What is our agreed time-to-value threshold for AI feature and agent workflow launch?

- Are we capturing full lifecycle AI costs — compute, storage, licensing, people, evaluation, carbon, and compliance?

- What is our financial exposure if AI API pricing increases 30–50% from current subsidised rates?

- Do we have automated kill-switch infrastructure for agentic workflows that exceed defined compute thresholds?
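An automated kill-switch can be as simple as a token budget that throttles a workflow near its limit and suspends it at the limit. This sketch assumes one budget per workflow run, an 80% throttle threshold, and token counts reported after each LLM call; the class and function names are hypothetical. Because usage is checked after each call, a run can overshoot the budget by at most one call.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    THROTTLE = "throttle"  # e.g. slow down or switch to a cheaper tier
    SUSPEND = "suspend"    # stop the workflow run


class TokenBudgetGuard:
    """Per-workflow token budget with a throttle threshold and a hard stop."""

    def __init__(self, budget_tokens: int, throttle_ratio: float = 0.8):
        self.budget_tokens = budget_tokens
        self.throttle_ratio = throttle_ratio
        self.used_tokens = 0

    def record(self, tokens: int) -> Action:
        """Add tokens consumed by one LLM call and return the action to take next."""
        self.used_tokens += tokens
        if self.used_tokens >= self.budget_tokens:
            return Action.SUSPEND
        if self.used_tokens >= self.throttle_ratio * self.budget_tokens:
            return Action.THROTTLE
        return Action.ALLOW


def run_agent_chain(step_tokens: list[int], guard: TokenBudgetGuard) -> list[Action]:
    """Simulate an agent chain; stop calling the model once the guard says SUSPEND."""
    actions = []
    for tokens in step_tokens:
        action = guard.record(tokens)
        actions.append(action)
        if action is Action.SUSPEND:
            break  # kill-switch: no further LLM calls for this workflow run
    return actions


guard = TokenBudgetGuard(budget_tokens=100_000)
steps = [30_000, 30_000, 25_000, 20_000, 40_000]  # hypothetical tokens per call
print([a.value for a in run_agent_chain(steps, guard)])
# ['allow', 'allow', 'throttle', 'suspend']
```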

The People Side of AI FinOps

The governance discipline described in this playbook requires people, not just tooling. Two roles are becoming essential in AI-forward enterprises:

Cloud Economists with AI Fluency — bridging finance and engineering, connecting inference cost to margin outcomes, making “here’s what 1 million inference calls cost us per feature” a routine financial communication rather than a periodic forensic exercise.

Product Finance Partners Embedded in AI Teams — ensuring cost-to-value reviews happen at product launch gates, not in quarterly financial reviews. The enterprises that avoid Supernova-pattern margin compression are those where financial accountability is embedded in engineering decision-making from the first production deployment.

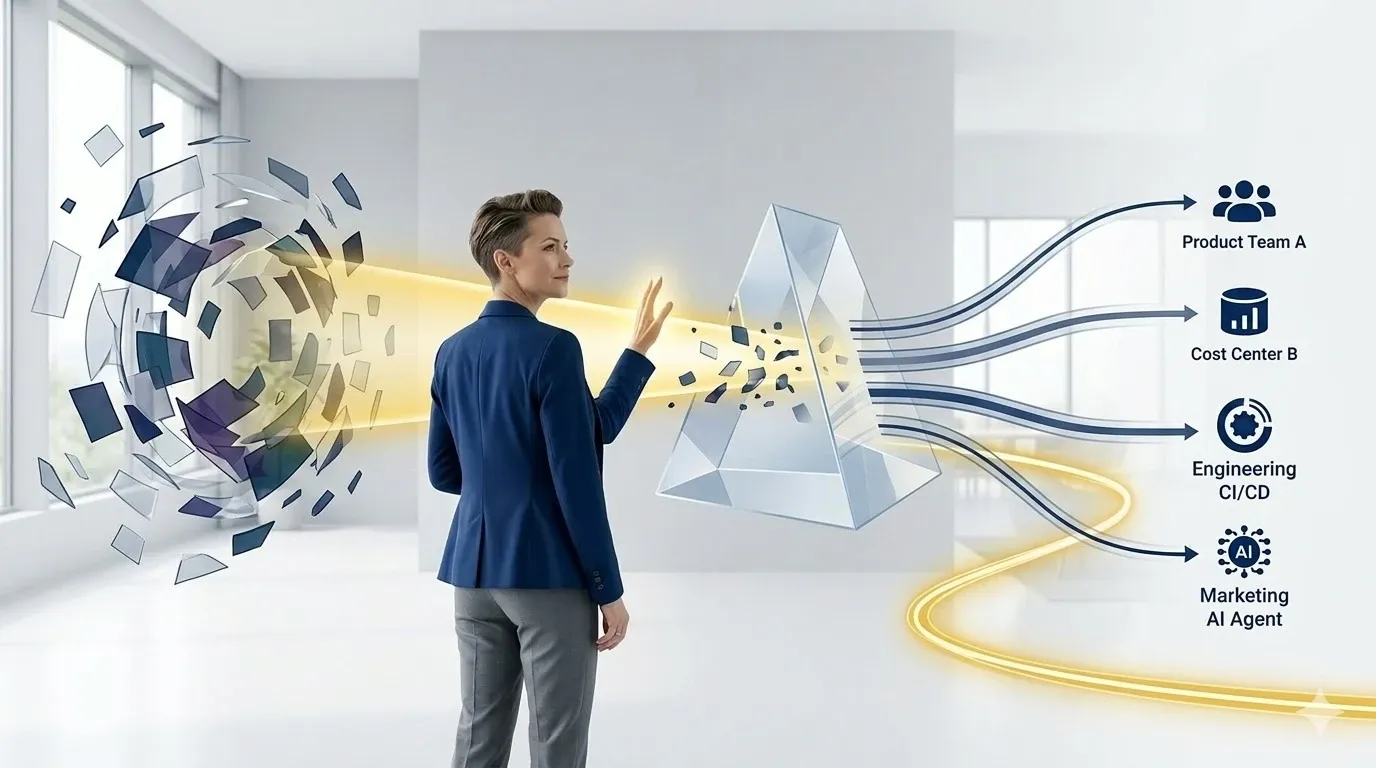

DigiUsher: The Financial Visibility Layer That Governs Shooting Star Economics

Velocity without visibility is risk. The enterprises that translate Bessemer’s framework into Shooting Star economics — sustainable growth with governed unit costs — are not hoping for lower AI prices. They are building the financial infrastructure that makes AI investment accountable to business outcomes.

DigiUsher’s FinOps Operating System provides the unified AI financial governance layer:

Real-time AI unit economics — cost per inference, cost per resolved interaction, cost per AI feature tracked continuously across all providers (Azure OpenAI, AWS Bedrock, Vertex AI, direct APIs) — the metric set that connects engineering decisions to margin outcomes.

Inference governance — token budget enforcement per team and per product, model routing cost signal integration, semantic caching opportunity identification, and agentic workflow attribution that surfaces per-chain token economics before 24/7 autonomous systems generate unbounded spend.

Pricing normalisation scenario modelling — financial models that surface the economic impact of API cost increases on existing workloads, enabling procurement, architecture, and strategic planning decisions before the repricing event occurs.

LCOAI-aligned cost intelligence — the full lifecycle AI cost analysis that makes the API-versus-self-hosted decision financially defensible rather than architecturally intuitive.

Board-ready AI investment reporting — cost per AI product, cost per business outcome, gross margin impact of AI features — connecting infrastructure investment to the financial accountability boards require.

Available as SaaS or BYOC for regulated industries. SOC 2® Type II certified and GDPR compliant. Delivered globally through Infosys, Wipro, and Hexaware.

The AI opportunity is real. The costs are real. The governance gap between the two is where Shooting Stars separate from Supernovas. Build the financial visibility layer now — before scale makes the cost governance problem larger than the infrastructure designed to address it.

Frequently Asked Questions

What did Bessemer’s State of AI 2025 reveal about the two types of high-growth AI companies?

Bessemer studied 20 high-growth AI startups and identified two archetypes. Supernovas reach ~$40M ARR in year one and ~$125M in year two with $1.13M revenue per employee — but operate at ~25% gross margins (sometimes negative). Shooting Stars follow the Q2T3 benchmark (quadruple, quadruple, triple, triple, triple), reaching ~$100M ARR by year four while maintaining ~60% gross margins. Bessemer’s thesis: the AI era will be defined by hundreds of Shooting Stars, not a handful of Supernovas. The 35-percentage-point margin advantage is the measurable value of governance discipline applied from inception.

What is Q2T3 and why does it matter for enterprise financial planning?

Q2T3 (quadruple, quadruple, triple, triple, triple) is Bessemer’s proposed AI-era successor to T2D3 (triple, triple, double, double, double), the classic SaaS growth benchmark. For enterprises, it signals that AI products growing at Q2T3 velocity can scale inference costs as fast as revenue, meaning unit economics gaps that seem manageable at launch become structural P&L problems at $100M ARR. Q2T3 is the argument for establishing AI cost governance before scale makes the governance problem larger than the infrastructure.

What is the Jevons Paradox for AI?

The Jevons Paradox describes how efficiency improvements in resource consumption drive higher total consumption, because lower unit cost makes broader adoption viable. Applied to AI: per-token costs have fallen 93% since 2022, but total enterprise AI spend grew from $11.5 billion in 2024 to $37 billion in 2025 because lower costs enabled more agentic deployments consuming 15× more tokens per task. Lower AI prices are not solving the AI budget problem. They are accelerating it.

Why are AI-first companies operating at 50–65% gross margins?

Because inference spend appears directly in COGS, scaling with every customer interaction rather than approaching zero at scale as traditional SaaS costs do. Every model call triggers metered compute. AI-centric businesses typically operate at 50–65% gross margins versus 80–90% for traditional SaaS. The margin gap is governable through model routing, semantic caching, and right-sized inference infrastructure.

What should enterprise leaders do immediately about AI cost governance?

Five immediate actions: instrument all AI workloads with separate cost attribution from day one; define cost per inference and cost per resolved interaction per feature; implement model routing to match task complexity to compute tier; deploy semantic caching for semantically similar queries; and set automated token budget caps with throttle and suspend actions for agentic workflows.

What questions should boards be asking about AI cost governance?

Seven critical questions: Do we track cost per useful inference per AI feature? Can we reproduce model behaviour on private data? Are training, inference, storage, and retrieval costs tagged separately? What is our time-to-value threshold per AI launch? Are we capturing full lifecycle AI costs? What is our exposure if API prices increase 30–50%? Do we have automated kill-switch infrastructure for runaway agentic workflows?

How does DigiUsher’s FinOps OS support AI unit economics governance?

Through four integrated capabilities: real-time AI unit economics tracking (cost per inference, per interaction, per feature) across all AI providers in a unified FOCUS 1.x model; inference governance with token budget enforcement and agentic workflow attribution; pricing normalisation scenario modelling; and board-ready AI investment reporting connecting infrastructure spend to gross margin and business outcome metrics.

References

- Bessemer Venture Partners — State of AI 2025: Q2T3, Supernovas, Shooting Stars

- FinOps Foundation — State of FinOps 2026: AI as Fastest-Growing Spend Category

- FinOps Foundation — Cost Estimation of AI Workloads

- FinOps Foundation — Effect of Optimization on AI Forecasting

- Oplexa — AI Inference Cost Crisis 2026: Enterprise Budget Projections

- AnalyticsWeek — Inference Economics: Solving the 2026 Enterprise AI Cost Crisis

- MyWrittenWord — The Real Cost of Running AI in 2026

- Spheron — AI Inference Cost Economics 2026: GPU FinOps Playbook

- AnalyticsWeek — AI FinOps and Sovereign Infrastructure: Costs in 2026

- Drivetrain.ai — Unit Economics for AI SaaS Companies: CFO Guide

- Getmonetizely — The Economics of AI-First B2B SaaS in 2026

- Menlo Ventures — 2025 State of Generative AI in the Enterprise: $37B spend

- Stanford HAI — 2025 AI Index: 280-fold inference cost reduction since 2022

- IDC — Balancing AI Innovation and Cost: The New FinOps Mandate

- FinOps Foundation — FOCUS Specification

Translate Bessemer’s Framework Into Financial Discipline

The stars Bessemer mapped in 2025 are still forming. The AI opportunity is real, large, and accelerating. But velocity without visibility is risk — and the 2026 data confirms that most enterprise AI programmes are accumulating the governance debt that Bessemer’s Supernova archetype embodies.

DigiUsher’s FinOps OS gives your CFO, CIO, and AI leadership teams the financial visibility layer that separates Shooting Star economics from Supernova fragility: real-time AI unit economics, inference governance, pricing normalisation modelling, and board-ready ROI reporting.
