The Death of Chargeback: Why Cost Allocation Is Failing in the Kubernetes and AI Era

Chargeback was built for a world of static servers, predictable workloads, and clear ownership boundaries. That world is gone. In 2026, shared Kubernetes clusters, ephemeral containers, and AI token costs have made traditional allocation models inaccurate, delayed, and politically toxic. This briefing explains the five failure modes destroying chargeback in modern infrastructure — and the five-capability model that replaces it.

Author: DigiUsher


Executive Summary

For over a decade, chargeback was the default answer to the cloud cost accountability question. Allocate costs to teams, enforce financial consequences, drive behaviour change. In theory, elegant. In practice, increasingly broken.

The 2026 cloud estate has made chargeback’s structural weaknesses impossible to ignore:

- 30–50% of cloud resources are untagged or inconsistently tagged — the attribution foundation chargeback depends on is missing in most enterprises

- Infrastructure changes 50+ times per day — but chargeback delivers financial signals once per month

- Shared Kubernetes infrastructure cannot be cleanly allocated — the shared clusters, networking, and observability stacks that platform teams operate have no natural cost owners

- AI token costs have no mapping to chargeback’s resource-level allocation logic — 98% of FinOps teams now manage AI spend with models designed for hourly compute rates

The result: chargeback models are becoming increasingly inaccurate, generating organisational disputes rather than optimisation, and arriving too late to influence the engineering behaviour that created the cost in the first place.

At KubeCon Europe 2026, platform teams articulated what practitioners have quietly known for two years:

“We don’t need better allocation. We need better control.”

This briefing dissects the five failure modes that are destroying chargeback in modern infrastructure — and defines the five-capability financial control system model that replaces it. The goal is not to abandon accountability. It is to build accountability that operates at the speed and granularity of the infrastructure it governs.

What Chargeback Was Built For — and Why That World No Longer Exists

Chargeback was designed with a set of architectural assumptions that were entirely reasonable in 2012:

The Chargeback Design Assumptions (2012)

──────────────────────────────────────────────────────────────

Infrastructure: Physical servers, fixed capacity, clear ownership

Workloads: Static applications, predictable usage, stable costs

Cost model: Monthly billing, discrete line items, stable rates

Attribution: Server = application = team. One-to-one mapping.

Cadence: Monthly allocation aligned with finance cycles

──────────────────────────────────────────────────────────────

In that world: Allocating cost = controlling cost

──────────────────────────────────────────────────────────────

Every one of these assumptions has been invalidated by the cloud-native shift:

The 2026 Reality

──────────────────────────────────────────────────────────────

Infrastructure: Kubernetes clusters, shared node pools, ephemeral containers, multi-cloud estates

Workloads: Ephemeral pods, auto-scaling services, cross-region deployments, agentic AI

Cost model: Token billing, GPU-hours, DBUs, per-request AI, Spot interruptions, serverless cold starts

Attribution: 50 services share a node pool. No clean boundary.

Cadence: 50+ changes/day. Monthly signals: 25 days too late.

──────────────────────────────────────────────────────────────

In this world: Allocating cost ≠ controlling cost

──────────────────────────────────────────────────────────────

Kubernetes abstracts infrastructure from application. Containers start and stop in seconds, shifting across nodes based on cluster scheduling decisions that have no awareness of cost ownership. A single application may span multiple clusters, multiple regions, and multiple cloud providers simultaneously.

The result: the one-to-one mapping between server, application, and team that made chargeback workable has dissolved. What remains is a shared infrastructure model that produces shared costs, and any allocation of a shared cost is an approximation rather than a measurement.

Five Failure Modes Destroying Chargeback in 2026

Failure Mode 1 — Shared Kubernetes Infrastructure Cannot Be Cleanly Allocated

Kubernetes was designed for resource sharing. Chargeback was designed for resource ownership. These two design philosophies produce a fundamental incompatibility at the cluster boundary.

When 50 services share a node pool, standard billing reports show only node-level charges and cannot say which service consumed what. Every allocation method applied to a shared cluster is an approximation, and approximations generate disputes when money changes hands.

The FinOps Foundation identifies three methods for shared cost splitting:

| Method | Mechanism | Trade-off |
|---|---|---|
| Even split | Total divided equally across all consuming teams | Simple but unfair: high-consumption teams pay the same as low-consumption teams |
| Fixed proportional | Percentage based on estimated relative usage | More defensible, but requires manual maintenance as consumption patterns evolve |
| Variable proportional | Based on actual measured consumption metrics (CPU, memory, network) | Most accurate and fairest, but requires substantial operational telemetry infrastructure |

The FinOps Foundation’s own recommendation: apply the KISS principle. Simpler models implemented consistently produce better outcomes than sophisticated models implemented inconsistently. More mature organisations use variable proportional methods — but the data infrastructure required makes this a multi-quarter implementation effort, not a quick configuration change.
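To make the three splitting methods concrete, here is a minimal Python sketch. The team names, the £9,000 node pool cost, and the CPU core-hour figures are hypothetical, and the function names are illustrative rather than part of any FinOps Foundation tooling.

```python
def even_split(total: float, teams: list[str]) -> dict[str, float]:
    """Divide the shared cost equally across all consuming teams."""
    share = total / len(teams)
    return {team: share for team in teams}


def fixed_proportional(total: float, percentages: dict[str, float]) -> dict[str, float]:
    """Split by pre-agreed percentages, which must sum to 100."""
    if abs(sum(percentages.values()) - 100.0) > 1e-9:
        raise ValueError("percentages must sum to 100")
    return {team: total * pct / 100.0 for team, pct in percentages.items()}


def variable_proportional(total: float, usage: dict[str, float]) -> dict[str, float]:
    """Split by measured consumption in the period (e.g. CPU core-hours)."""
    total_usage = sum(usage.values())
    if total_usage <= 0:
        raise ValueError("no measured usage to split on")
    return {team: total * used / total_usage for team, used in usage.items()}


cluster_cost = 9000.0  # hypothetical monthly cost of one shared node pool
print(even_split(cluster_cost, ["checkout", "search", "ml"]))
print(fixed_proportional(cluster_cost, {"checkout": 50, "search": 30, "ml": 20}))
print(variable_proportional(cluster_cost, {"checkout": 120.0, "search": 60.0, "ml": 420.0}))
```

The same pool produces three different bills for the same team, which is exactly why the chosen method needs to be documented and agreed before money changes hands.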

The deeper problem: shared network costs, shared ingress controllers, shared observability stacks, and shared CI/CD pipelines have no natural owner even with perfect consumption telemetry. Every allocation of these costs will be contested by someone.

Failure Mode 2 — Tagging Is Incomplete, Inconsistent, and Retroactively Impossible

Chargeback depends on tagging the way a building depends on its foundation. 30–50% of cloud resources in typical enterprise environments are untagged or inconsistently tagged. The allocation model built on this foundation produces charges that are inaccurate in direct proportion to tagging incompleteness.

In Kubernetes environments, the problem compounds: cloud tags attach to nodes, while pods are scheduled dynamically onto whichever nodes are available, so node-level tags say little about which namespace consumed the capacity. A workload that is rescheduled across nodes or node pools during an autoscaling event may accumulate cost under a different ownership context than the one the chargeback model expects.

The correct technical response is not a better chargeback model — it is enforcing attribution at the infrastructure provisioning layer through policy-as-code. AWS Service Control Policies, Azure Policy, GCP Organisation Policies, and Kubernetes admission webhooks can all block resource creation that does not meet attribution requirements. Resources that do not carry mandatory metadata are not provisionable. Attribution becomes automatic rather than aspirational.
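As an illustration of what such enforcement can look like, the sketch below shows only the decision logic of a Kubernetes validating admission webhook, written in Python. It assumes the standard `admission.k8s.io/v1` AdmissionReview request and response shape; the label keys `team` and `cost-centre` are hypothetical, and serving the function over HTTPS and registering it with a ValidatingWebhookConfiguration are left out.

```python
REQUIRED_LABELS = ("team", "cost-centre")  # hypothetical mandatory attribution keys


def review(admission_review: dict) -> dict:
    """Build an AdmissionReview response that denies objects missing attribution labels."""
    request = admission_review["request"]
    labels = request["object"].get("metadata", {}).get("labels") or {}
    missing = [key for key in REQUIRED_LABELS if not labels.get(key)]

    # The response must echo the request uid so the API server can match it.
    response = {"uid": request["uid"], "allowed": not missing}
    if missing:
        response["status"] = {
            "code": 403,
            "message": "missing attribution labels: " + ", ".join(missing),
        }
    return {
        "apiVersion": "admission.k8s.io/v1",
        "kind": "AdmissionReview",
        "response": response,
    }


example = {"request": {"uid": "abc", "object": {"metadata": {"labels": {"team": "search"}}}}}
print(review(example)["response"])
```

In practice many teams would express the same rule declaratively in OPA or Kyverno rather than hand-writing a webhook; the point is that the check runs at creation time, not in a month-end spreadsheet.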

“Tag policies should be enforced at the infrastructure layer via policy — not applied retroactively by the FinOps team in a spreadsheet.” — FinOps Foundation guidance 2026

Without this enforcement, tagging campaigns produce temporary improvement that decays under delivery pressure. With it, the foundation for accurate attribution — whether for showback, chargeback, or a financial control system — is structurally maintained rather than manually sustained.

Failure Mode 3 — Monthly Allocation Cycles Are 25 Days Too Late

Cloud-native infrastructure generates cost at engineering velocity. Chargeback delivers the financial signal at finance velocity. The gap between these two cadences is where most chargeback value is lost.

A developer deploys code that creates a logging loop. In six hours, 2TB of logs have been ingested at CloudWatch rates. The cost appears in the chargeback report 25 days later. By then, the team has moved through three sprints. Nobody remembers the specific deployment. The pattern has been replicated on subsequent services. And the chargeback charge — when it arrives — creates a dispute about allocation accuracy rather than a conversation about the engineering decision that generated it.

The Temporal Governance Gap

──────────────────────────────────────────────────────────────

Engineering velocity: 50+ infrastructure changes per day

Cost accumulation: Continuous, proportional to usage

Chargeback cadence: Monthly

Signal latency: 25–30 days after cost is incurred

Actionability at month-end: Low (decisions locked in, engineers moved to next sprint)

What effective governance requires:

Cost visible within hours of accumulation

Signal surfaced to the team responsible, while the context is live and behaviour change is possible

──────────────────────────────────────────────────────────────

The DevOps.com engineering team that implemented real-time cost visibility describes the operational model that works in 2026: each engineering team has a monthly cost budget visible in real time. They receive alerts when they hit 80% of budget. Nothing dramatic — just a conversation: “Hey, you went £2,000 over this month. What happened?” The team already knows. They own the explanation. Finance is not interrogating them; they are reporting on their own infrastructure.

This model does not require chargeback. It requires real-time visibility and team-level ownership. The cadence is the governance.
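The budget signal described above needs very little machinery. The following Python sketch assumes a per-team monthly budget and a running spend figure refreshed from cost data; the `BudgetStatus` class, the team name, and the amounts are hypothetical, and only the 80% alert threshold comes from the text.

```python
from dataclasses import dataclass


@dataclass
class BudgetStatus:
    team: str
    spent: float   # spend so far this month
    budget: float  # agreed monthly budget

    @property
    def used_fraction(self) -> float:
        return self.spent / self.budget

    def signal(self, alert_at: float = 0.8) -> str:
        """Return 'over' if the budget is exceeded, 'alert' at or above the threshold, else 'ok'."""
        if self.spent > self.budget:
            return "over"
        if self.used_fraction >= alert_at:
            return "alert"
        return "ok"


status = BudgetStatus(team="payments", spent=8400.0, budget=10000.0)
print(status.team, f"{status.used_fraction:.0%}", status.signal())
```

Run hourly against fresh cost data, a check like this puts the signal in front of the team while the deployment that caused it is still fresh in memory.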

Failure Mode 4 — Chargeback Creates Allocation Disputes, Not Cost Optimisation

When financial consequences are attached to imprecise allocation logic, teams optimise for a smaller allocation rather than for cost efficiency. Three behavioural failure patterns consistently emerge:

Tag gaming: teams apply tags selectively to reduce allocation under chargeback models, or shift workloads to shared namespaces where their cost is diluted across multiple owners.

Dispute escalation: teams contest allocation charges they perceive as unfair, consuming FinOps team bandwidth defending methodology rather than implementing optimisation. The political cost of defending imprecise charges frequently exceeds the efficiency benefit of the accountability model.

Optimisation avoidance: teams engaged in allocation disputes are not engaged in rightsizing, scheduling, or efficiency improvements. The original goal — driving engineering behaviour change — is displaced by governance theatre.

The distinction that separates effective accountability from counterproductive chargeback: accountability requires both visibility and agency. A team held financially accountable for costs they can see but cannot control will dispute the charges. A team that has real-time visibility, understands their cost, and has the operational tools to reduce it will optimise.

“Chargeback only succeeds when teams have stable, trusted allocation data and the operational tools to control their spend. Rushing to chargeback before that foundation is in place destroys trust in the FinOps practice faster than anything else.” — FinOps Foundation guidance

Failure Mode 5 — AI Economics Have No Mapping to Chargeback Allocation Logic

The fastest-growing cost category in enterprise cloud has no clean precedent in the allocation frameworks that FinOps teams were built to operate.

AI cost drivers — token usage, GPU utilisation, inference frequency, embedding generation, vector database queries, output moderation — are real-time, non-linear, and sensitive to prompt design decisions made by engineers with no cost visibility at decision time.

A shared Azure OpenAI endpoint accessed by 30 product teams generates one invoice line item. Allocating this charge requires matching API call metadata to billing data — a join that native billing cannot perform without external attribution infrastructure. A monthly chargeback cycle that distributes aggregate AI charges proportionally to estimated team usage cannot produce the workload-level accuracy that AI cost management requires.
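The join described here can be sketched in a few lines of Python, assuming each API call is logged with a team identifier and token counts. The log field names (`team`, `prompt_tokens`, `completion_tokens`) are hypothetical, and the sketch weights prompt and completion tokens equally, which is a simplification because providers usually price them differently.

```python
from collections import defaultdict


def allocate_invoice(invoice_total: float, requests: list[dict]) -> dict[str, float]:
    """Split one invoice line across teams in proportion to logged token usage."""
    tokens_by_team: dict[str, int] = defaultdict(int)
    for call in requests:
        # Calls without a team identifier land in an explicit bucket instead of vanishing.
        team = call.get("team") or "unattributed"
        tokens_by_team[team] += call["prompt_tokens"] + call["completion_tokens"]

    total_tokens = sum(tokens_by_team.values())
    if total_tokens == 0:
        return {}
    return {team: invoice_total * used / total_tokens for team, used in tokens_by_team.items()}


calls = [
    {"team": "search", "prompt_tokens": 600, "completion_tokens": 400},
    {"team": "ads", "prompt_tokens": 2500, "completion_tokens": 500},
    {"team": None, "prompt_tokens": 500, "completion_tokens": 500},
]
print(allocate_invoice(1000.0, calls))
```

The size of the `unattributed` share is itself a useful metric: it shows how much of the invoice cannot yet be traced to an owner.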

The agentic AI layer amplifies the problem. Agentic workflows chain 10–20 LLM calls per task, consuming 15× more tokens than standard chatbot interactions. A single agent stuck in a recursive reasoning loop can run up thousands of pounds in charges per hour. Monthly chargeback cannot govern this: by the time the charge appears in the allocation report, the economic damage has already occurred.

AI cost governance requires: per-API-key token attribution at the request level, budget caps enforced before monthly thresholds are breached, GPU idle detection with automated scale-down, and agentic workflow kill-switches that terminate runaway processes before the next billing cycle. These are prevention mechanisms, not allocation mechanisms.
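A kill-switch of this kind is, at its core, a hard spending cap checked on every model call. The Python sketch below is one possible shape under stated assumptions: `TaskBudget`, `BudgetExceeded`, and `run_agent` are hypothetical names, the flat price per 1,000 tokens is illustrative, and the agent's steps are simulated as a list of token counts rather than real LLM calls.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a task has spent more than its hard cap."""


class TaskBudget:
    def __init__(self, cap: float, price_per_1k_tokens: float):
        self.cap = cap
        self.price_per_1k_tokens = price_per_1k_tokens
        self.spent = 0.0

    def charge(self, tokens: int) -> None:
        """Record one model call and trip once cumulative spend passes the cap."""
        self.spent += tokens / 1000 * self.price_per_1k_tokens
        if self.spent > self.cap:
            raise BudgetExceeded(f"spent {self.spent:.2f}, cap {self.cap:.2f}")


def run_agent(step_token_counts: list[int], budget: TaskBudget) -> int:
    """Run steps until they finish or the budget trips; return the steps completed."""
    completed = 0
    try:
        for tokens in step_token_counts:
            budget.charge(tokens)
            completed += 1
    except BudgetExceeded:
        pass  # a real system would also cancel in-flight calls and alert the owner
    return completed


budget = TaskBudget(cap=2.0, price_per_1k_tokens=0.5)
print(run_agent([1000] * 10, budget), "steps completed before the cap tripped")
```

The cap is enforced per task and per call, so a runaway loop is stopped within one step of crossing its limit rather than at the end of a billing cycle.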

The Showback–Chargeback Spectrum: Understanding the Maturity Arc

Showback and chargeback are not opposites — they are points on a maturity spectrum. Most organisations benefit from spending significant time in showback mode before introducing financial consequences.

| Stage | What Happens | When to Use It |
|---|---|---|
| Exclusion (showback only) | Shared costs shown as a line item for visibility, not allocated | Early FinOps; tagging below 70%; teams not ready for financial accountability |
| Proportional showback | Costs reported to teams based on consumption share, with no financial transfer | Tagging above 70%; building toward chargeback readiness |
| Usage-based chargeback | Each team pays exactly what they consumed, with real financial consequences | 95%+ tagging; teams have tools to control spend; methodology validated for 2+ months |
| Financial control system | Real-time attribution + embedded guardrails + AI governance + unit economics | Kubernetes estates; AI workload growth; platform engineering at scale |
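The readiness criteria in the first three rows can be read as a simple decision rule. The Python sketch below encodes them as written; the function name and parameters are hypothetical, exactly 70% coverage is treated as proportional showback (the table leaves that boundary open), and the financial control system row is omitted because its criteria are not numeric thresholds.

```python
def recommended_stage(tagging_coverage: float, months_validated: int,
                      teams_can_control_spend: bool) -> str:
    """Map readiness inputs to a stage; tagging_coverage is a fraction from 0 to 1."""
    if tagging_coverage >= 0.95 and months_validated >= 2 and teams_can_control_spend:
        return "usage-based chargeback"
    if tagging_coverage >= 0.70:
        return "proportional showback"
    return "exclusion (showback only)"


print(recommended_stage(0.62, 0, False))
print(recommended_stage(0.85, 1, True))
print(recommended_stage(0.97, 3, True))
```

Note that high tagging coverage alone does not unlock chargeback in this rule: the validation period and the teams' ability to act on their costs are both required.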

“Most organisations spend 6–18 months in showback mode before transitioning to chargeback. This period is valuable. It surfaces tagging gaps, misattributed costs, and organisational misalignments that would create chaos if chargeback went live too early.” — Holori Cloud Showback vs Chargeback Guide 2026

The critical insight from practitioner experience: a SaaS team that implemented showback for Kubernetes costs by feature, then moved to chargeback after six months, reduced waste by 18% within one quarter and saw forecasting accuracy improve dramatically. The showback period was not wasted time — it was the trust-building phase that made chargeback effective rather than politically toxic.

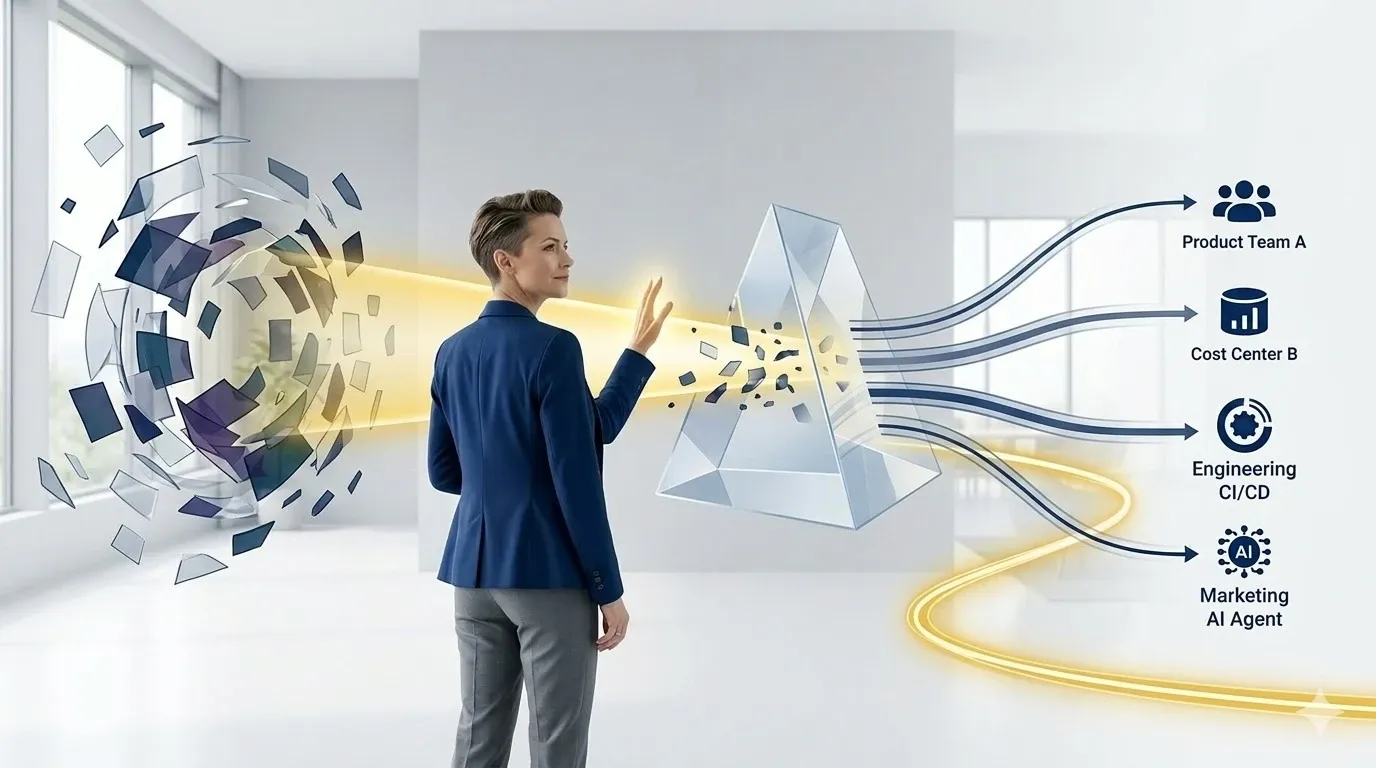

What Replaces Chargeback: The Financial Control System

The evolution beyond chargeback is not a different allocation model. It is a different governance architecture — one that answers a different question.

Chargeback asks: “Who should pay for this cost?” A financial control system asks: “How do we prevent unnecessary cost at the point it is created?”

Five capabilities define the modern FinOps financial control system:

Capability 1 — Real-Time Workload-Level Attribution

Cost visible at the service, namespace, and team level as it accumulates — not assembled from monthly billing exports after costs are locked in.

Implementation: FOCUS 1.x normalised cost attribution at the pod, namespace, and deployment level, with mandatory attribution labels enforced through IDP admission controls at provisioning time. Each engineering team’s cost dashboard is updated hourly, giving them the real-time ownership that makes accountability possible without financial transfer.

The behaviour change this creates: engineers who see their team’s cost trajectory updating in real time make different decisions than engineers who receive a monthly chargeback report. Visibility drives behaviour. The real-time signal is the accountability mechanism.
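A rollup of this kind might look like the Python sketch below. It assumes cost rows shaped loosely like FOCUS records, with an `EffectiveCost` value and a `Tags` mapping; treat that shape, the `team` label key, and the sample figures as assumptions rather than a statement of the specification. Rows without an owner go into an explicit `unattributed` bucket so the attribution coverage can be reported alongside the totals.

```python
from collections import defaultdict


def rollup_by_team(rows: list[dict], label: str = "team") -> tuple[dict[str, float], float]:
    """Sum cost per team and return (totals, attributed share of total cost)."""
    totals: dict[str, float] = defaultdict(float)
    for row in rows:
        owner = (row.get("Tags") or {}).get(label, "unattributed")
        totals[owner] += row["EffectiveCost"]

    grand_total = sum(totals.values())
    attributed = grand_total - totals.get("unattributed", 0.0)
    coverage = attributed / grand_total if grand_total else 0.0
    return dict(totals), coverage


rows = [
    {"EffectiveCost": 6.0, "Tags": {"team": "checkout"}},
    {"EffectiveCost": 2.0, "Tags": {"team": "search"}},
    {"EffectiveCost": 2.0, "Tags": None},  # untagged resource
]
totals, coverage = rollup_by_team(rows)
print(totals, f"coverage={coverage:.0%}")
```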

Capability 2 — Cost Visibility at Decision Time

Pre-deployment cost estimates surfaced in engineering workflows before architecture decisions are committed.

Implementation: Infracost integration in CI/CD pipelines surfaces projected monthly cost impact in Terraform plan output. IDP service templates show estimated monthly cost for the requested configuration before provisioning. Cost gates require approval for deployments that exceed budget thresholds.

The behaviour change this creates: a PR that increases monthly infrastructure cost by £340 gets engineering scrutiny before it reaches production — the same review that a PR increasing error rate would receive. Cost becomes a first-class engineering signal, not a retrospective financial report.
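A cost gate of this kind reduces to one comparison in the pipeline. The Python sketch below assumes the cost estimate arrives as a dictionary with a `diffTotalMonthlyCost` field holding the projected monthly change as a string; that key name reflects my understanding of Infracost's JSON output and should be verified against the tool's documentation. The function name and the £250 threshold are hypothetical.

```python
def cost_gate(estimate: dict, approval_threshold: float) -> tuple[bool, str]:
    """Return (passes, message) for a pull request's projected monthly cost change."""
    delta = float(estimate["diffTotalMonthlyCost"])  # assumed field name, see lead-in
    if delta > approval_threshold:
        return False, (
            f"monthly cost change {delta:+.2f} exceeds threshold "
            f"{approval_threshold:.2f}: approval required"
        )
    return True, f"monthly cost change {delta:+.2f} within threshold"


passes, message = cost_gate({"diffTotalMonthlyCost": "340.00"}, approval_threshold=250.0)
print(passes, message)
```

A CI job would typically fail the check, or request an approver, when `passes` is false, so the cost discussion happens in the pull request itself.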

Capability 3 — Embedded Financial Guardrails

Policy-as-code enforcement that makes non-compliant, over-budget configurations technically impossible to deploy.

Implementation: OPA or Kyverno admission webhooks reject resources that exceed defined limits or lack attribution metadata. Budget threshold triggers in CI/CD require approval rather than silent deployment. Non-production TTL controllers enforce scheduled shutdown. Resource limit ceilings prevent the overprovisioning that makes chargeback charges appear unexpectedly large.

The behaviour change this creates: the right thing becomes the easy thing. Attribution is automatic because untagged resources cannot be provisioned. Budget limits are respected because exceeding them requires explicit approval. Non-production waste is eliminated because the platform enforces lifecycle management.
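The non-production TTL control is the simplest of these guardrails to sketch. The Python example below shows only the selection step, under the assumption that each environment records a tier, a creation time, and a TTL in hours; the field names and sample environments are hypothetical, and the actual teardown call is left to the platform.

```python
from datetime import datetime, timedelta, timezone


def expired_environments(environments: list[dict], now: datetime) -> list[str]:
    """Return names of non-production environments whose TTL has elapsed."""
    expired = []
    for env in environments:
        if env["tier"] == "production":
            continue  # production is never reaped by this controller
        if now - env["created_at"] >= timedelta(hours=env["ttl_hours"]):
            expired.append(env["name"])
    return expired


now = datetime(2026, 3, 10, 12, 0, tzinfo=timezone.utc)
environments = [
    {"name": "pr-101", "tier": "preview", "created_at": now - timedelta(hours=80), "ttl_hours": 72},
    {"name": "staging", "tier": "staging", "created_at": now - timedelta(hours=10), "ttl_hours": 72},
    {"name": "prod", "tier": "production", "created_at": now - timedelta(days=400), "ttl_hours": 72},
]
print(expired_environments(environments, now))
```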

Capability 4 — AI and GPU Cost Governance

Purpose-built governance for AI’s non-linear, usage-driven, token-based billing — the cost category that chargeback allocation logic cannot address.

Implementation: per-team token budget caps with automated throttle and suspend actions. GPU idle detection with automated scale-down. Agentic workflow kill-switches for runaway inference processes. Per-chain attribution for multi-step agentic workflows accessing shared foundation model endpoints.

The behaviour change this creates: AI teams receive the cost signal at API call time, not in the monthly billing report. A team approaching their token budget receives an automated alert at 80% — not a chargeback charge at 120% of their intended allocation three weeks after the fact.
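Per-chain attribution means grouping every model call by the workflow run that triggered it. The Python sketch below assumes each call log carries a `chain_id`, a `team`, and a token count; those field names, the flat price per 1,000 tokens, and the sample data are hypothetical.

```python
from collections import defaultdict


def cost_per_chain(calls: list[dict], price_per_1k_tokens: float) -> dict[str, dict]:
    """Aggregate call count, tokens and cost for each agentic chain."""
    chains: dict[str, dict] = defaultdict(lambda: {"team": None, "calls": 0, "tokens": 0})
    for call in calls:
        chain = chains[call["chain_id"]]
        chain["team"] = call["team"]
        chain["calls"] += 1
        chain["tokens"] += call["tokens"]
    for chain in chains.values():
        chain["cost"] = chain["tokens"] / 1000 * price_per_1k_tokens
    return dict(chains)


calls = [
    {"chain_id": "c1", "team": "search", "tokens": 1000},
    {"chain_id": "c1", "team": "search", "tokens": 2000},
    {"chain_id": "c1", "team": "search", "tokens": 1000},
    {"chain_id": "c2", "team": "ads", "tokens": 500},
]
for chain_id, summary in cost_per_chain(calls, price_per_1k_tokens=0.5).items():
    print(chain_id, summary)
```

Grouping by chain rather than by individual call is what exposes the expensive workflows: a chain with many calls and a high token total stands out even when each call looks cheap.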

Capability 5 — Unit Economics

Cost per feature, cost per user, cost per transaction — connecting infrastructure investment to the business outcomes that justify it.

Implementation: define cost-per-unit metrics that matter to the business. Track them on a weekly cadence alongside product performance metrics. Surface them in the same dashboards as latency, error rate, and deployment frequency — not in separate FinOps reports.

The behaviour change this creates: engineering teams optimise for cost per outcome rather than for cost in isolation. A cache optimisation that saves £250,000/month shows up as a matching drop in the cost-per-feature metric, giving the engineering team the same recognition for a cost improvement as for a reliability improvement.
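The arithmetic behind a unit metric is deliberately simple, as this Python sketch shows. The weekly cost and transaction figures are hypothetical, and the function names are illustrative.

```python
def cost_per_unit(total_cost: float, units: int) -> float:
    """Infrastructure cost divided by business units delivered in the same period."""
    if units <= 0:
        raise ValueError("units must be positive")
    return total_cost / units


def relative_change(previous: float, current: float) -> float:
    """Relative change in a unit metric; negative means cheaper per unit."""
    return (current - previous) / previous


last_week = cost_per_unit(total_cost=12000.0, units=400_000)  # cost per transaction
this_week = cost_per_unit(total_cost=12600.0, units=450_000)
print(f"{last_week:.4f} -> {this_week:.4f} ({relative_change(last_week, this_week):+.1%})")
```

In this example total spend rose while cost per transaction fell, which is the distinction a raw cost report hides and a unit metric makes visible.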

DigiUsher: From Allocation to Control

DigiUsher’s FinOps Operating System provides the financial control system architecture that replaces chargeback’s allocation-after-the-fact model with governance that operates at the speed and granularity of modern infrastructure.

Real-time workload-level attribution — FOCUS 1.x normalised cost from AWS, Azure, GCP, Kubernetes, Databricks, and AI platforms attributed at the namespace, service, and team level continuously. Cost dashboards that engineering teams trust because they reflect actual consumption, not estimated allocation.

Pre-deployment cost visibility — projected monthly cost estimates in IDP self-service workflows and CI/CD cost gates before resources are provisioned. The #1 desired FinOps tooling capability in the State of FinOps 2026 survey — delivered as a platform capability rather than a separate FinOps process.

Automated financial guardrails — tagging enforcement through admission controls, budget threshold triggers, non-production environment scheduling, and resource limit ceilings. Governance that prevents cost rather than allocating it.

AI and GPU cost governance — token budget caps per team with automated throttle and suspend, agentic workflow kill-switches, GPU idle detection, and per-chain inference attribution. The AI cost governance layer that chargeback allocation cannot replace.

Unit economics reporting — cost per feature, cost per customer interaction, cost per AI initiative in board-ready format. The business-outcome connection that transforms FinOps from a cost reporting function to a technology investment governance capability.

Available as SaaS or BYOC for regulated industries. SOC 2® Type II and GDPR certified. Delivered globally through Infosys, Wipro, and Hexaware.

Chargeback was built for an era that is gone. The Kubernetes and AI era requires governance that operates before cost accumulates — not allocation that arrives after it is locked in. The organisations that replace allocation with control will govern faster, dispute less, and optimise more.

Frequently Asked Questions

Why is chargeback failing in Kubernetes and AI environments in 2026?

Five structural failure modes: shared infrastructure incompatibility (50 services sharing a node pool cannot be cleanly allocated); tagging incompleteness (30–50% of cloud resources are untagged or inconsistently tagged); temporal mismatch (monthly allocation cycles are 25 days too late for 50+ daily infrastructure changes); behavioural distortion (imprecise charges generate disputes rather than optimisation); and AI billing incompatibility (token-based AI costs have no mapping to chargeback’s resource-level allocation logic).

What is the difference between showback and chargeback in FinOps?

Showback reports costs to teams without financial transfer — purely informational. Chargeback involves actual financial allocation: costs move into departmental budgets, real money changes hands. Most organisations spend 6–18 months in showback before attempting chargeback, using the period to validate attribution accuracy, surface tagging gaps, and build the trust that makes chargeback effective rather than politically toxic.

How should Kubernetes shared costs be allocated?

Three methods exist: even split (simple but perceived as unfair), fixed proportional (more defensible but requires manual maintenance), and variable proportional (most accurate, based on actual consumption metrics). The FinOps Foundation recommends starting simple. Pre-requisites for any method: namespace attribution labels enforced through admission controls at provisioning time, and consistent methodology documented and understood by consuming teams before financial consequences are introduced.

What replaces chargeback as the modern FinOps model?

The financial control system: real-time workload-level attribution, cost visibility at decision time, embedded financial guardrails, AI and GPU cost governance, and unit economics. The fundamental shift: chargeback asks “who should pay for this cost?” — a financial control system asks “how do we prevent unnecessary cost at the point it is created?” Governance that prevents cost rather than allocating it after the fact.

When should an organisation implement chargeback vs. showback?

Chargeback requires: 90–95%+ tagging coverage, documented and validated allocation methodology, attribution accuracy validated against actual bills for 2+ months, and engineering teams with operational tools to control their spend. Showback first — always. Rushing to chargeback before attribution accuracy is established destroys FinOps programme credibility faster than any other implementation error.

How does AI change the cost allocation challenge?

AI introduces token-based, usage-driven, non-linear billing that chargeback allocation logic cannot address. Shared AI endpoints generate aggregate charges that require request-level attribution to split fairly. Agentic workflows chain 10–20 LLM calls per task, generating costs that compound at machine speed. AI cost governance requires real-time per-team token attribution enforced at API call time — not monthly allocation of aggregate AI charges.

How does DigiUsher replace chargeback with a financial control system?

Through five integrated capabilities: real-time FOCUS 1.x workload-level attribution; pre-deployment cost visibility in IDP workflows and CI/CD pipelines; automated guardrails through admission controls, budget caps, and environment scheduling; AI and GPU governance with token budget enforcement and agentic kill-switches; and unit economics connecting infrastructure investment to business outcomes in board-ready reporting.

References

- FinOps Foundation — Managing Shared Cloud Costs: Identifying and Splitting

- FinOps Foundation — State of FinOps 2026

- Holori — IT Showback and Chargeback: Essential Tools in the FinOps Cloud Workflow (March 2026)

- Medium / Nicholas Thoni — How to Actually Track Kubernetes Costs in 2026 (February 2026)

- Apptio — Chargeback for Kubernetes: The Four Pillars

- OneUptime — FinOps Cost Allocation and Chargeback per Kubernetes Namespace (February 2026)

- OneUptime — Namespace Cost Allocation and Showback Reporting (February 2026)

- DevOps.com — FinOps Meets DevOps: Engineering Cost Ownership in 2026 (January 2026)

- codelynks — FinOps in 2026: Best Ways to Cut Cloud Waste by 30–40%

- byteiota — FinOps 2026 Implementation Guide: Cut Cloud Costs 30–50%

- Finout — FinOps Key Principles, Best Practices & Implementation Guide

- devopstales.com — Kubernetes Cost Optimisation Guide 2026

- turbogeek.co.uk — FinOps for DevOps Engineers: Making Cloud Cost Part of Your Pipeline

- FinOps Foundation — FOCUS Specification

Replace Allocation with Control

Chargeback was the right answer for 2012. The Kubernetes and AI era requires something fundamentally different — governance that operates at engineering velocity, prevents cost before it accumulates, and connects infrastructure investment to business outcomes rather than distributing it across budget lines.

DigiUsher’s FinOps OS provides the financial control system that replaces chargeback’s allocation model with real-time attribution, automated guardrails, AI cost governance, and unit economics — all in a single FOCUS-normalised platform.
