Unlocking Financial Efficiency with FinOps: The Savings Playbook

Cloud spending hit $723 billion globally in 2025. Enterprises without a structured FinOps programme waste 32–40% of every cloud dollar; those with mature programmes bring waste below 15%. The difference shows up in the Effective Savings Rate (ESR), the single metric that tells you whether your FinOps strategy is generating real financial efficiency or just producing optimisation reports. This definitive 2026 playbook covers the seven savings levers, the ESR benchmarks that define world-class performance, and how DigiUsher's FinOps OS operationalises them into continuous, measurable business value.

Author: DigiUsher | Read time: 19 min

Executive Summary

Cloud spending hit $723 billion globally in 2025. Forrester projects it will cross $1 trillion in 2026.

Of that investment, enterprises without structured FinOps programmes waste 32–40% of every cloud dollar on idle resources, overprovisioned instances, unused commitments, and orphaned storage that generate cost with no corresponding business value. Mature FinOps programmes reduce that waste ratio to below 15%.

The financial consequence of the gap: an enterprise with $10 million in annual cloud spend is wasting between $3.2 million and $4 million annually without FinOps governance. A mature programme recovers $1.2–2.5 million of that waste, delivering 10–20x ROI on the programme investment.

But 2026 has changed what “financial efficiency” means for FinOps teams. The FinOps Foundation’s State of FinOps 2026 is explicit: mature practitioners report diminishing returns on traditional optimisation approaches.

“We have hit the ‘big rocks’ of waste and now face a high volume of smaller opportunities that require more effort to capture.” — FinOps Foundation State of FinOps 2026 practitioner

The implication: the era of large, easy cloud savings — untagged EC2 instances, idle dev environments, obvious over-provisioning — is maturing for organisations that have been doing FinOps for several years. The discipline is shifting from “how do we spend less?” to “how do we maximise return per cloud and AI dollar?”

Three things define financial efficiency in 2026:

- Measuring ESR — the single metric that tells you whether your FinOps strategy is generating real savings or just producing optimisation reports

- Executing the seven savings levers — the structured playbook from quick wins to strategic commitment management

- Governing AI costs — the fastest-growing and least-governed cost category, requiring savings instruments that traditional FinOps frameworks were not built to provide

This playbook covers all three.

The ESR: The Single Metric That Defines FinOps Financial Performance

Most FinOps teams track multiple KPIs — commitment coverage, utilisation rates, idle resource counts, blended rates, tagging compliance. These metrics each measure one dimension of cloud cost performance. None of them tells you whether your FinOps programme is generating real financial efficiency.

Effective Savings Rate (ESR) does.

What ESR Measures

ESR Formula (FinOps Foundation FOCUS-aligned)
──────────────────────────────────────────────────────────────
ESR = (On-Demand Equivalent Cost − Effective Cost) ÷ On-Demand Equivalent Cost

Where:
On-Demand Equivalent Cost = what you would have paid for the same usage at full list rates (no discounts, no commitments)
Effective Cost = what you actually paid (after all discounts applied)
──────────────────────────────────────────────────────────────
ESR = 20% means: you paid 20% less than you would have paid at on-demand rates for the same usage
──────────────────────────────────────────────────────────────
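The formula translates directly into code. A minimal Python sketch, assuming both costs are already expressed on the same cost basis (for example, amortised) for the same usage and period; the function name is illustrative, not part of any FinOps Foundation tooling:

```python
def effective_savings_rate(on_demand_equivalent: float, effective_cost: float) -> float:
    """ESR as a fraction: (on-demand equivalent cost - effective cost) / on-demand equivalent cost."""
    if on_demand_equivalent <= 0:
        raise ValueError("on-demand equivalent cost must be positive")
    return (on_demand_equivalent - effective_cost) / on_demand_equivalent


# Usage priced at $100,000 at list rates; $80,000 actually paid after discounts.
print(f"{effective_savings_rate(100_000, 80_000):.0%}")  # → 20%
```

A negative result means you paid more than list price for the same usage, which is the over-commitment case discussed below.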

ESR captures three dimensions simultaneously:

- Coverage — what percentage of your usage is under commitment discount

- Utilisation — what percentage of your purchased commitments is actively consumed

- Discount rate — how large is the saving per committed unit

Traditional metrics capture only one of these at a time. ESR combines all three into a single outcome-based performance score — the “FinOps performance score” that can justify investment, benchmark against peers, and track improvement over time.
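To see how the three dimensions combine, consider a simplified model (an illustrative assumption, not the FinOps Foundation's definition): commitments are sized in on-demand-equivalent dollars, `coverage` is the share of usage they cover, `utilisation` is the share of purchased commitment actually consumed, and `discount` is the rate off list price. Working through effective cost under those assumptions gives ESR = coverage × (1 − (1 − discount) ÷ utilisation). At full utilisation this reduces to coverage × discount, and it turns negative once utilisation drops below 1 − discount.

```python
def esr_from_components(coverage: float, utilisation: float, discount: float) -> float:
    """Simplified model: ESR = coverage * (1 - (1 - discount) / utilisation).

    coverage    -- share of usage covered by commitments (0..1)
    utilisation -- share of purchased commitment actually consumed (0..1]
    discount    -- commitment discount versus on-demand (0..1)
    """
    if not 0 < utilisation <= 1:
        raise ValueError("utilisation must be in (0, 1]")
    return coverage * (1 - (1 - discount) / utilisation)


# Fully used commitments covering 55% of usage at a 30% discount.
print(f"{esr_from_components(0.55, 1.0, 0.30):.1%}")  # → 16.5%
# Over-committed: all usage is covered, but only 60% of the commitment is consumed.
print(f"{esr_from_components(1.0, 0.60, 0.30):.1%}")  # → -16.7%
```

The second case shows why maximising coverage alone can push ESR below zero.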

ESR Benchmarks: Where Does Your Organisation Stand?

AWS Compute ESR (ProsperOps 2025 Rate Optimisation Insights Report)

| Performance Tier | ESR | Coverage | Action Required |
|---|---|---|---|
| Negative ESR | < 0% | > 85% (over-committed) | Unwind commitments; let them expire; adjust to match actual usage |
| Below average | 0–15% | < 55% | Significant upside; automation can accelerate ESR improvement |
| Median | ~15% | ~55% | Matches the 2024 industry median; focus on commitment flexibility |
| Top quartile | 25–30% | 70–80% | World-class for most estate sizes; automation required to sustain |
| Elite | 30–40%+ | > 80% | Requires diversified commitment portfolio and continuous management |

Industry trajectory: median AWS Compute ESR rose from 0% in 2023 to 15% in 2024 as commitment adoption increased (64% of organisations used RIs or Savings Plans in 2024, up from 45% in 2023).

Google Cloud Compute ESR (ProsperOps 2025 GCP Benchmarking Report)

| Usage Segment | ESR | Key Driver |
|---|---|---|
| Low spend (< $1M annual) | 9.4% | Limited access to private rates; minimal CUD usage |
| Mid-tier ($1M–$10M) | ~30% | CUD adoption with improving coverage |
| High spend ($10M+) | 54.3% | Dual CUD + private rates strategy; sustained use discounts compound |

The GCP data makes a critical point: companies with higher usage on Google Cloud compute resources save more and do so consistently — the median ESR was 54.3% for those with annualised usage of $10M or more. Scale and commitment discipline compound.

The ESR Performance Traps

Over-commitment: buying commitments aggressively to maximise coverage improves ESR on paper until infrastructure shifts. When usage declines or instances are replaced, committed capacity goes to waste and ESR can turn negative: you pay more than on-demand rates because you are paying for capacity you cannot use. A typical pattern: a team buys a large volume of RIs or commits to 3-year Savings Plans, watches ESR jump from 12% to 28%, and celebrates; two quarters later its infrastructure shifts, leaving half those instances underutilised or idle. ESR should reflect good decisions, not justify bad ones.

ESR without commitment risk awareness: ESR only tells part of the story. Committed Use Discounts have inherent risk, and quantifying savings without considering this risk is helpful but insufficient. Commitment Lock-In Risk (CLR) is a companion metric that provides a balanced view of savings performance and the corresponding lock-in risk.

Measurement drift: ESR should be calculated on a consistent cost basis — amortised cost is recommended for cross-provider comparability because it aligns discount value to the period consumed. Switching between amortised, unblended, and blended bases between quarters creates apparent ESR movements that reflect accounting changes, not financial efficiency changes.
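A small illustration of that drift, under simplified assumptions: a hypothetical one-year, all-upfront commitment fully covering a steady workload. On a cash-style view (such as unblended cost) the fee lands in the purchase month; on an amortised view it is spread evenly across the term. The fee and usage figures are invented for the example.

```python
TERM_MONTHS = 12
UPFRONT_FEE = 84_000.0        # hypothetical all-upfront commitment fee
MONTHLY_ON_DEMAND = 10_000.0  # same usage priced at list rates, fully covered by the commitment


def monthly_esr(effective_cost: float) -> float:
    return (MONTHLY_ON_DEMAND - effective_cost) / MONTHLY_ON_DEMAND


for month in (1, 2, 12):
    cash = UPFRONT_FEE if month == 1 else 0.0   # fee booked when paid
    amortised = UPFRONT_FEE / TERM_MONTHS       # fee spread over the term
    print(f"month {month:2d}: cash ESR {monthly_esr(cash):7.0%}   "
          f"amortised ESR {monthly_esr(amortised):.0%}")
```

The cash series swings from deeply negative to 100%, while the amortised series shows a steady 30%, which is what the commitment actually delivers over its term.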

Seven Savings Levers: The Financial Efficiency Playbook

The seven levers are sequenced by accessibility — start with quick wins that require no architecture changes, then build to strategic commitment management, and finally govern AI workloads as the seventh and newest category.

Lever 1 — Commitment Discounts: 40–72% vs. On-Demand

The highest-return savings lever for stable workloads. Reserved Instances, Savings Plans (AWS), Committed Use Discounts (GCP), and Azure Reservations deliver 40–72% reduction versus on-demand rates — and drive the majority of ESR improvement.

The strategic challenge: commitment risk. The enterprises at the top of the ESR distribution are not those that committed most aggressively — they are those that committed most intelligently, balancing coverage with flexibility.

Commitment Strategy Framework

──────────────────────────────────────────────────────────────
Coverage Target: 60–80% of steady-state workloads
(leave 20–40% uncommitted for flexibility)

Instrument mix: Start flexible (Compute Savings Plans cover EC2, Fargate, and Lambda under one commitment)
Add instance-specific RIs for fully stable workloads only

Term selection: 1-year for uncertain environments
3-year for fully validated stable baselines
(3-year = highest discount; highest CLR)

Management: Manual: monthly commitment review cycle
Automated: continuous usage tracking, dynamic purchase and adjustment
──────────────────────────────────────────────────────────────
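One way to turn the coverage target into a purchase amount, sketched under stated assumptions: the steady-state baseline is taken as a low percentile of hourly on-demand-equivalent compute spend over the lookback (the 10th percentile here is an arbitrary choice), and the commitment is sized as a fraction of that baseline, expressed per hour as AWS Savings Plans commitments are. Function names and the synthetic data are hypothetical; provider recommendation tools use their own methods.

```python
import math


def nearest_rank_percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile for pct in (0, 100]."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def hourly_commitment(hourly_spend: list[float], coverage_target: float = 0.7,
                      baseline_pct: float = 10.0) -> float:
    """Hourly commitment = coverage_target x steady-state baseline.

    The baseline is a low percentile rather than the minimum, so a few
    anomalous troughs do not set the commitment level on their own.
    """
    baseline = nearest_rank_percentile(hourly_spend, baseline_pct)
    return coverage_target * baseline


# 30 days of synthetic hourly spend: $150/h during the working day, $100/h otherwise.
spend = [150.0 if 8 <= hour % 24 < 18 else 100.0 for hour in range(24 * 30)]
print(f"commit ${hourly_commitment(spend):.2f}/hour")  # → commit $70.00/hour
```

Keeping the target inside the 60–80% band leaves headroom for the workload shifts that drive negative ESR.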

The 2025 ProsperOps report found that 51% of organisations using commitments relied on infrequent batch purchases of AWS Savings Plans rather than more sophisticated strategies. The most popular type was the 3-year Compute Savings Plan, used by 50% of organisations, because it is easy to implement, covers a broad range of compute services, and requires no ongoing management. It is easy to start, but automation is required to sustain ESR in dynamic environments.

DigiUsher’s commitment intelligence tracks ESR continuously across AWS, Azure, and GCP in a unified FOCUS 1.x view, surfacing coverage gaps and over-commitment risk before they affect the next invoice — not in the quarterly commitment review.

Lever 2 — Rightsizing: 15–35% of Compute Spend

The most universal form of cloud waste. Teams provision instances at peak capacity at project inception and never rebalance as workloads stabilise. A service that requested 4 CPUs at launch runs on 1.2 CPUs at steady state — and pays for 4 CPUs every hour indefinitely.

Rightsizing in 2026 relies on historical utilisation analysis rather than static thresholds. Teams evaluate long-term patterns to determine safe reductions without performance risk.

The implementation sequence:

- Measure P95 CPU and memory utilisation over 30–90 day lookback periods per workload

- Generate rightsizing recommendations targeting the smallest instance type that satisfies P95 demand, rather than sizing for absolute peak

- Prioritise non-production first — safer, lower-risk validation of recommendation accuracy

- Apply to production through a change management gate with performance monitoring

- Automate the cycle: continuous measurement → continuous recommendations → governed application
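The first two steps can be sketched as: compute P95 CPU and memory from utilisation samples, then pick the cheapest instance type whose capacity covers P95 demand. The catalogue below is a hypothetical placeholder (not real instance specs or prices) and the samples are synthetic; real recommendations also weigh network, storage, and instance-family constraints.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class InstanceType:
    name: str
    vcpus: float
    memory_gib: float
    hourly_price: float


# Hypothetical catalogue: names, sizes, and prices are placeholders.
CATALOGUE = [
    InstanceType("size-s", 1, 4, 0.05),
    InstanceType("size-m", 2, 8, 0.10),
    InstanceType("size-l", 4, 16, 0.20),
]


def p95(samples: list[float]) -> float:
    """Nearest-rank 95th percentile (integer arithmetic avoids float rounding in the rank)."""
    ordered = sorted(samples)
    return ordered[max(1, math.ceil(95 * len(ordered) / 100)) - 1]


def recommend(cpu_used: list[float], mem_used_gib: list[float]) -> InstanceType:
    """Cheapest catalogue entry whose capacity covers P95 CPU and memory demand."""
    need_cpu, need_mem = p95(cpu_used), p95(mem_used_gib)
    fits = [t for t in CATALOGUE if t.vcpus >= need_cpu and t.memory_gib >= need_mem]
    if not fits:
        raise ValueError("no catalogue entry satisfies P95 demand")
    return min(fits, key=lambda t: t.hourly_price)


# A 4-vCPU service running at ~1.2 vCPUs, with brief spikes in 5% of samples.
cpu = [1.2] * 95 + [3.5] * 5
mem = [5.0] * 100
print(recommend(cpu, mem).name)  # → size-m
```

The brief spikes fall outside P95, so they do not force the service onto the largest size; the change-management gate and performance monitoring in the later steps catch cases where they matter.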

For containerised workloads: the Vertical Pod Autoscaler (VPA) in recommendation-only mode (updateMode "Off") surfaces rightsizing signals without applying them, so engineering teams see the recommendation in their workflow and apply it through standard change processes.

The Kubernetes compounding effect: overprovisioned pod requests reserve capacity on every node they are scheduled onto, so waste multiplies across the cluster. A namespace where 40% of requested capacity goes unused runs at 60% efficiency on every node it occupies.

Lever 3 — Non-Production Environment Scheduling: 65–70% Non-Prod Saving

The most accessible savings action for any organisation, regardless of FinOps maturity.

The average non-production environment is idle 76% of the time. Implementing schedule-based shutdown for non-production environments (running Monday–Friday, 08:00–18:00) saves 65–70%, close to the roughly 70% of weekly hours that schedule switches off.

Monthly Non-Prod Scheduling Saving

──────────────────────────────────────────────────────────────
Assumption: £50,000/month in non-production compute
Schedule: 08:00–18:00 weekdays, no weekends
Hours running: ~217 per month (50 per week) vs ~730 total hours
Saving: ~65% of non-prod compute = £32,500/month
(theoretical maximum for this schedule: ~70%)
Annual saving: £390,000/year, with zero architecture changes
──────────────────────────────────────────────────────────────
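The scheduling arithmetic can be checked with a few lines of Python. This sketch assumes an average month of 730 hours (8,760 ÷ 12) and uses 65% as the realised planning figure, slightly below the theoretical ceiling for a 10-hour, 5-day schedule.

```python
HOURS_PER_WEEK = 7 * 24             # 168
HOURS_PER_MONTH = 365 * 24 / 12     # 730 on average


def off_fraction(on_hours_per_day: float, days_per_week: int) -> float:
    """Share of the week an environment is switched off under a fixed schedule."""
    return 1 - (on_hours_per_day * days_per_week) / HOURS_PER_WEEK


monthly_spend = 50_000              # £ of non-production compute per month
on_hours_per_month = 10 * 5 * HOURS_PER_MONTH / HOURS_PER_WEEK
theoretical = off_fraction(10, 5)   # ceiling for an 08:00-18:00 weekday schedule
realised = 0.65                     # planning figure, below the ceiling
monthly_saving = monthly_spend * realised

print(f"running ~{on_hours_per_month:.0f} h/month; theoretical max saving {theoretical:.1%}")
print(f"saving £{monthly_saving:,.0f}/month, £{monthly_saving * 12:,.0f}/year")
```

The gap between the roughly 70% ceiling and the 65% planning figure leaves room for environments that overrun the schedule.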

For platform teams: implement environment TTL policies through Kubernetes namespace controllers or IDP admission layer policies — platform-enforced scheduling rather than advisory alerts that developers override under delivery pressure.

Lever 4 — Spot and Preemptible Instances: 60–90% Off On-Demand

The highest single-discount instrument available for fault-tolerant workloads. Spot (AWS), Preemptible VMs (GCP), and Spot VMs (Azure) offer 60–90% off on-demand rates for capacity that cloud providers can reclaim at short notice (a two-minute warning on AWS; about 30 seconds on GCP and Azure).

The requirement: fault tolerance. Training jobs that checkpoint and resume, batch processing pipelines with retry logic, CI/CD runners, and integration test environments are all strong candidates. Real-time, latency-sensitive inference is not.

Karpenter on EKS is a leading option for automated Spot management on Kubernetes. It selects Spot instance types that satisfy workload node affinity requirements, favouring low price and available capacity, diversifies across instance families and availability zones to reduce interruption probability, and provisions on-demand capacity as a fallback when Spot capacity is unavailable (provided the NodePool allows both capacity types).
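The fallback behaviour can be illustrated with a deliberately simplified sketch: keep only offerings that satisfy the workload's requirements, prefer the cheapest available Spot offering, and otherwise take the cheapest on-demand one. This is not Karpenter's implementation; Karpenter's own selection also weighs capacity depth and interruption risk and diversifies across families and zones, which this sketch omits. Offering names and prices are invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offering:
    instance_type: str
    capacity_type: str  # "spot" or "on-demand"
    vcpus: int
    hourly_price: float
    available: bool


def choose_offering(offerings: list[Offering], min_vcpus: int) -> Offering:
    """Cheapest available Spot offering that fits; otherwise cheapest on-demand."""
    fits = [o for o in offerings if o.vcpus >= min_vcpus and o.available]
    spot = [o for o in fits if o.capacity_type == "spot"]
    on_demand = [o for o in fits if o.capacity_type == "on-demand"]
    pool = spot or on_demand  # on-demand only when no Spot offering fits
    if not pool:
        raise RuntimeError("no capacity satisfies the request")
    return min(pool, key=lambda o: o.hourly_price)


# Hypothetical offerings: names and prices are placeholders.
offers = [
    Offering("type-a", "spot", 4, 0.06, available=False),
    Offering("type-b", "spot", 4, 0.07, available=True),
    Offering("type-a", "on-demand", 4, 0.19, available=True),
]
print(choose_offering(offers, min_vcpus=4).instance_type)  # → type-b
```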

Lever 5 — Idle and Orphaned Resource Elimination: 10–15% Immediate Saving

A structured first-pass audit of any cloud estate that has not been recently reviewed typically reveals 10–15% in immediate, low-risk savings:

- EC2 instances running with zero network traffic for 30+ days

- EBS volumes detached from deleted EC2 instances

- Elastic Load Balancers serving no targets

- S3 buckets receiving no access for 90+ days

- RDS instances running at < 5% CPU for 30+ days

- Kubernetes namespaces with no pods running

Automation is the only sustainable elimination strategy. Manual cleanup campaigns produce a one-time improvement; without continuous automated detection, the same waste categories re-accumulate within two sprint cycles. Tagging all resources at provisioning with owner, environment, and TTL is the prerequisite — resources without owner tags cannot be routed to a human for disposition decisions.
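Continuous detection can start as a set of rules that mirror the checklist above, with findings routed by owner tag. A minimal sketch, assuming metrics have already been aggregated per resource; the `Resource` shape, metric names, and IDs are hypothetical and do not correspond to any provider API.

```python
from dataclasses import dataclass, field


@dataclass
class Resource:
    resource_id: str
    kind: str                                    # "ec2", "ebs", "elb", "s3", "rds", "namespace"
    tags: dict = field(default_factory=dict)     # e.g. {"owner": ..., "env": ..., "ttl": ...}
    metrics: dict = field(default_factory=dict)  # hypothetical pre-aggregated metrics


# One rule per checklist item; a missing metric defaults to "not idle".
IDLE_RULES = {
    "ec2": lambda m: m.get("network_bytes_30d", 1) == 0,
    "ebs": lambda m: not m.get("attached", True),
    "elb": lambda m: m.get("registered_targets", 1) == 0,
    "s3": lambda m: m.get("days_since_last_access", 0) >= 90,
    "rds": lambda m: m.get("avg_cpu_pct_30d", 100) < 5,
    "namespace": lambda m: m.get("running_pods", 1) == 0,
}


def idle_findings(resources):
    """Group idle resource IDs by owner tag; untagged resources land under 'unowned'."""
    findings = {}
    for r in resources:
        rule = IDLE_RULES.get(r.kind)
        if rule is not None and rule(r.metrics):
            findings.setdefault(r.tags.get("owner", "unowned"), []).append(r.resource_id)
    return findings


inventory = [
    Resource("vol-1", "ebs", {"owner": "payments"}, {"attached": False}),
    Resource("i-1", "ec2", {}, {"network_bytes_30d": 0}),
    Resource("db-1", "rds", {"owner": "search"}, {"avg_cpu_pct_30d": 40}),
]
print(idle_findings(inventory))  # → {'payments': ['vol-1'], 'unowned': ['i-1']}
```

The "unowned" bucket makes the tagging gap visible: those findings have no human to route to until an owner tag exists.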

Lever 6 — Storage and Data Transfer Optimisation: 45–55% Storage Saving

Multiple snapshot copies, objects in expensive storage tiers, and logs retained indefinitely represent 45–55% optimisation potential — storage lifecycle policies alone yield this range of savings.

Three specific implementations with high return and low risk:

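gp2 → gp3 migration (AWS): gp3 EBS volumes cost about 20% less per GB-month than gp2 ($0.08 versus $0.10 at us-east-1 list prices) and deliver a 3,000 IOPS and 125 MiB/s baseline regardless of volume size, versus gp2's model of 3 IOPS per GiB with burst credits. For most workloads, migrating from gp2 to gp3 reduces EBS cost by about 20% with equal or improved performance; volumes larger than 1 TiB need additional provisioned IOPS on gp3 to match their gp2 baseline. Because Elastic Volumes can change the volume type in place, this is a low-risk change that can be automated at scale.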
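A rough per-volume comparison, assuming us-east-1 list prices at the time of writing ($0.10 per GB-month for gp2; $0.08 per GB-month plus $0.005 per provisioned IOPS-month above 3,000 for gp3) and ignoring throughput; check current AWS pricing before relying on the figures.

```python
# us-east-1 list prices at the time of writing (assumption: verify against current pricing).
GP2_PER_GB = 0.10
GP3_PER_GB = 0.08
GP3_IOPS_PRICE = 0.005      # per provisioned IOPS-month above the baseline
GP3_BASELINE_IOPS = 3_000


def gp2_baseline_iops(size_gib: int) -> int:
    """gp2 baseline: 3 IOPS per GiB, floor 100, ceiling 16,000."""
    return min(16_000, max(100, 3 * size_gib))


def monthly_costs(size_gib: int) -> tuple[float, float]:
    """(gp2 cost, gp3 cost provisioned to match gp2's baseline IOPS); throughput ignored."""
    gp2 = size_gib * GP2_PER_GB
    extra_iops = max(0, gp2_baseline_iops(size_gib) - GP3_BASELINE_IOPS)
    gp3 = size_gib * GP3_PER_GB + extra_iops * GP3_IOPS_PRICE
    return gp2, gp3


for size in (500, 2_000):
    gp2, gp3 = monthly_costs(size)
    print(f"{size:>5} GiB: gp2 ${gp2:,.2f}  gp3 ${gp3:,.2f}  saving {1 - gp3 / gp2:.1%}")
```

Small and mid-sized volumes see the full 20%; a 2,000 GiB volume still saves, but less, because matching its 6,000 IOPS gp2 baseline requires extra provisioned IOPS on gp3.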

S3 Intelligent-Tiering: for objects with variable or unpredictable access patterns, S3 Intelligent-Tiering automatically moves objects between access tiers based on actual usage. Objects not accessed for 30 consecutive days move to the Infrequent Access tier, priced about 40% below the Frequent Access tier, so you recover most of the Standard-to-Infrequent-Access cost difference without having to predict access patterns (a small per-object monitoring fee applies).

Data transfer architecture review: on AWS, cross-AZ traffic between microservices is billed at $0.01/GB in each direction, so every gigabyte that crosses an AZ boundary costs $0.02, and these charges appear nowhere in standard compute cost views. A microservices architecture making 10 million inter-service calls per day, with 20% crossing AZ boundaries at an average payload of 10 KB, moves about 600 GB across AZs per month, roughly $12. The charge grows linearly with volume: the same pattern at 1 billion calls per day with 100 KB payloads moves about 600 TB per month, roughly $12,000.
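A short sketch of the cross-AZ calculation, assuming decimal units (1 GB = 10⁶ KB), a 30-day month, one-way payloads, and AWS's $0.01/GB charge applied on both the sending and receiving side of each transfer. The scenario parameters are illustrative.

```python
CROSS_AZ_PER_GB_EACH_WAY = 0.01  # assumption: AWS intra-region cross-AZ rate, billed out and in


def monthly_cross_az_cost(calls_per_day: float, cross_az_share: float,
                          payload_kb: float, days: int = 30) -> tuple[float, float]:
    """Return (GB crossing AZ boundaries per month, USD per month)."""
    gb = calls_per_day * cross_az_share * payload_kb * days / 1e6
    return gb, gb * CROSS_AZ_PER_GB_EACH_WAY * 2


for calls, payload in ((10_000_000, 10), (1_000_000_000, 100)):
    gb, usd = monthly_cross_az_cost(calls, 0.20, payload)
    print(f"{calls:>13,} calls/day @ {payload} KB: {gb:,.0f} GB/month, ${usd:,.0f}/month")
```

Because cost scales with calls × payload × cross-AZ share, zone-aware routing that lowers the cross-AZ share is often the cheapest lever.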

Lever 7 — AI Workload Cost Governance: 30–50% Inference Saving

The newest, fastest-growing, and least-governed savings category in 2026.

GPU-intensive AI workloads now account for 18% of total cloud spend at AI-forward enterprises, up from 4% in 2023 — creating new FinOps challenges around inference cost optimisation. 98% of FinOps teams now manage AI spend. Any savings strategy that does not include AI workload governance is incomplete.

Four AI-specific savings mechanisms:

Model routing: sending each request to the cheapest model tier that meets its quality bar. Route document summarisation, classification, and extraction to GPT-3.5-turbo or an equivalent small model (typically 5–20× cheaper per token than frontier models), and reserve frontier model rates for complex multi-step reasoning and code generation where the quality difference has been validated and justifies the premium. Combined, model routing typically reduces inference API costs by 30–50%.
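A minimal sketch of the routing economics, assuming a small model ten times cheaper per token than the frontier model (within the 5–20× range above) and routing purely on a task-type label; real routers often classify request complexity instead. Tier names and prices are hypothetical.

```python
# Hypothetical per-1K-token prices; real rates vary by provider and model.
PRICE_PER_1K_TOKENS = {"small": 0.0005, "frontier": 0.005}
SIMPLE_TASKS = {"summarise", "classify", "extract"}


def route(task_type: str) -> str:
    """Send simple task types to the small tier; everything else to the frontier tier."""
    return "small" if task_type in SIMPLE_TASKS else "frontier"


def blended_cost(requests: list[tuple[str, int]]) -> tuple[float, float]:
    """Return (routed cost, all-frontier cost) for (task_type, tokens) requests."""
    routed = sum(PRICE_PER_1K_TOKENS[route(t)] * n / 1000 for t, n in requests)
    baseline = sum(PRICE_PER_1K_TOKENS["frontier"] * n / 1000 for _, n in requests)
    return routed, baseline


# Half the traffic is simple extraction-style work, half needs frontier reasoning.
workload = [("summarise", 1_000)] * 50 + [("reason", 1_000)] * 50
routed, baseline = blended_cost(workload)
print(f"saving {1 - routed / baseline:.0%}")  # → saving 45%
```

The saving depends mostly on the share of traffic that can safely move down a tier, which is why the quality validation step matters.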

Semantic caching: serving cached responses for semantically equivalent queries without a new LLM API call. For enterprise applications where many users ask functionally identical questions (FAQ-style, status-check, report generation), caching can eliminate 20–40% of API calls with no quality impact.
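A minimal sketch of the caching pattern: embed each query, return a cached answer when a previous query is similar enough, and call the model only on a miss. The bag-of-words `embed` function is a toy stand-in for a real embedding model (it matches rewordings poorly), the 0.9 threshold is an arbitrary assumption, and the lambda stands in for an LLM API call.

```python
import math
import re
from collections import Counter
from typing import Callable


def embed(text: str) -> Counter:
    """Toy bag-of-words vector; a production cache would use an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[k] * b[k] for k in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class SemanticCache:
    def __init__(self, llm: Callable[[str], str], threshold: float = 0.9):
        self.llm, self.threshold = llm, threshold
        self.entries: list[tuple[Counter, str]] = []
        self.api_calls = 0

    def ask(self, query: str) -> str:
        vec = embed(query)
        for cached_vec, answer in self.entries:  # linear scan; use a vector index at scale
            if cosine(vec, cached_vec) >= self.threshold:
                return answer                    # cache hit: no API call
        self.api_calls += 1
        answer = self.llm(query)
        self.entries.append((vec, answer))
        return answer


cache = SemanticCache(llm=lambda q: f"answer to: {q}")
cache.ask("What is the status of order 123?")
cache.ask("what is the status of order 123")  # same words, different casing and punctuation
cache.ask("How do I reset my password?")
print(cache.api_calls)  # → 2
```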

Token budget caps: enforcing per-team and per-product token budgets with automated throttle and suspend actions before monthly limits are breached, not after. The cost of ungoverned AI inference is typically discovered as a monthly invoice surprise, by which point the spend has already occurred.
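The key property is that the check runs before the call, not after the invoice. A minimal sketch, assuming a hypothetical throttle threshold at 80% of budget and a hard stop at 100%; the `TokenBudget` class is illustrative rather than any platform's API.

```python
from dataclasses import dataclass


@dataclass
class TokenBudget:
    monthly_limit: int
    throttle_at: float = 0.8  # hypothetical: start rate-limiting at 80% of budget
    used: int = 0

    def check(self, requested_tokens: int) -> str:
        """Decide before the call is made: 'allow', 'throttle', or 'suspend'."""
        projected = self.used + requested_tokens
        if projected > self.monthly_limit:
            return "suspend"  # would breach the cap: block the call, usage unchanged
        self.used = projected
        return "throttle" if projected >= self.throttle_at * self.monthly_limit else "allow"


team = TokenBudget(monthly_limit=1_000_000)
print([team.check(n) for n in (700_000, 150_000, 200_000)])  # → ['allow', 'throttle', 'suspend']
```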

Agentic workflow controls: automated kill-switch infrastructure for AI agents caught in recursive reasoning loops, the kind of control that would have prevented the collective $400M Fortune 500 agentic AI cost leak of Q1 2026. A single agent in a reasoning loop can exhaust daily compute budgets before business hours begin.
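A kill-switch can be as simple as a guard the agent runtime consults after every step. This sketch stops a run on three hypothetical conditions (a step limit, a spend limit, and the same action repeated several times in a row as a crude loop signal); thresholds and the action string are illustrative assumptions.

```python
from collections import deque
from typing import Optional


class AgentKillSwitch:
    """Stops an agent run on step, spend, or repetition limits (all values hypothetical)."""

    def __init__(self, max_steps: int = 50, max_spend_usd: float = 20.0, repeat_window: int = 3):
        self.max_steps, self.max_spend = max_steps, max_spend_usd
        self.recent = deque(maxlen=repeat_window)
        self.steps, self.spend = 0, 0.0

    def record(self, action: str, cost_usd: float) -> Optional[str]:
        """Return a reason string if the run must be killed, else None."""
        self.steps += 1
        self.spend += cost_usd
        self.recent.append(action)
        if self.steps > self.max_steps:
            return "step limit exceeded"
        if self.spend > self.max_spend:
            return "spend limit exceeded"
        if len(self.recent) == self.recent.maxlen and len(set(self.recent)) == 1:
            return "repeated identical action (probable loop)"
        return None


guard = AgentKillSwitch()
for step in range(1, 11):
    reason = guard.record("search('cloud pricing')", cost_usd=0.40)
    if reason:
        print(f"killed at step {step}: {reason}")
        break
```

Spend and step limits catch loops that vary their actions slightly, which a repetition check alone would miss.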

From Cost Reduction to Value Maximisation: The FinOps Maturity Shift

The 2026 State of FinOps documents a structural shift in how mature FinOps teams measure success. Mature practices are focusing on value capabilities: unit economics, AI value quantification, and influencing technology selection. The centre of gravity is spreading as teams take responsibility for increasing technology value, not just reducing technology cost.

This shift has three practical implications:

1. From savings dollars to value per cloud dollar

The new FinOps KPI set:

| Traditional FinOps KPI | 2026 Value-Oriented KPI |
|---|---|
| Total cloud spend | Cloud cost as % of revenue |
| Savings achieved (£) | Cost per customer served |
| Commitment coverage % | Cost per AI feature delivered |
| Waste % eliminated | ESR + AI unit economics |
| Monthly cost report | Real-time cost-per-outcome |

Efficiency metrics in 2026 include unit economics (cost per customer/transaction), waste percentage (target <8% for mature programmes), and cloud efficiency ratio (value per dollar). Optimisation metrics include commitment coverage (60–80% target), commitment utilisation (>95% target), rightsizing adoption (>70%), and tagging compliance (>95%).

2. FinOps participating in technology decisions before spend is committed

FinOps is no longer just explaining past spend. It is shaping future technology decisions before commitments are made. FinOps leaders are now participating in provider negotiations, commitment modelling, and AI investment decisions — not as retrospective cost auditors but as prospective financial governance partners in technology architecture decisions.

3. AI-funding-through-savings

Many organisations report being asked to self-fund AI investments through optimisation savings, tying traditional FinOps work directly to strategic technology enablement. The savings programme is no longer an end in itself — it is the mechanism that funds AI capability development. A 20–30% cloud cost reduction on a £10M annual cloud estate generates £2–3M annually that can be reallocated to GPU infrastructure, AI platform investment, and AI engineering capacity.

Building the Financial Efficiency Programme: The Implementation Sequence

90-Day Quick Wins

The first 90 days of a FinOps programme should focus on zero-risk, high-return actions that generate immediate savings without architectural changes:

Week 1–2: Establish full cost visibility — ingest billing data from all cloud providers, AI platforms, and Kubernetes clusters. Achieve 95%+ tagging compliance. Calculate baseline ESR.

Week 2–4: Schedule all non-production environments. Estimated immediate saving: 65–70% of non-prod compute. On a £2M non-prod estate: £1.3–1.4M annual saving.

Week 3–6: Execute idle resource audit. Identify and terminate EC2 instances, EBS volumes, load balancers, and RDS instances generating cost without active workloads. Estimated: 10–15% immediate waste removal.

Week 4–8: Analyse commitment opportunity from 60 days of baseline data. Purchase initial Compute Savings Plans targeting 60% of validated steady-state compute. Track ESR against the Week 1–2 baseline.

Months 2–3: Rightsize overprovisioned instances from utilisation data. Begin AI cost instrumentation — tag AI workloads separately from cloud infrastructure.

6-Month Tactical Build

Months 3–6 build the governance infrastructure that sustains quick-win savings and prevents them from drifting back:

- Automated commitment management replacing manual quarterly purchase cycles

- VPA-style rightsizing integrated into engineering workflows rather than FinOps-team-only reports

- AI unit economics dashboard tracking cost per inference, cost per model training run, cost per AI feature

- Model routing implementation for inference workloads

- Anomaly detection replacing monthly billing surprise cycles

12-Month Strategic Capability

Months 6–12 build the capabilities that mature FinOps programmes operate continuously, with cost data from every source in scope (AWS, Azure, GCP, Oracle OCI, Alibaba Cloud; Kubernetes on EKS, AKS, GKE, and OKE; Databricks, Snowflake ML; Azure OpenAI, AWS Bedrock, Vertex AI, Hugging Face, and direct AI API providers; Google Workspace, Salesforce, Anthropic Claude, Cursor, and more) normalised to FOCUS 1.x:

- ESR-driven commitment strategy achieving 25–40% for the estate size

- AI cost governance at parity with infrastructure governance — same attribution depth, same real-time monitoring, same automated enforcement

- Value-per-dollar reporting connecting infrastructure spend to business outcomes at the CFO and board level

- FinOps participating in technology selection decisions before infrastructure is provisioned

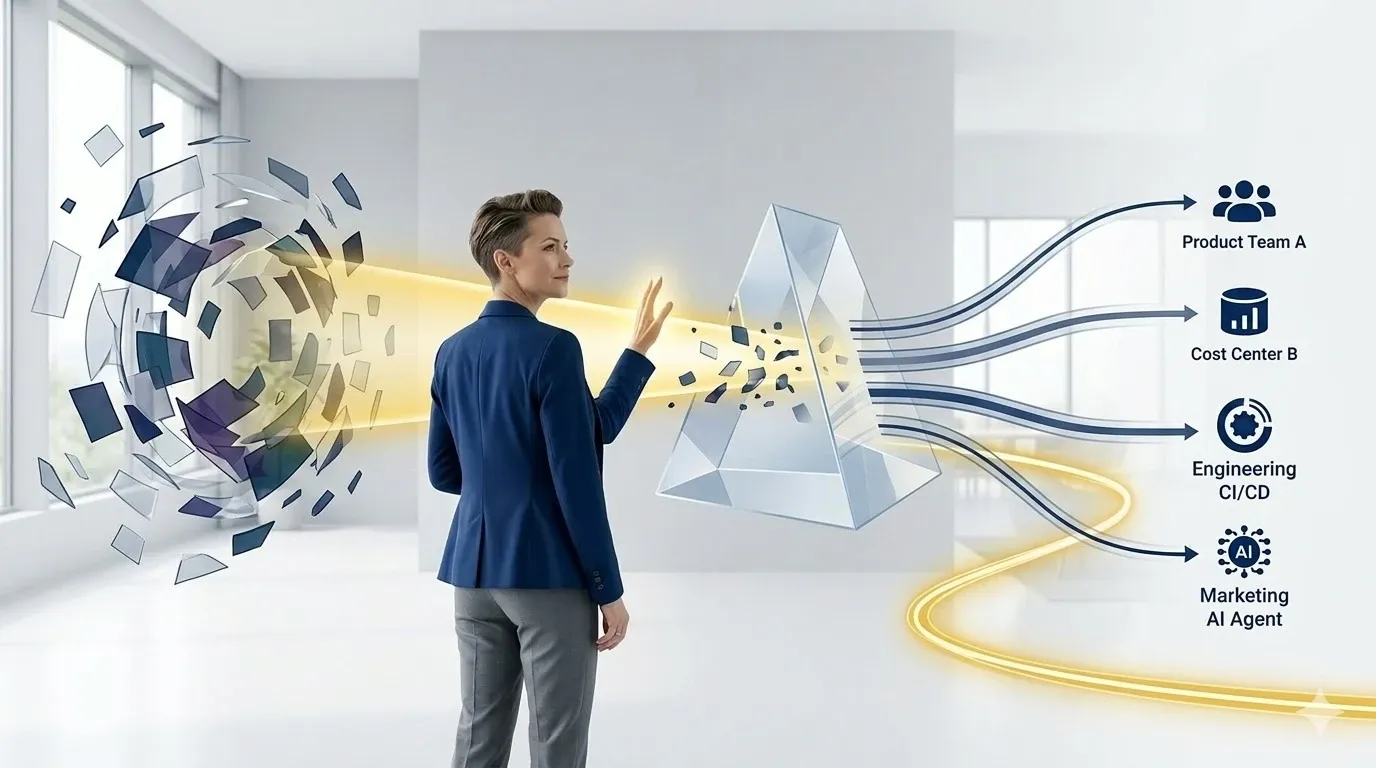

DigiUsher’s FinOps OS: Operationalising Financial Efficiency

DigiUsher’s FinOps Operating System operationalises every lever in this playbook — not as discrete, disconnected tools, but as an integrated continuous governance system.

ESR measurement across all providers. FOCUS 1.x normalised ESR calculated continuously across AWS, Azure, and GCP in a unified view — not assembled manually from three separate billing systems. ESR by provider, by service, by team, and by product for attribution at every relevant level.

Commitment intelligence. Real-time commitment utilisation tracking with gap detection and over-commitment risk signals. Commitment coverage recommendations based on continuously updated usage baseline analysis — not quarterly review cycles. ESR impact modelling for proposed commitment changes before purchase.

Automated rightsizing signals. Workload-level utilisation analysis surfaced to engineering teams at the point of architectural decision — in IDP workflows, CI/CD pipelines, and developer dashboards — not in monthly FinOps reports that compete with delivery priorities.

Non-production scheduling enforcement. Platform-layer environment scheduling through IDP admission controls and Kubernetes namespace TTL policies — governance that enforces at provisioning time rather than advisory alerts that engineers override.

AI cost governance. Native token budget enforcement, model routing cost intelligence, GPU idle detection with automated scale-down, agentic workflow kill-switches, and per-chain token attribution across Azure OpenAI, AWS Bedrock, Vertex AI, Databricks, and direct API providers. The AI savings layer that infrastructure FinOps tools cannot provide.

Real-time anomaly detection. Spend patterns that diverge from baselines trigger alerts before they compound to invoice level. Not retrospective anomaly reporting — proactive governance that prevents cost surprises from forming.

Board-ready value reporting. Cost per business outcome — cost per AI product, cost per customer interaction, cost per transaction, cloud efficiency ratio — connecting every savings lever to the financial results boards require.

Available as SaaS or BYOC for regulated industries. SOC 2® Type II certified and GDPR compliant. AWS ISV Accelerate Partner listed on AWS Marketplace. Delivered globally through Infosys, Wipro, and Hexaware.

The question FinOps teams are answering in 2026 is not “how do we reduce cloud spend?” It is “how do we maximise return per cloud and AI dollar?” The difference between those two questions is the difference between a cost-cutting function and a strategic value governance capability. DigiUsher’s FinOps OS is built for the second question.

Frequently Asked Questions

What is Effective Savings Rate (ESR) and why is it the most important FinOps metric?

ESR measures the ROI of cloud commitment discount instruments, calculated as (On-Demand Equivalent Cost − Effective Cost) ÷ On-Demand Equivalent Cost. It is the most important FinOps metric because it captures coverage, utilisation, and discount rate simultaneously in one outcome-based performance number. Median AWS Compute ESR rose from 0% in 2023 to 15% in 2024; Google Cloud organisations with $10M+ in annualised compute usage achieve a median ESR of 54.3%. World-class enterprise ESR for $10M+ compute estates is 25–40%.

How much cloud spend do enterprises waste without FinOps governance?

Organisations without structured FinOps programmes waste 32–40% of cloud spend. Mature programmes reduce this to 15–20%. Enterprises report an average 25–30% reduction in monthly cloud spend after FinOps implementation. On a $10M annual cloud estate: $3.2–4M wasted without governance; $1.2–2.5M recoverable with mature FinOps, a 10–20x ROI on typical programme investment.

What are the seven cloud savings levers in 2026?

Commitment discounts (40–72% vs. on-demand); rightsizing (15–35% compute saving); non-production environment scheduling (65–70% non-prod saving); Spot and preemptible instances (60–90% off on-demand for fault-tolerant workloads); idle and orphaned resource elimination (10–15% immediate saving); storage lifecycle optimisation (45–55% storage saving); AI workload cost governance (30–50% inference cost reduction). All seven require continuous, automated governance to sustain — one-time optimisation campaigns produce temporary savings that drift back without ongoing enforcement.

What does world-class FinOps look like in 2026?

Six characteristics: continuous real-time cost monitoring rather than monthly reporting; ESR-driven performance measurement rather than coverage tracking; AI spend brought inside governance scope (98% of FinOps teams now manage it); automation-first commitment management, rightsizing, and scheduling; value-per-dollar KPIs (cost per customer, cost per AI feature, cloud efficiency ratio) alongside traditional savings metrics; FinOps participating in technology selection before spend is committed.

How does AI change the FinOps savings strategy?

AI is the fastest-growing and least-governed cost category — 18% of total cloud spend at AI-forward enterprises, up from 4% in 2023. Traditional savings levers (commitments, rightsizing, scheduling) do not govern token-based, usage-driven AI billing. AI savings require: model routing (30–50% inference cost reduction), semantic caching (20–40% API call elimination), token budget enforcement with automated throttle, and agentic workflow kill-switches to prevent recursive loop cost spirals.

How does DigiUsher measure and improve ESR across AWS, Azure, and GCP?

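DigiUsher calculates FOCUS 1.x-normalised ESR continuously across AWS, Azure, and GCP in a unified view, broken down by provider, service, team, and product, rather than assembling it manually from three separate billing systems. Its commitment intelligence tracks utilisation in real time, detects coverage gaps and over-commitment risk, recommends coverage from a continuously updated usage baseline, and models the ESR impact of proposed commitment changes before purchase. Supported sources include AWS, Azure, GCP, Oracle OCI, Alibaba Cloud; Kubernetes (EKS, AKS, GKE, OKE); Databricks, Snowflake ML; Azure OpenAI, AWS Bedrock, Vertex AI, Hugging Face, and direct AI API providers; Google Workspace, Salesforce, Anthropic Claude, Cursor, and more, all normalised to FOCUS 1.x.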

References

- ProsperOps — 2025 Rate Optimisation Insights Report: AWS Compute (August 2025)

- ProsperOps — 2025 Rate Optimisation Insights Report: Google Cloud Compute (August 2025)

- FinOps Foundation — How to Calculate Effective Savings Rate (ESR)

- FinOps Foundation — State of FinOps 2026

- FinOps Foundation — FOCUS Specification: ESR Use Case

- Tech Insider — FinOps in 2026: How CFOs Are Finally Taming Runaway Cloud Costs (March 2026)

- DEV Community — Cloud FinOps 2026: Cut Costs by 30% While Accelerating Innovation

- Holori — The Complete Cloud Cost Optimisation Guide in 2026

- LeanOps — Cut Your Cloud Bill by 30% Without Downtime: The 2026 FinOps Playbook

- Intellectt — Cloud Cost Optimisation in 2026: How AI-Driven FinOps Is Transforming Enterprise IT Spending

- nOps — 8 FinOps Best Practices for 2026

- nOps — 25+ Stunning FinOps Statistics That Show the True State of Cloud Spending

- Amnic — What’s Effective Savings Rate (ESR)? Measure Your FinOps ROI

- North.Cloud — Effective Savings Rate: The FinOps Metric That Matters

- Flexera — 3 Ways to Maximise Effective Savings Rate (November 2025)

- Sedai — Top 17 FinOps Cloud Optimisation Strategies for 2026

- Forrester — Public Cloud Market Outlook 2026: $1.03 Trillion

Start Measuring Your Effective Savings Rate

Financial efficiency in cloud and AI starts with knowing where you are. ESR tells you your current savings performance. The seven savings levers tell you where the recovery is.

DigiUsher’s FinOps OS measures ESR continuously across AWS, Azure, and GCP — alongside AI unit economics, commitment coverage, rightsizing signals, and anomaly detection — in a single unified governance view.

