Platform Teams Are Becoming Cost Centers — And What To Do About It

80% of enterprises now have formal platform engineering initiatives. Platform teams own Kubernetes clusters, CI/CD pipelines, observability stacks, and AI infrastructure — making them the de facto financial decision-makers for the fastest-growing cost categories in enterprise cloud. But they are measured on deployment speed and reliability, not cost efficiency. This brief explains the five mechanisms turning platform teams into shadow cost centers, why traditional FinOps cannot govern at platform velocity, and how the transformation from cost center to financial control plane happens.

Author

DigiUsher

Executive Summary

Platform Engineering was designed to accelerate delivery, standardise infrastructure, and enable developer self-service.

In many enterprises, it has quietly become something else entirely: one of the largest and fastest-growing ungoverned cost centres in the cloud estate.

The data is unambiguous:

- 80% of enterprises now have formal platform engineering initiatives — up from 45% in 2022 — making platform engineering the fastest-adopted enterprise infrastructure practice of the decade

- 78% of companies waste 21–50% of cloud spend — with platform-managed shared infrastructure absorbing the largest share of unattributed cost

- 82% of organisations say AI initiatives are making cloud costs harder to manage — and platform teams are the primary provisioning layer for AI infrastructure

- 30–50% of enterprise GPU spend is wasted on over-provisioned resources running on platform-managed Kubernetes clusters

Platform teams now control Kubernetes clusters across EKS, AKS, GKE, and OKE; CI/CD pipelines processing thousands of deployments daily; observability stacks ingesting terabytes of telemetry; shared data platforms; and AI infrastructure including GPU clusters and inference endpoints. Every architectural decision they make — every node pool configuration, every cluster tier selection, every GPU allocation — has direct and immediate financial consequence.

And yet, platform teams are measured on deployment frequency, developer experience, and reliability — not cost efficiency. Speed is rewarded. Efficiency is optional.

“Dashboards are table stakes of yesterday — reactive. You have to move to proactive, real-time, automation. But you can’t automate what you can’t see.” — FinOps practitioner, State of FinOps 2026

This briefing explains the five structural mechanisms turning platform teams into shadow cost centres, why traditional FinOps cannot govern at platform velocity, how the transformation from cost centre to financial control plane happens, and what it looks like when it works.

The Structural Shift: From Enablement Layer to Cost Driver

Historically, the financial roles in enterprise technology were cleanly separated: infrastructure teams managed servers, finance tracked budgets, and engineering built applications. Each function had its own domain, its own governance model, and its own accountability structure.

Platform Engineering has dissolved all three boundaries simultaneously.

Today’s platform teams design, operate, and continuously evolve systems across:

Platform Engineering Scope — 2026

──────────────────────────────────────────────────────────────

Infrastructure Layer

Kubernetes clusters: EKS · AKS · GKE · OKE

Compute: GPU node pools · ARM nodes · Spot fleets

Storage: Block · Object · Managed databases

Developer Platform Layer

Self-service portals: Backstage · custom IDPs

CI/CD pipelines: GitHub Actions · ArgoCD · Tekton

Observability: Metrics · Logging · Tracing

AI Infrastructure Layer

Managed AI: Azure OpenAI · AWS Bedrock · Vertex AI

GPU clusters: H100/A100 node pools for inference + training

Data platforms: Databricks · Snowflake ML

All of it: Provisioned · Operated · Billed

Through the platform team

──────────────────────────────────────────────────────────────

This scope creates an inescapable financial reality: every architectural decision made by platform teams has direct financial impact. The node pool configuration chosen for the EKS cluster determines the compute cost of every workload that runs on it. The GPU tier selected for AI inference determines the per-hour rate of every model call. The observability tool configured with verbose defaults generates terabytes of billable log ingestion daily.

Platform Engineering is now the execution layer for CIO technology strategy and CFO financial governance simultaneously — but the financial governance half of that mandate has not caught up with the technical execution half.

Five Mechanisms Turning Platform Teams Into Shadow Cost Centers

Mechanism 1 — Self-Service Removes Cost Signal at Decision Time

Platform Engineering’s core value proposition is eliminating infrastructure friction. When developers provision resources instantly through golden paths, self-service APIs, and pre-configured IDP templates, the deliberate decision-making that approval workflows used to enforce — however slowly — disappears.

What also disappears is the cost signal at the point where architectural decisions are made.

“Cloud’s usage-based pricing makes it ‘easy to spend far more than expected.’”

A developer creating a new Backstage component and provisioning an EKS namespace sees the architecture. They do not see the cost. A GPU pod configuration selected from a dropdown has no price tag attached. An observability stack enabled by default in a service template has no monthly cost estimate surfaced.

The financial consequence arrives three to four weeks later in the cloud invoice — by which point the architecture is in production, the team has moved to the next sprint, and “nobody remembers what they deployed three weeks ago.”

The State of FinOps 2026 names pre-deployment architecture costing as the single most desired tooling capability among FinOps practitioners. The reason: catching cost issues before infrastructure ships is fundamentally more efficient than remediating after the bill arrives. Yet this capability is absent from the majority of platform engineering toolchains.

The self-service paradox: platform engineering enables autonomous infrastructure provisioning — and simultaneously removes every financial checkpoint that previously governed it.

Mechanism 2 — Cloud Billing Breaks at the Platform Abstraction Layer

Cloud billing was designed for a world where infrastructure teams ran distinct servers with distinct purposes. AWS Cost Explorer reports EC2 charges by instance. Azure Cost Management reports VM charges by resource group. GCP Billing reports Compute Engine charges by project.

Platform Engineering abstracts all of this behind APIs, golden paths, and Kubernetes namespaces. The abstraction that makes the platform valuable to developers — “don’t worry about the infrastructure, just deploy your service” — is also an abstraction that makes costs invisible to standard billing tools.

The Billing Abstraction Gap

──────────────────────────────────────────────────────────────

What AWS billing shows:

EC2: m5.4xlarge × 12 instances × 720 hours = £X

What platform teams actually need to know:

Team Alpha's checkout service: £4,200/month

Team Beta's recommendation engine: £8,100/month

Team Gamma's ML pipeline: £12,400/month

Shared observability stack: £3,800/month (attributable?)

What native billing provides:

One cluster-level line item.

Attribution: none.

──────────────────────────────────────────────────────────────

When 50 services share a node pool, standard reports are useless. The billing stops at the infrastructure boundary. The platform team manages the infrastructure. The engineering teams manage the services. Neither the platform team nor the engineering teams can attribute the cluster cost to the services generating it without workload-level cost tooling that sits above the native billing layer.

The result: the platform team absorbs the cluster cost in their budget, the product teams see no cost signal for their services, and the financial conversation becomes “why is platform engineering so expensive?” rather than “which product teams are generating cost, and is it proportionate to the value they create?”
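One common way to close this gap is to split the cluster-level line item across services in proportion to the resources each one requests. The sketch below is a minimal illustration under stated assumptions: the `Workload` type, the CPU-request figures, and the £28,500 cluster total are hypothetical, chosen so the shares reproduce the per-team figures in the box above. Real tooling would typically weigh memory, GPU, and actual usage as well.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    team: str
    service: str
    cpu_request_cores: float  # sum of CPU requests across the workload's pods


def allocate_cluster_cost(cluster_cost: float, workloads: list) -> dict:
    """Split one cluster-level line item across services by CPU-request share."""
    total_cores = sum(w.cpu_request_cores for w in workloads)
    if total_cores <= 0:
        raise ValueError("no CPU requests to allocate against")
    return {
        w.service: round(cluster_cost * w.cpu_request_cores / total_cores, 2)
        for w in workloads
    }


# Hypothetical request figures; the cluster total is an assumed monthly bill in GBP.
workloads = [
    Workload("alpha", "checkout", 42.0),
    Workload("beta", "recommendation-engine", 81.0),
    Workload("gamma", "ml-pipeline", 124.0),
    Workload("platform", "observability", 38.0),
]
shares = allocate_cluster_cost(28_500.0, workloads)
for service, cost in shares.items():
    print(f"{service}: {cost:,.2f} GBP/month")
```

Allocating by requests rather than measured usage is a design choice: it charges teams for the capacity they reserve, which is what drives node count, but it can overstate the cost of services that request far more than they use.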

Mechanism 3 — Shared Infrastructure Creates Default Cost Absorption

Platform teams operate shared infrastructure by definition. A shared Kubernetes cluster serves multiple teams. A shared observability stack collects telemetry from every service. A shared data platform processes data from multiple business units. A shared CI/CD system runs pipelines for every product team.

Without precise shared-cost attribution, the economics of this shared model collapse into a single failure mode: centralised cost, decentralised usage.

The FinOps Foundation’s research is explicit: shared and unallocated costs are often assigned directly to platform teams — making them default cost centres rather than enablement layers. In practice, this means:

- A platform team with £5M in annual cloud spend may own £1.5–2M in shared infrastructure costs attributable to product teams

- Product teams see no cost accountability for shared infrastructure consumption

- Finance sees the platform team as a large, opaque cost centre with no direct revenue attribution

- The platform team has neither the authority to enforce cost discipline on consuming teams nor the tooling to attribute costs to them

A real-world pattern documented in 2026: a global logistics company discovered that 40% of its “platform team budget” was actually product team consumption of shared services that had been allocated by default to the platform. The financial conversation changed entirely when attribution was corrected — not because any cost was reduced, but because ownership became visible.

Mechanism 4 — AI Workloads Are Exploding Platform Costs Non-Linearly

AI has changed the financial risk profile of platform engineering categorically.

Platform teams are now the provisioning layer for the fastest-growing and most financially volatile cost category in enterprise cloud: AI infrastructure. GPU clusters for training and inference. Azure OpenAI endpoints. AWS Bedrock integrations. Vertex AI pipelines. Databricks ML workloads. Each one generates costs that no platform team’s existing governance model was designed to manage.

The numbers make the urgency tangible:

- A single p5.48xlarge GPU instance on AWS costs $98.32/hr — one node running 24/7 generates $70,790/month

- 30–50% of enterprise GPU spend is wasted on over-provisioned, idle, or inefficiently scheduled compute

- An AI agent stuck in a recursive reasoning loop can generate thousands of pounds per hour in charges when no automated kill-switch is in place

- 82% of organisations say AI is making their cloud costs harder to manage — yet platform teams are the provisioning layer responsible for all of it
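The monthly figure in the first bullet follows from simple arithmetic, reproduced here as a short sketch. It assumes a 30-day month, and, purely for illustration, applies the 30-50% waste range quoted above to a single node; in practice that range describes fleet-level spend rather than any one instance.

```python
HOURLY_RATE_USD = 98.32      # p5.48xlarge rate quoted above
HOURS_PER_MONTH = 24 * 30    # assumes a 30-day month

monthly_cost = HOURLY_RATE_USD * HOURS_PER_MONTH


def wasted_spend(monthly: float, waste_fraction: float) -> float:
    """Spend attributable to waste at a given fraction (illustrative only)."""
    return monthly * waste_fraction


low = wasted_spend(monthly_cost, 0.30)
high = wasted_spend(monthly_cost, 0.50)

print(f"one node, 24/7: ${monthly_cost:,.2f}/month")       # $70,790.40/month
print(f"at 30-50% waste: ${low:,.2f} to ${high:,.2f}")     # $21,237.12 to $35,395.20
```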

Traditional platform cost governance was designed for CPU workloads with linear, predictable cost scaling. AI costs are non-linear. A workload that runs at 20% GPU utilisation for three weeks and then scales to 95% for a training run generates a cost spike with no warning signal in standard monitoring. An inference endpoint provisioned for peak demand generates idle GPU cost continuously during off-peak hours.

Platform teams without AI-specific governance — GPU idle detection, token budget caps, agentic workflow kill-switches, inference cost attribution per team — are managing the most expensive infrastructure in their estate with the least appropriate tooling.
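GPU idle detection of the kind listed above could be sketched roughly as follows. This is a minimal illustration, not a production controller: the `UtilSample` type, the 15% threshold, and the 60-minute window are assumptions, and the utilisation samples are presumed to come from whatever GPU metrics source the platform already scrapes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UtilSample:
    minute: int          # minutes since the start of the observation window
    gpu_util_pct: float  # average GPU utilisation of the node pool


def is_sustained_idle(samples: list, threshold_pct: float = 15.0,
                      min_idle_minutes: int = 60) -> bool:
    """True if the most recent samples all sit below the threshold
    for at least min_idle_minutes."""
    if not samples:
        return False
    ordered = sorted(samples, key=lambda s: s.minute)
    latest = ordered[-1].minute
    idle_since = None
    # Walk backwards from the newest sample until utilisation recovers.
    for sample in reversed(ordered):
        if sample.gpu_util_pct >= threshold_pct:
            break
        idle_since = sample.minute
    return idle_since is not None and (latest - idle_since) >= min_idle_minutes


# Hypothetical node pool: busy for 30 minutes, then idle for 90.
pool = [UtilSample(m, 80.0 if m < 30 else 5.0) for m in range(0, 121, 10)]
if is_sustained_idle(pool):
    print("node pool is a scale-down candidate")
```

Requiring a sustained window rather than reacting to a single low reading avoids scaling down a pool that is merely between batches.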

Mechanism 5 — Platform KPIs Reward Speed, Not Financial Efficiency

The DORA metrics — deployment frequency, change failure rate, lead time for changes, mean time to recovery — are the gold standard for measuring platform engineering performance. They are excellent operational metrics. They contain no cost dimension.

A platform team measured exclusively on DORA metrics and developer experience scores has no incentive structure that rewards cost efficiency. The team that ships the fastest IDP wins. The team that ships the most expensive one generates the same performance review outcome.

This misalignment is not an organisational failure — it is an architectural one. Cost efficiency is structurally absent from the measurement model that governs how platform teams make decisions.

“Engineers now see cost as a feature metric, not a finance metric — right alongside latency, error rates, and throughput sits cost per transaction.” — DevOps.com FinOps Meets DevOps, January 2026

This shift — treating cost as a first-class engineering signal alongside performance metrics — is happening in leading organisations. But it requires cost visibility at engineering velocity: cost per deployment surfaced in the same dashboard as error rate, not in a monthly FinOps report that arrives 25 days after the code was deployed.

The insight that repeatedly surprises engineering leaders who implement cost visibility: engineers actually care, once they can see. The assumption that “that’s not my job” is driven by the absence of visibility, not the absence of concern. When cost becomes a real-time engineering signal, it gets treated with the same professionalism as reliability and performance.

Why Traditional FinOps Cannot Govern at Platform Velocity

Traditional FinOps was designed for a different operational cadence:

Traditional FinOps Cycle vs. Platform Engineering Reality

──────────────────────────────────────────────────────────────

| Traditional FinOps | Platform Engineering |
|---|---|
| Monthly billing review | 50+ infrastructure changes daily |
| Quarterly commitment optimisation | GPU costs compounding hourly |
| Annual architecture review | Self-service provisioning in minutes |
| Post-spend analysis | Costs committed at deployment |
| Centralised FinOps team | Distributed engineering autonomy |

──────────────────────────────────────────────────────────────

The structural lag:

Developers deploy → costs incurred instantly

FinOps reviews → 25+ days later

Optimisation → retrospective, after costs are locked in

By the time you see the cost, the architecture that

generated it has already been in production for weeks.

──────────────────────────────────────────────────────────────

The FinOps Foundation’s 2026 analysis confirms the direction the discipline must move: from “optimize after the bill arrives” to shift-left cost forecasting before deployment. Infrastructure reviews should include cost estimates the same way they include security reviews. Engineers should see cost impact during pull request review, not when the monthly bill lands.

Traditional FinOps cannot deliver this because its data pipeline operates at billing cadence — not engineering cadence. By the time FinOps recommendations reach engineering teams, the architecture decisions that generated the cost have already been committed to production, the team is three sprints ahead, and the cost is locked in.

The architectural insight that leading teams have adopted: cost optimization is shifting from FinOps to platform design itself. The platform is not the problem FinOps governs — the platform is where governance must live.

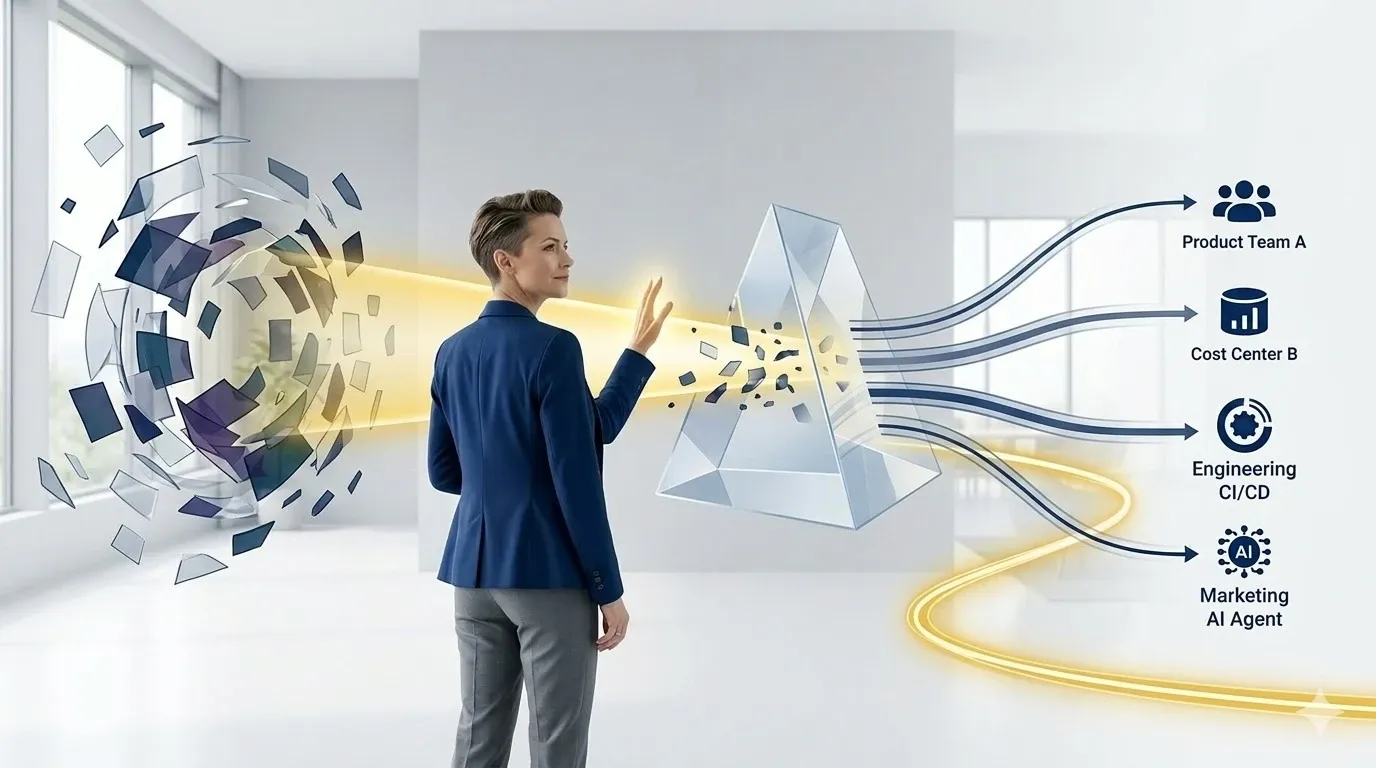

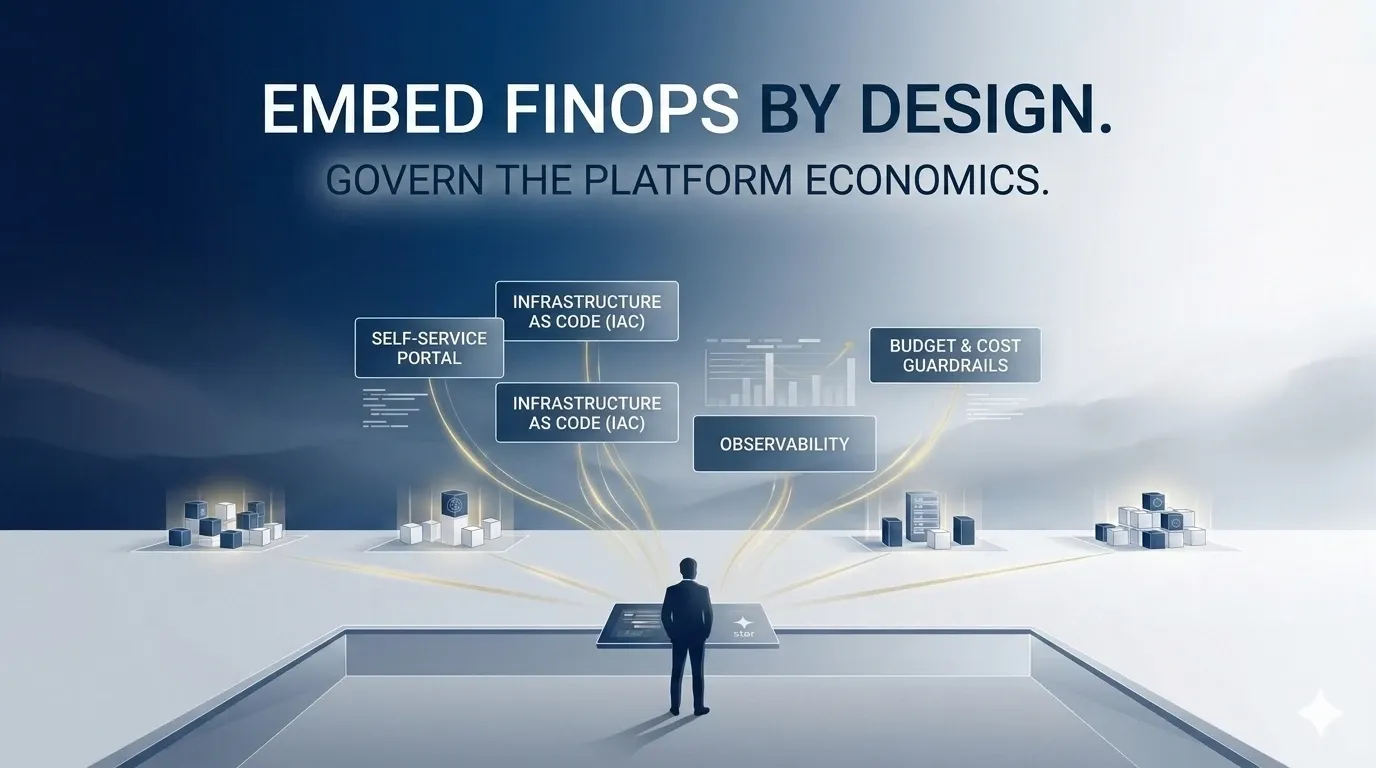

The Transformation: From Shadow Cost Center to Financial Control Plane

The evolution is precise: platform teams must become financial control planes, not cost-reporting recipients. The distinction is architectural, not cultural.

A shadow cost center absorbs costs it does not generate and cannot attribute.

A financial control plane governs costs at the point of origination — embedding visibility, attribution, and enforcement directly into the infrastructure layer where cost decisions are made.

Five capabilities define this transformation:

Capability 1 — Cost Visibility Before Provisioning

The highest-leverage intervention in platform cost governance is the one that prevents expensive decisions from being made without cost context.

Every self-service workflow in the IDP surfaces a projected monthly cost estimate before resource creation. A Kubernetes namespace request shows the monthly cost of the requested node capacity and compares it to the team’s remaining budget. A GPU cluster request shows the hourly rate and projected monthly cost for the configured instance type. A service template shows the relative cost of dev, staging, and production environment configurations.

# Example: IDP cost estimate surfaced at provisioning

# Before the developer clicks "Deploy", they see:

╔══════════════════════════════════════════════════════╗

║ Cost Estimate for: recommendation-engine-prod ║

║ ─────────────────────────────────────────────────── ║

║ Node pool (m5.4xlarge × 3): £4,320/month ║

║ Storage (500GB gp3): £38/month ║

║ Estimated observability overhead: £280/month ║

║ ─────────────── ║

║ Projected total: £4,638/month ║

║ Team Gamma remaining budget: £12,400/month ║

║ Budget after this provisioning: £7,762/month ║

╚══════════════════════════════════════════════════════╝

This is the intervention the FinOps Foundation has named the top desired capability for 2026. It prevents the expensive architectural choice before it is committed to production — rather than explaining it in next month’s cost review.
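The arithmetic behind an estimate panel like the one above is small enough to sketch. The example below assumes the line-item prices have already been looked up by the IDP; the `LineItem` type and `estimate` function are hypothetical names, and the figures are the ones shown in the panel.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LineItem:
    label: str
    monthly_cost: int  # whole pounds per month


def estimate(items: list, remaining_budget: int) -> dict:
    """Summarise projected cost against the team's remaining budget."""
    total = sum(item.monthly_cost for item in items)
    return {
        "projected_total": total,
        "budget_after": remaining_budget - total,
        "within_budget": total <= remaining_budget,
    }


items = [
    LineItem("Node pool (m5.4xlarge x 3)", 4320),
    LineItem("Storage (500GB gp3)", 38),
    LineItem("Estimated observability overhead", 280),
]
result = estimate(items, remaining_budget=12400)
print(result)
```

Returning a `within_budget` flag alongside the totals lets the same function drive both the display and any later decision about whether the request needs approval.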

Capability 2 — Workload-Level Cost Attribution — Automatic, Not Retrospective

Every provisioned resource is attributed to an owning team, product, and cost centre at creation — enforced by the IDP admission layer, not applied in a retrospective tagging campaign.

The practical enforcement mechanism: OPA (Open Policy Agent) or Kyverno admission webhooks in the Kubernetes admission layer reject any namespace, deployment, or service that does not carry the mandatory attribution schema (team, product, environment, cost-centre, workload-type) before any infrastructure is provisioned. No resource enters the billing system without complete financial ownership metadata.
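The check such a webhook performs can be illustrated in plain Python. This is a sketch of the validation logic only, not an OPA or Kyverno policy: the function names are hypothetical, the manifests are simplified dictionaries, and the label keys are the attribution schema named above.

```python
REQUIRED_LABELS = ("team", "product", "environment", "cost-centre", "workload-type")


def missing_attribution(manifest: dict) -> list:
    """Return the required attribution labels that are absent or blank."""
    labels = (manifest.get("metadata") or {}).get("labels") or {}
    missing = []
    for key in REQUIRED_LABELS:
        value = labels.get(key)
        if value is None or not str(value).strip():
            missing.append(key)
    return missing


def admit(manifest: dict) -> tuple:
    """Mimic an admission decision: (allowed, message)."""
    missing = missing_attribution(manifest)
    if missing:
        return False, "rejected: missing attribution labels: " + ", ".join(missing)
    return True, "admitted"


# Hypothetical manifests, reduced to the fields the check needs.
tagged = {"kind": "Namespace", "metadata": {"name": "checkout", "labels": {
    "team": "alpha", "product": "checkout", "environment": "prod",
    "cost-centre": "cc-1234", "workload-type": "service"}}}
untagged = {"kind": "Namespace", "metadata": {"name": "scratch",
                                              "labels": {"team": "alpha"}}}

print(admit(tagged))
print(admit(untagged))
```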

The real-world outcome documented from this model: a fintech firm that unified engineering, finance, and operations dashboards through workload attribution reduced dispute resolution time by 60%. The conversation changed from “why is cloud expensive?” to “Marketing’s AI chatbot costs £X/month and resolved 40% more customer queries — is that a good return?”

Capability 3 — Real-Time Cost as a First-Class Engineering Metric

Cost metrics appear in engineering dashboards at the same prominence as SLOs, DORA metrics, and application performance data:

| Metric (Engineering Dashboard, 2026 Model) | Value | Status |
|---|---|---|
| Deployment frequency | 12/day | ✅ |
| Change failure rate | 2.1% | ✅ |
| P95 API latency | 134ms | ✅ |
| Cost per transaction | £0.00034 | ✅ |
| Namespace spend (this week) | £8,420 | ✅ |
| Budget remaining | £3,980 | ⚠️ |

When cost is a real-time engineering signal — visible on the same dashboard, at the same cadence, with the same alert mechanisms as reliability metrics — engineers treat it as a first-class engineering concern. A streaming service that implemented this model saved £250,000/month in Redis costs by making cache layer cost visible to the teams managing it. The engineers already knew the cache was oversized. They simply had no financial signal to act on until cost became visible in their existing workflow.

Capability 4 — Automated Governance Enforcement — Not Advisory Alerts

Policy-as-code at the platform layer makes non-compliant, over-budget, and inefficient configurations technically difficult to deploy, rather than merely discouraged by advisory alerts.

A global logistics company that implemented this model blocks Kubernetes clusters that exceed predefined cost thresholds before deployment, reducing waste by 15%. The enforcement runs in the CI/CD pipeline: Terraform plan output includes cost impact estimation, and deployments that exceed budget thresholds require explicit override approval rather than silent deployment.
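A pipeline gate of this kind reduces to a small decision function. The sketch below assumes the estimated monthly cost has already been produced by a cost-estimation step earlier in the pipeline; the `Decision` enum, the `gate` function, and the threshold values are hypothetical.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_OVERRIDE = "needs-override"


def gate(estimated_monthly_cost: float, monthly_threshold: float,
         override_approved: bool = False) -> Decision:
    """Allow deployments within the threshold; otherwise require an explicit override."""
    if estimated_monthly_cost <= monthly_threshold or override_approved:
        return Decision.ALLOW
    return Decision.NEEDS_OVERRIDE


def exit_code(decision: Decision) -> int:
    """Map the decision to a CI exit status: non-zero fails the pipeline step."""
    return 0 if decision is Decision.ALLOW else 1


decision = gate(estimated_monthly_cost=14_500.0, monthly_threshold=12_000.0)
print(decision.value, exit_code(decision))
```

Failing the step rather than printing a warning is what turns the check from advisory into enforced; the override flag keeps a deliberate, auditable path for justified exceptions.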

Non-production environment scheduling — enforced through Kubernetes namespace TTL controllers rather than developer discipline — recovers 65–70% of non-production compute hours that are currently idle overnight and at weekends. A £2M non-production estate recovers £1.3–1.4M annually from this single automated governance action.
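The recovery range can be sanity-checked with a short calculation. The sketch assumes a hypothetical schedule in which non-production environments run 11 hours on each of 5 weekdays, and that cost scales linearly with running hours, which ignores storage and other charges that persist while compute is stopped.

```python
HOURS_PER_WEEK = 7 * 24


def idle_fraction(on_hours_per_weekday: float, weekdays: int = 5) -> float:
    """Fraction of the week an environment is switched off under the schedule."""
    running_hours = on_hours_per_weekday * weekdays
    return 1 - running_hours / HOURS_PER_WEEK


def annual_recovery(estate_cost: float, fraction: float) -> float:
    """Annual spend recovered if cost scales linearly with running hours."""
    return estate_cost * fraction


fraction = idle_fraction(on_hours_per_weekday=11)
recovered = annual_recovery(2_000_000, fraction)
print(f"off-hours share: {fraction:.1%}")
print(f"recovered on a 2M estate: {recovered:,.0f} per year")
```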

Capability 5 — AI and GPU Cost Governance at Platform Layer

AI workloads require governance mechanisms that general-purpose platform cost tools were not designed to provide. The platform team that provisions GPU infrastructure without AI-specific governance is managing the most expensive resource in the estate with the least appropriate tooling.

Five AI-specific governance mechanisms belong at the platform layer:

GPU idle detection: per-node-pool utilisation monitoring with automated scale-down when utilisation falls below configurable thresholds (typically 15–20% for sustained periods). Average enterprise GPU utilisation is 20–35% — automated idle detection recovers the 65–80% idle period at hyperscaler GPU billing rates.

Token budget caps: per-team and per-product token budgets for AI API consumption, with automated throttle and suspend actions before monthly thresholds are breached. Not alerts — automated enforcement.

Agentic workflow kill-switches: automated process termination when per-run AI consumption exceeds configured thresholds. The governance mechanism that prevents recursive reasoning loops from generating thousands of pounds per hour before anyone is awake to stop them.

MIG GPU partitioning: Multi-Instance GPU configuration for H100 and A100 node pools, allowing a single GPU to serve multiple independent inference workloads simultaneously — directly addressing the low-utilisation problem that generates idle GPU cost on platforms serving intermittent AI workloads.

Inference cost attribution per team: every model call attributed to the owning team and product through API key governance and request tagging — producing the cost-per-AI-feature visibility that makes AI investment decisions financially legible.
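Two of these mechanisms, token budget caps and agentic kill-switches, share the same enforcement shape: meter consumption, then act automatically at a threshold. The sketch below illustrates that shape under stated assumptions. The class names, the 80% throttle point, and the per-run limit are hypothetical, and a real implementation would sit in an API gateway or agent runtime rather than in application code.

```python
from dataclasses import dataclass


@dataclass
class TokenBudget:
    """Per-team monthly token budget with automated throttle and suspend."""
    team: str
    monthly_limit: int
    used: int = 0
    throttle_at: float = 0.8  # hypothetical: throttle at 80% of the limit

    def record(self, tokens: int) -> str:
        self.used += tokens
        ratio = self.used / self.monthly_limit
        if ratio >= 1.0:
            return "suspend"
        if ratio >= self.throttle_at:
            return "throttle"
        return "allow"


class RunBudgetExceeded(RuntimeError):
    """Raised to terminate an agent run that exceeds its spend limit."""


class RunGuard:
    """Kill-switch for a single agentic run, metered in currency units."""

    def __init__(self, max_cost_per_run: float) -> None:
        self.max_cost_per_run = max_cost_per_run
        self.spent = 0.0

    def charge(self, cost: float) -> None:
        self.spent += cost
        if self.spent > self.max_cost_per_run:
            raise RunBudgetExceeded(
                f"run spent {self.spent:.2f}, limit {self.max_cost_per_run:.2f}")


budget = TokenBudget(team="gamma", monthly_limit=1_000)
actions = [budget.record(500), budget.record(300), budget.record(200)]
print(actions)
```

Calling `RunGuard.charge` after every model call inside an agent loop means a runaway loop stops at the first call that crosses the limit, without waiting for a human to notice.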

The CIO–CFO–Platform Engineering Convergence

Platform Engineering is now the execution layer that sits between two executive mandates that rarely aligned before 2026:

The CIO needs technology strategy executed consistently, at scale, with security and reliability. The IDP is how that happens.

The CFO needs financial governance applied to the fastest-growing cost category in the enterprise. AI and cloud infrastructure is that category.

Platform Engineering is the operational layer where both mandates intersect. Organisations that recognise this convergence and build the governance infrastructure to support it, embedding financial control into the platform layer rather than applying it retrospectively through a separate FinOps function, achieve the combination of outcomes that the research consistently identifies:

- Better cost control: because governance operates at decision time, not after the invoice

- Improved ROI: because costs are attributed to outcomes that can be measured against them

- Faster innovation: because governance that operates through automation rather than approval friction preserves the developer velocity that platform engineering was designed to deliver

The FinOps Foundation’s 2026 data confirms that placing FinOps under the CTO/CIO creates stronger alignment with engineering and platform teams, enabling earlier influence on technology decisions and reinforcing the broader shift-left trend. Platform engineering teams are increasingly part of that alignment as FinOps shifts left into development workflows.

The Federated Governance Model: What Winning Organisations Are Building

The governance model that mature organisations have converged on in 2026 is the federated FinOps model: a small central FinOps team (typically two to four people) that sets standards, defines policies, and owns ESR (Effective Savings Rate) measurement, combined with embedded cost practitioners within engineering and platform teams who own day-to-day cost accountability.

62% of mature FinOps organisations use this hybrid structure. It balances standardisation with engineering autonomy — the central team cannot scale through headcount alone, so it scales through enablement, automation, and embedded champions.

For platform teams, the federated model translates to three embedded responsibilities:

Embedded financial engineering: a platform engineer (or a platform-embedded FinOps practitioner) who owns cost-as-platform-capability — building cost estimates into IDP templates, enforcing attribution through admission controls, and surfacing cost metrics in engineering dashboards. This person is not a FinOps analyst who reviews platform bills. They are a platform engineer who ships cost governance as a platform feature.

Weekly team-level cost reviews: at the engineering team level (not the FinOps team level), weekly review of actual spend versus team budget. Each team sees their spend, owns the explanation, and owns the action. The FinOps Foundation’s recommended cadence: weekly team reviews, monthly commitment purchasing reviews, quarterly architecture reviews with cost as an explicit criterion.

Cost as a performance review dimension: including FinOps activities in performance reviews alongside feature delivery. The question that practitioners are asking in 2026: “Once you fix it, it’s gone — how do we give engineers credit for shift-left cost governance activities?” The answer: make cost efficiency a tracked performance dimension, not an invisible benefit that nobody receives credit for.

DigiUsher: Turning Platform Teams Into Financial Control Planes

DigiUsher’s FinOps Operating System provides the integrated governance layer that transforms platform teams from default cost absorbers into active financial control planes.

Cost-aware self-service at provisioning time — projected monthly cost estimates surfaced inline in IDP workflows and Backstage service templates before resources are created. The pre-deployment cost signal that the State of FinOps 2026 identifies as the #1 desired platform capability.

Workload-level attribution enforcement — FOCUS 1.x normalised cost attribution at the namespace, service, and team level across EKS, AKS, GKE, and OKE simultaneously. Mandatory attribution schema enforced through IDP admission controls — no resource enters the billing system without complete financial ownership metadata.

Real-time cost as a platform metric — cost-per-workload, cost-per-service, cost-per-deployment surfaced in engineering dashboards alongside DORA metrics, not in separate monthly FinOps reports. The same operational signal cadence as latency, error rate, and throughput.

Automated policy enforcement — non-production environment scheduling through Kubernetes namespace TTL controllers, resource limit enforcement through admission webhooks, budget threshold triggers with automated throttle and suspend. Governance that enforces rather than advises.

AI and GPU cost governance — GPU idle detection, token budget caps, agentic workflow kill-switches, MIG partitioning recommendations, and per-chain inference attribution. The AI-specific governance layer that platform teams managing GPU infrastructure need and that general-purpose cloud cost tools cannot provide.

Shared cost allocation models — proportional and direct attribution of shared infrastructure costs to consuming teams based on actual usage metrics. Eliminating the default absorption pattern that makes platform teams look like expensive cost centres when they are actually efficient shared service providers.

Available as SaaS or BYOC for regulated industries. SOC 2® Type II and GDPR certified. Delivered globally through Infosys, Wipro, and Hexaware. AWS ISV Accelerate Partner listed on AWS Marketplace.

Platform teams are not the problem. The absence of financial control at the platform layer is. The organisations that solve this — embedding cost governance into the platform infrastructure itself rather than applying it retrospectively — will have platforms that are not just fast. They will be financially intelligent.

Frequently Asked Questions

Why are platform teams becoming cost centers in 2026?

Platform teams have become cost centers through five structural mechanisms operating simultaneously. Self-service removes the cost signal at decision time — developers provision instantly without seeing the financial consequence. Cloud billing breaks at the platform abstraction layer — Kubernetes clusters generate one billing line item regardless of how many services consume them. Shared infrastructure creates default cost absorption — without attribution, shared costs land in the platform team’s budget by default. AI workloads are exploding platform costs non-linearly — GPU infrastructure, inference endpoints, and agentic workflows generate costs that platform teams’ existing governance models were not designed for. And platform KPIs reward speed over financial efficiency — DORA metrics contain no cost dimension, so there is no incentive structure that rewards cost governance.

What is a platform team financial control plane and how does it differ from traditional FinOps?

A platform team financial control plane embeds cost governance directly into the Internal Developer Platform infrastructure — surfacing cost estimates at provisioning, enforcing attribution at resource creation, and automating governance actions before cost accumulates. Traditional FinOps operates retrospectively at billing cadence: monthly cost reviews, quarterly commitment reviews, recommendations that arrive weeks after the architectural decisions that generated the cost. A financial control plane operates at platform velocity: cost context at decision time, attribution at provisioning time, automated enforcement at usage time. The FinOps Foundation’s 2026 data names this shift — pre-deployment architecture costing — as the top desired tooling capability among practitioners. The financial control plane is the operational model that delivers it.

What does it cost enterprises when platform teams lack financial governance?

78% of companies waste 21–50% of cloud spend — with platform-managed shared infrastructure absorbing the largest share. 30–50% of GPU spend is wasted on over-provisioned AI resources. 65–70% of non-production compute hours are idle overnight and at weekends on every platform. An enterprise with £10M in annual cloud spend may be generating £2.1–5M in platform-related waste annually — from overprovisioned clusters, idle AI infrastructure, unattributed shared costs, and ungoverned self-service provisioning. Without governance, AI-driven cloud cost growth is projected to cause enterprises to overspend by 30% or more.

What is the federated FinOps model and how does it work for platform teams?

The federated FinOps model pairs a small central FinOps team (two to four people) that sets standards and owns ESR measurement with embedded cost practitioners within engineering and platform teams who own day-to-day accountability. 62% of mature FinOps organisations use this hybrid structure. For platform teams, it means a platform engineer who owns cost-as-a-platform-feature — building cost estimates into IDP templates, enforcing attribution through admission controls, and surfacing cost in engineering dashboards. Weekly team-level cost reviews replace monthly FinOps reports. Cost becomes a performance review dimension alongside feature delivery. The central team cannot scale through headcount alone — it scales through enablement, automation, and embedded champions.

How does AI change the cost governance challenge for platform teams?

AI changes platform cost governance in three material ways. First, cost non-linearity: GPU costs and token-based billing scale orders of magnitude faster than CPU workloads, and a single GPU node running idle costs the same as one at 95% utilisation. Second, cost velocity: an AI agent in a recursive reasoning loop can generate thousands of pounds per hour before anyone is aware, and monthly billing cycles cannot govern this. Third, attribution complexity: AI inference costs require attribution at the model, team, and product level, which the workload-level and namespace-level attribution of standard Kubernetes cost tools cannot provide for token-based billing. Platform teams need AI-specific governance mechanisms: GPU idle detection, token budget caps, agentic kill-switches, and inference attribution per team.

How does DigiUsher help platform teams become financial control planes?

DigiUsher’s FinOps OS provides five capabilities that transform platform teams from cost absorbers into financial control planes:

- Cost-aware self-service: projected monthly cost estimates in IDP workflows before resources are created, the #1 desired FinOps capability in the 2026 survey

- Workload-level attribution: FOCUS 1.x normalised attribution at namespace, service, and team level across EKS, AKS, GKE, and OKE, enforced through IDP admission controls

- Real-time cost as a platform metric: cost-per-workload in engineering dashboards alongside DORA metrics

- Automated governance: non-production scheduling, resource limit enforcement, and budget threshold triggers

- AI and GPU governance: GPU idle detection, token budget caps, agentic kill-switches, and per-chain inference attribution

What results do platform teams achieve when they implement financial control plane governance?

Documented outcomes from 2026 implementations include a global logistics company that blocked Kubernetes clusters exceeding cost thresholds before deployment, reducing waste by 15%; a streaming service that saved £250,000/month in Redis costs by making cache-layer cost visible to engineering teams; and a fintech firm that cut cost dispute resolution time by 60% by unifying engineering, finance, and operations dashboards. Non-production environment scheduling consistently recovers 65–70% of non-production compute hours across all platforms. DigiUsher customers report up to 20% additional savings above traditional cloud cost tool baselines, with the platform paying for itself in under 90 days.
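The 65–70% scheduling figure is consistent with environments that only need to run during weekday working hours. A minimal sketch of the arithmetic, assuming the environment is fully switched off outside the schedule; the schedules shown are assumptions, not figures from the implementations above:

```python
HOURS_PER_WEEK = 7 * 24  # 168


def recoverable_share(hours_per_day: int, days_per_week: int) -> float:
    """Fraction of weekly hours a non-production environment can be switched off."""
    running = hours_per_day * days_per_week
    return (HOURS_PER_WEEK - running) / HOURS_PER_WEEK


# Compare three weekday schedules of 10, 11, and 12 running hours per day.
for hours in (10, 11, 12):
    share = recoverable_share(hours, 5)
    print(f"{hours}h x 5 days: {share:.1%} of hours recoverable")
```

Running ten or eleven hours a day on five days leaves roughly two thirds or more of the week recoverable; a twelve-hour schedule falls just under 65%.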

References

- FinOps Foundation — State of FinOps 2026

- AI Infra Link — How Platform Engineering Impacts Resource Costs in 2026

- Platform Engineering.org — 10 FinOps Tools Platform Engineers Should Evaluate for 2026

- DevOps.com — FinOps Meets DevOps: Engineering Cost Ownership in 2026 (January 2026)

- byteiota — FinOps 2026 Implementation Guide: Cut Cloud Costs 30–50%

- codelynks — FinOps in 2026: Best Ways to Cut Cloud Waste by 30–40%

- Webvillee — FinOps Operating Model 2026: Roles, KPIs & Accountability (March 2026)

- turbogeek.co.uk — FinOps for DevOps Engineers: Making Cloud Cost Part of Your Pipeline

- FinOps Foundation — Managing Shared Cloud Costs

- FinOps Foundation — FOCUS Specification

- Gartner — Platform Engineering Trends Report 2026

- TechRadar — When Cloud Growth Outpaces Control: Waste Follows

- Forbes Tech Council — Cloud Cost Management in 2026

- thetechmasterpros.com — Why Cloud Cost Optimisation Is Moving from FinOps to Platform Engineering

Stop Absorbing Cost. Start Controlling It.

Platform teams are not the problem. The absence of financial governance at the platform layer is.

DigiUsher’s FinOps OS embeds cost visibility, attribution enforcement, and automated governance directly into the platform infrastructure, at provisioning time rather than invoice time, turning self-service from a cost risk into a governed financial capability.

