The Rise of Platform Engineering: Why FinOps Must Be Embedded by Design

Gartner projects that 80% of software engineering organisations will have dedicated platform teams by 2026, up from 45% in 2022. But Gartner's own data confirms the critical gap: Internal Developer Platforms optimise for speed, not cost. This briefing explains why FinOps embedded at the platform layer, not bolted on after deployment, is the only governance model that prevents frictionless self-service from becoming frictionless overspend.

Author: DigiUsher | Read time: 18 min

From self-service infrastructure to financially accountable platforms in the age of AI and multi-cloud

- Gartner projects 80% of software engineering organisations will have dedicated platform teams by 2026 — up from 45% in 2022 and 55% in 2025

- IDPs deliver software 40% faster with a nearly 50% reduction in operational overhead

- Organisations with mature platforms achieve 3.5× higher deployment frequency and 4× shorter lead times — DORA 2025

The business case for Internal Developer Platforms is proven. The financial governance model to prevent them from accelerating cost at the same rate they accelerate delivery is not.

“Your developers just shipped a Kubernetes service that will cost $47,000 a month to run. They found out three weeks after deployment when finance flagged the anomaly. By then, the architecture was locked, refactoring would delay the roadmap by a quarter — and everyone was pointing fingers.” — Cloud Native Now, KubeCon Europe 2026

This scenario plays out constantly in organisations where FinOps and platform engineering operate as parallel disciplines. The platform optimises for developer velocity. FinOps optimises for cost efficiency. The two functions produce the most expensive possible outcome when they operate independently: a fast platform generating ungoverned spend at platform scale.

At KubeCon Europe 2026 Platform Engineering Day, one theme dominated:

“We’ve solved developer experience. Now we must solve economic efficiency.”

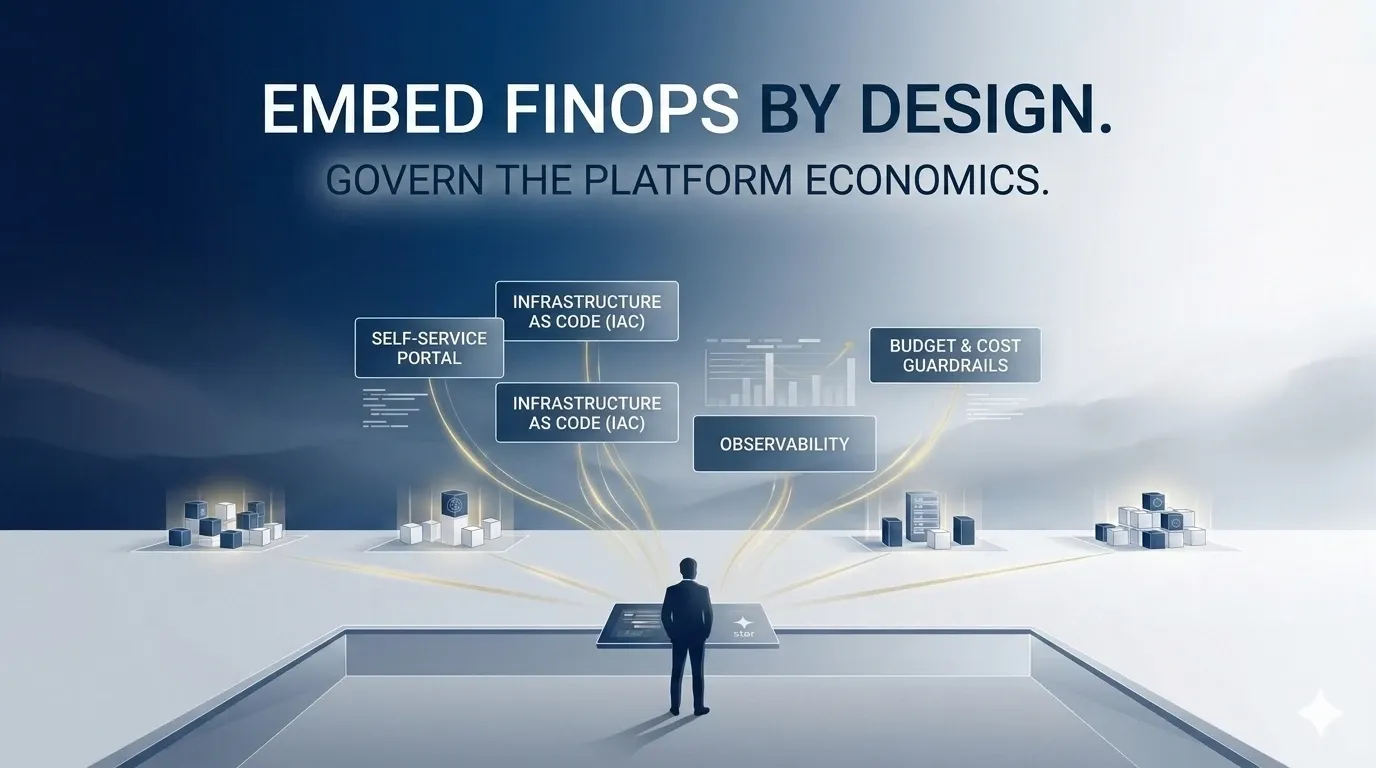

This briefing explains what solving economic efficiency looks like operationally — the five capabilities that transform an IDP from a delivery engine into a financially governed system, and why FinOps must be embedded at the platform layer by design rather than applied as a reporting overlay after spend has already been committed.

What Is Platform Engineering FinOps?

Platform Engineering FinOps is the practice of embedding financial governance — cost visibility, attribution, guardrails, and automated optimisation — directly into Internal Developer Platform workflows and Kubernetes infrastructure at provisioning time.

It is distinct from both traditional FinOps and standard platform engineering in a critical operational respect:

| Traditional FinOps | Platform Engineering FinOps |
|---|---|
| Reports on past spend | Governs spend at the moment it is created |
| Monthly billing data | Real-time provisioning cost signals |
| Retrospective attribution | Mandatory attribution enforced at admission |
| FinOps team recommendations | Platform-enforced policy-as-code |
| Developer awareness via separate dashboards | Cost visible inline in developer workflow |
| AI costs discovered at month-end | AI token budgets enforced at API key creation |

The State of FinOps 2026 identifies the structural shift that makes this model necessary: Platform Engineering is increasingly participating in “shift left” conversations — and FinOps teams are building pricing calculators and offering pre-deployment guidance. But incentive structures have not caught up.

The gap between intent and implementation is the financial risk. Every day a platform provisions resources without embedded cost governance is a day the IDP delivers velocity without accountability — accelerating the inefficiency it was designed to eliminate.

The Acceleration Paradox: Speed Without Financial Discipline

Internal Developer Platforms introduce a paradox that is structurally predictable but rarely anticipated:

The same capabilities that make IDPs valuable — frictionless self-service, instant provisioning, abstracted infrastructure — are precisely the capabilities that remove cost signals from engineering decision-making.

A developer provisioning a new service through an IDP experiences:

- Zero-friction environment creation

- Instant cluster access

- Pre-configured golden paths

- No cost estimate

- No budget constraint

- No tagging requirement

- No attribution validation

The IDP has successfully eliminated infrastructure friction. It has simultaneously eliminated every financial governance checkpoint that previously existed in the provisioning workflow.

The financial consequence: frictionless consumption leads to uncontrolled spend. Not through rogue behaviour — through the perfectly rational execution of the IDP’s design objective. Speed without governance is not an efficiency gain. At platform scale, it is a financial risk multiplier.

“FinOps will move from reactive dashboards to preventive controls, and this shift is non-negotiable. Platform teams without cost gates will face budget overruns, and overruns mean lost funding.” (byteiota, Platform Engineering ROI in 2026)

The organisations that discover this most painfully are those that deploy IDPs for 12–18 months, achieve excellent DORA metrics, and then present a cloud bill to the CFO that has grown at exactly the rate of developer adoption. The IDP worked. The governance didn’t.

Why FinOps Cannot Be a Reporting Overlay on a Platform

The conventional FinOps model — monthly cost reports, quarterly optimisation reviews, retrospective tagging campaigns — fails structurally in platform-driven environments for three reasons:

The timing mismatch. Cloud billing data typically arrives hours to a day after usage, and FinOps reporting cycles are monthly. The platform generates spend decisions continuously; the governance signal arrives weeks later. By the time cost is reported, resources are provisioned, inefficiencies are embedded in architecture, and the engineering behaviour that created the cost has already moved on to the next sprint. Reporting on last month’s spend cannot govern this month’s provisioning decisions.

The abstraction gap. Platform engineering abstracts infrastructure behind APIs, service templates, and golden paths. The Kubernetes namespace, the AKS cluster, the GPU node pool — all provisioned through the platform interface with no mandatory cost ownership metadata unless the platform explicitly enforces it. Finance receives a cloud invoice. The IDP shows nothing. Neither system can answer who owns the workload or whether it is generating return proportionate to its cost.

The AI cost acceleration. Platform engineering is now the provisioning layer for AI infrastructure — Azure OpenAI endpoints, AWS Bedrock integrations, Vertex AI ML pipelines, and GPU Kubernetes clusters. GPU-intensive workloads now account for 18% of total cloud spend at AI-forward enterprises — up from 4% in 2023. AI costs are non-linear and volatile: inference loads spike unpredictably, token-based billing scales with usage complexity, and agentic workflows can multiply costs 5–30× beyond chatbot-level baselines. A monthly FinOps report cannot govern this dynamic. Only real-time platform-layer governance can.
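A rough sketch of why token-based billing resists monthly review: the function below estimates monthly spend for the same traffic served as a single chatbot-style call and as an agentic chain. The per-token prices, the traffic volumes, and the use of one flat multiplier to stand in for the 5–30× range are illustrative assumptions, not rates from any provider.

```python
from dataclasses import dataclass


@dataclass
class TokenPricing:
    # Illustrative prices in USD per 1,000 tokens (assumed, not a real rate card).
    input_per_1k: float
    output_per_1k: float


def monthly_token_cost(requests_per_day: int, input_tokens: int, output_tokens: int,
                       pricing: TokenPricing, multiplier: float = 1.0,
                       days: int = 30) -> float:
    """Estimate monthly token spend for a workload.

    `multiplier` approximates the extra model calls an agentic chain makes per task
    (planning, tool calls, re-prompting); 1.0 means one chatbot-style call per request.
    """
    per_call = (input_tokens / 1000) * pricing.input_per_1k \
        + (output_tokens / 1000) * pricing.output_per_1k
    return requests_per_day * days * per_call * multiplier


pricing = TokenPricing(input_per_1k=0.003, output_per_1k=0.015)
chatbot = monthly_token_cost(10_000, 1_000, 300, pricing)
agentic_5x = monthly_token_cost(10_000, 1_000, 300, pricing, multiplier=5)
agentic_30x = monthly_token_cost(10_000, 1_000, 300, pricing, multiplier=30)
print(f"chatbot ${chatbot:,.0f}, 5x agentic ${agentic_5x:,.0f}, "
      f"30x agentic ${agentic_30x:,.0f} per month")
```

With these assumed figures the chatbot baseline is about $2,250 a month, while the 30× chain spends roughly that amount every day, which is why a monthly report surfaces the problem far too late.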

FinOps by Design: Five Capabilities That Change the Model

Capability 1 — Cost-Aware Self-Service: Developers See Cost Before They Commit

The problem it solves: architecture decisions that determine monthly billing are made with performance, latency, and reliability as inputs. Cost is not in the room at decision time.

What it looks like operationally: every self-service workflow in the IDP surfaces a cost estimate before resource creation. Kubernetes namespace provisioning shows the monthly cost of requested node capacity. GPU cluster requests show hourly cost for H100 versus A100 configurations. Environment templates show relative monthly cost for dev, staging, and production profiles. The developer makes the architecture decision with full economic context — not three weeks after deployment when finance flags the anomaly.

Implementation: pre-deployment costing integrated into Backstage service templates, Crossplane compositions, and Terraform module catalogues. Cost estimates generated from FOCUS-normalised, real-time pricing data and updated inline as configuration options change.
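A minimal sketch of the estimate a service template could render beside each configuration option. The hourly rates are invented placeholders; a real IDP would read them from FOCUS-normalised pricing data. The 730-hour month is a common billing convention (8,760 hours divided by 12), and the function name `estimate_monthly_cost` is hypothetical.

```python
from typing import Optional

HOURS_PER_MONTH = 730  # 8,760 hours per year / 12, a common monthly pricing convention

# Placeholder on-demand rates in USD (assumptions, not real provider prices).
VCPU_HOUR = 0.04
GIB_HOUR = 0.005
GPU_HOUR = {"A100": 3.00, "H100": 6.00}


def estimate_monthly_cost(vcpus: float, memory_gib: float, gpus: int = 0,
                          gpu_type: Optional[str] = None,
                          hours: float = HOURS_PER_MONTH) -> float:
    """Projected monthly cost of a requested capacity profile, rounded to cents."""
    hourly = vcpus * VCPU_HOUR + memory_gib * GIB_HOUR
    if gpus:
        if gpu_type not in GPU_HOUR:
            raise ValueError(f"no price available for GPU type {gpu_type!r}")
        hourly += gpus * GPU_HOUR[gpu_type]
    return round(hourly * hours, 2)


# Side-by-side figures a template could refresh as the developer changes options.
options = {
    "dev: 4 vCPU / 16 GiB": dict(vcpus=4, memory_gib=16),
    "prod: 16 vCPU / 64 GiB": dict(vcpus=16, memory_gib=64),
    "ml: 8 vCPU / 64 GiB / 1x A100": dict(vcpus=8, memory_gib=64, gpus=1, gpu_type="A100"),
    "ml: 8 vCPU / 64 GiB / 1x H100": dict(vcpus=8, memory_gib=64, gpus=1, gpu_type="H100"),
}
for label, spec in options.items():
    print(f"{label:32} ${estimate_monthly_cost(**spec):>10,.2f}/month")
```

Raising on an unpriced GPU type, rather than silently estimating zero, keeps the template from showing a misleadingly cheap option.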

The State of FinOps 2026 identifies pre-deployment architecture costing as the top desired tooling capability among FinOps practitioners. But only the organisations embedding it into the IDP workflow achieve it consistently. Linking to a separate FinOps dashboard from the IDP does not count — if developers must leave their workflow for cost information, they will not seek it.

Capability 2 — Built-In Guardrails via Policy-as-Code

The problem it solves: financial policies enforced through documentation, developer education, and approval workflows that operate outside the platform layer — creating governance friction that engineers route around.

What it looks like operationally: resource requests above configurable ceilings are blocked at provisioning. GPU instance types outside approved tiers require explicit approval workflows triggered within the IDP. Environments without mandatory attribution tags (team, product, cost-centre, environment, workload-type) cannot be created — the IDP admission controller rejects the request before any infrastructure is provisioned.

Platform enforces:

- CPU and memory request ceilings per namespace tier

- Approved GPU instance type lists with cost tier classification

- Mandatory tagging schema enforced before resource creation

- Environment TTLs (time-to-live) that automatically terminate non-production resources outside business hours

- Token budget caps on AI API keys at provisioning

Implementation: OPA (Open Policy Agent) or Kyverno integrated into the IDP admission layer. Policy definitions maintained as code in the IDP repository, version-controlled alongside platform configuration, and applied consistently across every provisioning path.
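In production these rules would be written as Rego for OPA or as Kyverno policies. The Python sketch below only models the decision an admission webhook would return, so the logic is easy to follow. The tier ceilings, the approved GPU list and its cost tiers, and the label values are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

REQUIRED_LABELS = ("team", "product", "cost-centre", "environment", "workload-type")

# Hypothetical per-tier ceilings for a namespace: (CPU cores, memory in GiB).
TIER_CEILINGS = {"small": (4, 16), "medium": (16, 64), "large": (64, 256)}

# Hypothetical approved GPU types, each tagged with a cost tier.
APPROVED_GPUS = {"T4": "low", "L4": "low", "A100": "high"}


@dataclass
class ProvisionRequest:
    labels: dict
    tier: str
    cpu: float
    memory_gib: float
    gpu_type: Optional[str] = None


def admit(req: ProvisionRequest) -> Tuple[str, List[str]]:
    """Return ("deny" | "approval" | "allow", reasons) before anything is created."""
    reasons = [f"missing label: {name}" for name in REQUIRED_LABELS
               if not req.labels.get(name)]
    ceiling = TIER_CEILINGS.get(req.tier)
    if ceiling is None:
        reasons.append(f"unknown namespace tier: {req.tier}")
    else:
        max_cpu, max_mem = ceiling
        if req.cpu > max_cpu:
            reasons.append(f"cpu {req.cpu} exceeds {req.tier} ceiling of {max_cpu}")
        if req.memory_gib > max_mem:
            reasons.append(f"memory {req.memory_gib}Gi exceeds {req.tier} ceiling of {max_mem}Gi")
    if reasons:
        return "deny", reasons
    if req.gpu_type is not None and req.gpu_type not in APPROVED_GPUS:
        # Outside the approved list: route to an approval workflow instead of denying.
        return "approval", [f"GPU type {req.gpu_type} is not on the approved list"]
    return "allow", []


full = {"team": "payments", "product": "checkout", "cost-centre": "cc-1042",
        "environment": "dev", "workload-type": "api"}
print(admit(ProvisionRequest(full, "medium", cpu=8, memory_gib=32)))
print(admit(ProvisionRequest({"team": "payments"}, "medium", cpu=8, memory_gib=32)))
print(admit(ProvisionRequest(full, "large", cpu=32, memory_gib=128, gpu_type="H100")))
```

Hard violations (missing labels, oversized requests) are checked first, so an unapproved GPU request that is also untagged is denied outright rather than sent for approval.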

Google’s “shift down” principle, introduced at PlatformCon 2025, captures this precisely: embed decisions and responsibilities into underlying platforms, making non-compliant deployments not merely discouraged but technologically impossible. Policy-as-code at the platform layer makes this structural rather than aspirational.

Capability 3 — Real-Time Cost as a First-Class Platform Metric

The problem it solves: cost is a lagging indicator, reported monthly by a separate FinOps function, disconnected from the engineering metrics that developers and platform teams actually optimise for.

What it looks like operationally: cost metrics are exposed in developer dashboards at the same level of prominence as SLOs, DORA metrics, and application performance data. Teams see their daily spend trajectory, namespace cost efficiency, GPU utilisation rate, cost-per-deployment, and projected monthly bill — alongside the metrics they already monitor continuously.
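The projected monthly bill can start as a simple run-rate projection. This sketch adds month-to-date spend to a trailing seven-day average applied to the remaining days; the window length and the linear model are assumptions, and a production forecast would likely also account for weekly seasonality and scheduled changes.

```python
import calendar
from typing import List


def projected_month_end(daily_spend: List[float], year: int, month: int,
                        window: int = 7) -> float:
    """Run-rate projection of the month's total bill.

    `daily_spend` holds one cost figure per elapsed day, starting on day 1.
    Remaining days are assumed to cost the average of the last `window` days.
    """
    days_in_month = calendar.monthrange(year, month)[1]
    elapsed = len(daily_spend)
    if not 0 < elapsed <= days_in_month:
        raise ValueError("daily_spend must cover between 1 day and the whole month")
    recent = daily_spend[-window:]
    run_rate = sum(recent) / len(recent)
    return sum(daily_spend) + run_rate * (days_in_month - elapsed)


# Fourteen days into June 2026: spend stepped up from $100/day to $150/day on day 11.
spend = [100.0] * 10 + [150.0] * 4
print(f"projected June bill: ${projected_month_end(spend, 2026, 6):,.2f}")
```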

The UK bank case study: One engineering organisation implemented this model by treating cloud spend as a real-time P&L — published on the same leaderboard as trading performance metrics, tied to cost efficiency bonuses. Every pound of cloud spend had an owner. Cost-per-transaction (£0.00034 per payment) was visible to engineers. Result: annual AWS spend reduced from £9.4M to £5.6M — a 40% reduction — with the same workload, same regulatory requirements, and zero SLA degradation.

Implementation: OpenCost or an equivalent Kubernetes-native cost attribution tool integrated into the IDP observability stack. Cost data is normalised to FOCUS 1.x and surfaced in Backstage, Grafana, or custom developer portals alongside existing telemetry, while GitOps deployment pipelines detect cost regressions before changes reach production.
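One way a pipeline might express cost regression detection is a gate that compares the projected monthly cost of a change with the currently deployed baseline. The thresholds (a 10% relative increase plus a $100 absolute floor, so small services are not blocked over trivial amounts) are assumptions to tune per organisation, and how the two cost figures are produced is out of scope here.

```python
from typing import Tuple


def check_cost_regression(baseline_monthly: float, proposed_monthly: float,
                          max_increase_pct: float = 10.0,
                          min_delta: float = 100.0) -> Tuple[bool, str]:
    """Return (passes, message) for a proposed change's projected monthly cost.

    A change fails only when the increase is both above `min_delta` in absolute
    terms and above `max_increase_pct` relative to the baseline.
    """
    delta = proposed_monthly - baseline_monthly
    if delta <= min_delta:
        return True, f"cost change {delta:+,.2f}/month is within the absolute allowance"
    pct = float("inf") if baseline_monthly == 0 else 100.0 * delta / baseline_monthly
    if pct > max_increase_pct:
        return False, f"cost regression: {delta:+,.2f}/month ({pct:.1f}% over baseline)"
    return True, f"cost change {delta:+,.2f}/month ({pct:.1f}%) within threshold"


ok, message = check_cost_regression(baseline_monthly=4_200, proposed_monthly=5_900)
print(ok, message)
# A CI job would exit non-zero when `ok` is False, failing the pipeline before merge.
```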

Capability 4 — Automated Optimisation as Platform Infrastructure

The problem it solves: rightsizing, bin packing, and GPU lifecycle management treated as periodic FinOps review exercises — generating recommendations that compete with delivery priorities for engineering bandwidth.

What it looks like operationally: workload optimisation is a platform capability, not a human exercise. VPA recommendations surface automatically within the IDP developer workflow. Cluster bin packing is governed by scheduler policies enforced continuously. Environment TTLs terminate non-production infrastructure on automated schedules. GPU idle detection triggers scale-down with configurable thresholds — without requiring manual FinOps intervention.
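A minimal sketch of GPU idle detection, assuming utilisation readings (0–100%) are already scraped at a fixed interval for each GPU node. The 5% threshold and six-sample window (30 minutes at 5-minute scrapes) are assumptions; the scale-down action itself would be a separate call to the cluster autoscaler or node-pool API and is not shown.

```python
from typing import Dict, List


def idle_gpu_nodes(readings: Dict[str, List[float]], threshold_pct: float = 5.0,
                   window: int = 6) -> List[str]:
    """Names of nodes whose last `window` utilisation readings are all below
    `threshold_pct`. Nodes with fewer readings than `window` are not judged yet."""
    idle = []
    for node, samples in readings.items():
        recent = samples[-window:]
        if len(recent) == window and max(recent) < threshold_pct:
            idle.append(node)
    return sorted(idle)


readings = {
    "gpu-node-a": [82, 77, 3, 1, 0, 0, 2, 1],  # busy earlier, idle for the last six samples
    "gpu-node-b": [64, 70, 58, 66, 71, 69],    # steadily busy
    "gpu-node-c": [0, 0, 1],                   # too few samples to decide
}
print("scale-down candidates:", idle_gpu_nodes(readings))
```

Requiring a full window of low readings, rather than a single low sample, avoids scaling down a node during a brief pause between training steps or inference bursts.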

Implementation pattern:

```yaml
# Example: platform-enforced environment TTL policy
# All non-production namespaces with a TTL annotation are automatically
# terminated by the platform controller at 18:00 local time and reprovisioned at 08:00.
# EnvironmentPolicy (platform.internal/v1) is an internal custom resource,
# not a built-in Kubernetes API.
apiVersion: platform.internal/v1
kind: EnvironmentPolicy
metadata:
  name: non-prod-ttl-governance
spec:
  selector:
    environment:
      - dev
      - staging
  ttl:
    enabled: true
    shutdownTime: "18:00"
    startupTime: "08:00"
    weekends: false  # No automatic restart on Saturday or Sunday
  costSaving:
    estimatedMonthlyReduction: "auto"
  alertOnException: true
```

Impact: IDPs enforcing automated FinOps controls deliver 30–40% cloud cost reduction. Platform-native optimisation converts the FinOps team from a reactive reporting function into a strategic governance capability, enabling small central teams to govern large, distributed engineering organisations without linear headcount growth.
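The schedule in the EnvironmentPolicy example reduces to a small decision a platform controller could evaluate on each reconcile. This sketch assumes the field semantics implied by the example's comments (run between `startupTime` and `shutdownTime` local time; stay down at weekends when `weekends` is false) and a naive `datetime` already expressed in the environment's local time zone. It is not the API of any specific controller.

```python
from datetime import datetime, time


def should_be_running(now: datetime, startup: str = "08:00",
                      shutdown: str = "18:00", weekends: bool = False) -> bool:
    """True if a non-production environment should be up at local time `now`."""
    if now.weekday() >= 5 and not weekends:  # 5 = Saturday, 6 = Sunday
        return False
    start, stop = time.fromisoformat(startup), time.fromisoformat(shutdown)
    current = now.time()
    if start <= stop:
        return start <= current < stop
    return current >= start or current < stop  # window that spans midnight


# 10 June 2026 is a Wednesday; 13 June 2026 is a Saturday.
for moment in (datetime(2026, 6, 10, 7, 30), datetime(2026, 6, 10, 12, 0),
               datetime(2026, 6, 10, 18, 0), datetime(2026, 6, 13, 12, 0)):
    state = "running" if should_be_running(moment) else "stopped"
    print(moment.strftime("%a %H:%M"), "->", state)
```

The controller would then compare this desired state with the namespace's actual state and scale workloads down or up only when the two differ.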

Capability 5 — Accountability by Default: Every Workload Has an Owner

The problem it solves: attribution deployed as a documentation requirement, not a technical enforcement — creating permanent tagging gaps that prevent cost-to-business-outcome reconciliation.

What it looks like operationally: attribution is mandatory at provisioning, enforced by the platform layer before any resource is created. The IDP service template requires team, product, environment, cost-centre, and workload-type labels as non-optional fields. The admission controller validates these labels before creating the namespace, cluster, or API key. No untagged resource enters the billing system.

The governance consequence: when accountability is automatic and universal:

- Finance can reconcile the cloud bill against business outcomes at the cost-centre and product level

- Platform teams can identify the highest-cost workloads by owner without retrospective audit

- FinOps teams can produce chargeback reports automatically without manual attribution

- CIOs can present technology ROI by product line — not by infrastructure category
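Once every resource carries mandatory labels, chargeback becomes an aggregation rather than a reconciliation exercise. The sketch groups cost line items by the `cost-centre` label and reports attribution coverage as the share of spend that has an owner. The line-item shape is a hypothetical simplification of billing-export rows that carry resource labels.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def chargeback(line_items: List[dict], key: str = "cost-centre") -> Tuple[Dict[str, float], float]:
    """Sum cost per value of the `key` label.

    Items without the label are grouped under "unattributed". Returns the
    totals and the percentage of total spend that is attributed.
    """
    totals: Dict[str, float] = defaultdict(float)
    for item in line_items:
        owner = item.get("labels", {}).get(key) or "unattributed"
        totals[owner] += item["cost"]
    grand_total = sum(totals.values())
    attributed = grand_total - totals.get("unattributed", 0.0)
    # With no spend at all there is nothing to attribute, so report full coverage.
    coverage = 100.0 * attributed / grand_total if grand_total else 100.0
    return dict(totals), coverage


items = [
    {"cost": 400.0, "labels": {"cost-centre": "cc-1042", "team": "payments"}},
    {"cost": 100.0, "labels": {"cost-centre": "cc-1042", "team": "payments"}},
    {"cost": 300.0, "labels": {"cost-centre": "cc-2001", "team": "search"}},
    {"cost": 200.0, "labels": {}},  # e.g. provisioned outside the enforced path
]
totals, coverage = chargeback(items)
print(totals, f"attribution coverage: {coverage:.1f}%")
```

With admission-time enforcement in place, the "unattributed" bucket should only ever contain resources created outside the platform, which makes it a direct measure of governance leakage.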

“Once you fix it, it’s gone… how do we give developers credit for shift-left activities?” — State of FinOps 2026 practitioner survey. The answer: accountability by design generates continuous, automatic evidence of governance discipline — every attributed workload is a governance success, visible in real time.

The CIO–CFO–Platform Triangle

Platform Engineering creates a structural alignment requirement between three organisational roles that have historically operated independently. When this alignment is absent, IDP adoption generates delivery velocity without financial accountability. When it is present, platform engineering becomes one of the highest-return technology investments in the enterprise portfolio.

The CIO–CFO–Platform Triangle: Aligned Governance

```text
                 ┌─────────────────┐
                 │ CIO / CTO       │
                 │ Technology      │
                 │ strategy,       │
                 │ architecture,   │
                 │ IDP standards   │
                 └────────┬────────┘
                          │ Shared attribution
                          │ taxonomy and
                          │ pre-deployment
                          │ approval thresholds
        ┌─────────────────┴─────────────────┐
        │                                   │
 ┌──────┴──────┐                   ┌────────┴───────┐
 │ CFO /       │                   │ Platform       │
 │ VP Finance  │  Shared KPIs:     │ Engineering    │
 │             │  Cost/deploy,     │ Team           │
 │ Business    │  Cost/user,       │                │
 │ outcome     │  Cost efficiency  │ Embed FinOps   │
 │ attribution,│  ratio            │ capabilities   │
 │ chargeback, │                   │ at platform    │
 │ ROI metrics │                   │ layer          │
 └─────────────┘                   └────────────────┘
```

The shared KPI set that makes this triangle operational rather than aspirational:

| KPI | What It Measures | Who Acts on It |
|---|---|---|
| Cost per deployment | Cloud cost efficiency of delivery | Platform team + CIO |
| Cost per active user | Product economics | Platform team + CFO |
| Cloud cost as % revenue | Overall efficiency | CFO + Board |
| Attribution coverage % | Governance completeness | Platform team + FinOps |
| GPU utilisation rate | AI infrastructure efficiency | Platform team + CIO |
| Forecast accuracy ±% | Financial planning reliability | CFO + FinOps |
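Most of these KPIs reduce to simple ratios once cost, delivery, product, and finance data share an attribution model. This sketch uses made-up monthly figures, and defining forecast accuracy as the signed percentage error of the forecast against actual spend is one common convention, assumed here rather than taken from a standard.

```python
from dataclasses import dataclass


@dataclass
class MonthlyInputs:
    cloud_cost: float           # attributed cloud spend for the period
    deployments: int            # production deployments in the period
    active_users: int
    revenue: float
    forecast_cost: float        # cost forecast made at the start of the period
    gpu_busy_hours: float
    gpu_provisioned_hours: float


def platform_kpis(m: MonthlyInputs) -> dict:
    return {
        "cost_per_deployment": m.cloud_cost / m.deployments,
        "cost_per_active_user": m.cloud_cost / m.active_users,
        "cloud_cost_pct_revenue": 100 * m.cloud_cost / m.revenue,
        "gpu_utilisation_pct": 100 * m.gpu_busy_hours / m.gpu_provisioned_hours,
        # Signed error: negative means the forecast under-called actual spend.
        "forecast_error_pct": 100 * (m.forecast_cost - m.cloud_cost) / m.cloud_cost,
    }


example = MonthlyInputs(cloud_cost=250_000, deployments=1_250, active_users=500_000,
                        revenue=10_000_000, forecast_cost=240_000,
                        gpu_busy_hours=3_000, gpu_provisioned_hours=5_000)
for name, value in platform_kpis(example).items():
    print(f"{name:24} {value:,.2f}")
```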

Forrester’s finding: organisations that align CIO, CFO, and platform engineering around shared technology value metrics achieve significantly better cloud and AI investment outcomes. The mechanism: shared KPIs, integrated governance models, and joint pre-deployment decision-making prevent the accountability gap between infrastructure cost and financial return that drives cloud waste in ungoverned environments.

Why Most Platforms Fail Financially — Four Anti-Patterns

Anti-Pattern 1: Dashboards Without Enforcement

The most common failure mode: cost visibility built into the IDP without budget enforcement or tagging requirements. Developers see cost estimates but are not constrained by them. Charts and reports provide awareness without accountability.

Why it fails: developers operating under delivery pressure make the fast architectural decision, not the cost-efficient one. Awareness of cost without financial consequence for cost decisions does not change behaviour — it documents it.

Anti-Pattern 2: Attribution Assigned After Provisioning

Tagging requirements communicated through developer documentation, enforced through periodic “tagging campaigns,” treated as a compliance effort rather than a technical control.

Why it fails: once resources are provisioned and running, retroactive tagging requires engineering effort that competes with delivery priorities. In practice, tagging campaigns are never fully complete, and the backlog of untagged resources grows faster than cleanup cycles can address it. Attribution must be enforced at creation.

Anti-Pattern 3: AI Workloads Governed by Standard Cloud Policies

GPU clusters, AI API integrations, and token-based workloads governed by the same admission policies and cost thresholds designed for containerised application workloads.

Why it fails: AI cost economics are fundamentally different from container economics. Token-based billing is non-linear. Agentic AI can generate 5–30× more cost per task than the standard chatbot the policy was designed around. GPU idle time generates costs at rates that standard workload policies cannot intercept fast enough. AI workloads require AI-specific platform governance.

Anti-Pattern 4: FinOps Outside the Platform Engineering Org

FinOps operating as a separate reporting function that generates recommendations for platform teams to implement — with separate tooling, separate data, and no workflow integration.

Why it fails: governance lag is structural. By the time FinOps recommendations are generated, reviewed, prioritised, and scheduled into the platform team’s roadmap, the architecture decisions that created the cost are already two sprints old. The State of FinOps 2026 is explicit: Platform Engineering and FinOps must participate in the same shift-left conversations, not in separate workflows with a recommendation handoff between them.

What Great Looks Like in 2026

The organisations leading in platform engineering FinOps in 2026 have moved beyond treating cost governance as a post-deployment exercise. The distinguishing disciplines:

They embed cost visibility before the first deployment decision. IDP service templates show projected monthly cost alongside performance configuration options. The architecture decision includes cost as an input — not as a consequence discovered three weeks later.

They make non-compliant deployments technically impossible. Policy-as-code in the admission layer rejects untagged resources and oversized requests at the platform boundary — before any infrastructure is provisioned.

They surface cost alongside performance and reliability in every dashboard. Engineers optimise for cost the same way they optimise for latency and error rate — because the platform makes cost equally visible and equally a first-class engineering metric.

They govern AI workloads with AI-specific platform controls. Token budget caps enforced at API key creation. GPU idle detection triggering automated scale-down. Agentic workflow cost attribution tracking per-chain token economics. The platform governance layer that prevents the 18% GPU share from becoming 40% without financial accountability.
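A minimal sketch of token budget caps enforced at API key creation: the registry below refuses to issue a key without an owning team and a positive monthly cap, then rejects calls that would exceed the cap. The `KeyRegistry` class is hypothetical; in practice this logic would likely sit in an API gateway or model proxy in front of the provider, with usage reset each billing period (not shown).

```python
import secrets
from dataclasses import dataclass
from typing import Dict


@dataclass
class KeyBudget:
    team: str
    monthly_cap: int  # tokens allowed per month
    used: int = 0


class KeyRegistry:
    """Hypothetical issuer for AI API keys with a mandatory token budget."""

    def __init__(self) -> None:
        self._budgets: Dict[str, KeyBudget] = {}

    def create_key(self, team: str, monthly_cap: int) -> str:
        if not team:
            raise ValueError("an owning team is required")
        if monthly_cap <= 0:
            raise ValueError("a positive monthly token cap is required")
        key = secrets.token_hex(16)
        self._budgets[key] = KeyBudget(team=team, monthly_cap=monthly_cap)
        return key

    def authorise(self, key: str, tokens: int) -> bool:
        """Record usage and return True only if the call fits within the cap."""
        budget = self._budgets.get(key)
        if budget is None or budget.used + tokens > budget.monthly_cap:
            return False
        budget.used += tokens
        return True


registry = KeyRegistry()
key = registry.create_key(team="search", monthly_cap=1_000_000)
print(registry.authorise(key, 600_000), registry.authorise(key, 500_000),
      registry.authorise(key, 400_000))
```

Because the cap is a required argument at issuance, there is no code path that produces an uncapped key, which is the "technically impossible" property the policy-as-code capability describes.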

They measure platform success in business terms. DORA metrics plus cost-per-deployment, cost-per-active-user, and cloud cost as a percentage of revenue. Platform ROI demonstrable in CFO language — not only in engineering metrics. Platform Engineering teams that cannot translate MTTR to money will not survive 2026 budget reviews.

DigiUsher: FinOps Embedded Into the Platform Layer

DigiUsher’s FinOps Operating System integrates with platform engineering at the four layers that make FinOps by design operationally achievable rather than aspirationally intended:

Platform-layer FOCUS normalisation — real-time cost data from AWS, Azure, GCP, AI platforms, and Kubernetes clusters normalised to FOCUS 1.x. The pricing data infrastructure that IDPs need to display cost estimates at provisioning time and generate accurate post-deployment attribution.

Policy enforcement API — budget guardrails, tagging enforcement, and resource limit policies surfaced as API-callable governance controls that IDP admission webhooks invoke at provisioning time. Platform teams configure governance rules once; DigiUsher enforces them across every provisioning path.

AI and GPU cost governance — token budget caps, model tier access controls, GPU idle detection, and agentic workflow attribution integrated into Kubernetes platform layers. AI-specific governance that standard IDP frameworks do not include.

Executive alignment reporting — cost-per-deployment, cost-per-inference, cost-per-product, and cloud cost efficiency metrics in board-ready format. The shared KPI layer that makes the CIO–CFO–Platform triangle operationally aligned rather than theoretically aligned.

Available as SaaS or BYOC for regulated industries. SOC 2® Type II certified and GDPR compliant. Delivered globally through Infosys, Wipro, and Hexaware.

Platform Engineering is reshaping how infrastructure is consumed. Without financial governance embedded at the platform layer, it accelerates inefficiency at scale. The next evolution is clear: platforms must not only be self-service. They must be self-governing financially.

Frequently Asked Questions

What is FinOps by design in platform engineering and how does it differ from traditional FinOps?

FinOps by design embeds financial governance into IDP workflows at provisioning time — cost estimates before resources are created, tagging enforced at admission, automated optimisation as a platform capability. Traditional FinOps operates as a monthly reporting overlay on already-committed spend. The critical difference: in traditional FinOps, cost reports follow decisions by days to weeks. In FinOps by design, cost context precedes decisions — appearing inline in the developer workflow where architecture choices are actually made.

Why is platform engineering adoption accelerating so rapidly?

Gartner projects 80% of software engineering organisations will have platform teams by 2026 — up from 45% in 2022. Four drivers: DevOps at scale creates cognitive overload (developers spend 30–40% of time on infrastructure tasks unrelated to business logic); IDPs solve this by abstracting complexity behind self-service interfaces; DORA 2025 confirms 3.5× higher deployment frequency for mature platform organisations; and AI workloads are forcing platform standardisation for GPU and token-based cost governance.

What is the cost impact of embedding FinOps into an IDP?

30–40% cloud cost reduction when an IDP enforces FinOps practices by default, through automated rightsizing, intelligent autoscaling, and real-time attribution baked into developer workflows. On a £5M annual cloud bill, that range equates to £1.5M–£2M in direct savings, typically exceeding the platform engineering programme cost within the first year.

How should platform teams implement pre-deployment cost visibility?

Four components: real-time FOCUS-normalised pricing data; cost estimates embedded directly into Backstage service templates and Crossplane compositions — inline, not in a separate portal; comparative cost display showing the financial implications of different configuration options; and budget constraint enforcement that triggers a block or approval workflow when projected monthly cost exceeds defined limits.
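The block-or-approve behaviour in the last component can be sketched as a three-way decision on projected spend against a team's monthly budget. The 80% approval threshold and the function name `budget_gate` are assumptions for illustration.

```python
def budget_gate(committed_monthly: float, projected_new: float,
                monthly_budget: float, approval_ratio: float = 0.8) -> str:
    """Classify a provisioning request as "allow", "approval", or "block".

    committed_monthly: projected monthly cost of resources the team already runs.
    projected_new: projected monthly cost of the requested resources.
    """
    total = committed_monthly + projected_new
    if total > monthly_budget:
        return "block"  # would exceed the budget outright
    if total > approval_ratio * monthly_budget:
        return "approval"  # within budget but close enough to need sign-off
    return "allow"


for committed, new in ((5_000, 1_000), (7_000, 1_500), (9_000, 1_500)):
    print(committed, new, "->", budget_gate(committed, new, monthly_budget=10_000))
```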

How does AI change the platform engineering FinOps challenge?

AI workloads now account for 18% of cloud spend at AI-forward enterprises (up from 4% in 2023). Three specific changes: cost non-linearity (inference scales by orders of magnitude faster than containers); inference-specific economics (token budgets, model tier controls, agentic multiplication) requiring platform-native AI governance beyond standard cloud policies; and attribution complexity (AI costs require model, team, and product level attribution that standard namespace attribution cannot provide).

What does the CIO–CFO–Platform Engineering triangle require?

Three convergence points: a shared attribution taxonomy (CFO defines business outcome model, CIO translates to technical tagging schema, platform team enforces through admission controls); pre-deployment cost approval thresholds (CFO defines budget limits, CIO integrates into IDP templates, platform team enforces through policy-as-code); and shared KPIs across delivery and finance (cost per deployment, cost per active user, cloud cost as % revenue — making platform engineering legible to finance and financial governance legible to engineering).

How does DigiUsher integrate with platform engineering?

Through four layers: platform-layer FOCUS normalisation providing real-time pricing data for IDP cost estimates; policy enforcement API enabling admission webhooks to invoke governance controls; AI and GPU governance including token budget caps, GPU idle detection, and agentic workflow attribution; and executive alignment reporting producing cost-per-deployment and cost-per-product metrics in board-ready format.

References

- Gartner — Platform Engineering Teams: 80% Adoption Forecast by 2026

- DORA 2025 — State of DevOps Report: 3.5× Deployment Frequency with Mature Platforms

- Platform Engineering.org — 10 Platform Engineering Predictions for 2026

- Cloud Native Now — Build Cost Awareness Into Your Kubernetes IDP (KubeCon EU 2026)

- byteiota — Developer Productivity 2026: AI and Platform Engineering Shift

- byteiota — Platform Engineering ROI in 2026: Business Metrics Win

- HAMS Tech — Kubernetes Platform Engineering 2026: IDP ROI Business Case

- EITT — Platform Engineering: The Evolution of DevOps in 2026

- FinOps Foundation — State of FinOps 2026

- Platform Engineering.org — 10 FinOps Tools Platform Engineers Should Evaluate for 2026

- Roadie.io — Platform Engineering in 2026: Why DIY Is Dead

- Growin — Platform Engineering in 2026: 5 Shifts Driving the Rise of IDPs

- Cloud4U — FinOps in 2026: Cost Optimisation Practices

- DEV Community / Meena Nukala — FinOps in 2026: £3.8M Saved by Treating Cloud Like a Trading Floor

- CNCF — Platform Engineering Day Europe 2026 Proceedings

- FinOps Foundation — FOCUS Specification

Build a Platform That Is Self-Governing Financially

Platform Engineering has solved the developer experience challenge. The next challenge — and the one that determines whether the platform investment generates financial return — is economic efficiency.

- Multi-Cloud Kubernetes Strategy: Why Portability Without Cost Governance Fails

- FinOps for Kubernetes: The Ultimate Guide

- Why FinOps Is Now a Board-Level Responsibility

Request a Demo

See how these ideas translate into measurable cloud and AI savings.

Book a tailored DigiUsher walkthrough to connect the strategy in this article to your team's cost visibility, governance, and optimization priorities.

Continue Reading

More from the DigiUsher editorial team.

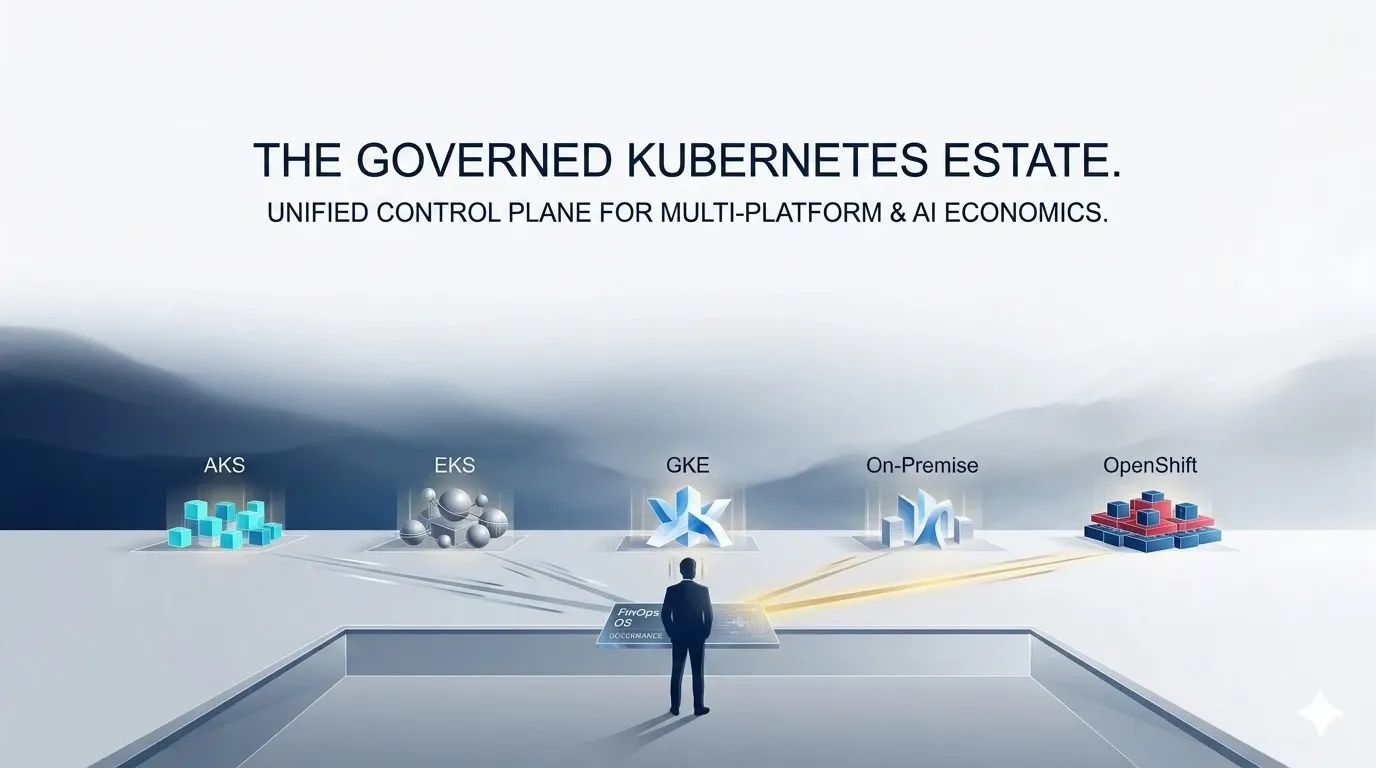

AKS vs EKS vs GKE vs On-Prem vs OpenShift: Cost Governance Deep Dive

Not all Kubernetes platforms are economically equal. This FinOps deep dive compares AKS, EKS, GKE, on-prem Kubernetes, and OpenShift across cost visibility, pricing structure, optimisation potential, and governance capability — with a practical framework for making Kubernetes platform economics a competitive advantage in 2026.

Explore article

FinOps for Kubernetes: The Ultimate Guide to Rightsizing, Bin Packing, and GPU Optimisation

96% of enterprises run Kubernetes — yet only 13% of requested CPU is actually used. This ultimate FinOps guide covers workload rightsizing using P50/P95 percentiles, bin packing strategies for node efficiency, GPU optimisation with MIG and time-slicing, namespace cost attribution, and real-time policy enforcement — with concrete benchmarks, configuration patterns, and the DigiUsher FinOps OS layer that governs it all.

Explore article

Kubernetes Economics: Why Containers Multiply Cloud Waste

99% of Kubernetes clusters are overprovisioned. The average cluster wastes 47% of provisioned resources. At KubeCon Europe 2026, the industry admitted what FinOps practitioners already knew: Kubernetes solves deployment — it amplifies inefficiency. This deep dive explains the five hidden financial mechanisms behind Kubernetes waste, why traditional FinOps cannot fix them, and what a FinOps OS layer does that point tools cannot.

Explore article