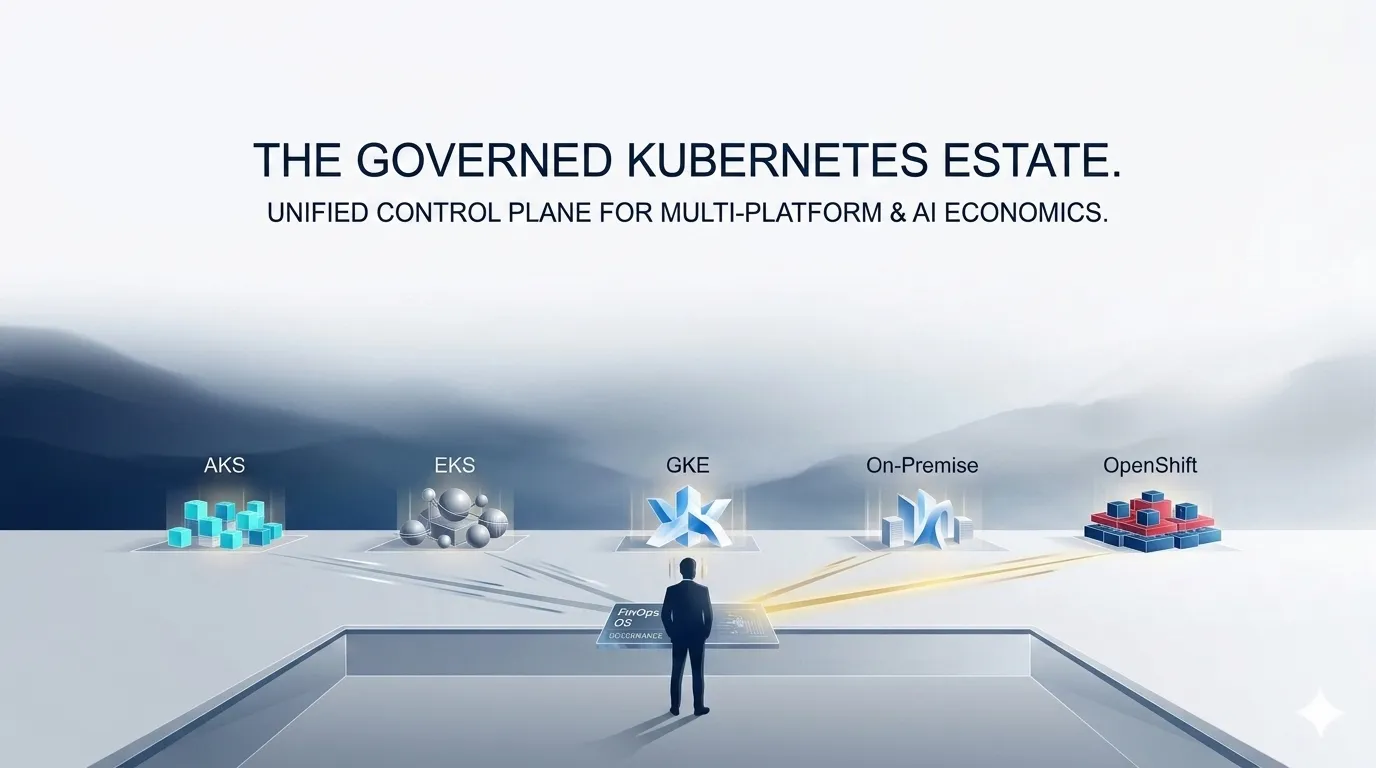

AKS vs EKS vs GKE vs On-Prem vs OpenShift: Cost Governance Deep Dive

Not all Kubernetes platforms are economically equal. This FinOps deep dive compares AKS, EKS, GKE, on-prem Kubernetes, and OpenShift across cost visibility, pricing structure, optimisation potential, and governance capability — with a practical framework for making Kubernetes platform economics a competitive advantage in 2026.

Author

DigiUsher


Executive Summary

Kubernetes has become the universal control plane for cloud-native and AI workloads. But not all Kubernetes platforms are economically equal — and in 2026, the platform choice you made for technical reasons is producing financial consequences you may not have anticipated.

Enterprises today operate across some combination of:

- Azure Kubernetes Service (AKS) — tightly integrated with Microsoft

- Amazon Elastic Kubernetes Service (EKS) — native to Amazon Web Services

- Google Kubernetes Engine (GKE) — built and operated by Google

- On-Premises Kubernetes — self-managed clusters on owned hardware

- Red Hat OpenShift — enterprise Kubernetes with platform governance

While these platforms deliver similar workload orchestration capabilities, they differ profoundly in cost visibility, pricing structure, operational overhead, and optimisation potential.

At KubeCon Europe 2026, one theme emerged consistently from platform engineering and FinOps practitioners:

“Kubernetes is no longer the challenge — operating it efficiently is.”

This briefing provides a technical FinOps comparison across all five platforms, a practical GPU economics guide, and a platform-agnostic governance framework — helping enterprises understand which platform enables cost control, and which amplifies cost complexity.

The Kubernetes Cost Stack: A Technical Baseline

Regardless of platform, every Kubernetes deployment carries the same six cost categories. How they are exposed, attributed, and governed varies significantly by platform:

Kubernetes Cost Stack

- ① Compute (VMs / Nodes): largest category

- ② Storage (Persistent Volumes): IOPS and capacity billing

- ③ Networking (Ingress / Egress): most underestimated

- ④ Control Plane: varies, from free to $0.10/hr+

- ⑤ Platform Overhead: tooling, mesh, monitoring

- ⑥ AI / GPU Workloads: fastest growing

Total Kubernetes Cost = ① + ② + ③ + ④ + ⑤ + ⑥

This structure is consistent. What differs dramatically across platforms is whether each layer is visible, attributable to workloads, and governable through automated policy — or whether it is buried in undifferentiated billing that requires manual forensics to understand.

Platform-by-Platform Cost Governance Analysis

AKS — Azure Kubernetes Service

Cost complexity: Integrated but layered | Control plane: Free (Free tier); paid in Standard and Premium tiers | Best fit: Enterprise Azure organisations

Cost Model

AKS charges for VM node pool instances, Azure Managed Disks (per tier and provisioned capacity), networking (Load Balancer, egress, Private Link), Azure Container Registry operations, and, increasingly, Azure OpenAI and GPU VM instances for AI workloads. The control plane is free in the Free tier; the Standard and Premium tiers add a per-cluster hourly charge that includes an uptime SLA.

FinOps Advantages

AKS is the most naturally integrated Kubernetes platform for enterprises already running Azure workloads. Azure’s subscription and resource group hierarchy creates a billing structure that aligns with organisational boundaries, making cost allocation easier than on platforms where all workloads land in a flat account. Azure Cost Management provides centralised billing visibility, tagging-based cost attribution, and integration with Azure Policy for governance controls.

Governance Challenges

The billing hierarchy strength is also a governance ceiling: Azure Cost Management provides visibility at resource group level, not workload or namespace level. Attributing Kubernetes spend to individual services, teams, or products requires an external FinOps layer that can map namespace-level usage to the cost data Azure surfaces at VM level.
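
As a rough illustration of what that external attribution layer has to do, the sketch below splits one node's hourly VM cost across namespaces in proportion to CPU requests. The function name, the `__idle__` bucket, and the example prices are assumptions made for illustration, not part of any Azure API; a real allocator would also weigh memory, GPU, and shared overhead.

```python
from collections import defaultdict


def allocate_node_cost(node_cost_per_hour, node_cpu_cores, pod_requests):
    """Split one node's hourly cost across namespaces by CPU request share.

    pod_requests is a list of (namespace, cpu_cores_requested) tuples.
    Capacity that no pod requests is reported under the hypothetical
    "__idle__" key so that it stays visible instead of being hidden.
    """
    cost_per_core = node_cost_per_hour / node_cpu_cores
    allocation = defaultdict(float)
    requested = 0.0
    for namespace, cores in pod_requests:
        allocation[namespace] += cores * cost_per_core
        requested += cores
    allocation["__idle__"] = max(node_cpu_cores - requested, 0.0) * cost_per_core
    return dict(allocation)


# Hypothetical 8-core node at $0.40/hr with three pods in two namespaces.
shares = allocate_node_cost(
    0.40, 8, [("checkout", 2.0), ("search", 3.0), ("checkout", 1.0)]
)
```

Keeping idle capacity as its own line item is a deliberate choice here: spreading it silently across namespaces would hide exactly the waste the attribution is meant to expose.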

Hidden costs accumulate in networking: egress charges, Private Link connections, NAT Gateway usage, and cross-region data transfer appear as undifferentiated “data transfer” line items with no workload attribution. GPU VM pricing for Azure OpenAI and AI workloads introduces volatility that standard compute budget models cannot accommodate.

Microsoft guidance emphasises cost management and governance policy — but at enterprise scale, AKS workload economics below subscription level require a dedicated external attribution layer.

EKS — Amazon Elastic Kubernetes Service

Cost complexity: Highly granular and fragmented | Control plane: $0.10/hr per cluster | Best fit: AWS-native engineering teams

Cost Model

EKS control plane charges $0.10 per hour per cluster (~$72/month) — a fixed cost regardless of cluster utilisation that creates financial incentive to consolidate workloads and reduce cluster count. Node costs are EC2 instance pricing across families (general purpose, compute-optimised, GPU), with Spot and Reserved Instance options offering significant savings at the cost of management complexity. EBS Persistent Volumes bill per provisioned IOPS and GB regardless of actual usage. Network costs fragment across NAT Gateway ($0.045/hr plus $0.045/GB), cross-AZ data transfer ($0.01/GB), and Elastic Load Balancing.
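
To make the consolidation incentive concrete, here is a minimal sketch that totals the fixed EKS charges quoted above for a given cluster count. The rates are the ones stated in this section; the 720-hour month (which reproduces the ~$72 figure), the function name, and the example fleet are assumptions.

```python
HOURS_PER_MONTH = 720  # assumption: 30-day month, matching the ~$72/month figure


def eks_fixed_monthly_cost(clusters, nat_gateways, nat_gb_processed,
                           control_plane_rate=0.10,
                           nat_hourly_rate=0.045,
                           nat_gb_rate=0.045):
    """Estimate monthly control plane and NAT Gateway charges in USD."""
    control_plane = clusters * control_plane_rate * HOURS_PER_MONTH
    nat_gateway = (nat_gateways * nat_hourly_rate * HOURS_PER_MONTH
                   + nat_gb_processed * nat_gb_rate)
    return {
        "control_plane": control_plane,
        "nat_gateway": nat_gateway,
        "total": control_plane + nat_gateway,
    }


# Hypothetical fleet: 12 clusters, 3 NAT gateways, 5 TB processed per month.
cost = eks_fixed_monthly_cost(clusters=12, nat_gateways=3, nat_gb_processed=5000)
```

Under these assumptions the control plane alone is several hundred dollars a month before a single node is provisioned, which is why cluster count is worth tracking as a cost driver in its own right.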

FinOps Advantages

EKS offers the deepest optimisation potential of the three major managed platforms. The breadth of EC2 instance families, combined with Spot instance availability across GPU and compute families, enables aggressive cost optimisation for teams willing to invest in the management overhead. AWS Savings Plans and Reserved Instances provide committed-use discounts that can reduce compute cost by 30–60% for stable workloads. AWS Cost and Usage Reports provide the most granular billing data of any platform — the raw material for comprehensive FinOps analysis.

Governance Challenges

EKS is also the most difficult platform to govern without dedicated tooling. Cost is fragmented across multiple AWS services — EC2, EBS, VPC, ELB, NAT Gateway — with no unified workload-level view in native AWS tooling. Attribution across services requires correlation of resource tags that must be applied consistently across all billing dimensions — a manual and error-prone process at scale.

EBS provisioned IOPS billing regardless of actual usage creates hidden waste: a persistent volume provisioned for peak write throughput bills at full provisioned cost even when average IOPS utilisation is below 20%. NAT Gateway charges accumulate invisibly in microservices architectures where internal services route external traffic through the NAT gateway unnecessarily.

AWS best practices explicitly recommend custom FinOps layers for EKS — native tooling alone is insufficient for Kubernetes cost governance at enterprise scale. The granularity that makes EKS powerful for optimisation is the same granularity that makes governance structurally complex.

GKE — Google Kubernetes Engine

Cost complexity: Efficient but multi-model pricing | Control plane: Free (Standard, limited) | Best fit: Efficiency-focused and AI/ML-intensive organisations

Cost Model

GKE operates in two distinct modes whose billing models are not directly comparable. Standard mode bills per node VM using Compute Engine pricing, with sustained use discounts applied automatically as monthly utilisation hours accumulate and no reservation commitment required. Autopilot mode bills per pod resource request (vCPU and memory) rather than per node, eliminating node-level waste but introducing per-pod billing semantics that require different financial modelling. Networking uses GCP VPC egress pricing. Vertex AI and Cloud TPU pricing apply for ML workloads.

FinOps Advantages

GKE delivers the best raw compute efficiency of the managed platforms through a combination of automated bin-packing, advanced cluster autoscaling, and sustained use discounts that activate without manual action. Autopilot mode eliminates a class of node-level waste — you pay for what pods request, not for nodes that are partially utilised. GCP’s integrated cost recommendations surface rightsizing opportunities within the billing interface. For AI workloads, GKE’s TPU integration and preemptible GPU instance availability (at 60–70% discount) make it the most cost-competitive managed platform for ML training at scale.

Governance Challenges

The Autopilot vs. Standard pricing difference creates a multi-model cost environment that makes cross-cluster cost comparison unreliable without normalisation. A cost-per-workload comparison between an Autopilot cluster and a Standard cluster requires converting between fundamentally different billing semantics before any comparison is meaningful.
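
One possible normalisation, sketched below, converts both modes to a cost per requested vCPU-hour. All prices and the memory-per-vCPU ratio are hypothetical placeholders, and the function names are illustrative; the comparison only holds when the workloads on both clusters have a similar memory-to-CPU shape.

```python
def standard_cost_per_requested_vcpu_hour(node_hourly_price, requested_vcpu):
    """Standard mode: the node bills in full, so divide by what pods request."""
    return node_hourly_price / requested_vcpu


def autopilot_cost_per_requested_vcpu_hour(vcpu_hourly_price,
                                           memory_gib_hourly_price,
                                           gib_per_vcpu):
    """Autopilot mode: pods bill per requested vCPU and GiB of memory,
    so fold the memory charge into a single per-vCPU figure."""
    return vcpu_hourly_price + memory_gib_hourly_price * gib_per_vcpu


# Hypothetical inputs: an 8-vCPU node at $0.40/hr with only 5 vCPU requested,
# versus Autopilot at $0.05 per vCPU-hour and $0.005 per GiB-hour, 4 GiB per vCPU.
standard = standard_cost_per_requested_vcpu_hour(0.40, 5)
autopilot = autopilot_cost_per_requested_vcpu_hour(0.05, 0.005, 4)
```

With these placeholder numbers the partially packed Standard node works out more expensive per requested vCPU than Autopilot; a tightly packed node would reverse the result, which is the point of normalising before comparing.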

Sustained use discounts accrue as monthly usage accumulates, so the effective rate visible mid-month differs from the settled rate at month-end. That gap creates forecast variance and complicates real-time budget tracking. Cost transparency for complex multi-service deployments across multiple GKE clusters still requires external governance.
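
The forecast variance can be illustrated with a small tiered-discount model. The tier table below is an illustrative assumption (each successive quarter of the month billed at a lower multiplier of the base rate); actual schedules vary by machine family and should be taken from current GCP documentation.

```python
# Assumed schedule: (upper bound as fraction of month, multiplier on base rate).
SUD_TIERS = [(0.25, 1.0), (0.50, 0.8), (0.75, 0.6), (1.00, 0.4)]


def billed_fraction_of_base(usage_fraction):
    """Billed cost as a fraction of one full month at the undiscounted rate."""
    billed, lower = 0.0, 0.0
    for upper, multiplier in SUD_TIERS:
        if usage_fraction <= lower:
            break
        billed += (min(usage_fraction, upper) - lower) * multiplier
        lower = upper
    return billed


def effective_discount(usage_fraction):
    """Average discount across all usage accumulated so far this month."""
    return 1 - billed_fraction_of_base(usage_fraction) / usage_fraction


mid_month = effective_discount(0.5)   # VM has run for half the month
month_end = effective_discount(1.0)   # VM has run for the full month
```

Under the assumed tiers, a budget tracker that extrapolates from the mid-month effective rate will overstate the month-end bill for always-on nodes, because the deeper tiers have not yet been reached.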

Google emphasises efficiency-first infrastructure — and GKE delivers on compute efficiency. Cost transparency at the workload level and cross-mode comparability remain governance challenges that the native GCP tooling does not fully address.

On-Premises Kubernetes

Cost complexity: CapEx-dominant with hidden OpEx | Control plane: Embedded in CapEx and OpEx | Best fit: Regulated workloads, data sovereignty requirements, predictable long-term cost profiles

Cost Model

On-premises Kubernetes has a fundamentally different economic structure from cloud-managed platforms. Hardware — servers, GPU nodes, storage arrays, networking equipment — is purchased as CapEx and amortised over 3–5 year hardware refresh cycles. OpEx includes power, cooling, rack space, maintenance contracts, and the platform engineering staff time required to operate the clusters. There is no per-hour billing, no consumption-based pricing, and no cloud egress charges.

FinOps Advantages

For the right workloads, on-premises Kubernetes offers genuine economic advantages. Absence of egress charges makes inter-service communication effectively free within the datacentre — a material saving for microservices architectures generating high internal API traffic. Long-horizon cost predictability, once hardware is amortised, makes financial planning more deterministic than consumption-based cloud billing. Full infrastructure control eliminates provider-imposed pricing changes.

Governance Challenges

On-premises Kubernetes has the lowest cost visibility of any platform — and the highest hidden cost risk from utilisation inefficiency. Cloud billing provides granular, real-time cost data by resource. On-premises cost data lives in hardware procurement records, facilities cost centres, and staffing budgets that are never integrated with workload tracking systems.

Gartner research finds many on-premises environments operating below 40% average utilisation — meaning the majority of CapEx is funding idle infrastructure rather than productive workloads.

Rightsizing is constrained by hardware procurement cycles: cloud environments can scale down idle capacity in minutes; on-premises environments must plan hardware utilisation across multi-year horizons. Chargeback in on-premises Kubernetes requires manual allocation modelling that distributes amortised hardware cost, power, and staff time across workloads — a labour-intensive process that produces approximations rather than attributions.
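
A minimal sketch of the allocation arithmetic, assuming straight-line amortisation and a single blended monthly OpEx figure. The dollar amounts are hypothetical; the 40% utilisation is the level cited above.

```python
def onprem_monthly_cost(hardware_capex, amortisation_years, monthly_opex):
    """Straight-line monthly amortisation plus power, cooling, and staff OpEx."""
    return hardware_capex / (amortisation_years * 12) + monthly_opex


def idle_cost(monthly_cost, avg_utilisation):
    """Portion of the monthly cost that funds unused capacity."""
    return monthly_cost * (1 - avg_utilisation)


# Hypothetical cluster: $1.2M of hardware over 4 years, $15k/month OpEx.
monthly = onprem_monthly_cost(hardware_capex=1_200_000,
                              amortisation_years=4,
                              monthly_opex=15_000)
idle = idle_cost(monthly, avg_utilisation=0.40)
```

Even this simplified model shows why chargeback is an approximation: the idle share has to be assigned to someone, and any rule for doing so is a policy decision rather than a measurement.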

Red Hat OpenShift

Cost complexity: Infrastructure plus licensing layer | Control plane: Embedded in subscription | Best fit: Hybrid enterprises, regulated industries, governance-priority environments

Cost Model

OpenShift cost is the sum of two components that no single billing system exposes: Red Hat platform licensing (per core, applied to all nodes in the cluster) plus underlying infrastructure cost (cloud VM pricing on whichever hyperscaler hosts the cluster, or on-premises hardware CapEx). OpenShift Service Mesh, middleware components, and Red Hat support tiers add further licensing layers. The combined cost is typically 20–40% higher than equivalent bare Kubernetes deployments on the same infrastructure.
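
The two-component structure can be sketched as a per-node calculation. The infrastructure rate and per-core licence rate below are hypothetical placeholders chosen to land inside the 20–40% range quoted above; they are not Red Hat list prices.

```python
def openshift_node_hourly_cost(infra_hourly, cores, licence_per_core_hourly):
    """Combine infrastructure and per-core licensing into one hourly figure."""
    licensing = cores * licence_per_core_hourly
    return {
        "infrastructure": infra_hourly,
        "licensing": licensing,
        "total": infra_hourly + licensing,
        # Licensing expressed as a premium over the bare infrastructure cost.
        "licence_premium": licensing / infra_hourly,
    }


# Hypothetical 16-core node: $1.60/hr infrastructure, $0.03 per core-hour licence.
node = openshift_node_hourly_cost(infra_hourly=1.60, cores=16,
                                  licence_per_core_hourly=0.03)
```

Because the two inputs come from different billing systems, a calculation like this is usually the first place the combined figure exists at all.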

FinOps Advantages

OpenShift’s enterprise-grade governance and policy enforcement capabilities are its primary financial value proposition. Standardised environments across hybrid and multi-cloud deployments reduce configuration drift and the hidden costs of inconsistency. Strong RBAC, security policy, and compliance controls align with regulated industry requirements where the governance premium is justified by risk reduction. Hybrid flexibility — consistent platform experience across cloud and on-premises — reduces the operational overhead of multi-environment management.

Governance Challenges

The true cost-per-workload is invisible in OpenShift environments without external normalisation. Licensing is applied uniformly across all nodes regardless of whether individual workloads require OpenShift-specific capabilities — GPU nodes used for standard ML training pay the same licensing overhead as nodes running OpenShift-specific platform services. The combined cost view (licensing + infrastructure) requires integrating Red Hat billing with cloud or hardware billing, which no native tool provides.

Deloitte identifies OpenShift as a governance-first platform — the policy and compliance controls are enterprise-grade. The trade-off is cost efficiency: the licensing overhead is the price of the governance discipline.

Comparative Cost Governance Matrix

| Platform | Cost Visibility | Optimisation Capability | Governance Complexity | Control Plane Cost | Best Fit |
|---|---|---|---|---|---|
| AKS | High | Medium | Medium | Free (Free tier); paid in Standard and Premium | Enterprise Azure shops |
| EKS | Medium | High | High | $0.10/hr per cluster | AWS-native teams |
| GKE | Medium-High | High | Medium | Free (Standard, limited) | Efficiency-focused orgs |
| On-Prem | Low | Low | High | CapEx + OpEx | Regulated workloads |
| OpenShift | High | Medium | High | Embedded in licensing | Hybrid enterprises |

Reading the matrix: High cost visibility does not equal governance capability. AKS and OpenShift both score high on visibility — but neither provides automated policy enforcement at the workload level without external tooling. Optimisation capability (EKS and GKE scoring highest) reflects the depth of native optimisation levers available — not whether those levers are being used.

The AI Factor: GPU Economics Across Kubernetes Platforms

All five platforms face the same GPU governance challenge:

GPUs are expensive — and underutilised.

AI platforms — Azure OpenAI Service, Amazon Bedrock, Google Vertex AI — are increasingly integrated with Kubernetes workloads, introducing GPU scheduling complexity, cost fragmentation, and multi-layer pricing across every platform.

| Platform | GPU Options | Key Governance Challenge |
|---|---|---|
| AKS | NCv3 (V100), NC A100 v4, NVv4 | Idle GPU nodes appear as normal compute; no utilisation signal in Azure Cost Management |
| EKS | P3 (V100), P4 (A100), G5 (A10G), Trn1 | Spot GPU interruptions create cost unpredictability; Reserved GPU commitments are inflexible |
| GKE | T4, A100, H100, Cloud TPU v4/v5 | Autopilot vs. Standard GPU cost comparison requires normalisation across billing models |
| On-Prem | H100/A100/H200 (no provider markup) | GPU utilisation appears as depreciation, not variable cost; FinOps rarely measures it |
| OpenShift | Platform-dependent | Licensing overhead on GPU nodes creates invisible premium above infrastructure GPU cost |

The consistent failure across all platforms: GPU utilisation rate is not surfaced in standard billing data. A GPU instance billing at $28/hr while running at 15% utilisation costs exactly the same as one running at 95%. Without utilisation-aware governance, GPU waste is the largest single source of recoverable spend in Kubernetes environments.
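
The arithmetic behind that example is worth spelling out. The sketch below takes the $28/hr rate and the 15% and 95% utilisation levels from the paragraph above; the 720-hour month and the function name are assumptions.

```python
def gpu_effective_cost(hourly_rate, utilisation, hours=720):
    """Translate a flat GPU bill into utilisation-aware figures."""
    monthly_bill = hourly_rate * hours
    return {
        "cost_per_utilised_hour": hourly_rate / utilisation,
        "monthly_bill": monthly_bill,
        "monthly_idle_spend": monthly_bill * (1 - utilisation),
    }


low = gpu_effective_cost(28.0, 0.15)
high = gpu_effective_cost(28.0, 0.95)
```

Both instances produce the same monthly bill, but at 15% utilisation each productive GPU-hour costs roughly six times what it does at 95%. That ratio is the signal standard billing data does not carry.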

KubeCon Europe 2026: “AI workloads are stress-testing Kubernetes. GPU scheduling inefficiencies, rising infrastructure costs, and lack of cost visibility are forcing platform engineering to own financial accountability.”

Why FinOps Must Be Platform-Agnostic

Each of the five platforms provides:

- Partial visibility — within its own billing environment

- Limited optimisation tools — for its own native resource types

None provides:

- Unified cost governance across all platforms simultaneously

- Cross-platform normalisation that makes cost comparisons accurate

- Workload-level financial control that connects cluster spend to business outcomes

This is why platform-agnostic FinOps is not optional — it is the necessary governance layer for any enterprise operating across more than one Kubernetes platform.

The FinOps Foundation’s FOCUS specification is the open standard enabling this normalisation. FOCUS defines a common cost data schema that transforms billing data from AKS, EKS, GKE, OpenShift, and on-prem into equivalent metrics — making accurate cross-platform cost comparison possible for the first time.

Implementing FOCUS-native governance requires a platform built on the specification from the ground up — not ETL pipelines that approximate normalisation and introduce their own attribution errors.

What Leading Enterprises Are Doing Differently in 2026

Forward-looking organisations at KubeCon Europe 2026 shared three consistent practices that distinguish governance-mature enterprises from those still struggling with fragmented Kubernetes economics:

They Treat Kubernetes Platform Choice as a Financial Architecture Decision

The technical properties of AKS, EKS, GKE, OpenShift, and on-prem Kubernetes are well understood. The financial properties — cost visibility ceiling, optimisation lever depth, governance complexity — are equally important and frequently underweighted in platform selection. Mature organisations evaluate platforms on both dimensions and architect multi-platform strategies with governance as a first-class constraint.

They Standardise Cost Metrics, Not Just Workloads

Standardising workload deployment across platforms (using Helm, ArgoCD, or similar) is table stakes. Standardising cost metrics — using FOCUS to ensure every platform reports in the same schema — is what enables financial governance at multi-platform scale. Without metric standardisation, cost comparison is manual, error-prone, and always stale.

They Integrate FinOps into Platform Engineering — Not Alongside It

KubeCon Europe 2026: “Platform engineering is taking ownership. Teams are increasingly responsible for cost guardrails, workload efficiency, and financial accountability.”

Governance-mature organisations embed cost visibility into platform tooling — surfacing workload cost to developers at the point of deployment, not in a monthly FinOps report they never read. Cost guardrails are enforced by the platform, not negotiated with the engineering team.

A Platform-Agnostic Kubernetes FinOps Framework

Implementing effective multi-platform Kubernetes cost governance follows five steps — regardless of which combination of AKS, EKS, GKE, OpenShift, and on-prem you operate:

Step 1 — Audit Cost Visibility Gaps Across Each Platform

Inventory every Kubernetes cluster across all platforms. Map which cost dimensions are visible in native billing, which require correlation across multiple billing sources, and which are structurally invisible without external tooling. Quantify the manual reconciliation overhead your FinOps team currently absorbs — this is the measurable cost of the visibility gap.

Step 2 — Deploy FOCUS-Native Cross-Platform Normalisation

Implement FOCUS 1.x normalisation that ingests billing data from all platforms and transforms it to a common schema. This eliminates the manual translation layer that makes cross-platform cost comparison unreliable, and provides the foundation for all subsequent governance actions.
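
To show the shape of the idea, the sketch below maps two provider rows onto a handful of FOCUS columns (`ProviderName`, `ServiceName`, `ResourceId`, `BilledCost`, `Tags`). The source field names are simplified, hypothetical stand-ins; real billing exports use richer schemas, and a full FOCUS dataset carries many more columns than shown here.

```python
# Hypothetical, simplified source field names mapped to FOCUS column names.
FIELD_MAPS = {
    "AWS": {
        "unblended_cost": "BilledCost",
        "product": "ServiceName",
        "resource_id": "ResourceId",
    },
    "Microsoft": {
        "cost_in_billing_currency": "BilledCost",
        "meter_category": "ServiceName",
        "resource_id": "ResourceId",
    },
}


def to_focus(provider, row):
    """Rename provider-specific fields to a common FOCUS-style record."""
    record = {"ProviderName": provider, "Tags": dict(row.get("tags", {}))}
    for source_field, focus_column in FIELD_MAPS[provider].items():
        record[focus_column] = row[source_field]
    record["BilledCost"] = float(record["BilledCost"])
    return record


rows = [
    to_focus("AWS", {"unblended_cost": "12.50", "product": "Amazon EC2",
                     "resource_id": "i-0abc", "tags": {"Team": "search"}}),
    to_focus("Microsoft", {"cost_in_billing_currency": "7.25",
                           "meter_category": "Virtual Machines",
                           "resource_id": "vm-01", "tags": {"Team": "search"}}),
]
total_for_search = sum(r["BilledCost"] for r in rows
                       if r["Tags"].get("Team") == "search")
```

Once every row shares the same column names, a cross-platform total per team becomes a single filter and sum rather than a per-provider reconciliation exercise.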

Step 3 — Enforce a Unified Tagging Taxonomy at Provisioning

Define a common tag schema (Team, Product, Environment, CostCentre, WorkloadType) and enforce it at provisioning across all Kubernetes platforms simultaneously. Mandatory tagging at provisioning — blocking resource creation without complete attribution metadata — replaces quarterly compliance campaigns with continuous governance.
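
A provisioning-time check for this taxonomy could look like the sketch below. The tag names come from the schema above; the function names and the admit/deny contract are illustrative assumptions rather than any specific admission controller's API.

```python
REQUIRED_TAGS = ("Team", "Product", "Environment", "CostCentre", "WorkloadType")


def missing_tags(labels):
    """Return required tags that are absent or blank in the given labels."""
    return [tag for tag in REQUIRED_TAGS
            if not str(labels.get(tag, "")).strip()]


def admit(labels):
    """Allow creation only when every required tag carries a value."""
    missing = missing_tags(labels)
    return (not missing, missing)


allowed, gaps = admit({"Team": "search", "Product": "catalog",
                       "Environment": "prod", "CostCentre": ""})
```

In this example the request would be rejected because `CostCentre` is blank and `WorkloadType` is absent; returning the list of gaps lets the platform tell the developer exactly what to fix.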

Step 4 — Implement GPU and AI Workload Lifecycle Automation

Configure GPU utilisation monitoring across all platforms with idle detection and automatic scale-down. Set training job SLA enforcement that auto-terminates runaway jobs beyond defined cost or time thresholds. Attribute inference and training costs to owning teams in real time — making GPU spend a governed financial object rather than an unattributed infrastructure cost.
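
The decision logic behind idle scale-down and job SLA enforcement can be sketched as a small policy function. Every threshold below is a hypothetical default, and the `GpuJob` fields are assumed to be fed by whatever monitoring source is in use.

```python
from dataclasses import dataclass


@dataclass
class GpuJob:
    name: str
    gpu_utilisation: float  # average over the observation window, 0.0 to 1.0
    idle_minutes: int       # consecutive minutes below the idle threshold
    runtime_hours: float
    spend: float            # accumulated cost in USD


def decide(job, idle_threshold=0.05, max_idle_minutes=30,
           max_runtime_hours=48.0, max_spend=5_000.0):
    """Return 'terminate', 'scale_down', or 'keep' for a GPU job."""
    # SLA breach takes priority: runaway jobs are stopped outright.
    if job.runtime_hours > max_runtime_hours or job.spend > max_spend:
        return "terminate"
    # Sustained idleness triggers scale-down rather than termination.
    if job.gpu_utilisation < idle_threshold and job.idle_minutes >= max_idle_minutes:
        return "scale_down"
    return "keep"


actions = {
    job.name: decide(job)
    for job in [
        GpuJob("train-a", 0.02, 45, 3.0, 200.0),
        GpuJob("train-b", 0.90, 0, 60.0, 900.0),
        GpuJob("serve-c", 0.70, 0, 10.0, 300.0),
    ]
}
```

Checking the SLA limits before the idle rule is a design choice: a job that is both over budget and idle should be terminated rather than merely scaled down.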

Step 5 — Surface Unit Economics Across All Platforms

Track cost per workload, cost per deployment, and cost per business outcome consistently across all platforms. Unit economics — cost per inference served, cost per active user, cost per product feature — translate Kubernetes platform spend into the financial language that connects engineering decisions to business outcomes.
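
As a small worked example, the sketch below computes cost per 1,000 inferences from normalised monthly cost and request counts. The platform figures are invented purely for illustration.

```python
def cost_per_thousand(total_cost, units):
    """Return cost per 1,000 units served; rejects non-positive volumes."""
    if units <= 0:
        raise ValueError("units must be positive")
    return total_cost / units * 1000


# Hypothetical monthly figures after cross-platform normalisation.
monthly = {
    "AKS": {"cost": 42_000.0, "inferences": 30_000_000},
    "EKS": {"cost": 55_000.0, "inferences": 50_000_000},
}
per_thousand = {
    platform: cost_per_thousand(v["cost"], v["inferences"])
    for platform, v in monthly.items()
}
```

In this made-up case the platform with the larger bill is the cheaper one per inference, which is the kind of conclusion total spend alone cannot show.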

DigiUsher: Unified Kubernetes FinOps Across All Platforms

DigiUsher’s FinOps Operating System provides the platform-agnostic governance layer that individual Kubernetes platforms cannot deliver:

Cross-platform cost visibility — AKS, EKS, GKE, OpenShift, and on-prem cost data normalised to FOCUS 1.x in a single model, alongside AI APIs, Snowflake, Databricks, SaaS, and cloud Marketplace charges

Real-time workload-level attribution — namespace, label, and team-level cost attribution across all five Kubernetes platforms simultaneously — P&L-grade chargeback generated automatically, not assembled manually

GPU and AI cost governance — utilisation tracking per cluster and workload, idle scale-down automation, training job SLA enforcement, and inference cost attribution per team and product across all platforms

Mandatory tagging enforcement — a unified attribution taxonomy applied at provisioning across all Kubernetes platforms, blocking resource creation without complete cost metadata

Automated budget guardrails — machine-enforceable policies that trigger throttle, scale-down, and alert actions before budget thresholds are breached — not after

Deployment options: Multi-tenant SaaS or BYOC single-tenant for regulated industries with data sovereignty requirements (ICICI Bank and other regulated-industry deployments). SOC 2® Type II and GDPR certified. Delivered globally through Infosys, Wipro, and Hexaware.

The outcome: Kubernetes becomes a financially governed system — not a fragmented cost environment that finance teams cannot reconcile and engineering teams cannot optimise.

Frequently Asked Questions

What is the difference between AKS, EKS, and GKE for cost governance?

AKS has the highest cost visibility through Azure Cost Management integration and subscription hierarchy, but requires external tooling for workload-level attribution. EKS offers the deepest optimisation potential through granular AWS primitives and Spot instances, but has the most fragmented cost visibility across multiple AWS services. GKE delivers the best compute efficiency through sustained use discounts and advanced bin-packing, but multi-model pricing between Autopilot and Standard requires normalisation for accurate cross-cluster comparison. All three provide partial visibility and limited governance — none provide unified cross-platform cost policy enforcement.

How does on-premises Kubernetes cost compare to managed cloud Kubernetes?

On-premises uses CapEx-dominant billing — hardware purchased upfront, amortised over 3–5 years — versus cloud’s consumption-based per-hour billing. On-premises eliminates cloud egress charges and provides long-term cost predictability once amortised. However, it introduces low utilisation risk (Gartner finds many environments below 40% utilisation), eliminates demand elasticity, and has the lowest cost visibility of any platform — chargeback requires manual allocation across hardware, facilities, and staffing budgets that don’t integrate with cloud billing.

What is the cost of EKS vs GKE vs AKS control plane?

EKS charges $0.10/hr per cluster (~$72/month) regardless of size. AKS provides a free control plane in its Free tier; the Standard and Premium tiers add a per-cluster hourly charge that includes an uptime SLA. GKE is free in Standard mode for limited configurations, charged in Enterprise tiers. OpenShift embeds control plane cost in per-core subscription licensing. On-premises has no control plane billing; cost is absorbed into hardware CapEx and staff OpEx.

Why is OpenShift more expensive than other Kubernetes platforms?

OpenShift adds Red Hat platform licensing on top of underlying infrastructure — typically 20–40% more than equivalent bare Kubernetes deployments. Licensing is per-core and applies to all nodes regardless of whether workloads use OpenShift-specific capabilities. The premium funds enterprise-grade governance, hybrid/multi-cloud flexibility, and standardised environments — organisations that prioritise policy enforcement and compliance accept this cost as the price of governance discipline.

How should enterprises govern GPU costs across Kubernetes platforms?

Four controls applied consistently: real-time GPU utilisation tracking per node pool (not just billing cost); idle GPU detection with automatic scale-down at a defined inactivity threshold; training job lifecycle enforcement with time or cost SLA limits; and cross-platform GPU cost normalisation comparing cost-per-GPU-hour across AKS, EKS, GKE, and on-prem using equivalent metrics rather than incompatible provider billing formats.

What is platform-agnostic Kubernetes FinOps and why is it necessary?

Platform-agnostic Kubernetes FinOps governs all Kubernetes platforms simultaneously using a unified cost model, shared tagging taxonomy, and common attribution framework. It is necessary because each platform provides partial visibility within its own environment but none provide cross-platform governance. Enterprises running multiple Kubernetes platforms without platform-agnostic governance end up with fragmented cost data, incompatible attribution structures, and no single source of truth for total Kubernetes spend.

What are the hidden costs of multi-platform Kubernetes environments?

Five hidden cost categories: cross-platform reconciliation overhead (manual finance consolidation time); egress and inter-cluster network charges appearing as undifferentiated data transfer; idle GPU and compute capacity billing continuously across all platforms; on-premises utilisation waste from hardware provisioned at peak but averaging 40% or less; and OpenShift licensing overhead applied uniformly to workloads that don’t require OpenShift-specific capabilities.

How does DigiUsher’s FinOps OS govern Kubernetes costs across all platforms?

Through four integrated capabilities: FOCUS 1.x native normalisation across AKS, EKS, GKE, OpenShift, and on-prem enabling accurate cross-platform cost comparison; workload-level cost attribution with namespace and label-based mapping across all platforms; GPU and AI workload governance with real-time utilisation tracking, idle automation, and inference cost attribution; and mandatory tagging enforcement at provisioning producing P&L-grade chargeback automatically rather than through monthly manual reconciliation.

References

- FinOps Foundation — FOCUS Specification

- KubeCon Europe 2026 — Platform Engineering and FinOps sessions

- Gartner — On-Premises Kubernetes Utilisation and Cloud Migration Research

- Deloitte — OpenShift Enterprise Governance Analysis

- PwC — Multi-Platform Cloud Cost Governance

- AWS EKS cost and pricing documentation

- Azure AKS pricing documentation

- Google GKE pricing documentation

- Red Hat OpenShift pricing

- McKinsey — Cloud Cost Optimisation: Platform Engineering Efficiency
