FinOps for Kubernetes: The Ultimate Guide to Rightsizing, Bin Packing, and GPU Optimisation

96% of enterprises run Kubernetes — yet only 13% of requested CPU is actually used. This ultimate FinOps guide covers workload rightsizing using P50/P95 percentiles, bin packing strategies for node efficiency, GPU optimisation with MIG and time-slicing, namespace cost attribution, and real-time policy enforcement — with concrete benchmarks, configuration patterns, and the DigiUsher FinOps OS layer that governs it all.

Author

DigiUsher


Executive Summary

Kubernetes has become the universal control plane for modern infrastructure and AI workloads. 96% of enterprises now run Kubernetes — and for most of them, it is also:

- The largest source of cloud inefficiency in the estate

- The least understood cost driver in the cloud bill

- The hardest environment to govern financially

The research is stark. Analysis of 3,042 production clusters in January 2026 found that 68% of pods request 3–8× more memory than they actually use. Studies show only 13% of requested CPU is consumed on average — an 8× gap between what enterprises pay for and what their workloads actually need.

KubeCon Europe 2026: “Organisations have mastered Kubernetes deployment — but not Kubernetes economics.”

This guide operationalises FinOps for Kubernetes across the three pillars that determine whether your Kubernetes estate generates competitive advantage or compounds financial waste:

- Rightsizing — matching resource requests to actual workload behaviour

- Bin Packing — maximising node utilisation through intelligent scheduling

- GPU Optimisation — eliminating the largest per-hour cost inefficiency in AI infrastructure

Each section combines first-principles explanation with concrete configuration guidance, current 2026 benchmarks, and the governance layer that makes optimisation continuous rather than quarterly.

What Is FinOps for Kubernetes?

FinOps for Kubernetes is the practice of applying financial governance — cost attribution, rightsizing, optimisation, and policy enforcement — to containerised workloads at the pod, namespace, and cluster level.

It requires a fundamentally different approach from standard cloud FinOps because Kubernetes breaks the one-to-one relationship between infrastructure and cost that traditional tools assume:

Traditional cloud FinOps model:

1 VM = 1 cost unit = 1 attribution tag → Cost is attributable

Kubernetes reality:

1 VM → dozens of containers → multiple teams → multiple products

1 application → multiple clusters → multiple clouds

1 workload → appears and disappears in seconds

In this environment, cloud billing shows VM cost — it does not show which pods ran on that VM, which team owns those pods, which product they served, or whether the resources requested were ever actually used.

A cluster billed at £100,000/month for VM capacity may be generating only £35,000/month of productive workload value — with 65% of requested resources sitting idle in overprovisioned containers that the Kubernetes scheduler treats as occupied.

Traditional FinOps tools that report on infrastructure cost cannot surface or act on this structural waste. Kubernetes FinOps requires workload-level visibility, percentile-based rightsizing, scheduling policy governance, and continuous automated optimisation.

Pillar 1 — Rightsizing: Fix the Biggest Source of Kubernetes Waste

The Scale of the Problem

Kubernetes requires developers to define CPU and memory requests (used for scheduling) and limits (used for enforcement) for every container. In practice, these values are almost universally overestimated.

The data from 2026 production cluster analysis is unambiguous:

| Metric | Finding | Source |
|---|---|---|
| Average CPU actually used vs. requested | 13% utilisation (8× gap) | Sedai Production Analysis |
| Pods requesting 3–8× more memory than used | 68% of all pods | Wozz, 3,042 clusters, Jan 2026 |
| Teams that know their P95 memory usage | Only 12% | Wozz Engineering Interview Study |
| Common ‘safety’ headroom added after OOM incident | 2–4× resource multiplication | 64% of teams surveyed |

The root cause is structural as much as behavioural. Developers set resource requests at deployment time and rarely revisit them, and the scheduler reserves node capacity for what a pod requests, not for what it uses: if a pod requests 2 GiB and uses 400 MiB, 2 GiB of node memory is unavailable to other workloads and still has to be paid for. This waste is buried in EC2, AKS, and GKE bills as normal compute charges, with no signal that the resources are sitting idle.

Rightsizing and auto-scaling programmes routinely cut compute waste by 25–35% — making it the single highest-return FinOps initiative available in any Kubernetes environment.

The Correct Approach: Percentile-Based Rightsizing

Static resource configuration based on developer estimates must give way to percentile-based rightsizing using observed historical behaviour:

| Request / Limit | Percentile Target | Rationale |
|---|---|---|
| resources.requests.cpu | P50 (median) | Schedules pods based on typical usage; limits provide the burst headroom |
| resources.limits.cpu | P95 | Allows bursts up to the 95th percentile of observed usage while capping the impact on neighbours |
| resources.requests.memory | P95 | Memory requests must cover most usage peaks, because OOM kills are disruptive |
| resources.limits.memory | P99 | Keeps OOM kills rare while eliminating 2–4× ‘just in case’ inflation |

This approach requires multi-week rolling window data — not a snapshot. Workload behaviour changes as features are deployed, traffic patterns shift, and upstream dependencies evolve. Rightsizing based on last week’s data applied to next quarter’s workload is a category error.
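The percentile targets in the table above can be sketched in a few lines of Python. This is a minimal illustration, not a production recommender: the `percentile` and `recommend` helpers and the synthetic usage history are assumptions made for the example, and a real pipeline would read weeks of samples from a metrics store.

```python
def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of values at or below it.

    pct is an integer between 1 and 100.
    """
    if not samples:
        raise ValueError("need at least one usage sample")
    ordered = sorted(samples)
    rank = max(1, (pct * len(ordered) + 99) // 100)  # integer ceil(pct * n / 100)
    return ordered[rank - 1]


def recommend(cpu_millicores, memory_mib):
    """Map observed usage to the request/limit percentile targets from the table."""
    return {
        "requests": {
            "cpu_m": percentile(cpu_millicores, 50),
            "memory_mib": percentile(memory_mib, 95),
        },
        "limits": {
            "cpu_m": percentile(cpu_millicores, 95),
            "memory_mib": percentile(memory_mib, 99),
        },
    }


# Hypothetical rolling-window history: 100 evenly spaced samples per resource.
cpu_history = list(range(10, 1010, 10))   # 10m .. 1000m
memory_history = list(range(4, 404, 4))   # 4 MiB .. 400 MiB
recommendation = recommend(cpu_history, memory_history)
print(recommendation)
```

Re-running the same calculation on a rolling window keeps the recommendation aligned with current behaviour instead of a one-off snapshot.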

Automation: Vertical Pod Autoscaler and Beyond

Manual rightsizing reviews are insufficient at scale — a Kubernetes estate with 500 deployments would require continuous human analysis to stay current. Automation is the only viable governance model.

Vertical Pod Autoscaler (VPA) — the native Kubernetes mechanism for automated rightsizing:

    apiVersion: autoscaling.k8s.io/v1
    kind: VerticalPodAutoscaler
    metadata:
      name: api-service-vpa
      namespace: product-team-a
    spec:
      targetRef:
        apiVersion: apps/v1
        kind: Deployment
        name: api-service
      updatePolicy:
        updateMode: "Auto"  # Start with "Off" for recommendations only
      resourcePolicy:
        containerPolicies:
          - containerName: api-container
            minAllowed:
              cpu: "100m"
              memory: "128Mi"
            maxAllowed:
              cpu: "2"
              memory: "4Gi"
            controlledResources: ["cpu", "memory"]

Configuration guidance:

- Start in updateMode: "Off" to generate recommendations without applying them, and validate before enabling automation

- Set minAllowed and maxAllowed bounds to prevent VPA from over-optimising into OOM risk

- Apply PodDisruptionBudgets to production deployments before enabling Auto mode, because VPA restarts pods to apply resource changes

- Do not run VPA and HPA simultaneously on the same resource dimension (e.g., both targeting CPU). Use HPA for horizontal scaling and VPA for vertical rightsizing
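To make the effect of the minAllowed and maxAllowed bounds concrete, the sketch below clamps a raw recommendation into the range used in the manifest above. It is a simplified illustration in Python: the quantity parsers only handle the `m`, `Ki`, `Mi`, and `Gi` suffixes, and the raw recommendation values are hypothetical.

```python
MEMORY_UNITS = {"Ki": 1024, "Mi": 1024 ** 2, "Gi": 1024 ** 3}


def parse_cpu(quantity):
    """Convert a CPU quantity such as "100m" or "2" into millicores."""
    if quantity.endswith("m"):
        return int(quantity[:-1])
    return int(float(quantity) * 1000)


def parse_memory(quantity):
    """Convert a memory quantity such as "128Mi" or "4Gi" into bytes."""
    for suffix, factor in MEMORY_UNITS.items():
        if quantity.endswith(suffix):
            return int(quantity[:-2]) * factor
    return int(quantity)  # plain byte count


def clamp(value, lower, upper):
    """Keep value inside the inclusive range [lower, upper]."""
    return max(lower, min(value, upper))


# Bounds copied from the VPA manifest; the raw recommendation is hypothetical.
bounded = {
    "cpu_m": clamp(parse_cpu("50m"), parse_cpu("100m"), parse_cpu("2")),
    "memory_bytes": clamp(parse_memory("6Gi"), parse_memory("128Mi"), parse_memory("4Gi")),
}
print(bounded)
```

A recommendation that keeps hitting either bound is a signal to review the bound itself rather than to ignore the recommendation.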

Common Anti-Patterns to Eliminate

Deploy-time requests, never revisited — resource requests set at first deployment, unchanged as workload behaviour evolves for months or years. Fix: resurface rightsizing recommendations in CI/CD pipelines so engineers see cost impact at deploy time, not in quarterly FinOps reviews.

The post-OOM multiplication reflex — 64% of teams add 2–4× memory headroom after a single OOM incident in staging. “It OOMKilled once two years ago, so now we request 4Gi.” The correct fix is setting memory limits at P99 with robust PodDisruptionBudgets — not permanently inflating requests that the cluster bills for continuously.

Identical resource profiles across environments — dev and staging environments running with production-scale resource requests, paying full production cost for workloads that never receive production traffic. Fix: enforce environment-specific resource profiles via namespace-level LimitRange objects.

Requests sized for peak-day capacity: configuring baseline requests for Black Friday or month-end peak means paying for peak-level resources 365 days a year. Use HPA for demand-driven horizontal scaling and set baseline requests at P50, letting autoscaling handle demand spikes.

Pillar 2 — Bin Packing: Maximise Node Utilisation

The Default Kubernetes Scheduling Problem

Kubernetes’ default scheduling strategy is spread-first: pods are distributed evenly across available nodes to maximise resilience and minimise per-node resource contention. This is architecturally sound for availability — and financially expensive.

Spread-first scheduling creates stranded capacity: nodes running at 30–50% allocation appear “in use” to the Cluster Autoscaler, which prevents scale-down and generates continuous node billing for idle infrastructure. A 10-node cluster where each node is at 40% utilisation costs the same as a 10-node cluster at 95% utilisation — but delivers 2.4× less workload per pound of cloud spend.
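The arithmetic behind stranded capacity is easy to check. The following Python sketch assumes identical nodes and treats utilisation as a whole-number percentage; `nodes_needed` is a hypothetical helper introduced for this example.

```python
def nodes_needed(node_count, current_pct, target_pct):
    """Nodes required to hold the same allocated workload at a higher target utilisation."""
    allocated = node_count * current_pct               # workload in node-percent units
    return (allocated + target_pct - 1) // target_pct  # integer ceiling division


# Same bill, different output: workload delivered at 95% versus 40% utilisation.
workload_ratio = 95 / 40

# Consolidating ten nodes to an 80% utilisation target.
from_40_pct = nodes_needed(10, 40, 80)
from_60_pct = nodes_needed(10, 60, 80)
print(workload_ratio, from_40_pct, from_60_pct)
```

Starting from 60% utilisation, the ten-node cluster shrinks to eight nodes, a 20% reduction; starting from 40%, it halves.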

KubeCon Europe 2026 practitioners: “We solved scaling. Now we need to solve efficiency.”

Kubernetes bin-packing, vertical pod autoscaling, and quota guards are identified as core optimisation practices — and for good reason. Consolidation typically improves node utilisation from 40–60% to 75–85%, reducing node count and cost by 20–30% for equivalent workloads.

Bin Packing Implementation

Scheduling policy configuration — switch the kube-scheduler from spread-first to bin-pack-first:

    # kube-scheduler-config.yaml
    apiVersion: kubescheduler.config.k8s.io/v1
    kind: KubeSchedulerConfiguration
    profiles:
      - schedulerName: default-scheduler
        plugins:
          score:
            disabled:
              - name: NodeResourcesBalancedAllocation  # Stop favouring nodes with balanced CPU/memory usage
            enabled:
              - name: NodeResourcesFit
                weight: 1
        pluginConfig:
          - name: NodeResourcesFit
            args:
              scoringStrategy:
                type: MostAllocated  # Enable bin packing
                resources:
                  - name: cpu
                    weight: 1
                  - name: memory
                    weight: 1

Cluster Autoscaler tuning: the default scale-down settings are conservative. Production-grade bin packing usually calls for a more aggressive configuration:

    # cluster-autoscaler deployment args
    --scale-down-utilization-threshold=0.5   # Node is a scale-down candidate below 50% requested utilisation (the default)
    --scale-down-delay-after-add=5m          # Resume scale-down evaluation 5m after a scale-up (default 10m)
    --scale-down-unneeded-time=5m            # Remove a node once it has been unneeded for 5m (default 10m)
    --scale-down-delay-after-failure=3m      # Wait 3m after a failed scale-down before retrying (the default)
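To see why the MostAllocated strategy in the scheduler profile above packs nodes while the default spreads them, the sketch below scores two candidate nodes both ways. It is a simplified Python model of resource-based scoring, written for illustration only: the real scheduler combines many plugins, and the node figures here are hypothetical.

```python
MAX_NODE_SCORE = 100


def most_allocated(requested, allocatable, weights):
    """Higher score for fuller nodes (bin packing)."""
    total = sum(
        weights[r] * requested[r] * MAX_NODE_SCORE // allocatable[r] for r in weights
    )
    return total // sum(weights.values())


def least_allocated(requested, allocatable, weights):
    """Higher score for emptier nodes (spreading)."""
    total = sum(
        weights[r] * (allocatable[r] - requested[r]) * MAX_NODE_SCORE // allocatable[r]
        for r in weights
    )
    return total // sum(weights.values())


weights = {"cpu": 1, "memory": 1}
allocatable = {"cpu": 4000, "memory": 16384}  # millicores, MiB
# Requested totals on each node if the pending pod were placed there.
busy_node = {"cpu": 3000, "memory": 8192}
quiet_node = {"cpu": 1000, "memory": 2048}

packing = (most_allocated(busy_node, allocatable, weights),
           most_allocated(quiet_node, allocatable, weights))
spreading = (least_allocated(busy_node, allocatable, weights),
             least_allocated(quiet_node, allocatable, weights))
print(packing, spreading)
```

Under packing the busy node wins, so the quiet node drains over time and becomes removable; under spreading the quiet node wins and both stay partially full.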

Namespace ResourceQuota enforcement — prevents individual namespaces from over-requesting and distorting bin packing efficiency:

    apiVersion: v1
    kind: ResourceQuota
    metadata:
      name: platform-team-quota
      namespace: platform-team
    spec:
      hard:
        requests.cpu: "20"
        requests.memory: "40Gi"
        limits.cpu: "40"
        limits.memory: "80Gi"
        pods: "100"
    ---
    apiVersion: v1
    kind: LimitRange
    metadata:
      name: platform-team-limits
      namespace: platform-team
    spec:
      limits:
        - default:
            cpu: "500m"
            memory: "512Mi"
          defaultRequest:
            cpu: "100m"
            memory: "128Mi"
          type: Container

Node Pool Heterogeneity

Bin packing efficiency improves significantly when the scheduler can choose between multiple node types matched to workload profiles:

| Node Pool Type | Instance Family | Best For |
|---|---|---|
| General purpose | m5/D-series/N2 | Web services, APIs, microservices |
| Compute optimised | c5/Fsv2/C2 | CPU-intensive batch, data processing |
| Memory optimised | r5/Edsv5/M2 | Caching, in-memory databases, large JVM workloads |
| GPU (training) | p4/NC A100/A2 | Distributed model training |
| GPU (inference) | g5/NVv4/T4 | Real-time inference serving |
| Spot / Preemptible | Any family | Fault-tolerant batch, training jobs with checkpointing |

Using Karpenter (AWS) or node auto-provisioning (GKE) rather than static node pools lets the cluster provision an instance type matched to each pending pod, eliminating the stranded capacity that accumulates when all workloads share uniform node types.
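The selection logic that a node provisioner applies can be approximated as "cheapest instance type that fits the pending pod". The Python sketch below illustrates that idea only; it is not Karpenter's algorithm, and the catalogue names, sizes, and hourly prices are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InstanceType:
    name: str
    cpu_m: int          # allocatable CPU in millicores
    memory_mib: int     # allocatable memory in MiB
    hourly_cost: float  # hypothetical price


def cheapest_fit(catalogue, cpu_m, memory_mib):
    """Return the lowest-cost instance type that can hold the request, or None."""
    candidates = [
        i for i in catalogue if i.cpu_m >= cpu_m and i.memory_mib >= memory_mib
    ]
    return min(candidates, key=lambda i: i.hourly_cost, default=None)


# Hypothetical catalogue with one option per node pool profile.
catalogue = [
    InstanceType("general-4x16", 4000, 16384, 0.20),
    InstanceType("compute-8x16", 8000, 16384, 0.34),
    InstanceType("memory-4x32", 4000, 32768, 0.26),
]

memory_heavy = cheapest_fit(catalogue, cpu_m=3000, memory_mib=24576)
cpu_heavy = cheapest_fit(catalogue, cpu_m=6000, memory_mib=8192)
too_large = cheapest_fit(catalogue, cpu_m=16000, memory_mib=8192)
print(memory_heavy, cpu_heavy, too_large)
```

With only the general-purpose type available, both pods would either fail to schedule or force a larger, more expensive uniform node.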

Pillar 3 — GPU Optimisation: The New Cost Frontier

Why GPUs Change the Economics Entirely

NVIDIA GPU instances cost 10–50× more than equivalent CPU instances. An 8×A100 node pool on any hyperscaler costs $28–35/hr — $672–$840/day — whether the GPUs are active or idle.

The core governance problem: a quantised large language model running inference on an 80GB A100 might consume only 12GB of GPU memory and operate at 30–35% compute utilisation. That’s 65–70% of an expensive accelerator sitting idle, yet Kubernetes considers it fully occupied.

Default Kubernetes GPU scheduling treats GPUs as binary atomic resources — a pod either has an entire GPU or has none. There is no native sharing mechanism. CNCF production case studies show that advanced GPU scheduling can improve utilisation from 13% to 37% — nearly tripling efficiency — with some implementations pushing past 80%.
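The cost figures in this section follow from simple arithmetic, sketched here in Python. The helper names are hypothetical, and the effective-cost calculation assumes that the node price is split evenly across its eight GPUs.

```python
def daily_cost(hourly_rate):
    """Cost of running a node for 24 hours, busy or idle."""
    return hourly_rate * 24


def cost_per_utilised_gpu_hour(node_hourly_rate, gpus_per_node, utilisation_pct):
    """Effective price of one hour of useful GPU work at a given utilisation."""
    return node_hourly_rate * 100 / (gpus_per_node * utilisation_pct)


low_day, high_day = daily_cost(28), daily_cost(35)

# An 8-GPU node at $28/hr is $3.50 per GPU-hour on paper.
list_price = 28 / 8
# At 35% compute utilisation the useful work costs far more.
effective_price = cost_per_utilised_gpu_hour(28, 8, 35)
print(low_day, high_day, list_price, effective_price)
```

At 35% utilisation, each hour of useful GPU work costs $10 rather than the $3.50 list price per GPU-hour.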

NVIDIA MIG: Hardware-Level GPU Partitioning

Multi-Instance GPU (MIG) is NVIDIA’s hardware partitioning technology available on A100, H100, and H200 GPUs. MIG divides a single physical GPU into up to 7 independent instances, each with dedicated streaming multiprocessors, memory, memory bandwidth, and L2 cache.

NVIDIA A100 80GB: MIG Profile Options

| Profile | Instances | Memory | Use Case |
|---|---|---|---|
| 1g.10gb | × 7 | 10 GB | Light inference (7B quant models) |
| 2g.20gb | × 3 | 20 GB | Medium inference (13B models) |
| 3g.40gb | × 2 | 40 GB | Large inference (70B quant) |
| 4g.40gb | × 1 | 40 GB | Heavy inference / light training |
| 7g.80gb | × 1 | 80 GB | Full GPU, large training |

Economic impact: 10 inference workloads that previously required 10 dedicated A100s can run on 2 A100s with 1g.10gb MIG profiles (7 instances per GPU), provided each workload fits within a 10 GB slice. Performance benchmarks show up to a 40% increase in GPU utilisation in multi-tenant environments with MIG.
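Profile choice and GPU count can be estimated from the profile table. The Python sketch below is a rough sizing aid under stated assumptions: it sizes on memory only, ignores compute needs, and assumes every GPU is partitioned with a single profile.

```python
import math

# (profile, memory in GB, instances per A100 80GB), taken from the profile table.
A100_80GB_PROFILES = [
    ("1g.10gb", 10, 7),
    ("2g.20gb", 20, 3),
    ("3g.40gb", 40, 2),
    ("4g.40gb", 40, 1),
    ("7g.80gb", 80, 1),
]


def smallest_profile(memory_gb):
    """Return the first (smallest) profile whose memory covers the workload."""
    for name, profile_memory, per_gpu in A100_80GB_PROFILES:
        if profile_memory >= memory_gb:
            return name, per_gpu
    raise ValueError("workload does not fit on a single A100 80GB")


def gpus_needed(workload_count, memory_gb):
    """Physical GPUs required to host identical workloads on one profile."""
    name, per_gpu = smallest_profile(memory_gb)
    return name, math.ceil(workload_count / per_gpu)


small_models = gpus_needed(10, 8)    # each workload needs 8 GB
medium_models = gpus_needed(10, 12)  # each workload needs 12 GB
print(small_models, medium_models)
```

A workload that needs 12 GB does not fit a 1g.10gb slice, so the same ten workloads need four GPUs on 2g.20gb rather than two.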

Pod configuration for MIG workloads:

    # Small inference using 1g.10gb MIG slice
    apiVersion: v1
    kind: Pod
    metadata:
      name: llm-inference-small
      namespace: ml-inference
      labels:
        team: ml-platform
        product: recommendation-engine
        workload-type: real-time-inference
        cost-centre: prod-ml-001
    spec:
      schedulerName: kai-scheduler
      containers:
        - name: triton-server
          image: nvcr.io/nvidia/tritonserver:24.01-py3
          resources:
            limits:
              nvidia.com/mig-1g.10gb: 1

MIG governance considerations:

- Partitioning modes cannot change without draining nodes — plan profiles based on workload mix before enabling

- Each MIG instance appears as a separate GPU to Kubernetes — enables per-instance cost attribution

- Requires NVIDIA GPU Operator v25+ and compatible drivers

- Hardware-level fault isolation: a crash in one MIG instance cannot affect others — production-safe for multi-tenant inference

GPU Time-Slicing: Software Sharing for Development Workloads

For workloads that do not require the isolation guarantees of MIG, time-slicing enables multiple pods to share a single GPU through software-based time-division multiplexing:

    # nvidia-time-slicing-config.yaml
    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: time-slicing-config
      namespace: gpu-operator
    data:
      config.yaml: |
        version: v1
        sharing:
          timeSlicing:
            resources:
              - name: nvidia.com/gpu
                replicas: 10  # 10 pods share each physical GPU

Time-slicing trade-offs:

| Property | MIG | Time-Slicing |
|---|---|---|
| Memory isolation | ✓ Hardware-level | ✗ None (shared) |
| Fault isolation | ✓ Hardware-level | ✗ None |
| Hardware requirement | Ampere+ (A100, H100) | Any NVIDIA GPU |
| Instances per GPU | Up to 7 | Up to 48 (configurable) |
| Latency predictability | High (dedicated resources) | Variable (context switching overhead) |
| Best fit | Production inference, SLA workloads | Dev, notebooks, experimentation |

Workload Segmentation: Training vs. Inference

Training and inference have fundamentally different GPU governance requirements:

Training workloads — GPU-intensive, batch-oriented, tolerates interruption:

- Run on Spot / Preemptible GPU instances — saves 50–70% vs. on-demand

- Require gang scheduling — all pods in a distributed training run must start simultaneously

- Require checkpoint-and-restart — enables graceful recovery from Spot preemption

- Set training job SLA limits — auto-terminate jobs exceeding defined time or cost thresholds

Inference workloads — latency-sensitive, steady-state, requires availability SLA:

- Run on dedicated MIG-partitioned GPU instances — predictable performance, hardware isolation

- Use HPA with GPU utilisation metrics for demand-driven scaling

- Apply per-namespace token budget caps when serving AI API workloads with usage-based billing

Queue-Based Admission Control

The organisations achieving the best GPU economics implement queue-based admission control from day one. Rather than letting individual teams provision and hoard GPU nodes, they establish organisational queues with guaranteed quotas, borrowing policies, and fair-share algorithms. This alone can boost effective utilisation by 30–50% because idle resources are automatically redistributed.

Tools: Volcano (batch workloads), Kueue (native Kubernetes queue management), NVIDIA Run:ai (enterprise GPU orchestration).

Queue-based allocation naturally generates per-team GPU attribution — the governance structure that makes accurate chargeback possible.
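Guaranteed quotas with borrowing can be illustrated with a small allocation routine. This Python sketch is not the algorithm used by Volcano, Kueue, or Run:ai: it assumes quotas sum to no more than the pool size, lends spare GPUs round-robin, and ignores preemption when a lender reclaims capacity. The queue names and numbers are hypothetical.

```python
def allocate(total_gpus, queues):
    """Grant each queue its guaranteed share, then lend idle GPUs to queues with unmet demand.

    queues maps a queue name to {"quota": int, "demand": int}.
    """
    # Pass 1: every queue receives what it asks for, up to its guaranteed quota.
    granted = {name: min(q["quota"], q["demand"]) for name, q in queues.items()}
    spare = total_gpus - sum(granted.values())

    # Pass 2: lend spare GPUs one at a time, round-robin, to queues still waiting.
    while spare > 0:
        hungry = [name for name, q in queues.items() if granted[name] < q["demand"]]
        if not hungry:
            break
        for name in hungry:
            if spare == 0:
                break
            granted[name] += 1
            spare -= 1
    return granted


queues = {
    "research": {"quota": 8, "demand": 2},
    "vision": {"quota": 4, "demand": 9},
    "nlp": {"quota": 4, "demand": 6},
}
granted = allocate(16, queues)
print(granted)
```

Without borrowing, six of the sixteen GPUs in this example would sit idle inside the research quota while two other teams queue for capacity.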

GPU Lifecycle Automation

The most immediately recoverable GPU waste is idle capacity between jobs. Configure automated lifecycle management:

    # Example: CronJob for GPU idle detection and scale-down
    # (deployed as a controller; pseudocode for policy intent, not a Kubernetes API)
    policies:
      - name: gpu-idle-scale-down
        trigger:
          metric: gpu_utilisation_percent
          threshold: 15
          duration: 30m  # Scale down after 30 min below threshold
        action: scale_node_pool_to_zero
        notification: slack:#platform-alerts
      - name: training-job-sla
        trigger:
          metric: job_runtime_hours
          threshold: 48  # Alert at 48 hrs, terminate at 72 hrs
        action:
          - alert: owner_team
          - at_72h: terminate_job

Expected impact of GPU lifecycle automation:

- Idle detection and reclamation recovers 15–20% of GPU capacity

- Training job SLA enforcement prevents runaway jobs consuming weeks of GPU budget undetected

- Off-peak batch scheduling on Spot/Preemptible GPUs saves 50–70% for non-time-sensitive workloads
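The two policies described above reduce to small decision functions. The Python sketch below shows one possible reading of the policy intent: it assumes utilisation samples arrive at a fixed five-minute interval, and the function names are hypothetical rather than part of any existing controller.

```python
def idle_for_window(samples, threshold_pct=15, window_minutes=30, interval_minutes=5):
    """True when every sample in the most recent window is below the threshold.

    samples are GPU utilisation percentages, oldest first, one per interval.
    """
    needed = window_minutes // interval_minutes
    recent = samples[-needed:]
    # Require a full window of data before declaring the GPU idle.
    return len(recent) == needed and all(s < threshold_pct for s in recent)


def sla_action(runtime_hours, alert_at=48, terminate_at=72):
    """Return the action a training job has earned based on its runtime."""
    if runtime_hours >= terminate_at:
        return "terminate"
    if runtime_hours >= alert_at:
        return "alert"
    return "none"


quiet_gpu = idle_for_window([80, 10, 9, 5, 3, 2, 1])
bursty_gpu = idle_for_window([10, 9, 5, 3, 40, 1])
new_gpu = idle_for_window([1, 2])
actions = [sla_action(h) for h in (10, 50, 72)]
print(quiet_gpu, bursty_gpu, new_gpu, actions)
```

Requiring a full window of samples avoids scaling down a node pool that has only just started reporting metrics.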

The Missing Layer: Cost Attribution

Rightsizing and bin packing reduce waste. Attribution makes that reduction measurable, accountable, and improvable.

Without attribution, there is no answer to: Who owns this cost? Which product generated this spend? Is this GPU bill generating revenue?

Namespace-Level Cost Attribution: The Minimum Viable FinOps Unit

Apply six mandatory labels to every namespace — enforced at pod admission so no workload enters the cluster without complete attribution metadata:

| Label Key | Purpose | Example Values |
|---|---|---|
| team | Owning engineering team for chargeback | ml-platform, data-eng, product-infra |
| product | Product line for P&L attribution | recommendations, search, analytics |
| environment | Cost bucket separation | production, staging, dev |
| cost-centre | Finance allocation code | eng-ml-001, product-001 |
| workload-type | Economics differentiation | batch-training, real-time-inference, data-pipeline |
| focus-service | FOCUS standard service category | Enables cross-cloud cost normalisation |
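The admission check for these six labels amounts to a simple validation. The Python sketch below shows the logic only; in a cluster it would sit behind an admission webhook or policy engine, and the `admit` function and sample label values are assumptions made for the example.

```python
REQUIRED_LABELS = (
    "team",
    "product",
    "environment",
    "cost-centre",
    "workload-type",
    "focus-service",
)


def missing_labels(labels):
    """Return required label keys that are absent or empty."""
    return [key for key in REQUIRED_LABELS if not labels.get(key)]


def admit(labels):
    """Return (allowed, missing) for a workload's attribution labels."""
    missing = missing_labels(labels)
    return (not missing, missing)


complete = {
    "team": "ml-platform",
    "product": "recommendations",
    "environment": "production",
    "cost-centre": "eng-ml-001",
    "workload-type": "real-time-inference",
    "focus-service": "compute",  # hypothetical value
}
incomplete = {"team": "data-eng", "environment": "dev", "product": ""}

allowed, _ = admit(complete)
rejected = admit(incomplete)
print(allowed, rejected)
```

Returning the list of missing keys lets the rejection message tell the engineer exactly what to add.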

Mapping Infrastructure Cost to Business Metrics

The goal of attribution is not to produce a better cost report — it is to produce business-legible metrics that connect Kubernetes spend to product outcomes:

| Kubernetes Metric | Business Metric |
|---|---|
| CPU cost per namespace | Cost per product feature running in that namespace |
| GPU cost per workload-type: real-time-inference | Cost per inference served |
| GPU cost per workload-type: batch-training | Cost per model training run |
| Storage cost per PVC label | Cost per GB of data under management |
| Network egress cost per workload | Cost per API call / per data transfer event |
| Total cluster cost / active users | Cloud cost per active user |
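One way to move from a cluster bill to a unit cost is to split the bill in proportion to requested resources and then divide by a business volume. The Python sketch below assumes a CPU-only allocation model and uses hypothetical figures throughout; real allocation models usually blend CPU, memory, and GPU.

```python
def allocate_by_requests(cluster_cost, requests_by_namespace, cluster_capacity):
    """Split cluster cost by each namespace's share of capacity; the remainder is idle."""
    costs = {
        namespace: cluster_cost * requested / cluster_capacity
        for namespace, requested in requests_by_namespace.items()
    }
    costs["idle"] = cluster_cost - sum(costs.values())
    return costs


def unit_cost(namespace_cost, units_served):
    """Cost per business unit, for example per inference served."""
    return namespace_cost / units_served


# Hypothetical month: 60,000 in cluster cost, 1,200 cores of capacity.
costs = allocate_by_requests(
    cluster_cost=60_000,
    requests_by_namespace={"recommendations": 480, "search": 360},
    cluster_capacity=1_200,
)
cost_per_inference = unit_cost(costs["recommendations"], units_served=12_000_000)
print(costs, cost_per_inference)
```

Reporting the idle remainder as its own line keeps unallocated capacity visible instead of silently spreading it across teams.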

Forrester: Organisations that link cloud spend to business outcomes — not just infrastructure dashboards — demonstrate the most mature and defensible FinOps programmes.

The Rise of Real-Time Kubernetes FinOps

Static reporting is no longer sufficient. Modern Kubernetes environments require:

Continuous utilisation telemetry — real-time CPU, memory, GPU, and network utilisation per pod and namespace. Not daily or hourly aggregates that miss the ephemeral spike patterns driving rightsizing decisions.

Automated recommendation surfacing — rightsizing recommendations based on multi-week rolling window data, surfaced in CI/CD pipelines so engineers see cost impact before deploying.

Policy enforcement at pod admission — mandatory resource request validation and label enforcement at admission time, preventing overprovisioned workloads from entering the cluster.

Anomaly detection — spend trajectory monitoring that detects workloads deviating from their historical cost pattern, surfacing anomalies in minutes rather than at month-end reconciliation.

Automated governance actions — policy-driven rightsizing application, node scale-down, GPU idle detection, and training job SLA enforcement. Governance that acts without requiring manual FinOps review of each incident.
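The anomaly detection described above can be as simple as comparing the latest spend with a workload's own history. The Python sketch below uses a z-score against a trailing window; the threshold of three standard deviations and the daily cost series are assumptions, and production systems typically also account for seasonality.

```python
from statistics import mean, stdev


def is_anomalous(history, latest, z_threshold=3.0):
    """Flag latest if it deviates from the historical mean by more than z_threshold sigmas."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return latest != mu
    return abs(latest - mu) / sigma > z_threshold


# Hypothetical daily namespace cost for the previous eight days.
daily_cost = [100, 102, 98, 101, 99, 100, 103, 97]

normal_day = is_anomalous(daily_cost, 104)
runaway_day = is_anomalous(daily_cost, 140)
print(normal_day, runaway_day)
```

Running the check per namespace on each telemetry interval is what turns month-end surprises into same-day alerts.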

Deloitte and PwC: Continuous financial governance embedded into execution workflows consistently outperforms post-hoc cost cleanup — in Kubernetes environments more than any other infrastructure category.

Enterprises with mature FinOps systems see 40% better budget accuracy year-over-year. The mechanism is continuous governance — not periodic reviews.

What Great Kubernetes FinOps Looks Like

Leading organisations have moved Kubernetes FinOps from a periodic reporting activity to an embedded operational discipline:

They continuously rightsize workloads — VPA runs in every namespace, recommendations surface in CI/CD, and resource requests are updated on a rolling basis rather than frozen at deployment.

They optimise bin packing dynamically — bin-packing scheduler policies run continuously, Cluster Autoscaler scale-down thresholds are tuned aggressively, and node utilisation is tracked as a first-class platform metric.

They govern GPU usage aggressively — MIG profiles are matched to workload types, queue-based admission prevents resource hoarding, idle GPU detection triggers automated scale-down, and training jobs have SLA enforcement that terminates runaway runs.

They align engineering with cost accountability — namespace-level attribution generates automatic chargeback reports, cost per feature is visible to product teams in real time, and developers see cost impact in deployment pipelines before changes reach production.

They integrate FinOps into platform engineering — cost guardrails are enforced by the platform, not negotiated with engineering teams. Governance operates at the admission and provisioning layer — not in monthly retrospective meetings.

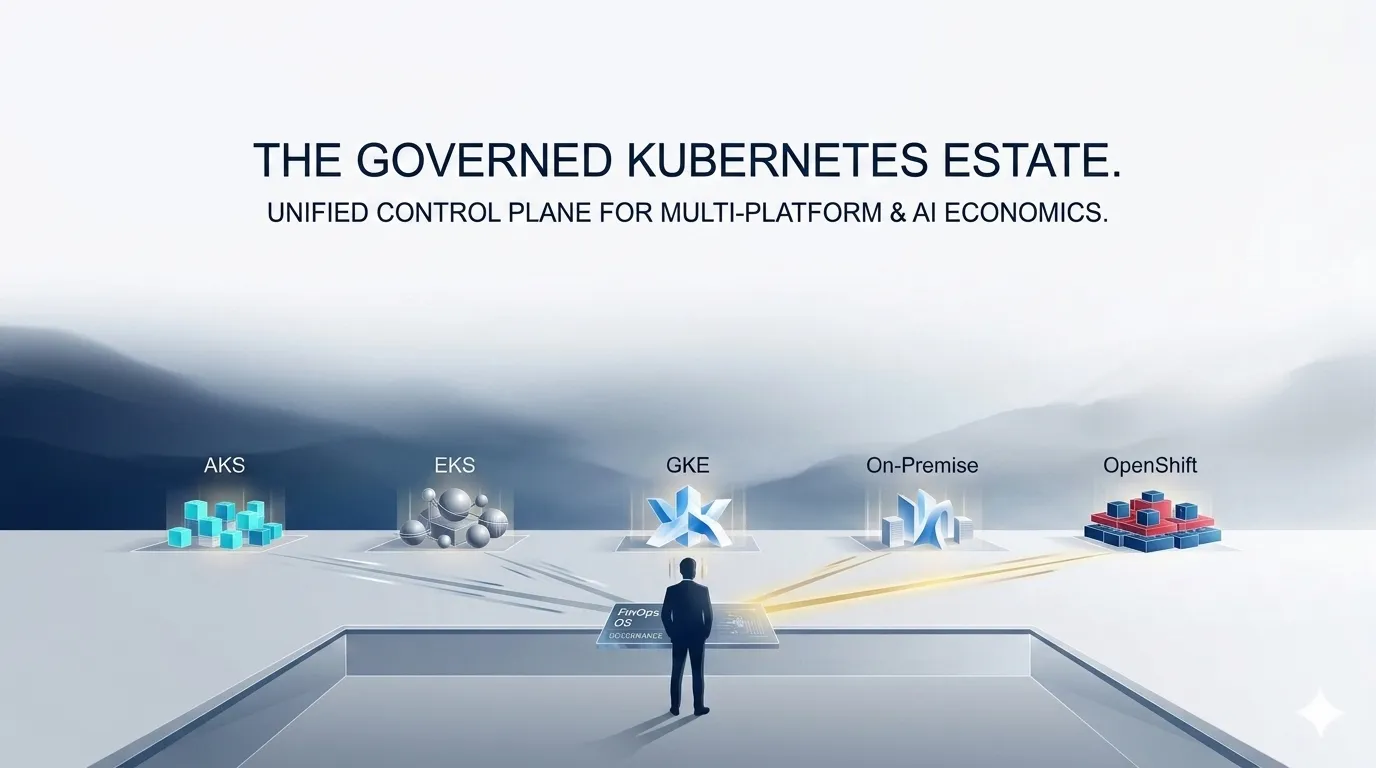

DigiUsher: A FinOps OS for Kubernetes

DigiUsher’s FinOps Operating System provides the governance layer that makes Kubernetes FinOps continuous, enforceable, and financially accountable:

Workload-level cost visibility — namespace, label, and pod-level cost attribution across AKS, EKS, GKE, OpenShift, and on-premises, normalised to FOCUS 1.x for cross-cluster comparability

Real-time rightsizing intelligence — continuous telemetry analysis surfaces rightsizing recommendations as governance signals, integrated with CI/CD pipelines and surfaced per namespace in FinOps dashboards

GPU and AI cost governance — MIG-aware cost attribution per GPU instance, idle GPU detection with automated scale-down triggers, training job SLA enforcement, and inference cost attribution per team and product

Mandatory policy enforcement — tagging requirements enforced at pod admission, budget guardrails that trigger automated actions when namespace spend thresholds are approached, continuous lifecycle automation

Cross-platform coverage — Kubernetes cost data alongside AI APIs, Snowflake, Databricks, SaaS, and cloud Marketplace charges in one FOCUS-normalised financial model

Available as SaaS, Managed SaaS or BYOC for regulated industries. SOC 2® Type II and GDPR certified. Delivered globally through Infosys, Wipro, and Hexaware.

The outcome: Kubernetes stops being the most misunderstood cost driver in your cloud bill and becomes a financially governed, continuously optimised competitive infrastructure.

Frequently Asked Questions

What is FinOps for Kubernetes and why does it require a different approach from cloud FinOps?

FinOps for Kubernetes is financial governance applied at the workload level — pod, namespace, and cluster — rather than the infrastructure level. It requires a different approach because Kubernetes abstracts underlying VMs: one VM hosts dozens of containers from multiple teams, one application spans multiple clusters, and workloads appear and disappear in seconds. Traditional cloud FinOps reports on VM cost; Kubernetes FinOps must attribute workload cost using namespace, pod, and label metadata, and govern the overprovisioned resource requests that generate cloud billing without productive output.

What is Kubernetes rightsizing and how do I do it correctly?

Kubernetes rightsizing sets CPU and memory requests and limits to match actual workload behaviour rather than developer estimates. Only 13% of requested CPU is actually used on average. The correct method uses percentile-based analysis: CPU requests at P50, CPU limits at P95, memory requests at P95, memory limits at P99. Implement with Vertical Pod Autoscaler (VPA) starting in recommendation mode, then Auto mode with PodDisruptionBudgets. Use multi-week rolling window data — not snapshots — as workload behaviour changes continuously.

What is bin packing in Kubernetes and how does it reduce costs?

Bin packing schedules pods onto the fewest possible nodes — filling each node to near-capacity before provisioning new ones. Default spread-first scheduling distributes pods evenly, creating nodes at 30–50% utilisation that the autoscaler cannot terminate. Bin packing consolidates workloads, raises node utilisation to 75–85%, and enables the autoscaler to terminate empty nodes. Implement by configuring the MostAllocated score plugin as primary in kube-scheduler configuration, alongside aggressive Cluster Autoscaler scale-down thresholds.

How does NVIDIA MIG improve GPU cost efficiency in Kubernetes?

MIG (Multi-Instance GPU) partitions a single A100 or H100 into up to 7 hardware-isolated instances, each with dedicated memory and compute. A quantised inference workload that fits within 10GB of an 80GB A100 can be served by a 1g.10gb MIG instance, freeing the remaining GPU capacity for 6 additional inference workloads; a workload that needs 12GB requires the larger 2g.20gb profile, of which three fit on one GPU. MIG achieves up to a 40% GPU utilisation improvement in multi-tenant environments. 10 inference jobs that previously required 10 A100s can run on 2 A100s with 1g.10gb profiles.

What is the difference between GPU MIG and GPU time-slicing in Kubernetes?

MIG provides hardware-level partitioning on Ampere and newer data-centre GPUs (A100, H100), with dedicated memory, compute, and fault isolation per partition. It is suitable for production inference with SLA requirements. Time-slicing is software-based sharing on any NVIDIA GPU: multiple pods share a GPU’s execution context through time multiplexing, with no memory or fault isolation. It is suitable for development, notebooks, and experimentation where latency jitter is acceptable. Use MIG for production, time-slicing for development, and full GPUs for training.

How do you implement cost attribution for Kubernetes workloads?

Apply six mandatory labels to every namespace: team, product, environment, cost-centre, workload-type, and focus-service. Enforce at pod admission using admission controllers or a FinOps OS so no pod enters without complete metadata. Map namespace costs to business metrics: cost per feature, cost per inference, cost per training run. Use the FinOps Foundation FOCUS standard to normalise attributed costs across cloud providers and Kubernetes platforms.

Why does traditional FinOps fail for Kubernetes environments?

Traditional FinOps operates at the infrastructure layer — one VM equals one cost unit. Kubernetes abstracts this: one VM hosts dozens of containers from multiple teams. Cloud billing shows VM cost but not which pods ran on it, which team owns them, or whether requested resources were used. A cluster billed at £100,000/month may generate only £35,000/month of productive workload value — with 65% of resources idle in overprovisioned containers. Standard cloud cost tools cannot surface or act on this structural waste.

How does DigiUsher’s FinOps OS govern Kubernetes costs differently?

DigiUsher governs at the workload level through four capabilities: workload-level cost visibility with namespace and pod attribution across AKS, EKS, GKE, OpenShift, and on-prem normalised to FOCUS 1.x; real-time rightsizing insights surfaced as governance signals in CI/CD pipelines; GPU and AI cost governance with MIG-aware attribution, idle detection, and training job SLA enforcement; and mandatory policy enforcement with admission-time tag validation and automated budget guardrails. Governance that acts before spend occurs — not monthly reports explaining waste that has already accumulated.

References

- Wozz Production Cluster Study 2026 — Memory Overprovisioning in 3,042 Clusters

- Sedai — Kubernetes Cost and Resource Optimisation Guide 2026

- CIO — How Kubernetes Is Finally Solving the GPU Utilisation Crisis

- Spheron — Kubernetes GPU Orchestration 2026: DRA, KAI Scheduler

- Amnic — Top Cloud Cost Trends 2025 and 2026

- Cloud Wastage Statistics 2025–2026

- NVIDIA MIG Documentation — Kubernetes GPU Operator

- FinOps Foundation — FOCUS Specification

- CNCF Survey 2024 — Kubernetes Adoption

- KubeCon Europe 2026 proceedings

- McKinsey — Cloud Cost Optimisation: Continuous vs. Periodic Reviews
