Multi-Cloud Kubernetes Strategy: Why Portability Without Cost Governance Fails

Multi-cloud Kubernetes delivers portability across AKS, EKS, GKE, and OpenShift — but portability does not solve economics. Discover the five ways cost fragmentation silently destroys multi-cloud ROI, how leading platform teams are building financial orchestration alongside workload orchestration, and why DigiUsher's FinOps OS outperforms IBM Cloudability, CloudZero, Vantage, and FinOut for Kubernetes cost governance.

Author

DigiUsher

Executive Summary

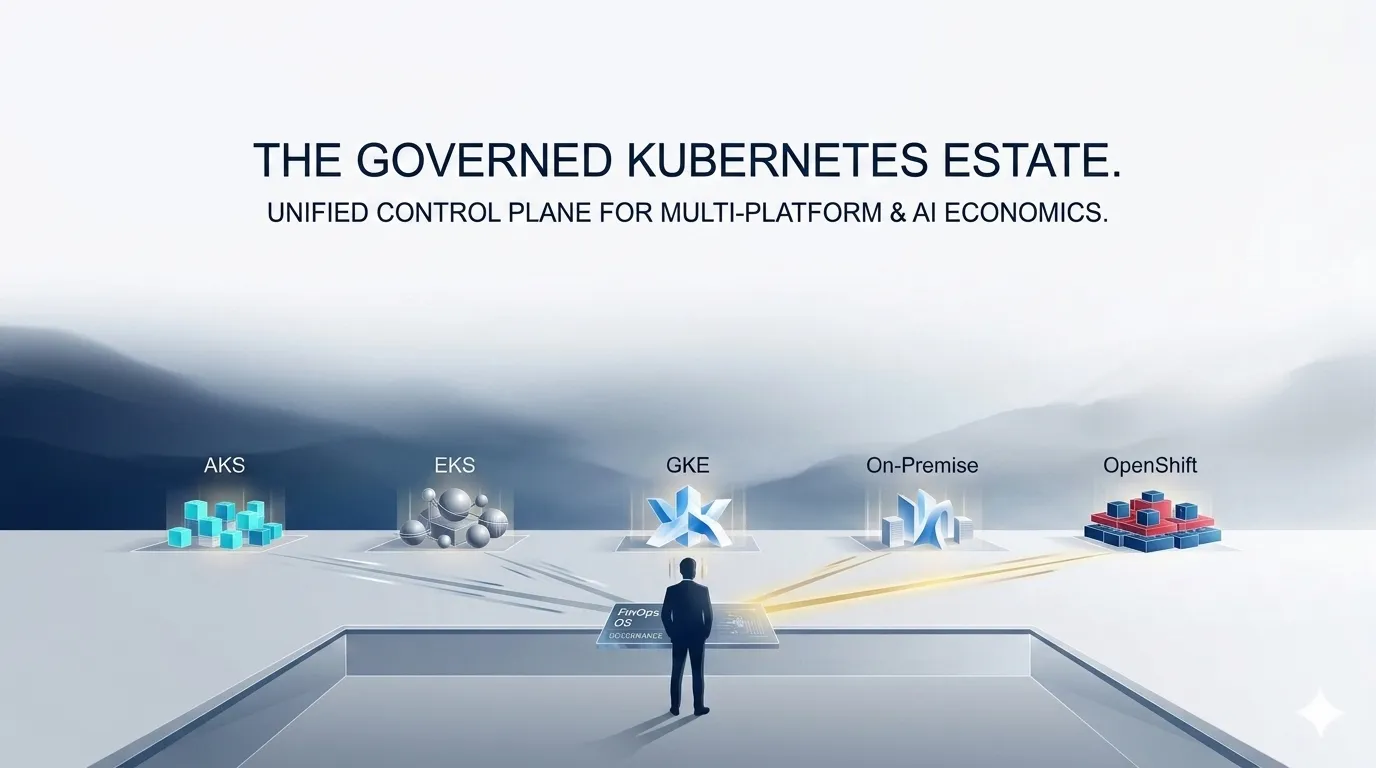

Multi-cloud Kubernetes has become the default architecture for modern enterprises. The promise is compelling: portability across AKS, EKS, GKE, and OpenShift; vendor independence; workload flexibility; resilience at scale.

But there is a fundamental flaw in how enterprises approach this strategy.

Portability solves deployment. It does not solve economics.

At KubeCon Europe 2026, one theme emerged consistently from practitioners across every session on platform engineering and cloud operations:

“Multi-cloud is easy to build. It is extremely hard to operate efficiently.”

Without financial orchestration alongside workload orchestration, multi-cloud Kubernetes becomes fragmented, opaque, and financially inefficient — generating cost complexity that compounds with every workload added to the estate.

This briefing covers:

- The five structural failures that destroy multi-cloud Kubernetes ROI

- A platform-by-platform cost complexity guide: AKS, EKS, GKE, OpenShift

- What financial orchestration for Kubernetes actually looks like

- How DigiUsher’s FinOps OS outperforms IBM Cloudability, CloudZero, Vantage, and FinOut on every critical governance dimension

- An actionable implementation framework for platform engineering and FinOps teams

What Is Multi-Cloud Kubernetes Cost Governance?

Multi-cloud Kubernetes cost governance is the practice of enforcing financial policy, attributing workload costs, and optimising resource utilisation across Kubernetes clusters running on multiple cloud providers simultaneously.

It is distinct from Kubernetes cost visibility — dashboards that show cluster-level spend — in one critical respect: governance acts on cost before it occurs through policy enforcement, cost-aware placement decisions, and automated lifecycle management. Visibility shows you what was spent. Governance determines what gets spent.

The gap between the two is where multi-cloud Kubernetes economics fail.

The Multi-Cloud Illusion: Portability ≠ Cost Efficiency

Kubernetes abstracts infrastructure differences across cloud providers. A containerised workload runs on AKS, EKS, or GKE with no code changes. This creates a powerful and pervasive illusion:

If workloads are portable, costs are also portable.

This is incorrect — and the misunderstanding is expensive.

Each cloud provider has fundamentally different pricing models, network cost structures, storage economics, and GPU pricing tiers. The same workload running on equivalent infrastructure can have materially different costs across clouds once all cost dimensions are accounted for:

| Cost Dimension | AWS (EKS) | Azure (AKS) | GCP (GKE) |
|---|---|---|---|
| Compute | EC2 instance pricing per family | VM SKU pricing per tier | Compute Engine + sustained use discounts |
| Storage | EBS per provisioned GB, plus IOPS on some volume types | Azure Disk per tier | Persistent Disk per GB |
| Networking | NAT Gateway + cross-AZ + egress | Load Balancer + egress | VPC egress + premium tier |
| GPU | P3/P4/G5 instances by type | NCv3/NC A100 by SKU | A100/T4 with preemptible options |
| Discounts | Reserved Instances + Savings Plans | Reserved VM + Spot | Sustained use (automatic) + Spot |

Without normalising these dimensions to a common schema, cross-cloud cost comparisons are structurally inaccurate. Optimisation decisions made on inaccurate comparisons increase cost rather than reducing it.

Why Portability Without Governance Fails: Five Structural Failures

Failure 1 — No Cross-Cloud Cost Normalisation

Each cloud provider defines cost using incompatible primitives. AWS bills by instance type, EBS IOPS, and data transfer line items. Azure bills by resource group and consumption tier. GCP applies sustained use discounts retroactively, making the apparent cost at month-start different from the settled cost at month-end.

Without normalisation, this creates a critical problem: comparisons are inaccurate, and optimisation decisions are flawed.

A workload appearing to cost $9,500/month on GKE versus $12,000/month on EKS may actually be more expensive on GKE once egress charges, sustained use discount eligibility, and storage tier differences are normalised to equivalent units. The apparent saving reverses on accurate analysis — but without normalisation, the enterprise migrates to GKE and increases cost.
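The reversal described above can be sketched in a few lines of Python. The two headline figures come from the example; the egress and storage adders are hypothetical values chosen only to illustrate the mechanism and are not taken from any provider's price list.

```python
from dataclasses import dataclass


@dataclass
class WorkloadCost:
    """Monthly cost of one workload on one platform, in USD."""
    platform: str
    apparent: float  # headline figure from the example above
    egress: float    # hypothetical adder, for illustration only
    storage: float   # hypothetical adder, for illustration only

    @property
    def total(self) -> float:
        return self.apparent + self.egress + self.storage


def cheaper(a: WorkloadCost, b: WorkloadCost, *, normalised: bool) -> str:
    """Return the platform that looks cheaper, with or without the adders."""
    key = (lambda w: w.total) if normalised else (lambda w: w.apparent)
    return min((a, b), key=key).platform


gke = WorkloadCost("GKE", apparent=9_500, egress=2_400, storage=900)
eks = WorkloadCost("EKS", apparent=12_000, egress=300, storage=200)

print(cheaper(gke, eks, normalised=False))  # GKE
print(cheaper(gke, eks, normalised=True))   # EKS
```

With these assumed adders the GKE total is $12,800 against $12,500 on EKS, so the apparent saving disappears once every dimension is counted.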

The FinOps Foundation’s FOCUS specification is the emerging standard for cross-cloud cost normalisation. Adoption requires a FOCUS-native platform — not manual ETL pipelines that add weeks of engineering overhead and introduce their own normalisation errors.

DigiUsher’s response: FOCUS 1.x native engine ingests AKS, EKS, GKE, and OpenShift billing data and normalises it to a single schema — making cross-cloud cost comparisons accurate and workload placement decisions financially sound.

Failure 2 — Workload Placement Is Not Cost-Aware

Kubernetes schedulers optimise for availability and performance. They do not optimise for cost. Workloads are placed on whatever infrastructure is available and meets resource constraints — without any awareness of whether that infrastructure is the most cost-efficient option across the multi-cloud estate.

The consequence: workloads are not placed based on cheapest viable infrastructure, and cross-cloud arbitrage is never leveraged.

A stateless inference workload scheduled on an on-demand GPU instance in AKS at $28/hr could run on preemptible GPU capacity in GKE at $8–10/hr for identical throughput. Without cost-aware placement insights surfacing this arbitrage, the scheduler never considers the cheaper option — and platform engineering never sees the saving available to them.
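As a quick check on the size of that arbitrage, the following sketch computes the percentage saving from the hourly rates quoted above. The function name is illustrative, and the calculation ignores interruption risk on preemptible capacity.

```python
def saving_pct(current_rate: float, alternative_rate: float) -> float:
    """Percentage saved by moving from current_rate to alternative_rate (USD/hr)."""
    return (current_rate - alternative_rate) / current_rate * 100


on_demand = 28.0           # on-demand GPU rate quoted above
preemptible = (8.0, 10.0)  # preemptible range quoted above

best = saving_pct(on_demand, preemptible[0])
worst = saving_pct(on_demand, preemptible[1])
print(f"{worst:.0f}% to {best:.0f}% lower hourly cost")
```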

KubeCon Europe 2026: “Platform engineering must own cost. Modern platform teams are now expected to provide cost guardrails and expose financial metrics to developers.”

DigiUsher’s response: Cross-cloud optimisation insights surface placement arbitrage opportunities — identifying where workload migration reduces cost without compromising performance SLAs — enabling platform teams to make placement decisions informed by financial data, not just technical constraints.

Failure 3 — Network Costs Destroy Multi-Cloud Economics

Network egress is the most consistently underestimated cost factor in multi-cloud Kubernetes architectures. Data movement across clouds is expensive, and the charges accumulate invisibly.

Inter-cloud traffic introduces:

- Egress charges typically at $0.08–$0.09 per GB, applied at hyperscaler-set list rates with only limited volume tiering

- Latency penalties that degrade application performance in synchronous API architectures

- Hidden cost multipliers that compound at the data volumes modern distributed applications generate

A microservices architecture split across EKS (AWS) and AKS (Azure) for resilience generating 50 TB/month of inter-cloud API traffic incurs approximately $4,000–$4,500/month in egress charges alone at those rates. This appears in the cloud bill as undifferentiated “data transfer” with no workload attribution: invisible to the teams generating it and unrecoverable through any optimisation action other than co-location.
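The egress figure follows directly from the per-GB rates listed above. The sketch below reproduces the arithmetic, assuming 1 TB = 1,000 GB and a flat rate across the whole volume.

```python
GB_PER_TB = 1_000  # assumption: decimal terabytes


def monthly_egress_cost(tb_per_month: float, usd_per_gb: float) -> float:
    """Egress cost in USD for a month of inter-cloud traffic at a flat per-GB rate."""
    return tb_per_month * GB_PER_TB * usd_per_gb


low = monthly_egress_cost(50, 0.08)
high = monthly_egress_cost(50, 0.09)
print(f"50 TB/month costs between ${low:,.0f} and ${high:,.0f}")
```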

McKinsey and BCG consistently identify network costs as a major hidden driver of cloud inefficiency, frequently exceeding 15–20% of total cloud spend in distributed application deployments.

DigiUsher’s response: Workload-level cost attribution surfaces egress and inter-cloud traffic costs attributed to the workloads and teams generating them — enabling co-location decisions that minimise network spend with financial evidence rather than infrastructure instinct.

Failure 4 — GPU and AI Workloads Break Cost Models

AI workloads running across AKS, EKS, and GKE introduce extreme variability in GPU pricing, availability, and utilisation that breaks every cost model built around predictable compute.

Three forces compound simultaneously:

GPU pricing varies materially across clouds. An NVIDIA A100 on-demand instance costs differently on AWS, Azure, and GCP — variance that can exceed 20–40% for equivalent hardware configurations. Without cross-cloud GPU cost normalisation, enterprises cannot identify where their AI training and inference workloads are running on the most expensive infrastructure.

GPU utilisation is frequently low. GPU clusters provisioned for peak training demand sit partially idle during inference, between jobs, and during model evaluation phases. At $28–35/hr for an 8×A100 node pool, idle GPU time is the most expensive waste in the cloud estate.

Kubernetes scheduler has no GPU cost awareness. Without external governance, a training workload is scheduled on whatever GPU node pool is available — on-demand or preemptible, expensive or cheap — because the scheduler optimises for resource availability, not financial efficiency.
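To put a number on the idle-capacity point, the sketch below estimates monthly idle spend for a node pool at the hourly rates quoted above. The 40% utilisation figure and the 730-hour month are assumptions for illustration, not measurements.

```python
HOURS_PER_MONTH = 730  # assumption: average hours in a month


def idle_gpu_cost(hourly_rate_usd: float, utilisation: float,
                  hours: float = HOURS_PER_MONTH) -> float:
    """USD spent on GPU hours that did no work, given a utilisation fraction."""
    if not 0.0 <= utilisation <= 1.0:
        raise ValueError("utilisation must be between 0 and 1")
    return hourly_rate_usd * hours * (1.0 - utilisation)


for rate in (28.0, 35.0):
    idle = idle_gpu_cost(rate, 0.40)
    print(f"${rate:.0f}/hr at 40% utilisation: ${idle:,.0f} idle spend per month")
```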

KubeCon Europe 2026: “AI is forcing cost conversations. With AI workloads, inefficiencies are magnified and cost becomes a primary concern.”

DigiUsher’s response: The AI Economics layer tracks GPU utilisation per cluster and workload across all Kubernetes platforms, enforces lifecycle automation that scales down idle GPU capacity, and attributes inference and training costs to owning teams — transforming GPU spend from an unattributed infrastructure cost into a governed financial object.

Failure 5 — Fragmented Ownership Means No Accountability

In multi-cloud Kubernetes environments, teams operate independently across different clouds with different governance policies, different tagging conventions, and incompatible cost attribution structures.

The operational reality:

- The AWS team uses cost allocation tags

- The Azure team uses resource groups

- The GCP team uses labels

- All three map to different internal cost centre hierarchies

- Finance teams spend three days each month reconciling across three billing portals, producing approximations rather than attributions

The result: no single source of truth, no enforceable accountability, and no ability to connect multi-cloud Kubernetes spend to business outcomes.

Deloitte, PwC, and KPMG consistently find that fragmented ownership in multi-cloud environments leads directly to overspend — a governance deficit that compounds with every additional cloud added to the estate.

DigiUsher’s response: The Tagging OS enforces a unified attribution taxonomy across all clouds simultaneously — a single tagging standard applied at provisioning across AKS, EKS, GKE, and OpenShift, producing P&L-grade chargeback reports that finance teams can reconcile against business unit budgets without manual intervention.

AKS vs EKS vs GKE vs OpenShift: Cost Complexity Compared

Understanding the distinct cost governance challenge each platform presents is the starting point for any multi-cloud Kubernetes FinOps programme:

AKS — Azure Kubernetes Service

Cost complexity: Integrated but layered

AKS costs combine node pool VM pricing across SKU families, Azure Load Balancer and egress charges, Azure Container Registry storage and transfer, and — increasingly — Azure OpenAI and GPU VM pricing for AI workloads. Azure Cost Management surfaces AKS cluster spend but cannot attribute cost to individual workloads, namespaces, or teams without an external attribution layer.

The challenge for governance: AKS integrates naturally with Azure Policy for infrastructure governance, but financial policy — budget caps, tagging enforcement, workload cost attribution — requires a separate governance layer that Azure Cost Management does not provide.

EKS — Amazon Elastic Kubernetes Service

Cost complexity: Highly granular and fragmented

EKS has the most granular and fragmented billing model of the three major managed Kubernetes platforms. EC2 node pricing varies across instance families, generations, and Spot availability in ways that can change hourly. EBS persistent volumes bill for provisioned capacity (and, on some volume types, provisioned IOPS) regardless of utilisation. NAT Gateway, cross-AZ traffic, and cross-region data transfer each carry separate charges that appear as undifferentiated “data transfer” in Cost Explorer without workload attribution.

AWS Cost Explorer identifies EKS charges by cluster tag but lacks namespace-level or workload-level cost attribution without third-party integration. The granularity that makes EKS powerful for optimisation also makes it the hardest platform to govern accurately without dedicated tooling.

GKE — Google Kubernetes Engine

Cost complexity: Efficient but multi-model pricing

GKE benefits from GCP’s sustained use discount model — compute costs reduce automatically as utilisation hours accumulate within a billing month, without reservation commitments. However, the Autopilot vs. Standard mode pricing difference introduces complexity: Autopilot bills per pod resource request (vCPU and memory), while Standard bills per node VM regardless of pod utilisation. Cross-cluster cost comparison between Autopilot and Standard clusters requires normalisation that GCP Billing alone cannot provide.

GCP Billing provides GKE cluster cost visibility with label-based attribution, but multi-model pricing makes cross-cluster benchmarking unreliable without a normalisation layer.
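One way to put Autopilot and Standard clusters on a comparable basis is to express both as cost per requested vCPU-hour. The sketch below is a simplification that ignores memory and uses hypothetical prices; it is meant to show the shape of the normalisation, not actual GKE rates.

```python
def standard_cost_per_requested_vcpu(node_hourly_usd: float,
                                     pod_vcpu_requests: list[float]) -> float:
    """Standard mode: the node is billed whole, so spread it over requested vCPU."""
    requested = sum(pod_vcpu_requests)
    if requested <= 0:
        raise ValueError("node has no pod requests to attribute cost to")
    return node_hourly_usd / requested


def autopilot_cost_per_requested_vcpu(vcpu_hourly_usd: float) -> float:
    """Autopilot mode: billing already follows pod requests."""
    return vcpu_hourly_usd


# Hypothetical figures: a $0.40/hr node whose pods request 4 vCPU in total.
standard = standard_cost_per_requested_vcpu(0.40, [2.0, 1.0, 1.0])
autopilot = autopilot_cost_per_requested_vcpu(0.06)
print(f"Standard: ${standard:.3f}  Autopilot: ${autopilot:.3f} per requested vCPU-hour")
```

The lower a Standard node's packing density, the higher its effective cost per requested vCPU, which is why a raw node-price comparison can mislead.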

OpenShift — Red Hat

Cost complexity: Added licensing layer

OpenShift introduces a unique governance challenge: cost is the sum of underlying cloud infrastructure (on any hyperscaler) plus Red Hat platform licensing (per core or subscription). Neither the cloud provider nor Red Hat exposes the combined total — finance teams must reconcile two separate billing systems to calculate true cost-per-workload.
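A minimal sketch of that reconciliation, assuming per-core licensing and a simple core-share allocation, might look like the following. All figures and parameter names are hypothetical.

```python
def openshift_workload_cost(infra_usd: float, licence_usd_per_core: float,
                            cluster_cores: int, workload_cores: int) -> float:
    """Monthly cost of one workload: its core share of infrastructure plus licensing."""
    if cluster_cores <= 0 or not 0 <= workload_cores <= cluster_cores:
        raise ValueError("workload_cores must be between 0 and cluster_cores")
    licence_total = licence_usd_per_core * cluster_cores
    share = workload_cores / cluster_cores
    return share * (infra_usd + licence_total)


# Hypothetical cluster: $20,000 infrastructure, $50 per core licence, 64 cores.
cost = openshift_workload_cost(20_000, 50, cluster_cores=64, workload_cores=16)
print(f"Workload using 16 of 64 cores: ${cost:,.0f} per month")
```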

On-premises and hybrid OpenShift deployments add further complexity: infrastructure cost is not cloud-billed, requiring a normalisation layer that can ingest on-premises cost models alongside public cloud billing. This is the use case that cloud-only FinOps tools (CloudZero, Vantage, FinOut) structurally cannot serve.

DigiUsher vs. IBM Cloudability, CloudZero, Vantage, FinOut

For enterprises evaluating Kubernetes cost governance platforms, the capability differences across the competitive landscape are significant — particularly for multi-cloud, AI-workload, and regulated-industry use cases:

| Capability | IBM Cloudability | CloudZero | Vantage | FinOut | DigiUsher |
|---|---|---|---|---|---|
| Kubernetes workload attribution | Limited (cluster) | Good (CostBrain) | Moderate (namespace) | Good (namespace) | Full (workload, namespace, team, product) |
| FOCUS 1.x native engine | No | Partial | Limited | Partial | Built-in |
| GPU and AI token governance | Infrastructure only | Limited | Basic visibility | Limited | Token-level across all providers |
| Policy enforcement at provisioning | Recommendations | Advisory | Alert-based | Alert-based | Machine-enforceable (blocks at provisioning) |
| OpenShift + on-premises | Limited | Cloud-only | Cloud-only | Cloud-only | Full hybrid coverage |
| Marketplace and SaaS governance | Limited | Limited | Limited | Limited | Full Marketplace OS |
| BYOC for regulated industries | Not available | Not available | Not available | Not available | Single-tenant BYOC |
| Automated P&L chargeback | Manual export | Unit economics | Cost reports | Showback | Automated P&L-grade |
| SI partner delivery | Global System Integration Network | None | None | None | Global System Integration Network |
| Data cloud (Snowflake, Databricks) | Limited | Limited | Snowflake | Limited | Native support |

The decisive difference: IBM Cloudability, CloudZero, Vantage, and FinOut are all visibility and advisory platforms — they show you cost and recommend actions. DigiUsher’s FinOps OS enforces financial policy, blocks non-compliant provisioning, and executes automated budget actions across every cloud and AI provider simultaneously. The distinction between advisory and enforcement is the architectural gap that separates cost reporting from cost governance.

What Financial Orchestration for Kubernetes Looks Like

Kubernetes orchestrates workloads. A FinOps OS orchestrates the economics of those workloads. Leading enterprises in 2026 are building both layers — and the financial orchestration layer has five components:

1. Cross-Cloud Cost Normalisation

Unify AKS, EKS, GKE, and OpenShift cost models using the FOCUS standard — standardising CPU, memory, GPU, and cost-per-unit metrics so every cross-cloud comparison is on a level playing field. This is the foundation on which all other financial orchestration capabilities rest.

2. Cost-Aware Placement Insights

Surface workload placement arbitrage opportunities — where a workload currently running on expensive on-demand GPU capacity in AKS could run on preemptible instances in GKE at 60–70% lower cost without SLA compromise. Platform engineering teams use these insights to make placement decisions informed by financial data rather than technical defaults.

3. Network Cost Attribution

Map egress and inter-cloud traffic costs to the workloads generating them — by team, by application, by environment. Attribution is the prerequisite for co-location decisions that reduce hidden network spend. Without attribution, network cost optimisation is guesswork.
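As an illustration of what attribution means mechanically, the sketch below splits one undifferentiated egress charge across workloads in proportion to the traffic each generated. It assumes per-workload traffic volumes are already available from some source such as flow logs or a service mesh; the workload names and volumes are hypothetical.

```python
def attribute_egress(total_usd: float, gb_by_workload: dict[str, float]) -> dict[str, float]:
    """Split an egress bill across workloads in proportion to their traffic."""
    total_gb = sum(gb_by_workload.values())
    if total_gb == 0:
        return {name: 0.0 for name in gb_by_workload}
    return {name: total_usd * gb / total_gb for name, gb in gb_by_workload.items()}


# Hypothetical traffic split for a $4,000 monthly egress charge.
traffic_gb = {"checkout": 30_000, "search": 15_000, "reporting": 5_000}
shares = attribute_egress(4_000, traffic_gb)
for name, usd in shares.items():
    print(f"{name}: ${usd:,.0f}")
```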

4. GPU and AI Workload Lifecycle Automation

Track GPU utilisation per cluster and workload. Scale down idle GPU capacity automatically. Enforce training job SLA limits that auto-terminate runaway jobs. Attribute inference and training costs to owning teams and products. Make AI workload economics as governable as any other infrastructure category.

5. Real-Time Policy Enforcement

Continuous monitoring with automated adjustment — not quarterly FinOps reviews. Mandatory tagging enforcement at provisioning. Budget guardrails that trigger throttle and scale-down actions before thresholds are breached. Policy authority that operates at the platform layer without requiring engineering team cooperation for each governance action.
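A budget guardrail of this kind reduces to a threshold check that runs continuously and returns an action rather than an alert. The sketch below is a minimal version; the 80% and 95% thresholds and the action names are assumptions, not values prescribed by any platform.

```python
from enum import Enum


class Action(Enum):
    NONE = "none"
    THROTTLE = "throttle"
    SCALE_DOWN = "scale_down"


def guardrail_action(spend_usd: float, budget_usd: float,
                     throttle_at: float = 0.80,
                     scale_down_at: float = 0.95) -> Action:
    """Pick an action before the budget is breached, based on the spend ratio."""
    if budget_usd <= 0:
        raise ValueError("budget must be positive")
    ratio = spend_usd / budget_usd
    if ratio >= scale_down_at:
        return Action.SCALE_DOWN
    if ratio >= throttle_at:
        return Action.THROTTLE
    return Action.NONE


for spend in (7_000, 8_500, 9_600):
    print(spend, guardrail_action(spend, 10_000).value)
```

Both thresholds sit below 100% so that the action fires before the budget is exhausted rather than after.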

KubeCon Europe 2026: “Platform engineering must own cost — provide guardrails, enforce efficiency, expose financial metrics to developers.”

DigiUsher: From Portability to Profitability

DigiUsher’s FinOps Operating System provides the financial orchestration layer that transforms multi-cloud Kubernetes from a cost problem into a competitive advantage:

Unified cost visibility — AKS, EKS, GKE, and OpenShift cost data normalised to FOCUS 1.x in a single model, alongside AI APIs, Snowflake, Databricks, SaaS, and on-premises

Real-time workload attribution — Namespace, label, and team-level cost attribution across all Kubernetes platforms — not cluster-level aggregates that cannot be reconciled against business outcomes

Cross-cloud optimisation insights — Placement arbitrage, GPU utilisation analysis, network egress attribution, and rightsizing recommendations that platform engineers can act on

AI and GPU cost governance — Token-level governance for AI workloads running on Kubernetes, GPU lifecycle automation, and inference cost attribution per team and product

Policy enforcement — Mandatory tagging OS, budget guardrails with automated actions, and P&L-grade chargeback reporting — the governance layer that advisory tools cannot provide

Deployment options: Multi-tenant SaaS or BYOC single-tenant for regulated industries (ICICI Bank and other financial services deployments). SOC 2® Type II attested and GDPR compliant. Delivered globally through Infosys, Wipro, and Hexaware.

Implementation Framework: Multi-Cloud Kubernetes FinOps in Five Steps

Step 1 — Assess Cross-Cloud Cost Fragmentation

Inventory every Kubernetes cluster across AKS, EKS, GKE, and OpenShift. Map the billing formats each uses. Identify where cost data arrives in incompatible formats that prevent unified reporting. Quantify the reconciliation overhead your finance and FinOps teams currently absorb manually — this is the cost of the status quo.

Step 2 — Deploy FOCUS-Native Normalisation

Implement a FOCUS 1.x normalisation layer that ingests all Kubernetes billing data into a common schema. This is the prerequisite for everything that follows — accurate cross-cloud comparisons, unified chargeback reporting, and consistent policy enforcement all depend on normalised cost data as their foundation.
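In outline, a normalisation layer maps each provider's billing fields onto one shared set of columns. The sketch below uses a handful of column names from the FOCUS specification (`ProviderName`, `ServiceName`, `BilledCost`, `Tags`); the input field names are simplified placeholders rather than the providers' real export schemas, and a real mapping would cover many more columns.

```python
# Placeholder input field names per provider (not the real export schemas).
FIELD_MAP = {
    "aws": {"cost": "unblended_cost", "service": "product", "tags": "tags"},
    "azure": {"cost": "cost_in_billing_currency", "service": "meter_category", "tags": "tags"},
    "gcp": {"cost": "cost", "service": "service", "tags": "labels"},
}


def to_focus(provider: str, row: dict) -> dict:
    """Map one provider billing row onto a small subset of FOCUS columns."""
    fields = FIELD_MAP[provider]
    return {
        "ProviderName": provider,
        "ServiceName": row[fields["service"]],
        "BilledCost": float(row[fields["cost"]]),
        "Tags": dict(row.get(fields["tags"], {})),
    }


rows = [
    ("aws", {"unblended_cost": "12.50", "product": "EKS", "tags": {"Team": "payments"}}),
    ("gcp", {"cost": 9.75, "service": "GKE", "labels": {"Team": "payments"}}),
]
normalised = [to_focus(provider, row) for provider, row in rows]
total = sum(r["BilledCost"] for r in normalised)
print(f"Total across providers: ${total:.2f}")
```

Once every row shares the same columns, cross-provider totals and per-tag rollups become ordinary aggregations.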

Step 3 — Enforce Unified Tagging at Provisioning

Deploy mandatory tagging standards across all Kubernetes clusters simultaneously. Define the tag schema (Team, Product, Environment, CostCentre, WorkloadType) and enforce it at the provisioning layer — so no Kubernetes resource is created without complete attribution metadata. This replaces quarterly tagging compliance campaigns with continuous governance.
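The enforcement rule itself is simple to express. The sketch below checks a resource's labels against the tag schema named above and rejects anything incomplete; in a real cluster this logic would typically sit in an admission webhook or policy engine, which is not shown here, and the function names are illustrative.

```python
REQUIRED_TAGS = ("Team", "Product", "Environment", "CostCentre", "WorkloadType")


def missing_tags(labels: dict[str, str]) -> list[str]:
    """Return the required tags that are absent or blank."""
    return [tag for tag in REQUIRED_TAGS if not (labels.get(tag) or "").strip()]


def admit(labels: dict[str, str]) -> None:
    """Raise if the resource lacks complete attribution metadata."""
    missing = missing_tags(labels)
    if missing:
        raise ValueError(f"provisioning blocked, missing tags: {', '.join(missing)}")


complete = {
    "Team": "payments", "Product": "checkout", "Environment": "prod",
    "CostCentre": "CC-1042", "WorkloadType": "inference",
}
admit(complete)  # passes silently
print(missing_tags({"Team": "payments", "Environment": ""}))
```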

Step 4 — Implement GPU and AI Lifecycle Automation

Configure idle GPU cluster detection and automatic scale-down across SageMaker on EKS, Vertex AI on GKE, and Azure ML on AKS. Set training job SLA enforcement that auto-terminates runaway training beyond defined cost or time thresholds. Attribute inference and training costs to owning teams in real time.
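The termination rule for runaway training can be sketched as a check against both a time limit and a cost limit. The thresholds, job fields, and hourly rate below are hypothetical; actually stopping a job would go through the relevant platform's own API, which is not shown.

```python
from dataclasses import dataclass


@dataclass
class TrainingJob:
    name: str
    hours_running: float
    hourly_rate_usd: float

    @property
    def cost_so_far(self) -> float:
        return self.hours_running * self.hourly_rate_usd


def should_terminate(job: TrainingJob, max_hours: float, max_cost_usd: float) -> bool:
    """True once the job exceeds either its time or its cost threshold."""
    return job.hours_running > max_hours or job.cost_so_far > max_cost_usd


jobs = [TrainingJob("baseline", 10, 28.0), TrainingJob("sweep-7", 30, 28.0)]
for job in jobs:
    verdict = "terminate" if should_terminate(job, max_hours=48, max_cost_usd=800) else "keep"
    print(f"{job.name}: ${job.cost_so_far:,.0f} so far, {verdict}")
```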

Step 5 — Surface Placement and Network Optimisation Insights

Use cross-cloud cost normalisation data to identify workload placement arbitrage opportunities and network egress attribution. Present these insights to platform engineering teams with the financial evidence needed to make data-driven co-location and placement decisions — transforming cost governance from a FinOps function into a platform engineering capability.

Frequently Asked Questions

What is multi-cloud Kubernetes cost governance and why does it matter?

Multi-cloud Kubernetes cost governance is the practice of enforcing financial policy, attributing workload costs, and optimising resource utilisation across Kubernetes clusters on AKS, EKS, GKE, and OpenShift simultaneously. It matters because Kubernetes itself orchestrates workloads without cost awareness — scheduling based on availability and performance, not financial efficiency. In multi-cloud environments, this creates fragmented visibility, inaccurate cross-cloud comparisons, hidden egress charges, ungoverned GPU spend, and accountability gaps that result in 20–40% avoidable waste.

Why does portability not equal cost efficiency in multi-cloud Kubernetes?

Kubernetes portability means a workload runs on AKS, EKS, or GKE without code changes — not that it costs the same. Each provider uses different pricing models: AWS bills by instance type, EBS IOPS, and data transfer; Azure bills by resource group and consumption tiers; GCP applies sustained use discounts retroactively. Without cross-cloud normalisation, the same workload can have materially different costs across providers, and optimisation decisions made on inaccurate comparisons increase cost rather than reducing it.

What is the AKS vs EKS vs GKE cost comparison for Kubernetes workloads?

AKS has integrated but layered pricing combining node pool VMs, Load Balancer charges, and GPU VM costs. EKS is highly granular, with EC2 instance pricing, EBS charges for provisioned capacity and IOPS, and NAT Gateway plus data transfer charges accumulating separately. GKE is compute-efficient with sustained use discounts but has multi-model pricing complexity between Autopilot and Standard modes. OpenShift adds Red Hat licensing on top of underlying cloud infrastructure, which makes true cost-per-workload invisible without a normalisation layer combining both. No provider is cheapest for all workloads; accurate comparison requires FOCUS-native normalisation.

How do network egress costs affect multi-cloud Kubernetes economics?

Network egress is the most underestimated cost factor in multi-cloud Kubernetes. Inter-cloud data transfer typically costs $0.08–$0.09/GB. A microservices architecture split across EKS and AKS generating 50 TB/month of inter-cloud traffic incurs approximately $4,000–$4,500/month in egress charges, appearing as undifferentiated “data transfer” with no workload attribution. McKinsey and BCG identify network costs as frequently exceeding 15–20% of total cloud spend in distributed deployments.

How should platform engineering teams implement Kubernetes cost governance?

In five steps: deploy a FOCUS-native normalisation layer across all Kubernetes platforms; implement namespace and label-based workload cost attribution; enforce mandatory tagging at provisioning; implement GPU lifecycle automation (idle scale-down, training job SLAs, endpoint termination); and configure real-time budget guardrails that trigger automated actions — not email alerts — when thresholds are approached.

How does DigiUsher compare to IBM Cloudability, CloudZero, Vantage, and FinOut?

DigiUsher differs on six dimensions: FOCUS 1.x native engine for accurate cross-cloud comparison (competitors offer partial, limited, or no support); runtime policy enforcement that blocks provisioning and executes automated actions (competitors are advisory or alert-based); full hybrid coverage including OpenShift and on-premises (IBM Cloudability is limited; the others are cloud-only); GPU and AI token-level governance (competitors offer infrastructure-level or limited visibility); BYOC deployment for regulated industries (not available from the other four); and SI partner delivery through Infosys, Wipro, and Hexaware (CloudZero, Vantage, and FinOut list no SI network).

Why do GPU workloads amplify multi-cloud Kubernetes cost inefficiency?

Three forces compound simultaneously: GPU pricing varies 20–40% across AWS, Azure, and GCP for equivalent hardware; GPU utilisation in multi-cloud deployments is frequently below 40%, meaning most GPU spend generates no productive output; and Kubernetes schedulers default to on-demand GPU capacity without considering cheaper preemptible or Spot alternatives. Without GPU-specific lifecycle automation and cost-aware placement insights, AI workloads silently compound multi-cloud cost inefficiency at the highest per-hour rate in the estate.

What does FOCUS-native Kubernetes cost normalisation mean in practice?

FOCUS-native normalisation ingests billing from AKS, EKS, GKE, and OpenShift and transforms it to the FinOps Foundation’s open cost standard — so every metric (CPU, memory, GPU, storage, network) uses the same definitions regardless of provider. In practice: accurate cross-cloud workload cost comparison, a single consistent chargeback report format across all providers, and placement decisions based on financially equivalent metrics rather than incompatible provider-specific billing formats.

References

- FinOps Foundation — FOCUS Specification

- KubeCon Europe 2026 — Platform Engineering and Cost Governance sessions

- McKinsey — Cloud Cost Optimisation: Multi-Cloud Economics

- PwC — Cloud Cost Optimisation and FinOps

- Deloitte — Cloud Cost Management and Governance

- Tangoe — GenAI and AI Drive Cloud Expenses 30% Higher

- AWS EKS Cost Management documentation

- Azure AKS Cost Management documentation

- GCP GKE Cost Management documentation

- Red Hat OpenShift pricing

- NVIDIA GPU cloud pricing overview
