Kubernetes Economics: Why Containers Multiply Cloud Waste

99% of Kubernetes clusters are overprovisioned. The average cluster wastes 47% of provisioned resources. At KubeCon Europe 2026, the industry admitted what FinOps practitioners already knew: Kubernetes solves deployment — it amplifies inefficiency. This deep dive explains the five hidden financial mechanisms behind Kubernetes waste, why traditional FinOps cannot fix them, and what a FinOps OS layer does that point tools cannot.

Author

DigiUsher


Executive Summary

Kubernetes was designed to maximise efficiency. In practice, for most enterprises, it does the opposite.

The data from 2026 production cluster analysis is unambiguous:

- 99% of Kubernetes clusters are overprovisioned — carrying more capacity than they actually use

- The average cluster wastes 47% of its provisioned resources — not through carelessness, but because Kubernetes defaults optimise for safety, not cost

- Only 13% of requested CPU is consumed on average — an 8× gap between what enterprises pay for and what workloads actually need

- Average GPU utilisation is 23% — meaning 77% of the most expensive compute in the estate is idle and billing continuously

At KubeCon Europe 2026 in Amsterdam — 13,350 attendees, representing 19.9 million cloud-native developers globally — one narrative emerged clearly: the industry has mastered Kubernetes deployment but has not solved Kubernetes economics.

“The platform is ready. What’s missing is execution.” — SiliconANGLE, KubeCon EU 2026

This briefing explains the five financial mechanisms through which containers multiply cloud waste, why the cloud bill hides them, why traditional FinOps cannot govern them, and what a FinOps Operating System layer does that point tools structurally cannot.

What Is Kubernetes Cloud Waste?

Kubernetes cloud waste is the gap between provisioned and actually consumed resources across CPU, memory, GPU, storage, and network dimensions — driven by the way Kubernetes abstracts infrastructure from cost.

The defining characteristic of Kubernetes waste is that it is invisible in standard cloud billing. Cloud providers bill for VM instances — not for the containers running on them, not for the resources those containers requested, and not for the gap between requested and consumed resources. A Kubernetes cluster spending £100,000/month in VM costs may be delivering only £35,000/month of actual workload value, with the remaining £65,000 funding idle headroom that the billing system reports as normal compute usage.

This invisibility is not incidental. It is structural.

What the cloud bill shows:

EC2 / AKS / GKE node cost: £100,000/month

─────────────────────────────────────────────

What is actually happening inside:

Pod A: requests 2 CPU, uses 0.2 CPU ← 90% waste

Pod B: requests 4GB RAM, uses 400MB ← 90% waste

GPU node: 23% utilisation ← 77% waste

Idle nodes (spread scheduling): 12 ← 100% waste

─────────────────────────────────────────────

Effective spend: ~£35,000/month

Silent waste: ~£65,000/month
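The breakdown above can be approximated per node with a few lines of arithmetic. The following is a minimal sketch, assuming node cost is split by each pod's share of requested CPU; that allocation rule, the `PodSample` type, and the sample figures are illustrative assumptions, not a description of any billing system.

```python
from dataclasses import dataclass


@dataclass
class PodSample:
    name: str
    cpu_requested: float  # cores declared in the pod's resource request
    cpu_used: float       # average cores actually consumed


def split_node_cost(node_cost: float, pods: list[PodSample]) -> dict[str, tuple[float, float]]:
    """Return {pod name: (allocated cost, wasted cost)} for one node.

    Cost is allocated by each pod's share of total requested CPU (an assumed
    rule); the wasted part is the requested-but-unused fraction of that share.
    """
    total_requested = sum(p.cpu_requested for p in pods)
    if total_requested <= 0:
        raise ValueError("at least one pod must request CPU")
    breakdown = {}
    for p in pods:
        allocated = node_cost * p.cpu_requested / total_requested
        if p.cpu_requested > 0:
            unused = max(0.0, 1.0 - p.cpu_used / p.cpu_requested)
        else:
            unused = 0.0
        breakdown[p.name] = (allocated, allocated * unused)
    return breakdown


# Hypothetical node costing 300 per month, shared by two pods.
pods = [PodSample("pod-a", 2.0, 0.2), PodSample("pod-c", 1.0, 0.8)]
for name, (allocated, wasted) in split_node_cost(300.0, pods).items():
    print(f"{name}: allocated {allocated:.2f}, wasted {wasted:.2f}")
```

A fuller model would weigh memory and GPU alongside CPU and would account for node capacity that no pod requested at all.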

Baseline cloud waste across enterprises ranges between 28% and 35% of total cloud spend — with Kubernetes-specific overallocation adding 20–30% resource headroom not consumed in steady state, on top of the baseline waste that exists across all cloud workloads.

The Kubernetes Paradox: More Abstraction, More Waste

Kubernetes promises efficient resource utilisation, dynamic scaling, and optimal workload scheduling. These promises are technically achievable. They are rarely achieved in practice.

The paradox is architectural: the abstraction that makes Kubernetes powerful for deployment is the same abstraction that hides inefficiency from the teams responsible for cost.

In a pre-Kubernetes world, one VM equalled one workload. Cost was directly attributable to what it funded. In a Kubernetes world, one VM hosts dozens of containers from multiple teams, serving multiple products, across multiple environments. Cost becomes collective, anonymous, and unattributable — which means it becomes ungoverned.

“Scaling inefficient workloads simply amplifies inefficiency.” — KubeCon EU 2026

The numbers from 2026 confirm what practitioners at KubeCon have been saying privately for two years: the average Kubernetes cluster wastes 47% of its provisioned resources — not because engineers are careless, not because workloads are inefficient, but because Kubernetes defaults optimise for safety, not cost.

Five Financial Mechanisms That Multiply Kubernetes Waste

Mechanism 1 — Overprovisioning by Design

The structural problem: Kubernetes scheduling requires declaring CPU and memory requests before workloads run. These requests determine both scheduling (how many pods fit on a node) and billing (cloud providers charge for provisioned capacity, not consumed capacity). In practice, they are set to prevent failures — not to reflect actual usage.

After analysing 3,042 production clusters across 600+ companies in January 2026, 68% of pods were found to request 3–8× more memory than they actually use. Studies show only 13% of requested CPU is used on average, while 20–45% of requested resources actually power workloads.

The financial mechanism: Cloud providers bill for the nodes that requests force the cluster to provision, not for what pods actually consume. A pod requesting 4GB RAM and using 400MB reserves 4GB of node capacity continuously, and that cost is buried in VM instance charges with no workload attribution, no visibility signal, and no automatic correction.

The human factor: The average company wastes $847 per month on memory over-provisioning alone. Real-world examples showed companies cutting costs from $47,200 to $11,100 monthly — a 76% reduction — simply by right-sizing memory requests. Three behaviours drive the problem:

- Tutorial cargo-culting: 73% of 2Gi memory configurations trace back to outdated documentation; developers copy-paste resource specs from tutorials written years ago

- The post-OOM reflex: 64% of teams add 2–4× memory headroom after a single staging OOM incident, permanently inflating requests

- Deploy-time amnesia: resource requests are set at first deployment and never revisited, even as workload behaviour changes over months

The equation: Reserved ≠ Used. But Reserved = Paid. And the gap between them is silent, continuous, and compounding.
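One way to close the gap between reserved and used is to derive requests from observed usage rather than from habit. The sketch below assumes memory usage samples in MiB are already available from a metrics system, and it uses a nearest-rank P95 plus a headroom percentage; the function names and the 15% default are hypothetical choices, not a recommendation from the studies cited above.

```python
def percentile(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile: the smallest sample with pct% of the data at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    # Integer ceiling of pct * n / 100, clamped to at least rank 1.
    rank = max(1, -(-pct * len(ordered) // 100))
    return ordered[rank - 1]


def recommend_request_mib(usage_mib: list[float], headroom_pct: int = 15) -> int:
    """Suggest a memory request: P95 of observed usage plus a headroom percentage."""
    p95 = percentile(usage_mib, 95)
    scaled = p95 * (100 + headroom_pct) / 100
    whole = int(scaled)
    return whole if whole == scaled else whole + 1  # round up to a whole MiB


# Twenty hypothetical samples: steady at 400 MiB with one spike to 600 MiB.
samples = [400.0] * 19 + [600.0]
print(recommend_request_mib(samples))
```

With twenty samples the single spike sits above the 95th percentile, so the suggestion tracks steady-state usage. Whether a spike like that can be tolerated is a judgement about the workload, which is why the percentile and headroom are parameters rather than constants.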

Mechanism 2 — Autoscaling Amplifies Inefficiency

The common misconception: Autoscaling is a cost-efficiency tool. It is not. It is a throughput tool that scales what you give it — misconfigured workloads included.

The Horizontal Pod Autoscaler scales based on observed utilisation relative to resource requests, not on whether those requests accurately reflect workload needs. When requests are inflated, HPA creates additional replicas of an overprovisioned workload — each new replica maintaining the same low actual utilisation, multiplying the waste proportionally.

The financial mechanism: A workload requesting 1000m CPU and actually using 100m, scaled from 5 to 50 replicas by a traffic event, maintains exactly 10% actual CPU utilisation across all 50 replicas. The autoscaler has fired a scale-out event that provisions new Cluster Autoscaler nodes, which then sit at 10% utilisation. Cost has multiplied by 10× without any signal to the FinOps team that this occurred — it appears as a normal traffic-correlated cost increase rather than amplified inefficiency.
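The arithmetic behind this example is short enough to write down. The sketch below uses the replica formula from the Kubernetes HPA documentation, desired = ceil(current × currentMetric / targetMetric), and leaves out tolerance and stabilisation windows for brevity. The helper names are illustrative.

```python
import math


def hpa_desired_replicas(current_replicas: int, current_metric: float, target_metric: float) -> int:
    """Core HPA calculation: ceil(current * currentMetric / targetMetric)."""
    return math.ceil(current_replicas * current_metric / target_metric)


def reserved_vs_used(replicas: int, request_millicores: int, used_millicores: int) -> tuple[int, int]:
    """Total CPU reserved by requests versus total CPU actually consumed."""
    return replicas * request_millicores, replicas * used_millicores


# The example from the text: 1000m requested, 100m used, before and after scale-out.
for replicas in (5, 50):
    reserved, used = reserved_vs_used(replicas, 1000, 100)
    print(f"{replicas} replicas: {reserved}m reserved, {used}m used, {reserved - used}m idle")

# A metric running at twice its target doubles the replica count.
print(hpa_desired_replicas(5, 100.0, 50.0))
```

Idle reserved CPU grows from 4,500m to 45,000m while the used-to-reserved ratio stays at 10%, which is why the scale-out looks like ordinary traffic growth on the bill.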

“Scaling inefficient workloads simply amplifies inefficiency.” — KubeCon EU 2026 (Efficiently Connected)

What this means for peak events: Product launches, quarter-end processing, and AI inference spikes are precisely the moments when autoscaling fires at maximum scale. They are also the moments when pre-existing overprovisioning multiplies into its largest single cost impact of the year — visible only in the next month’s cloud bill.

Mechanism 3 — Idle and Fragmented Capacity

The scheduling conflict: Kubernetes’ default spread-first scheduling distributes pods across available nodes to maximise resilience. This is the correct design choice for availability. It is the wrong outcome for cost.

Spread scheduling creates stranded capacity: nodes that are only lightly allocated but that the Cluster Autoscaler cannot remove because they are not empty and their pods cannot always be rescheduled elsewhere. The Cluster Autoscaler considers a node for scale-down only when its requested resources fall below a threshold (default: 50% of allocatable), so a node at 51% allocation is retained indefinitely even if 90% of its capacity is actually idle.
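The scale-down rule can be sketched as a simple predicate. This is a simplified model, assuming the Cluster Autoscaler compares the larger of the CPU and memory request ratios against its threshold; the real autoscaler also checks that every pod on the node can be moved, which is not modelled here. The node sizes are hypothetical.

```python
def node_request_utilisation(cpu_requested: float, cpu_allocatable: float,
                             mem_requested: float, mem_allocatable: float) -> float:
    """Requests divided by allocatable, taking the larger of CPU and memory."""
    return max(cpu_requested / cpu_allocatable, mem_requested / mem_allocatable)


def is_scale_down_candidate(request_utilisation: float, threshold: float = 0.5) -> bool:
    """A node is considered for removal only below the threshold."""
    return request_utilisation < threshold


# Hypothetical 8-core, 32 GiB node: 51% of CPU requested, 25% of memory requested.
utilisation = node_request_utilisation(4.08, 8.0, 8.0, 32.0)
actual_cpu_used = 0.8  # cores; never enters the scale-down decision
print(f"request utilisation {utilisation:.0%}, candidate: {is_scale_down_candidate(utilisation)}")
print(f"actual CPU usage {actual_cpu_used / 8.0:.0%}")
```

Actual usage never enters the decision. The node is kept because of what was requested, not because of what is running.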

A CNCF microsurvey on Kubernetes FinOps found the top drivers of rising cluster spend were overprovisioning (70%), lack of ownership (45%), and unused resources plus technical debt (43%). Resource wastage by workload type is stark: Jobs and CronJobs waste 60–80% of allocated cluster resources. StatefulSets waste 40–60%. Even well-tuned Deployments waste 30–50%.

The non-production multiplier: 44% of cloud spend covers non-production resources. Since they’re needed only during the 40-hour workweek, they’re often left idle for the other 128 hours — 76% of the time — yet billed continuously. Kubernetes clusters provisioned for developer convenience in test and staging environments are the largest single source of idle capacity waste in most organisations’ Kubernetes estate.
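The 76% figure follows directly from the hours in a week. A minimal sketch, with the hourly rate as a purely hypothetical input:

```python
HOURS_PER_WEEK = 7 * 24  # 168


def idle_share(active_hours_per_week: float) -> float:
    """Fraction of the week an environment is running but not needed."""
    return (HOURS_PER_WEEK - active_hours_per_week) / HOURS_PER_WEEK


def weekly_idle_cost(hourly_rate: float, active_hours_per_week: float) -> float:
    """Cost of keeping an always-on environment up outside its active hours."""
    return hourly_rate * (HOURS_PER_WEEK - active_hours_per_week)


print(f"idle share for a 40-hour week: {idle_share(40):.0%}")
print(f"weekly idle cost at a hypothetical 10.00/hr: {weekly_idle_cost(10.0, 40):.2f}")
```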

Mechanism 4 — Container Image Bloat

The invisible cost: Container images accumulate unused dependencies, cached build artefacts, development tooling, and layers from public base images containing far more than production workloads require. Academic research finds container images can be up to 80% bloated with components never executed at runtime.

The financial impact compounds across three dimensions that do not appear as direct line items in cloud billing:

| Cost Dimension | How It Manifests | Why It’s Invisible |
|---|---|---|
| Registry storage | Per-GB charges in ECR, GCR, ACR | Aggregated into storage line items with no image attribution |
| Network transfer | Per-GB at every image pull, including cross-region pulls | Appears as undifferentiated data transfer |
| Operational latency | Slower cold starts → degraded autoscaling effectiveness | Lost utilisation, not a billing charge |

The startup time cost: Bloated images increase pod startup time — the period between pod scheduling and pod readiness. During this window, the pod is consuming node capacity without serving traffic. For autoscaling scenarios with rapid demand growth, startup latency forces over-provisioning of standby capacity to compensate — generating a feedback loop between image bloat and cluster overprovisioning.
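A rough estimate of the transfer side of image bloat can be written as follows. This sketch assumes every pull transfers the full image, which ignores layer caching on nodes, and it treats the per-GB price as a caller-supplied, hypothetical figure, since real transfer pricing varies by provider and region.

```python
def excess_transfer_gb(image_gb: float, needed_gb: float, pulls: int) -> float:
    """Data moved per period that carries content the workload never executes."""
    if needed_gb > image_gb:
        raise ValueError("needed size cannot exceed image size")
    return (image_gb - needed_gb) * pulls


def excess_transfer_cost(image_gb: float, needed_gb: float, pulls: int, price_per_gb: float) -> float:
    """Cost of that excess transfer at an assumed per-GB price."""
    return excess_transfer_gb(image_gb, needed_gb, pulls) * price_per_gb


# A 2 GB image with 0.4 GB of executed content, pulled 1,000 times in a month.
print(f"{excess_transfer_gb(2.0, 0.4, 1000):.0f} GB of avoidable transfer")
print(f"{excess_transfer_cost(2.0, 0.4, 1000, 0.02):.2f} at a hypothetical 0.02 per GB")
```

The number that matters is the pull count: autoscaling events and node churn raise it, which is how image size feeds back into scaling cost.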

Mechanism 5 — GPU Waste: The Most Expensive Kubernetes Inefficiency

The scale of the problem: Average GPU utilisation across measured workloads is 23%, meaning 77% of provisioned GPU capacity is wasted at any given time. GPU idling and queuing inefficiencies drive 15–25% waste in AI/ML clusters — and with 66% of organisations already using Kubernetes to manage generative AI inference workloads, this is rapidly becoming the largest per-dollar waste category in enterprise cloud infrastructure.

Why Kubernetes makes GPU waste structural:

By default, Kubernetes treats a GPU as an indivisible resource: a pod receives an entire physical GPU or none. Sharing requires mechanisms such as MIG partitioning or time-slicing, which must be configured explicitly. A quantised large language model on an NVIDIA A100 (80GB) may consume 12GB of GPU memory at 30–35% compute utilisation. Kubernetes considers that GPU fully occupied, so 65–70% of the accelerator sits idle on a node that costs $28–35/hr and bills at 100% of the on-demand rate.

The team hoarding problem: When individual teams provision their own GPU nodes, they tend to hold on to them between jobs, so idle capacity never returns to a shared pool. Organisations achieving the best GPU economics implement queue-based admission control from day one. Rather than letting individual teams provision and hoard GPU nodes, they establish organisational queues with guaranteed quotas, borrowing policies, and fair-share algorithms. This alone can boost effective utilisation by 30–50% because idle resources are automatically redistributed.

KubeCon Europe 2026: “With 66% of organisations already using Kubernetes to manage generative AI inference workloads, this is rapidly becoming the largest compute use case in human history.” — CNCF Keynote

The financial compounding: GPU waste differs from CPU waste not just in magnitude but in velocity. A CPU node at 10% utilisation wastes 90% of a $0.096/hr instance. A GPU node at 23% utilisation wastes 77% of a $28–35/hr instance. At enterprise AI scale, GPU waste is not a cost optimisation opportunity — it is a financial emergency.
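The comparison can be made concrete by computing idle cost per hour for each case. A minimal sketch using only the rates and utilisation figures quoted above:

```python
def idle_cost_per_hour(hourly_rate: float, utilisation: float) -> float:
    """Portion of an hourly rate that pays for unused capacity."""
    if not 0.0 <= utilisation <= 1.0:
        raise ValueError("utilisation must be between 0 and 1")
    return hourly_rate * (1.0 - utilisation)


cpu_idle = idle_cost_per_hour(0.096, 0.10)
gpu_idle_low = idle_cost_per_hour(28.0, 0.23)
gpu_idle_high = idle_cost_per_hour(35.0, 0.23)

print(f"CPU node idle cost: ${cpu_idle:.4f}/hr")
print(f"GPU node idle cost: ${gpu_idle_low:.2f} to ${gpu_idle_high:.2f}/hr")
print(f"ratio: {gpu_idle_low / cpu_idle:.0f}x to {gpu_idle_high / cpu_idle:.0f}x")
```

Even though the CPU node wastes a larger share of its capacity, each idle GPU node costs a few hundred times more per hour.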

The Kubernetes Hidden Cost Stack

Kubernetes introduces five cost layers between the workload and the cloud bill:

| Layer | Cost Driver | Visibility |
|---|---|---|
| Cloud Infrastructure (VMs / Nodes) | VM instance hours: node type × count × uptime | HIGH: in bill as instance cost |
| Kubernetes Control Plane | Managed control plane (~$0.10/hr on EKS) | MEDIUM: separate line item or free |
| Container Runtime / Registry | Image storage, pull costs, and transfer | LOW: buried in storage/transfer |
| Application Workloads | Requested vs. used (resource request gap) | VERY LOW: hidden in VM charges |
| AI / Data Pipelines | GPU utilisation rate, inference/training ratio | MINIMAL: no native billing attribution |

Each layer adds abstraction, complexity, and cost opacity. The financial irony: the layers that generate the most waste have the least visibility in the billing system.

The result: teams optimise the highly visible layer (negotiating reserved instance discounts on overprovisioned VM fleets) while the invisible layers (container request inflation, GPU idle time, bin-packing inefficiency) continue to compound. The cloud bill goes down slightly; the waste percentage remains unchanged.

What KubeCon Europe 2026 Told the Industry

KubeCon Amsterdam 2026 — the largest gathering of cloud-native practitioners in the world — surfaced five admissions that validate what FinOps practitioners have been observing for two years:

“The platform is ready. What’s missing is execution.” Kubernetes deployment is no longer the challenge. The unsolved problem is operating it cost-efficiently — a capability that requires financial governance layered into the platform itself, not applied retrospectively through cloud billing reviews.

“66% of organisations run AI on Kubernetes, with limited production maturity.” Two-thirds of organisations have moved generative AI inference onto Kubernetes, but only a small minority report running it confidently in production. The gap between adoption and maturity is where GPU waste lives — and where it will compound most aggressively in 2026.

“For FinOps not to be a catch-up game, it must be integrated into the delivery platform.” The most direct admission from KubeCon: FinOps applied after the fact, through retrospective cloud bill analysis, cannot govern a system that generates cost dynamically and ephemerally. Governance must be embedded at the platform layer — in admission controllers, scheduling policies, and resource quota enforcement.

“The hard part isn’t acquiring GPUs. It’s getting workloads into production on them.” Operational toil — lifecycle management, cost attribution, policy enforcement, multi-tenancy — is the primary barrier to AI production readiness. The organisations solving this are those that treat GPU economics as a financial governance problem, not a hardware procurement problem.

“Lots of working demos, very few production setups people trust.” The trust gap in AI production deployment reflects the governance maturity gap in Kubernetes economics. Enterprises that cannot answer “what does this AI workload cost per inference?” cannot make financially responsible decisions about which AI capabilities to scale.

Why Traditional FinOps Cannot Fix Kubernetes Economics

Traditional cloud FinOps was designed for an infrastructure model that Kubernetes replaced. Four structural mismatches prevent it from governing Kubernetes waste:

The VM Abstraction Problem

Traditional FinOps attributes cost at the VM level. Kubernetes cost exists at the workload level — dozens of containers from multiple teams share a single VM. Without workload-level attribution using namespace, pod, and label metadata, finance teams see instance cost but cannot identify which teams, products, or features generated it.

The Reserved Instance Trap

Traditional FinOps optimises reserved instance coverage for committed-use discounts. Applied to overprovisioned Kubernetes clusters, this locks in waste at a discount rather than eliminating it. The key rule: do not commit to reservations until utilisation is stable. Rightsize first, observe real consumption for 60–90 days, then commit.
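The order of operations matters more than the discount itself. The sketch below reuses the £100,000/month cluster with 35% effective spend from earlier in this article and applies a purely hypothetical 30% commitment discount. It also assumes, for simplicity, that rightsizing removes all waste, which real clusters will not achieve.

```python
def committed_spend(on_demand_spend: float, discount: float) -> float:
    """Monthly spend after applying a commitment discount."""
    return on_demand_spend * (1.0 - discount)


on_demand = 100_000.0    # monthly on-demand spend, from the earlier example
useful_fraction = 0.35   # share of spend doing useful work, from the earlier example
discount = 0.30          # hypothetical commitment discount

# Option 1: commit on the overprovisioned fleet as it stands.
commit_first = committed_spend(on_demand, discount)
locked_in_waste = commit_first * (1.0 - useful_fraction)

# Option 2: rightsize to the useful share first, then commit.
rightsize_then_commit = committed_spend(on_demand * useful_fraction, discount)

print(f"commit first:          {commit_first:,.0f} per month, {locked_in_waste:,.0f} of it waste")
print(f"rightsize then commit: {rightsize_then_commit:,.0f} per month")
```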

The Request-vs-Usage Gap

Traditional FinOps measures utilisation against provisioned VMs. Kubernetes waste exists in the gap between VM capacity, requested resources, and actual usage: a three-way mismatch that standard cloud billing cannot surface. A cluster whose nodes are 80% allocated by requests (appearing highly efficient to traditional FinOps) may be at 10% actual CPU utilisation if node capacity is filled with overprovisioned pods.
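The three-way mismatch becomes easier to see when the three ratios are computed side by side. A minimal sketch, assuming cluster-wide CPU totals are available from a metrics system; the `CpuSnapshot` type and its field names are illustrative.

```python
from dataclasses import dataclass


@dataclass
class CpuSnapshot:
    capacity: float   # total allocatable cores across nodes
    requested: float  # sum of pod CPU requests
    used: float       # cores actually consumed

    @property
    def allocation_rate(self) -> float:
        """Requested / capacity: how full the nodes look to the scheduler."""
        return self.requested / self.capacity

    @property
    def request_efficiency(self) -> float:
        """Used / requested: how much of what was reserved is consumed."""
        return self.used / self.requested

    @property
    def true_utilisation(self) -> float:
        """Used / capacity: how much of what is paid for does work."""
        return self.used / self.capacity


# The 80% versus 10% example from the text, on a hypothetical 100-core cluster.
snap = CpuSnapshot(capacity=100.0, requested=80.0, used=10.0)
print(f"allocation {snap.allocation_rate:.0%}, "
      f"request efficiency {snap.request_efficiency:.1%}, "
      f"true utilisation {snap.true_utilisation:.0%}")
```

A VM-level view reports only the first ratio. The waste lives in the other two.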

The Monthly Report Problem

Traditional FinOps produces monthly cost reports. Kubernetes waste is dynamic, ephemeral, and workload-driven — generated and accumulated continuously. Autoscaling events, GPU idle periods, and bin-packing inefficiencies compound daily. Monthly retrospective reporting discovers waste that has already accumulated for 30 days; real-time governance prevents it from occurring.

CNCF FinOps Survey: The top drivers of rising Kubernetes spend are overprovisioning (70%), lack of ownership (45%), and unused resources plus technical debt (43%). Traditional FinOps tools are not architected to address any of them.

From Container Chaos to Financial Control: The FinOps OS Layer

Kubernetes does not fail at deployment. It lacks financial governance — the enforcement layer that turns cost visibility into cost control.

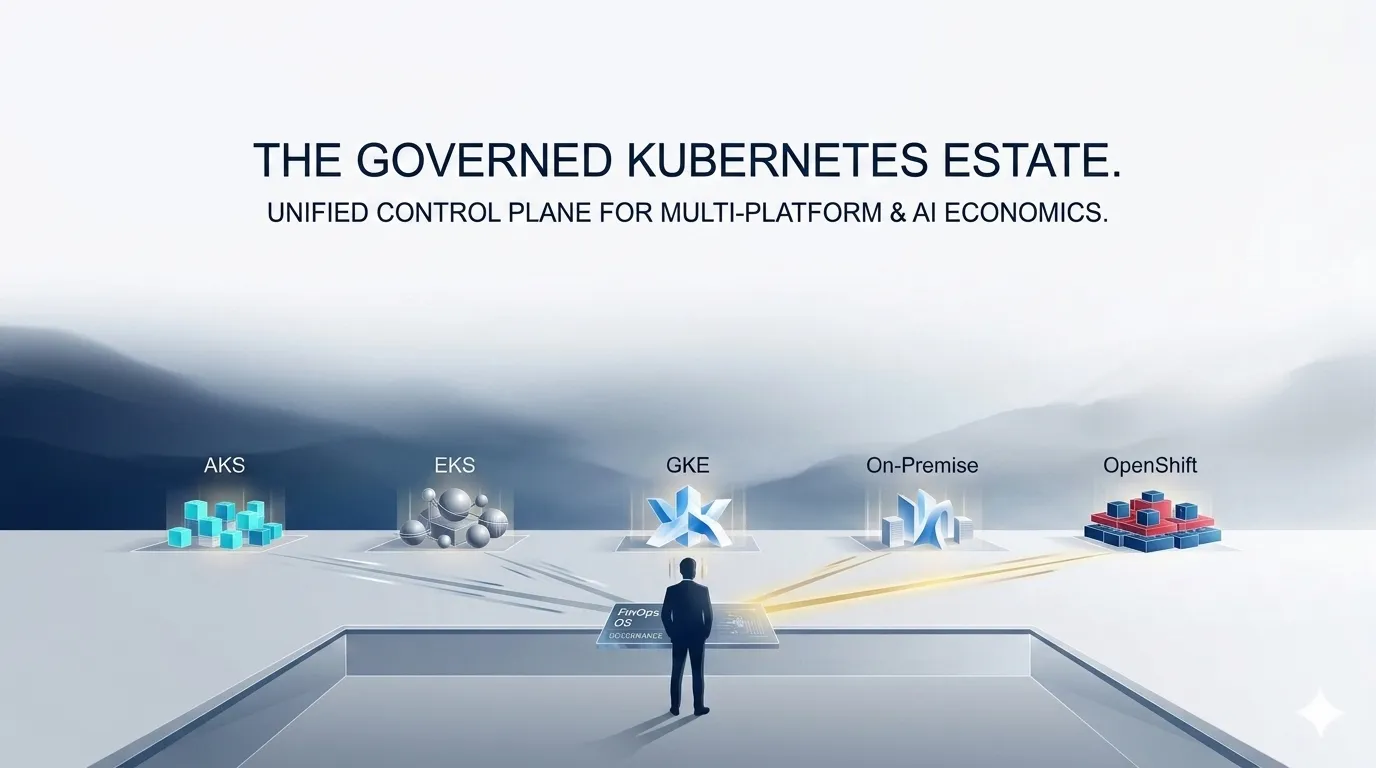

DigiUsher’s FinOps Operating System provides this layer across all five waste mechanisms:

Against overprovisioning: Continuous rightsizing intelligence based on multi-week rolling window utilisation data, surfaced as governance signals in CI/CD pipelines. Mandatory resource request validation at pod admission — workloads that violate configured request bounds are blocked before they enter the cluster.
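To illustrate what request validation at admission involves, here is a generic sketch of the check itself. It is not DigiUsher's implementation: the function names and bounds are hypothetical, the quantity parsers handle only a subset of Kubernetes notation (millicores and the Ki/Mi/Gi suffixes), and in a real cluster logic like this would sit behind a validating admission webhook or a policy engine.

```python
MEM_SUFFIXES = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30}


def parse_cpu(quantity: str) -> float:
    """Parse a CPU quantity such as '500m' or '2' into cores."""
    if quantity.endswith("m"):
        return int(quantity[:-1]) / 1000
    return float(quantity)


def parse_memory(quantity: str) -> int:
    """Parse a memory quantity such as '512Mi' or '4Gi' into bytes."""
    for suffix, factor in MEM_SUFFIXES.items():
        if quantity.endswith(suffix):
            return int(quantity[: -len(suffix)]) * factor
    return int(quantity)  # plain bytes


def validate_requests(pod: dict, max_cpu: float, max_mem_bytes: int) -> list[str]:
    """Return a list of violations; an empty list means the pod is admitted."""
    violations = []
    for container in pod["spec"]["containers"]:
        name = container["name"]
        requests = container.get("resources", {}).get("requests", {})
        if "cpu" not in requests or "memory" not in requests:
            violations.append(f"{name}: missing cpu or memory request")
            continue
        if parse_cpu(requests["cpu"]) > max_cpu:
            violations.append(f"{name}: cpu request {requests['cpu']} exceeds bound")
        if parse_memory(requests["memory"]) > max_mem_bytes:
            violations.append(f"{name}: memory request {requests['memory']} exceeds bound")
    return violations


# Hypothetical pod manifest, reduced to the fields the check reads.
pod = {"spec": {"containers": [
    {"name": "api", "resources": {"requests": {"cpu": "500m", "memory": "512Mi"}}},
    {"name": "worker", "resources": {"requests": {"cpu": "4", "memory": "8Gi"}}},
    {"name": "sidecar"},
]}}
for problem in validate_requests(pod, max_cpu=2.0, max_mem_bytes=4 * 2**30):
    print(problem)
```

Static upper bounds are the simplest form of such a policy. Bounds derived from each workload's observed usage would catch inflated requests that still fall under a cluster-wide ceiling.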

Against autoscaling amplification: Namespace-level budget guardrails that track autoscaling cost impact in real time and trigger automated actions — not retrospective alerts — when spend trajectories diverge from approved targets.

Against idle capacity: Bin-packing efficiency analysis that surfaces node consolidation opportunities. Cluster Autoscaler policy enforcement with continuously tuned scale-down thresholds. Non-production environment lifecycle automation that terminates idle clusters outside business hours.

Against image bloat: Container registry cost attribution that surfaces per-image storage and transfer costs by team — creating the cost signal that motivates multi-stage build discipline as an engineering practice, not a compliance exercise.

Against GPU waste: Real-time GPU utilisation tracking per cluster and workload. Idle GPU detection with automated scale-down triggers. MIG-aware cost attribution per GPU instance across AKS, EKS, GKE, and on-prem. Queue-based admission insights that surface GPU hoarding and redistribution opportunities. Training job SLA enforcement that auto-terminates runaway jobs before they exhaust GPU budget.

The unified output: Workload-level cost attribution across every Kubernetes platform and cloud provider, normalised to FOCUS 1.x — producing P&L-grade chargeback reports that connect container cost to team, product, and business outcome, automatically.

Available as SaaS or BYOC for regulated industries. SOC 2® Type II and GDPR certified. Delivered globally through Infosys, Wipro, and Hexaware.

Kubernetes is powerful. Without governance, it is also a multiplier of cloud inefficiency — at exactly the rate your infrastructure scales.

What the Best Organisations Do Differently in 2026

The enterprises achieving the lowest Kubernetes waste rates in 2026 share five disciplines that differentiate them from the majority:

They measure the right metrics. Not VM utilisation — workload utilisation. CPU and memory requested vs. consumed per namespace. GPU utilisation rate per cluster. Node utilisation rate as a bin-packing efficiency signal. These metrics do not appear in standard cloud billing; they require workload-level instrumentation.

They enforce governance at the platform layer. Resource request validation at pod admission. Mandatory tagging enforced before workloads enter the cluster. Budget guardrails that trigger automated actions. Governance embedded in the platform — not applied retrospectively in monthly reviews.

They rightsize before they commit. Reserved instances and committed-use contracts are powerful cost tools applied to correctly sized workloads. Applied to overprovisioned clusters, they lock in waste. The sequence is: measure actual utilisation → rightsize to P95 → observe for 60–90 days → commit to reservations on verified baselines.

They treat GPU economics as a financial governance priority. GPU waste is not a GPU scheduling problem — it is a financial discipline problem. Organisations with low GPU waste have queue-based admission control, MIG partitioning matched to workload types, and training job lifecycle enforcement. They track cost per GPU-hour per team, not cost per GPU node per month.

They integrate FinOps into platform engineering. As KubeCon 2026 confirmed: for FinOps not to be a catch-up game, it must be integrated into the delivery platform itself. Cost is surfaced to developers at deployment time, not discovered by finance teams at month-end.

Frequently Asked Questions

Why does Kubernetes increase cloud waste rather than reducing it?

Kubernetes increases cloud waste because it was designed to maximise availability and fault tolerance, not cost efficiency. Its scheduling model encourages teams to inflate resource requests to prevent failures, and its abstraction layer hides workload-level cost from billing systems. 99% of clusters are overprovisioned, and on average only 13% of requested CPU is actually consumed. The abstraction that makes Kubernetes powerful for deployment is the same abstraction that hides inefficiency from engineers and finance teams. Strictly speaking, Kubernetes does not create waste; it amplifies waste that already exists in how teams provision and configure workloads.

What is Kubernetes cloud waste and how do you measure it?

Kubernetes cloud waste is the gap between provisioned and actually consumed resources — CPU, memory, GPU, storage, and network — in Kubernetes clusters. Analysis of 500 production clusters in March 2026 found the average cluster wastes 47% of provisioned resources. Measure it by comparing requested resources against actual utilisation using kubelet metrics or Prometheus: CPU utilisation rate (actual/requested, target above 70%), memory utilisation rate, node utilisation rate (allocated as percentage of node capacity), and GPU utilisation rate (active compute as percentage of GPU-hours billed).

Why does Kubernetes autoscaling sometimes increase costs rather than reducing them?

Autoscaling scales what you give it, including misconfigured workloads. The HPA scales on utilisation relative to resource requests, or on traffic metrics such as request rate, not on whether those requests are accurate. Take a workload requesting 1000m CPU and using 100m: when a traffic event adds replicas, each new replica reserves the full 1000m while maintaining the same 10% actual utilisation. Autoscaling has multiplied an overprovisioning problem by the number of replicas, generating a cost event that appears as a traffic-correlated increase rather than amplified inefficiency.

How does container image bloat affect Kubernetes cost?

Container image bloat increases cost across three dimensions invisible in cloud billing: registry storage charges, network transfer charges at every image pull (including cross-region), and operational latency from slower cold starts that degrade autoscaling effectiveness and force standby capacity over-provisioning. Academic research shows images can be 80% bloated with components never used at runtime — a 2GB image may contain only 400MB of executing code.

What makes GPU waste the most expensive form of Kubernetes inefficiency?

GPU instances cost 10–50× more than CPU instances. Average GPU utilisation is just 23% — meaning 77% of provisioned GPU capacity is idle at any moment. An 8×A100 node pool at $28–35/hr bills at full rate whether GPUs are training, inferring, or empty. GPU waste differs from CPU waste not just in magnitude but in velocity: at enterprise AI scale with 66% of organisations running GenAI on Kubernetes, ungoverned GPU economics represent the fastest-growing waste category in cloud infrastructure.

How does traditional FinOps fail for Kubernetes?

Four structural mismatches: traditional FinOps attributes cost at the VM level while Kubernetes cost is workload-level; it optimises reserved instance coverage on overprovisioned clusters, locking in waste at a discount; it measures utilisation against VMs, missing the three-way gap between VM capacity, requested resources, and actual usage; and it produces monthly reports while Kubernetes waste is generated continuously and dynamically. The CNCF identifies overprovisioning (70%), lack of ownership (45%), and unused resources (43%) as top drivers of rising Kubernetes spend — none of which standard cloud cost tools can address.

What is the Kubernetes economics problem at the board level?

Three board-level risks: Kubernetes is the largest single source of cloud inefficiency for most technology enterprises, yet cloud bills show only VM costs — making the problem invisible to CFOs without dedicated tooling. AI workloads running on Kubernetes multiply GPU waste economics — 77% idle GPU capacity at $28–35/hr per node pool generates proportionally higher waste than CPU clusters. With global cloud waste at $182B in 2026 and Kubernetes contributing 20–30% of cloud spend, ungoverned Kubernetes economics represent material P&L risk requiring governance equivalent to capital expenditure controls.

How does DigiUsher’s FinOps OS address Kubernetes economics?

Through governance rather than visibility. Mandatory tagging at pod admission, workload-level attribution across AKS/EKS/GKE/OpenShift/on-prem normalised to FOCUS 1.x, GPU lifecycle automation with idle scale-down and training job SLA enforcement, and automated budget guardrails that act before thresholds are breached. Point tools provide recommendations. DigiUsher’s FinOps OS enforces governance — before cost is incurred, not after it appears in the monthly bill.

References

- Wozz — Kubernetes Memory Overprovisioning Study, January 2026

- SpendArk — The State of Cloud Waste 2026: $100B+ in Unnecessary Spend

- Sedai — Kubernetes Cost and Resource Optimisation Guide 2026

- BairesDev — Kubernetes Cost Optimisation: Stop Overprovisioning by Default

- DataStackHub — Cloud Wastage Statistics 2025–2026

- SiliconANGLE — KubeCon Europe 2026: The AI Execution Gap Meets Cloud-Native Reality

- KubeCon in Numbers — Cloud Native, AI Infrastructure, Convergence (LucaBerton)

- PerfectScale / DoiT — KubeCon CloudNativeCon Europe 2026 Recap

- TechTarget — KubeCon EU 2026: Infrastructure Catches Up to AI

- CIO — How Kubernetes Is Finally Solving the GPU Utilisation Crisis

- Flexera — State of the Cloud Report 2025

- FinOps Foundation — FOCUS Specification

- CNCF — Q1 2026 State of Cloud Native Development
